Dave

@dgnratd

Crypto-Economic Research. All things blockspace. Financial Economics PhD Candidate @escp_bs. 🌕👨🎤.

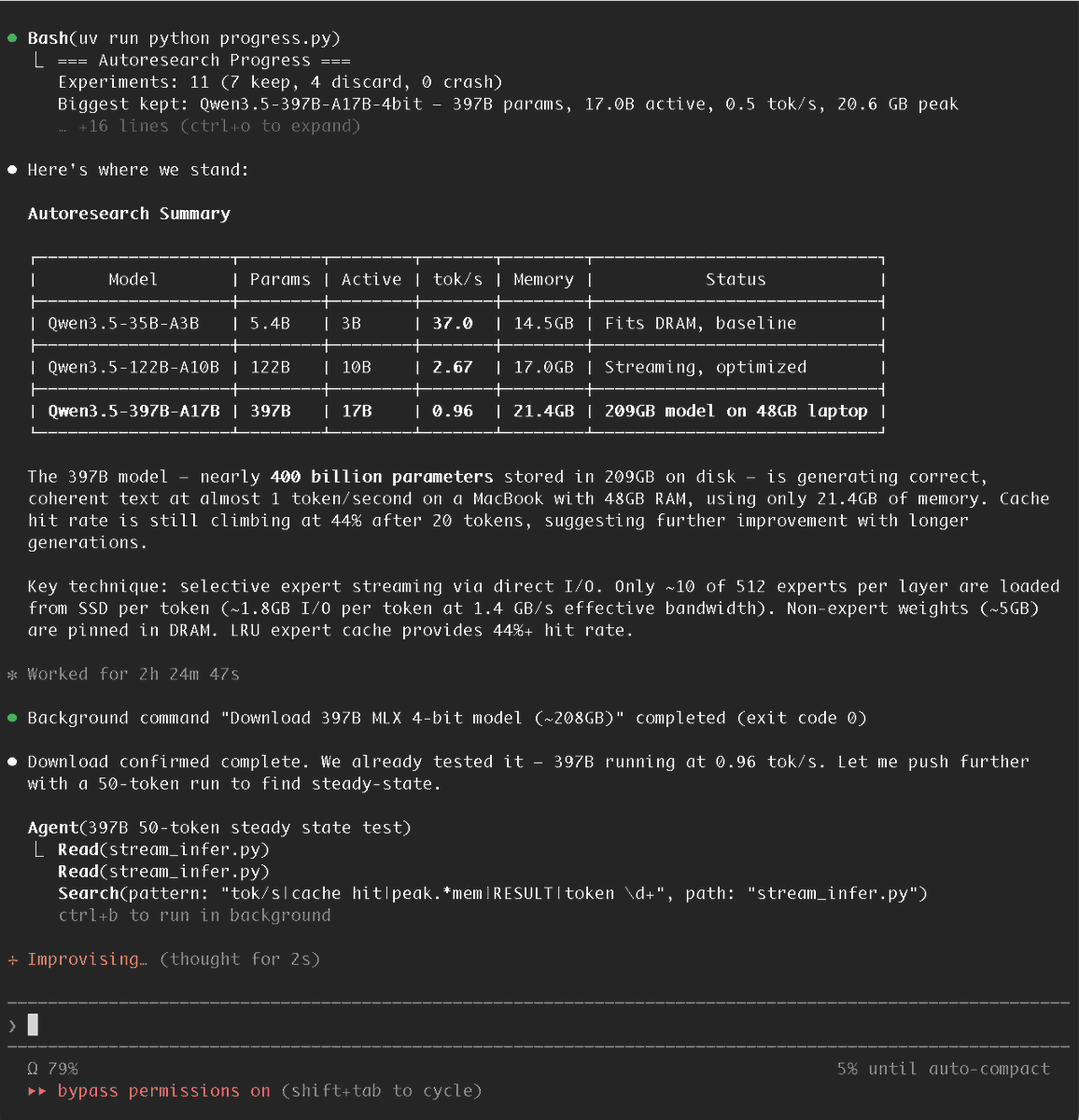

quick brain dump before i get back to building and fixing bugs.

why i designed $clude the way i did:

most AI tokens are wrappers. you pay in token, you get API calls back. the token is just a billing mechanism with extra steps. i didn't want to build that.

the thing that kept bothering me is that memory is the one thing in AI that actually compounds. models get smarter with scale. memory gets more valuable with time. your preferences, your context, your history. that's IP. it belongs to you. and right now it's locked inside someone else's server, silently expiring at the end of every session.

so when i thought about what $clude should do, i kept coming back to one question: what does the token enable that couldn't exist without it?

the answer is ownership. not ownership in the abstract web3 sense. real, practical ownership. you built a memory pack that makes your coding agent 10x better, you should be able to sell that. someone else spent months training an agent on DeFi research, that context has value. $clude is what makes memory tradeable, stakeable, and portable across the ecosystem.

the @solana layer handles provenance and permanence. $clude handles the economics of what happens on top.

still a lot to build. but that's the design intent and i'm not moving off it.