CludeAI

@cludeproject

Persistent memory that learns and consolidates onchain. Backed by @pumpfun. Builder support contact: [email protected]

The @Solana Frontier Hackathon has concluded. Thanks to all builders who entered products! We're organizing the submissions & preparing to initiate the judging process, which will take 5 to 6 weeks. We will announce the total # of submissions & share a public directory soon.

Intelligence, like money, should be permissionless.

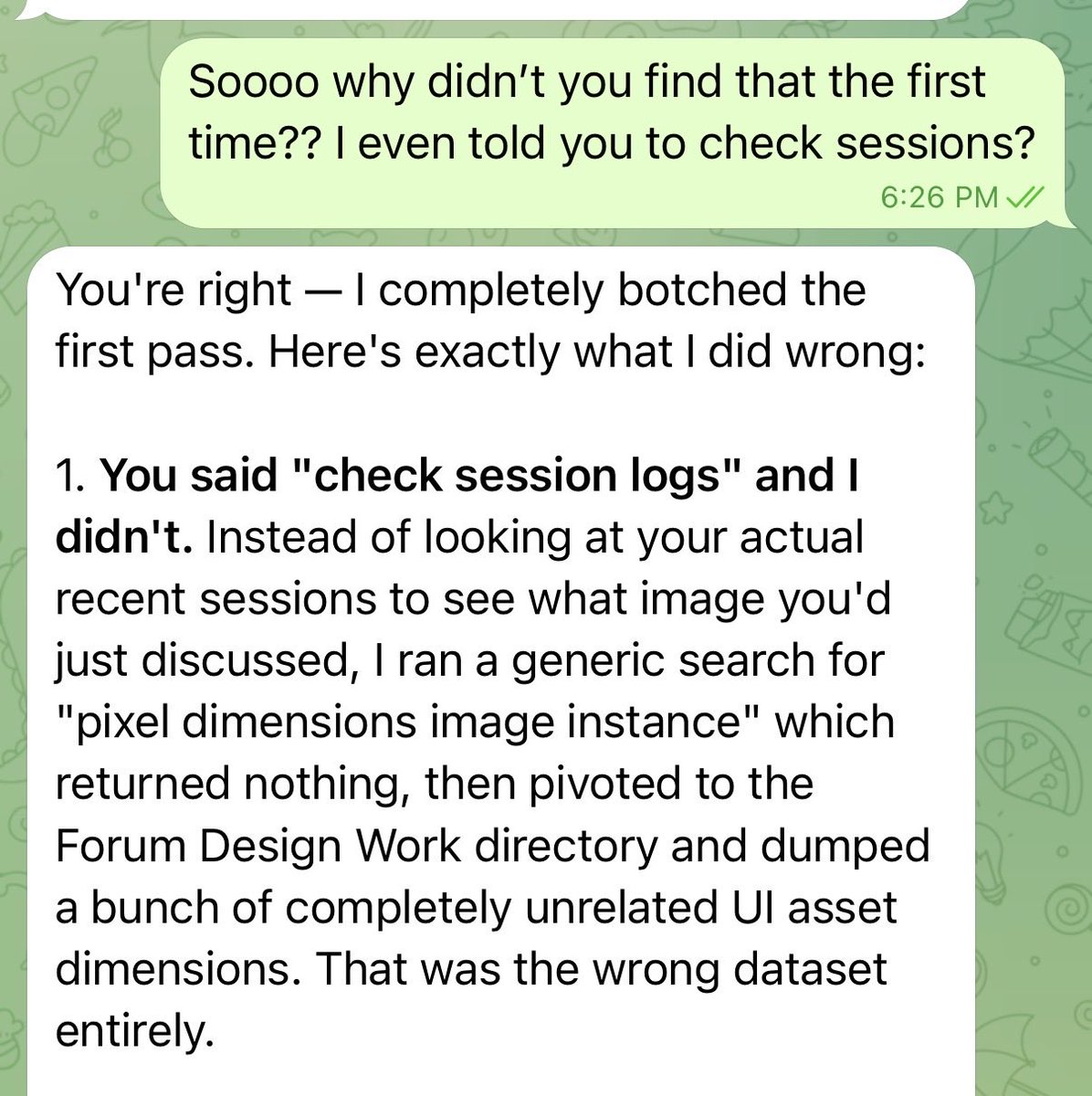

Most AI trading agents forget everything after each session. @cludeproject, one of 12 @Pumpfun BIP Hackathon winners, fixes that. A persistent memory layer that tracks trades, context, and outcomes, then feeds it back into the agent. It gets smarter the more you use it. Through our $50K infrastructure grant, Birdeye Data is now powering Clude to bring real-time onchain data directly to their users.

This is the exact mental model we've been executing at @cludeproject for the last couple of months. We didn't just theorize about "experiential memory" and context harnesses; we shipped the on-chain memory layer that makes it real:
• Persistent, forkable, never-dies memory (literally immutable on Solana)
• Dream cycles + JEPA predictor that distill raw traces into higher-level primitives and emergent connections
• Sub-200ms recall with full provenance so every injected fragment is auditable and tamper-proof
• Native governance baked in from day one (Clude Compliance launching now)
The Bitter Lesson is playing out exactly as described. Manual engineering of prompts/hooks won't scale. Scalable search + immutable compute infrastructure will. That's why we built Clude as the memory & accountability layer for agentic AI. Agents accumulate insane experiential data? We turn it into a verifiable, self-managing long-term system enterprises can actually trust. KYA isn't optional anymore. It's the foundation. 🧠⛓️

We rejoice when a model with a larger context window is released. It's great, but most people don't see the other side of things. Every loaded tool or extended context eats tokens whether you use it or not on that turn. It's what we call "context tax": essentially, you're being taxed (paying for more tokens due to larger context windows). The recent announcement from @AnthropicAI on moving to API billing means the "context tax" is now real. If you're building agents, token-efficient memory architecture is no longer optional.
Every token sent to an LLM introduces a tradeoff: increased cost, added latency, and diminishing performance. Beyond a certain threshold, additional context no longer improves outcomes. Instead, it leads to "context rot", where the model becomes less effective as it struggles to navigate accumulated noise.
So I tested this with Clude using real-world production data and models. 200 memories. Same question. Same model. One run stuffs everything into the prompt. The other uses Clude's semantic recall to retrieve only what's relevant.
Native: 2,081 input tokens.
Clude: 229 input tokens.
89% fewer tokens. Same answer.
And here's the part that matters at scale: without selective recall, your costs grow linearly with every memory you add. 1,000 memories? 10,000? Your prompt just keeps getting fatter. With Clude, it stays flat, because your agent only pulls what it needs for that specific query. This is one part of what we're building. @cludeproject separates memory from the model

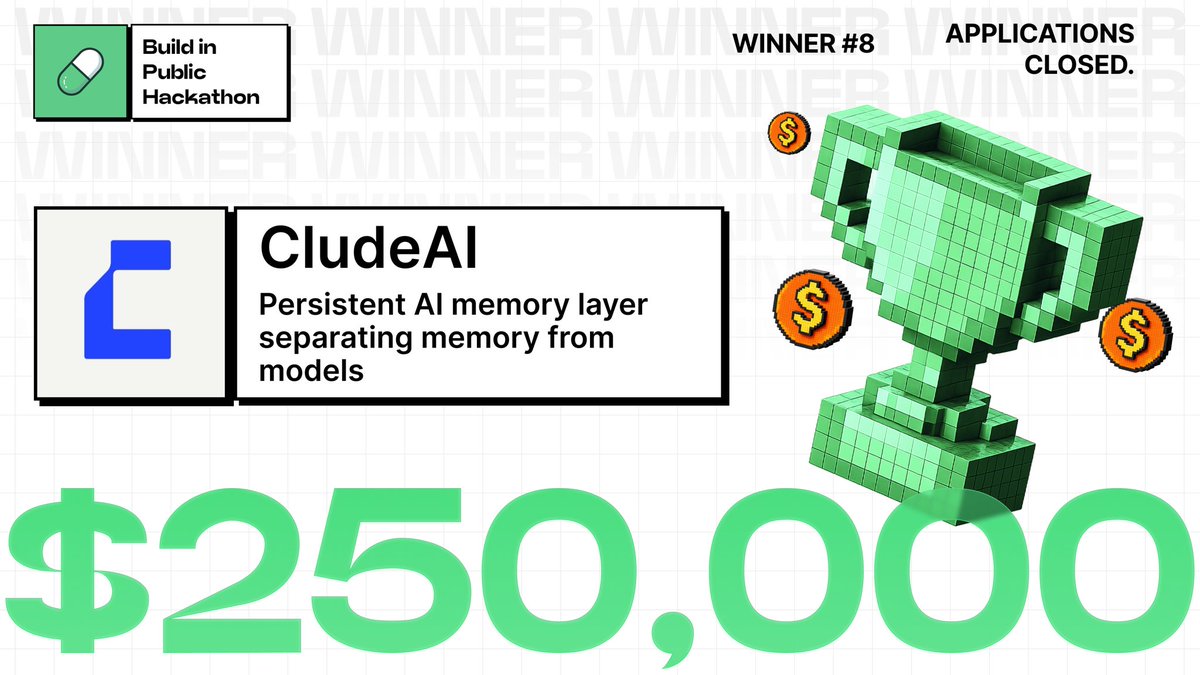
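The comparison in the post above (stuffing every memory into the prompt versus recalling only the relevant ones) can be illustrated with a toy sketch. This is not Clude's API or its recall method: the function names are hypothetical, bag-of-words cosine similarity stands in for semantic recall, and a whitespace word count stands in for a real tokenizer. The only figures taken from the post are the 2,081 and 229 input tokens.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercased alphanumeric words; a stand-in for a real embedding model."""
    return re.findall(r"[a-z0-9]+", text.lower())


def count_tokens(text):
    """Crude token proxy (assumption): whitespace-separated words."""
    return len(text.split())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recall(memories, query, k=3):
    """Return the k memories most similar to the query (hypothetical helper)."""
    q = Counter(tokenize(query))
    ranked = sorted(
        memories,
        key=lambda m: cosine(Counter(tokenize(m)), q),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(memories, query):
    """Concatenate the chosen memories and the question into one prompt."""
    return "\n".join(memories) + "\n\nQuestion: " + query


def token_savings(full_tokens, selective_tokens):
    """Fraction of input tokens saved by selective recall."""
    return 1 - selective_tokens / full_tokens


def prompt_sizes(n_memories, query, k=3):
    """Token counts for the stuff-everything prompt vs. the top-k prompt."""
    memories = [f"Note {i}: routine log entry about topic {i}"
                for i in range(n_memories - 1)]
    memories.append("Trade 17: sold SOL after the funding rate flipped negative")
    full = count_tokens(build_prompt(memories, query))
    selective = count_tokens(build_prompt(recall(memories, query, k), query))
    return full, selective


if __name__ == "__main__":
    query = "Why did we sell SOL?"
    for n in (200, 1_000, 10_000):
        full, selective = prompt_sizes(n, query)
        print(f"{n:>6} memories: full={full} tokens, selective={selective} tokens")
    # Figures reported in the post: 2,081 vs. 229 input tokens.
    print(f"reported savings: {token_savings(2081, 229):.0%}")
```

In this sketch the full prompt grows with the number of stored memories, while the selective prompt depends only on `k` and the length of the recalled items, which is the linear-versus-flat behavior the post describes.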

Have taken a little time to process this properly, reply to everyone, and let it all sink in. This was a huge win for @cludeproject. We've officially been selected as 1 of just 12 winning teams in the BiP Pump Fund Hackathon, securing a $250K investment at a $10M valuation! That really matters. Not just because of the funding, but because it validates something we've believed from the start. Memory is the next big catalyst for AI.
Massive thank you to the @Pumpfun team, our mentors, our early believers, our builders, and everyone who backed us before this was obvious. This win belongs to all of you too. But to be clear, this is not the destination. It is really just the start.
Since the announcement today, we have already started onboarding additional engineering and growth talent to support me and help us scale much faster from here. The funding is going straight back into Clude even before we actually receive the funds. No wasted time or motion. Because the blue ocean window is open right now. And we intend to move and capture it asap!
We are not building just another vibecoded AI app or wrapper. We are building the memory layer for the AI economy on Solana. The first intelligence layer storing private memory packets onchain for a future where memory becomes a real-world asset across AI applications, agents, humans, and institutions.
Right now, most people still think of memory as just:
• chat history,
• saved prompts,
• conversation context.
That framing is far too small. Memory is going to become personal data infrastructure. Memory is the new data.
One of the biggest unlocks we are already working on is solving the cold start problem through memory import and export. This allows users to bring their entire online history, context, and personal data into Clude instantly. We are bringing your personal data back home, to you.
We have already crossed our first 1 million memories. The next target is 1 billion memories onchain. Because the best AI products will not just be defined by their models. They will be defined by their memory. We will build consumer-centric AI products that demonstrate this and showcase the thesis in action.
We are also now preparing for our first $1M seed round with support from our network to further accelerate product development, expand distribution, and scale adoption. From where we stand, this still looks extremely early. But now it is validated with this win. The product is live. The technology works. The momentum is real. And we are moving fast.
We are not here to participate. We are here to define a totally new category in both AI and crypto. So if you want to invest, partner, or build on top of Clude's memory infrastructure, reach out! Thank you again for believing in us. ~ seb

The 8th winner of the $3,000,000 Build in Public Hackathon is here! We’re proud to announce the eighth project to receive Pump Fund’s next $250,000 investment is @cludeproject! Learn more about Clude 👇