arlo_son

268 posts

@gson_AI

Undergraduate @ Yonsei. UIC Economics.

#NLProc AI Co-Scientists 🤖 can generate ideas, but can they spot mistakes? (not yet! 🚫) In my recent paper, we introduce SPOT, a dataset of STEM manuscripts (math, materials science, chemistry, physics, etc.), annotated with real errors. Even SOTA models like o3 and gemini-2.5-pro struggle badly! arxiv.org/abs/2505.11855

If you're at #NAACL25, don't miss @gson_AI presenting our paper on localizing MMLU to Korea! Session C: Oral/Poster 2: 2pm-3.30pm

🌟 KMMLU 🌟 This benchmark replicates the methodology that produced MMLU, but uses examinations common in Korea. We manually annotate a subset of the questions as to whether they require Korea-specific knowledge and also designate a KMMLU-Hard subset that current models find especially challenging. We benchmark 26 openly available and proprietary models including: Qwen, Yi, Llama-2, Polyglot-Ko, GPT-3.5/4, Gemini-Pro and HyperCLOVA X. To our surprise, GPT-4 outperforms all. However, when limited to questions requiring knowledge specific to Korea, HyperCLOVA X seems to be better. 🎖️ Paper: arxiv.org/abs/2402.11548 Dataset: huggingface.co/datasets/HAERA…

>RL 7b model only does XML formatting 99.5% of the time, not 100
>look at actual model outputs
>it's violating the formatting rarely sometimes, but with structure. a single token appears after </answer> before the EOT; always "assed" or "inati"
..."passed" or "terminated"? hm.

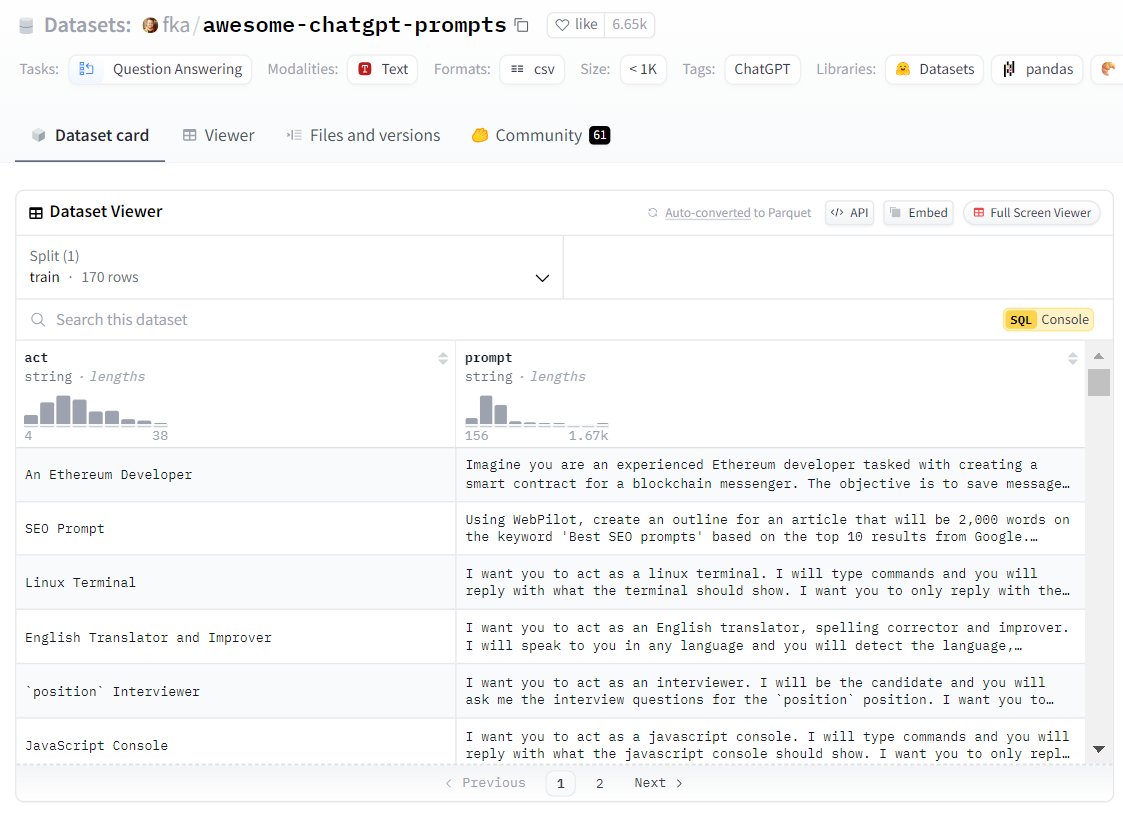

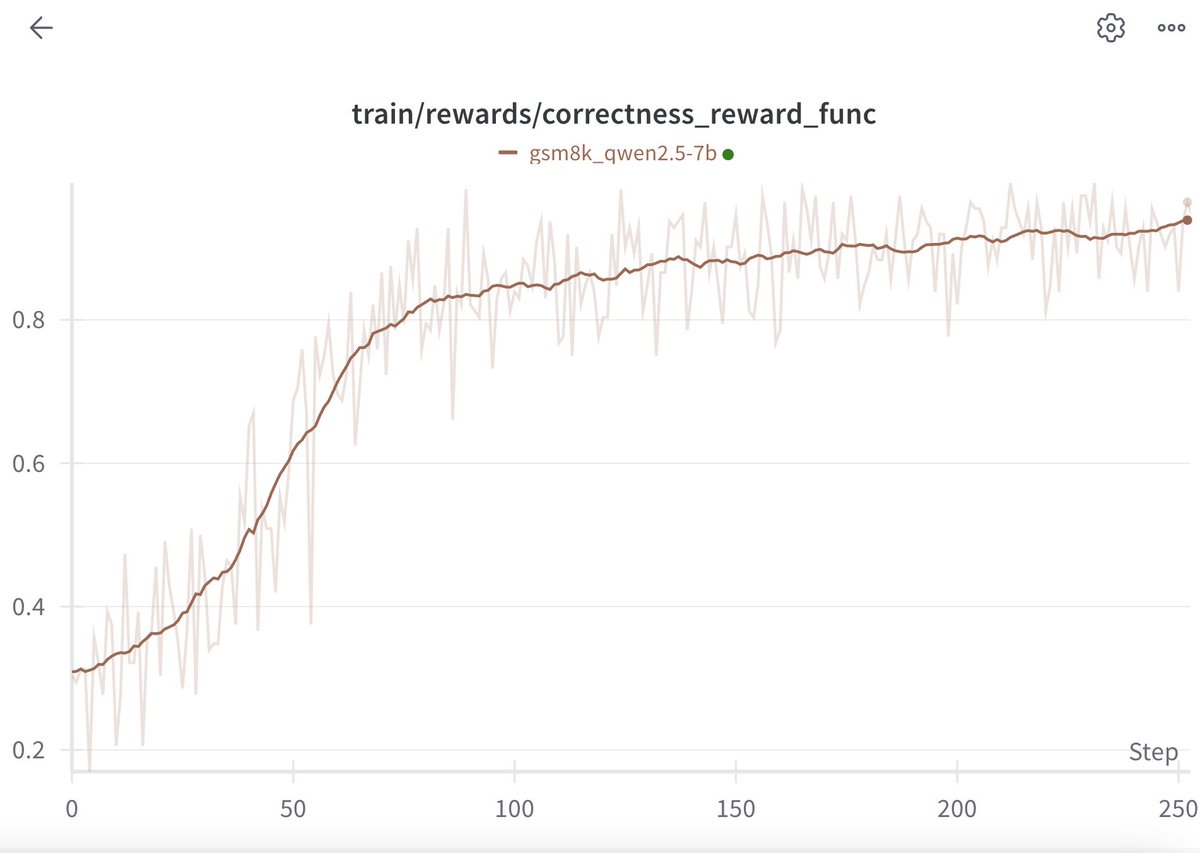

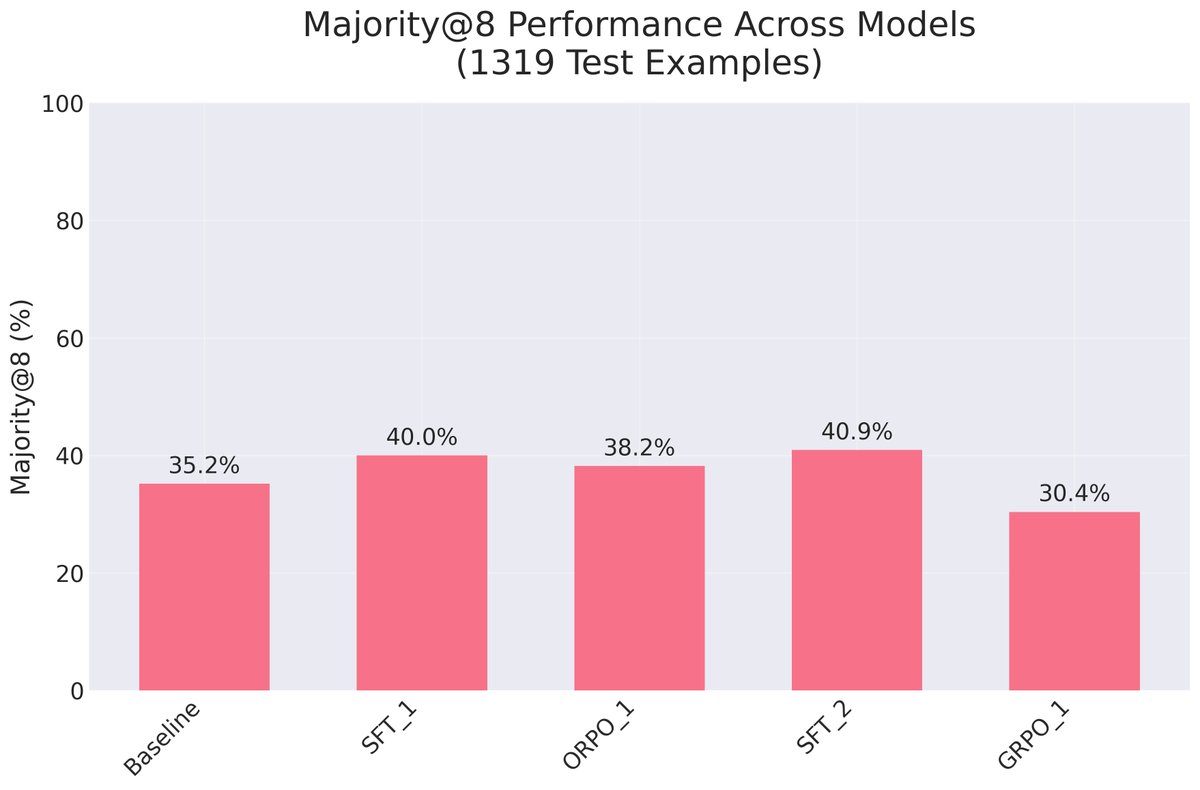

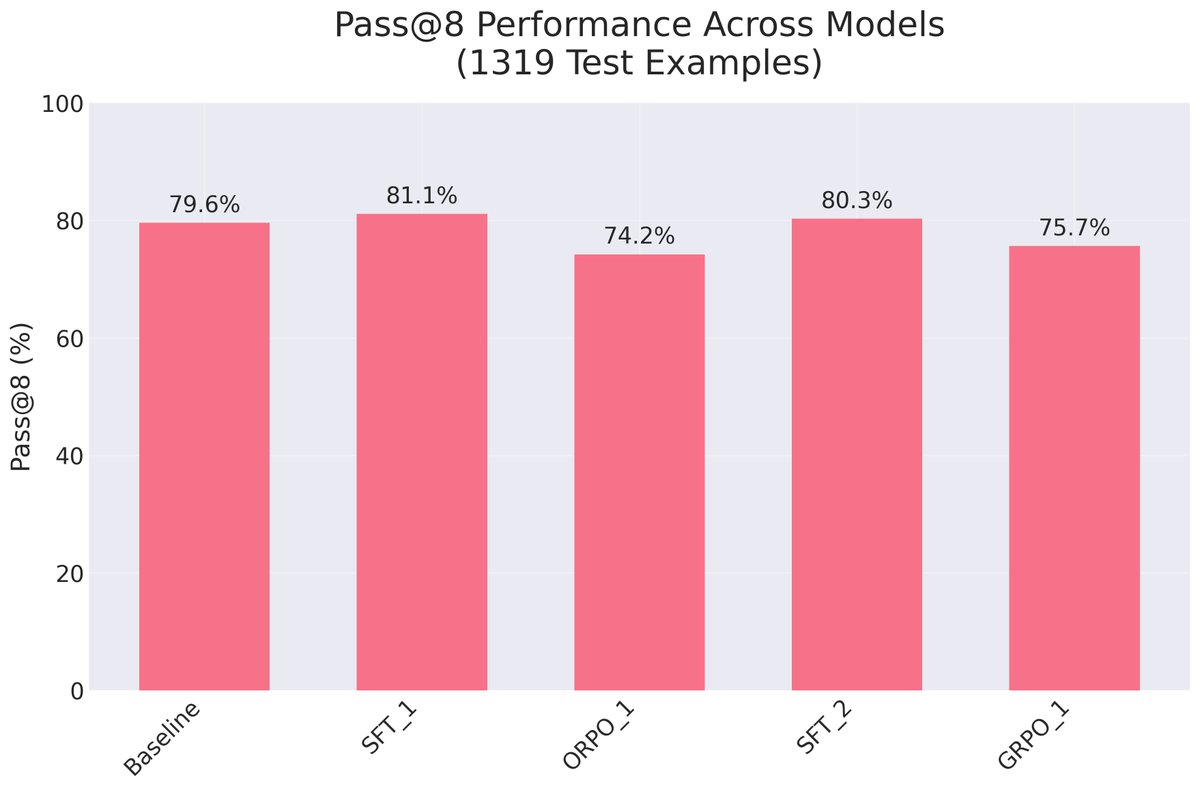
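The stray-token observation in the post above is the kind of thing a small audit script can surface. Below is a minimal sketch, assuming completions are available as decoded strings and that "well formed" simply means nothing but whitespace follows the last `</answer>`; the helper names `trailing_text` and `audit` are hypothetical, not taken from the post.

```python
from collections import Counter

CLOSE_TAG = "</answer>"

def trailing_text(completion: str) -> str:
    """Return the stripped text after the last </answer>, or '' if the tag is absent."""
    idx = completion.rfind(CLOSE_TAG)
    if idx == -1:
        return ""
    return completion[idx + len(CLOSE_TAG):].strip()

def is_well_formed(completion: str) -> bool:
    """Assumed rule: the closing tag exists and only whitespace follows it."""
    return CLOSE_TAG in completion and trailing_text(completion) == ""

def audit(completions):
    """Return (fraction well formed, Counter of stray text seen after </answer>)."""
    ok = sum(1 for c in completions if is_well_formed(c))
    strays = Counter(t for t in map(trailing_text, completions) if t)
    return ok / len(completions), strays

samples = [
    "<answer>4</answer>",
    "<answer>4</answer>assed",
    "<answer>7</answer> inati",
    "no tags at all",
]
rate, strays = audit(samples)
```

Counting the stray strings, rather than only the pass rate, is what makes a structured failure like a repeated "assed" or "inati" fragment visible.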

++ Reinforcement Learning for LLMs in 2025 ++

=== How to elicit improved reasoning from models?
- Is reasoning innately in pre-training datasets and just needs the right examples to be brought out?
- Why does GRPO make sense, as opposed to Supervised Fine-tuning with the right examples?

My general sense is that GRPO (or PPO or ORPO) may not offer all that much benefit over SFT. In fact, they are generally more complex. What matters is how the fine-tuning data is created.

This is the first video in a series on Reinforcement Learning. Maybe you’re looking to dig directly into GRPO, but I think that’s the wrong way to look at things. A better, ground-up approach is to:
a) start with careful performance measurement (there are gotchas even around how one marks answers correct or not),
b) then carefully think about data preparation,
c) then do Supervised Fine-tuning, and only then
d) start to look at preference and reward methods.

Definitely leave comments if i) you see things that can be improved or mistakes I’ve made, or ii) you have a specific reasoning dataset in mind that would be useful to see a demo on in the future.

--- + Timestamps ---
00:00 Introduction to Reinforcement Learning
00:56 Practical Programming for RL
01:59 Setting Up the Environment
02:40 Cloning and Configuring Repositories
04:10 Understanding the Dataset
05:03 Supervised Fine Tuning and Reinforcement Learning
08:54 Downloading and Preparing the Dataset
09:09 Installing Necessary Libraries
13:58 Implementing the Answer Checker
22:30 Running Inference and Evaluating Performance
28:53 Analyzing Results and Setting Baselines
31:03 Batch Inference Script Breakdown
38:00 Preparing for Reinforcement Learning
38:57 Understanding Think Tags in Dataset Generation
39:36 Improving Performance with Supervised Fine Tuning
41:09 Creating and Filtering the Dataset
41:20 Introduction to Preference Fine Tuning
42:21 Generating ORPO Pairs
46:03 Training the Model with Supervised Fine Tuning
49:26 Setting Up and Running the Training Script
50:47 Evaluating the Model's Performance
01:02:35 Exploring ORPO Training
01:07:49 Theory and History of Reinforcement Learning
01:13:54 Final Evaluation and Insights
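To make the "gotchas around marking answers correct" point concrete, here is a minimal sketch of an answer checker. It is not the checker from the video; the normalization rules (dropping commas, dollar signs and trailing periods, comparing numbers exactly as fractions) and the names `normalize` and `is_correct` are assumptions chosen for illustration.

```python
from fractions import Fraction

def normalize(answer: str):
    """Normalize an answer string for comparison.

    Assumed rules: strip whitespace, trailing periods, thousands commas and
    dollar signs; parse numbers exactly; otherwise compare case-insensitively.
    """
    s = answer.strip().rstrip(".").replace(",", "").replace("$", "").strip()
    try:
        # Fraction parses "1000", "0.5", "1/2" and "1e3" without float error.
        return Fraction(s)
    except (ValueError, ZeroDivisionError):
        return s.lower()

def is_correct(prediction: str, gold: str) -> bool:
    """Mark a prediction correct if it matches the gold answer after normalization."""
    return normalize(prediction) == normalize(gold)

print(is_correct("1,000", "1000"))   # formatting difference only
print(is_correct("0.5", "1/2"))      # same value, different notation
print(is_correct("12", "21"))        # genuinely different
```

A plain string comparison would mark the first two pairs wrong, which shifts the measured baseline before any training has happened.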

We reproduced DeepSeek R1-Zero in the CountDown game, and it just works. Through RL, the 3B base LM develops self-verification and search abilities all on its own. You can experience the Aha moment yourself for < $30. Code: github.com/Jiayi-Pan/Tiny… Here's what we learned 🧵
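For readers unfamiliar with the CountDown task, a rule-based reward of the kind such RL setups rely on can be sketched as follows. This is an illustration, not the linked repository's code: it assumes the variant where every given number must be used exactly once with `+`, `-`, `*`, `/`, and the function name `countdown_reward` is hypothetical.

```python
import ast
import operator
from collections import Counter

# Only the four basic arithmetic operators are allowed.
OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def _evaluate(node, used):
    """Evaluate a restricted arithmetic AST, recording each integer literal in `used`."""
    if isinstance(node, ast.BinOp) and type(node.op) in OPS:
        left = _evaluate(node.left, used)
        right = _evaluate(node.right, used)
        return OPS[type(node.op)](left, right)
    if isinstance(node, ast.Constant) and type(node.value) is int:
        used.append(node.value)
        return node.value
    raise ValueError("unsupported expression")

def countdown_reward(expression: str, numbers, target) -> float:
    """Return 1.0 if the expression uses each number exactly once and hits the target."""
    used = []
    try:
        value = _evaluate(ast.parse(expression, mode="eval").body, used)
    except (ValueError, SyntaxError, ZeroDivisionError):
        return 0.0
    if Counter(used) != Counter(numbers):
        return 0.0
    return 1.0 if abs(value - target) < 1e-9 else 0.0

print(countdown_reward("(25 - 5) * 3", [3, 5, 25], 60))
```

Parsing with `ast` instead of calling `eval` keeps arbitrary model output from executing as code, which matters when the reward function runs on thousands of sampled completions.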