Dogan Can Bakir retweeted

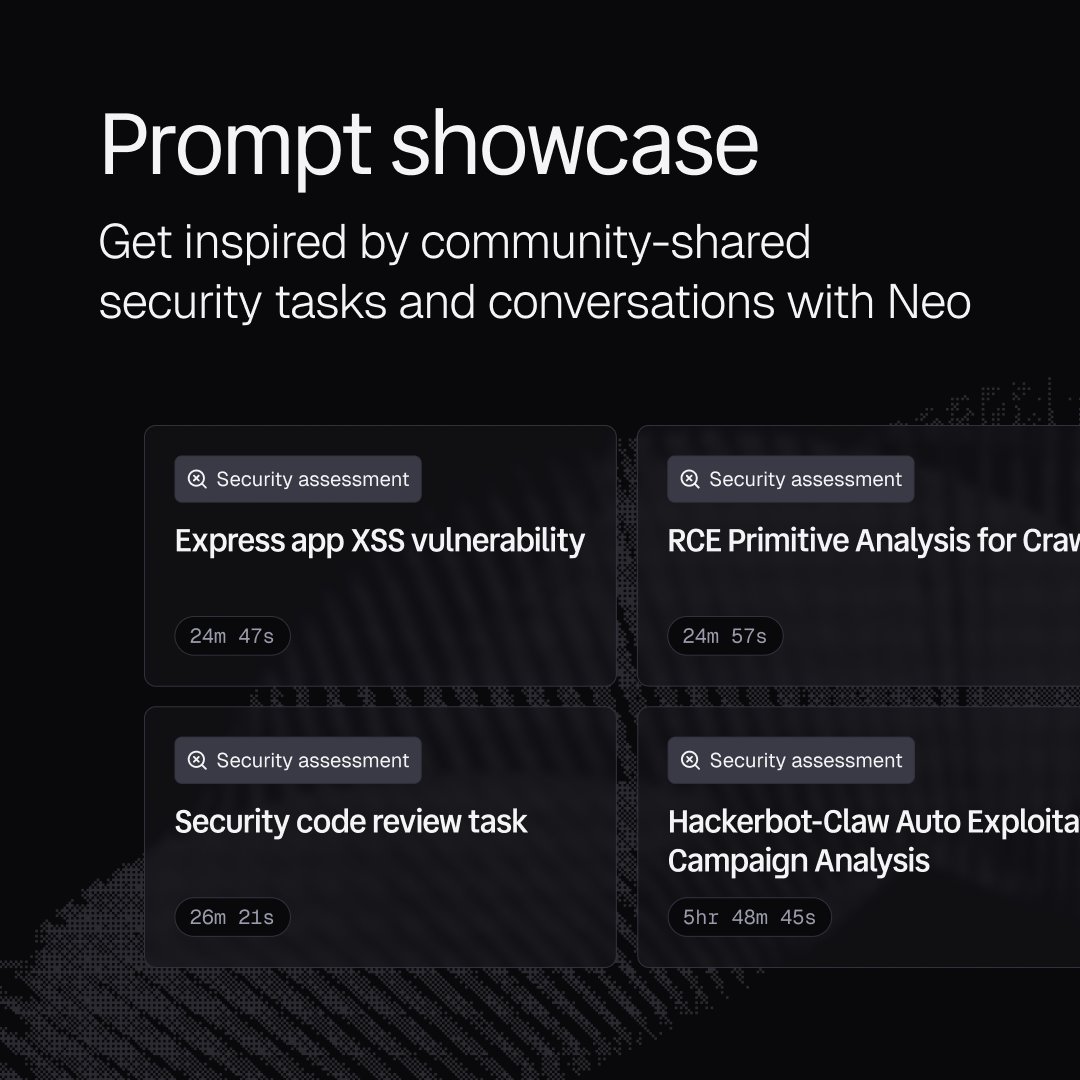

We cut LLM costs by 59% with prompt caching on Neo.

Key moves:

→ 3 breakpoints with deliberate TTLs

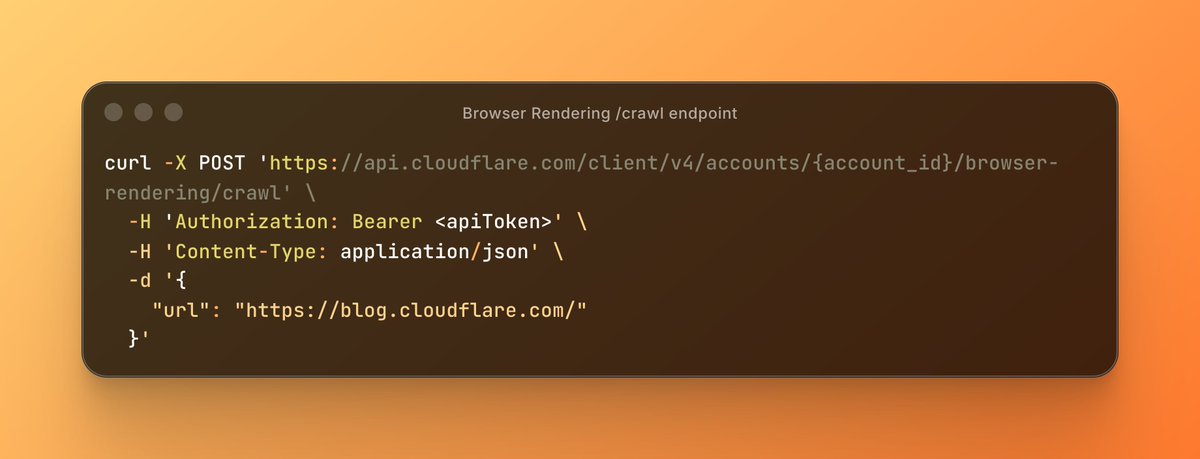

→ Moved dynamic content out of the prefix to the tail

→ Stable templates, byte-identical across all users

→ Provider routing for cache locality
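A minimal sketch of the first three moves, not the actual Neo implementation. The post names neither the provider API nor the TTL values, so this assumes an Anthropic-style `cache_control` field with `ttl` values of `"5m"` and `"1h"`. `TOOLS`, `SYSTEM_TEMPLATE`, `build_request`, and `prefix_fingerprint` are hypothetical names, and the returned dict is only a payload fragment (no model or token limits).

```python
import hashlib
import json

# Hypothetical static content: the same bytes for every user and every request.
TOOLS = [
    {
        "name": "run_scan",
        "description": "Run a scan against a target.",
        "input_schema": {
            "type": "object",
            "properties": {"target": {"type": "string"}},
            "required": ["target"],
        },
    }
]
SYSTEM_TEMPLATE = "You are a security assistant. Follow the workflow rules.\n"


def build_request(history, user_name, now_iso, question):
    """Build a payload fragment with three cache breakpoints.

    Assumed TTLs: long for the stable prefix, short for the conversation.
    Everything that varies per user or per call goes in the final message.
    """
    tools = [dict(t) for t in TOOLS]  # copy so TOOLS itself is never mutated
    # Breakpoint 1: tool definitions (assumed to change rarely).
    tools[-1]["cache_control"] = {"type": "ephemeral", "ttl": "1h"}

    # Breakpoint 2: system template with no per-user fields in it.
    system = [{
        "type": "text",
        "text": SYSTEM_TEMPLATE,
        "cache_control": {"type": "ephemeral", "ttl": "1h"},
    }]

    messages = [
        {"role": m["role"], "content": [{"type": "text", "text": m["content"]}]}
        for m in history
    ]
    if messages:
        # Breakpoint 3: end of prior conversation, shorter assumed TTL.
        messages[-1]["content"][-1]["cache_control"] = {
            "type": "ephemeral", "ttl": "5m",
        }

    # Dynamic tail: user name, timestamp, and question stay out of the prefix.
    tail = f"User: {user_name}\nTime: {now_iso}\n\n{question}"
    messages.append({"role": "user", "content": [{"type": "text", "text": tail}]})
    return {"tools": tools, "system": system, "messages": messages}


def prefix_fingerprint(request):
    """Hash the stable prefix (tools + system) to check it is byte-identical."""
    blob = json.dumps(
        {"tools": request["tools"], "system": request["system"]}, sort_keys=True
    )
    return hashlib.sha256(blob.encode("utf-8")).hexdigest()
```

Comparing `prefix_fingerprint` across requests from different users is one cheap way to catch a timestamp or user ID that has leaked into the prefix, since any such leak changes the hash and would defeat the cache.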

Cache hit rate went from 7% to 84%. Full breakdown: projectdiscovery.io/blog/how-we-cu…
