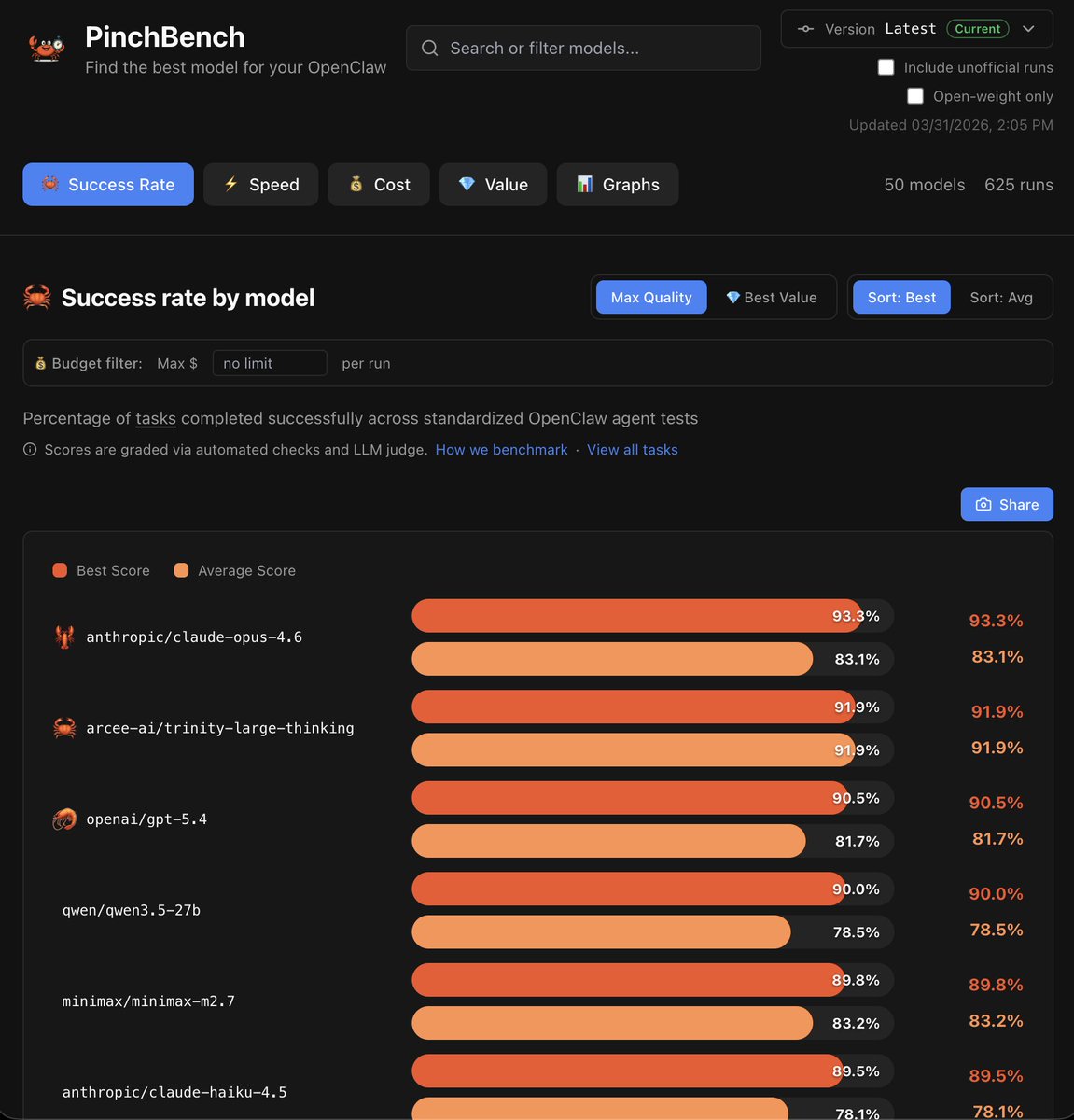

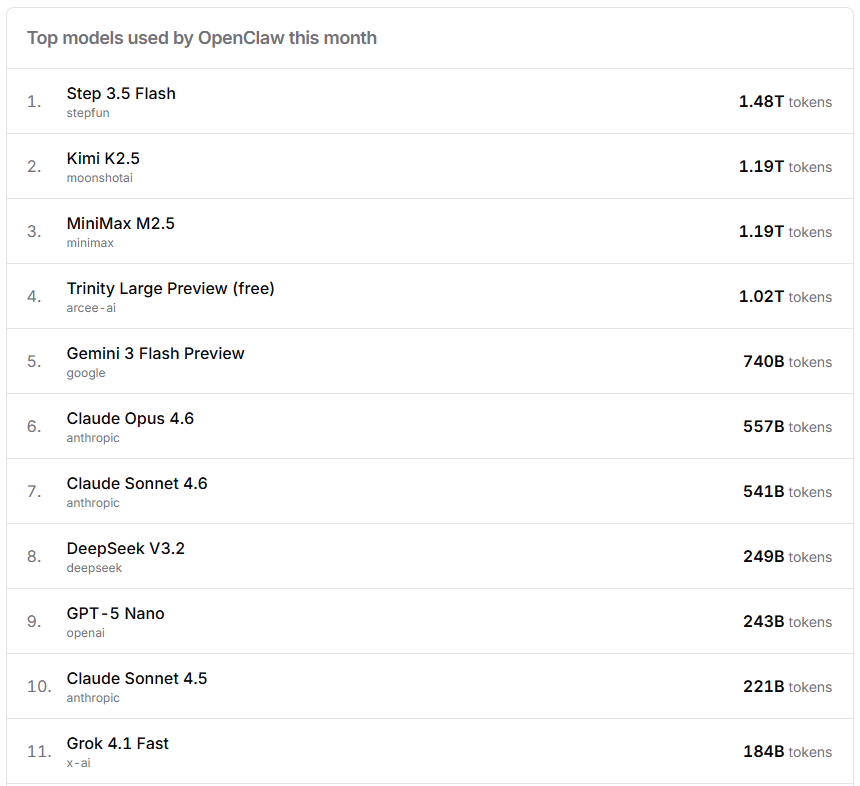

Today we're releasing Trinity-Large-Thinking. Available now on the Arcee API, with open weights on Hugging Face under Apache 2.0. We built it for developers and enterprises that want models they can inspect, post-train, host, distill, and own.

Mark McQuade

@MarkMcQuade

CEO and founder of @arcee_ai | @huggingface 🤗 alum. AI and Data Obsessed. Fitness Fanatic. Tattoo Enthusiast.

OpenClaw 2026.4.7 🦞 🔮 openclaw infer 🎬 music + video editing 💾 session branch/restore 🔗 webhook-driven TaskFlows 🤖 Arcee, Gemma 4, Ollama vision 🧠 memory-wiki: persistent knowledge, not just vibes Because “trust me bro” is not a knowledge system. github.com/openclaw/openc…

Ayo @exolabs can I run a @arcee_ai Trinity-Large-Thinking on a 128GB MBP and a 256GB Mac Studio?