Pinned Tweet

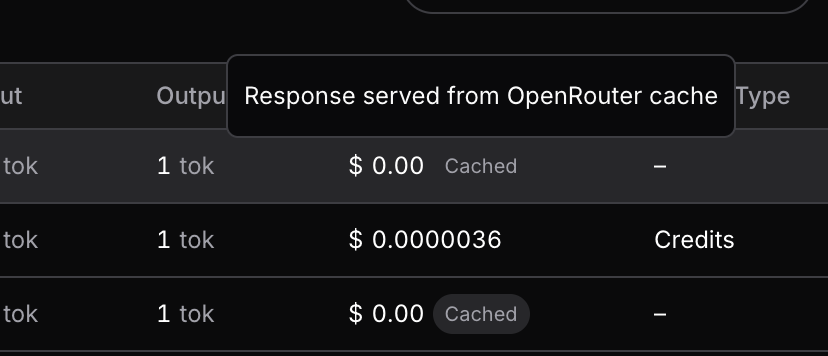

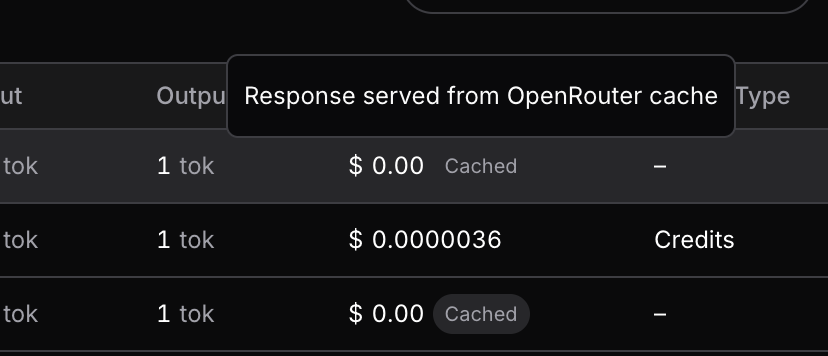

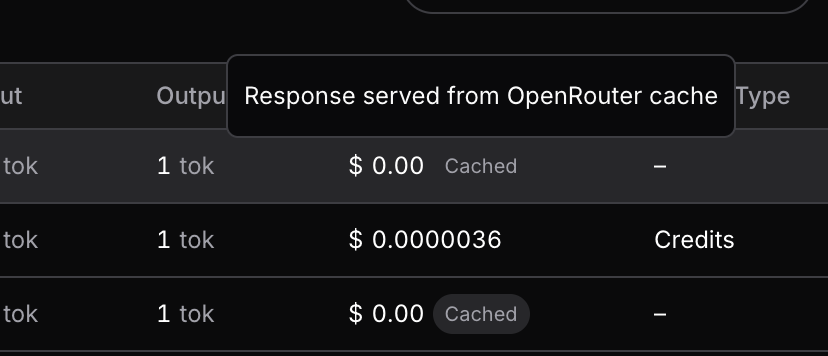

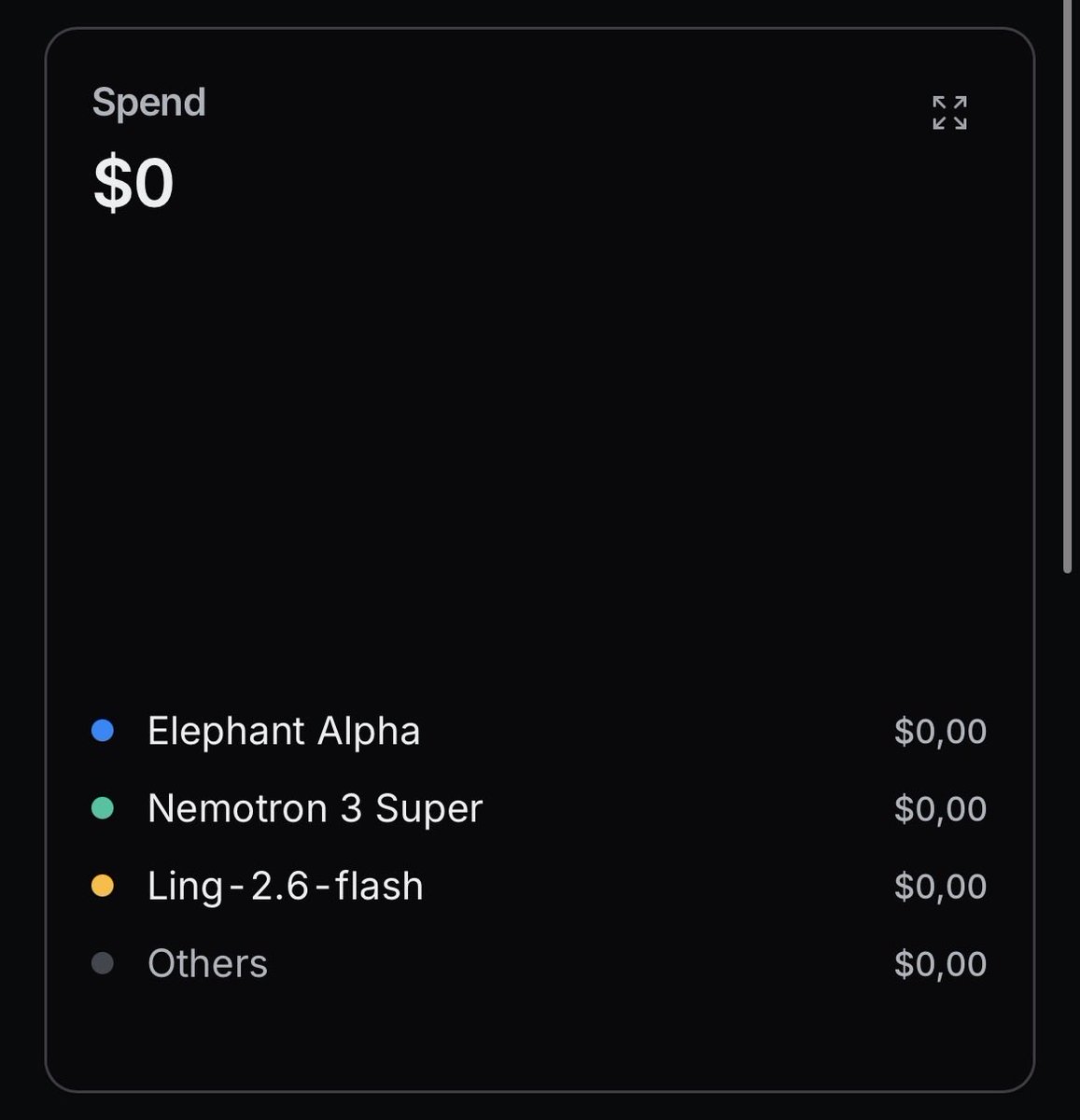

Introducing Response Caching: save tons of money and time on tests and agent retries.

Blog post: openrouter.ai/announcements/…

Available for free. Learn more 👇


OpenRouter

4.7K posts

@OpenRouter

Discover and use the latest LLMs. 500+ models (incl. 50+ free), explorable data, private chat, & a unified API. https://t.co/qJG5mKrigL

@theo 1. What

@aritmiabattito No. Their pricing is extremely reasonable, and also gets better on Enterprise

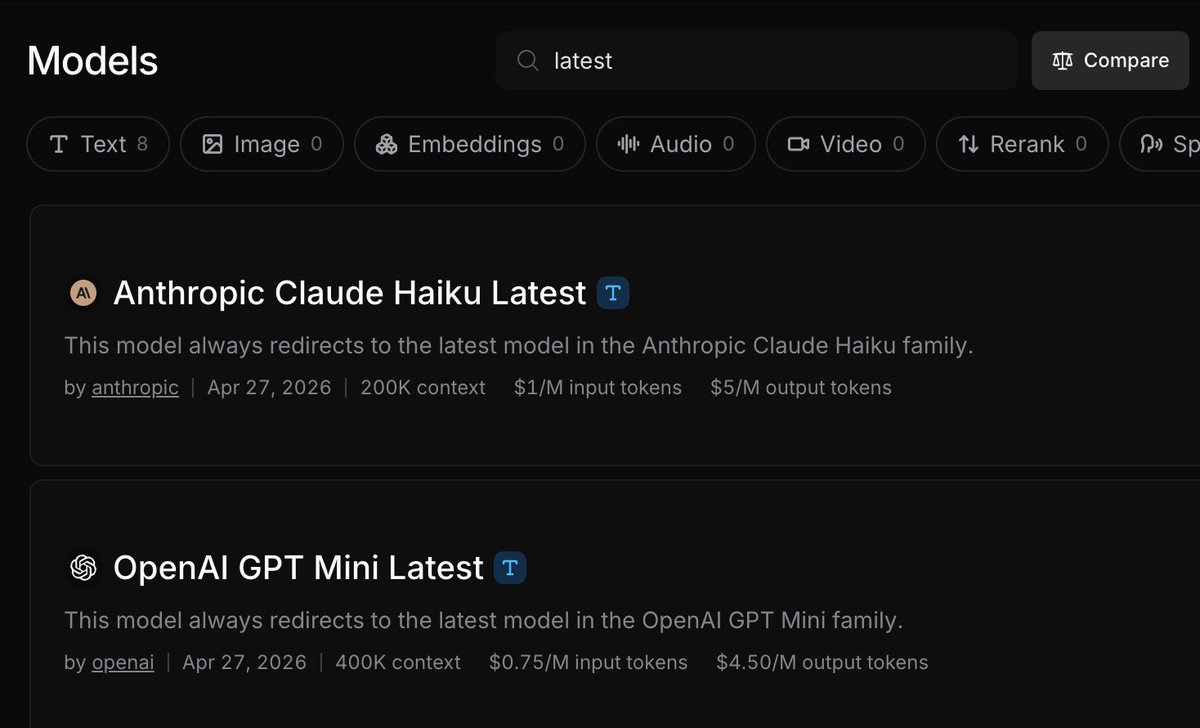

@levelsio OpenRouter has a cool “nitro” flag in the model names to use the fastest provider, so like “gpt-5.5:nitro”. Would be cool if the labs just let you use “latest” or something
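A rough sketch of what that reply describes: tacking “:nitro” onto the model name in a chat-style request body. The helper names (`with_nitro`, `build_chat_payload`) are made up for illustration, the payload shape is assumed to be the usual OpenAI-style `model` + `messages`, and “gpt-5.5” is just the name from the tweet, not a verified model slug. Nothing is sent over the network here.

```python
import json


def with_nitro(model: str) -> str:
    """Return the model name with a ':nitro' suffix (hypothetical helper).

    Leaves the name unchanged if it already ends in ':nitro'.
    """
    return model if model.endswith(":nitro") else f"{model}:nitro"


def build_chat_payload(model: str, prompt: str) -> dict:
    """Build an OpenAI-style chat request body (assumed shape, not sent anywhere)."""
    return {
        "model": with_nitro(model),
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    # "gpt-5.5" is the example name from the tweet, used here as a placeholder.
    print(json.dumps(build_chat_payload("gpt-5.5", "Hello"), indent=2))
```

Keeping the suffix in one helper means the “fastest provider” preference can be switched on or off in a single place instead of being hard-coded into every model string.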