Maxwill Lin

152 posts

@tensorfi

Automated RL Env Design @vmaxai | x-Meta, Autopilot, quant. Make things as simple as possible.

RLM (Recursive Language Models) by @a1zhang et al. is impressive. My take: compared to subagents, the ONLY core diff is simple: default to conditional disclosure w/ constant-sized metadata for length-unbounded I/O. Or in 3 words: text → var

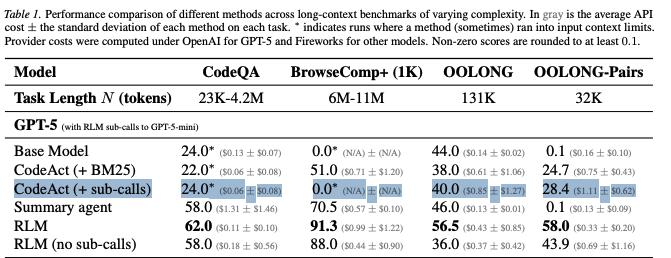

Much like the switch in 2025 from language models to reasoning models, we think 2026 will be all about the switch to Recursive Language Models (RLMs). It turns out that models can be far more powerful if you allow them to treat *their own prompts* as an object in an external environment, which they understand and manipulate by writing code that invokes LLMs! Our full paper on RLMs is now available—with much more expansive experiments compared to our initial blogpost from October 2025! arxiv.org/pdf/2512.24601
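A rough sketch of the "text → var" reading above, not the RLM paper's implementation. The names `VarStore`, `VarMeta`, `put`, `peek`, and the 40-character preview are all hypothetical, chosen only for illustration. The idea: long text is bound to a variable, the model sees only constant-sized metadata, and content is disclosed in slices on request.

```python
from dataclasses import dataclass

PREVIEW_CHARS = 40  # assumed preview budget (hypothetical)


@dataclass(frozen=True)
class VarMeta:
    """Constant-sized handle shown in place of the full text."""
    name: str
    length: int
    preview: str


class VarStore:
    """Holds arbitrarily long text outside the prompt (illustrative only)."""

    def __init__(self) -> None:
        self._vars: dict[str, str] = {}

    def put(self, name: str, text: str) -> VarMeta:
        # text -> var: store the payload, return only bounded metadata
        self._vars[name] = text
        return VarMeta(name, len(text), text[:PREVIEW_CHARS])

    def peek(self, name: str, start: int, end: int) -> str:
        # conditional disclosure: reveal a slice only when asked
        return self._vars[name][start:end]


store = VarStore()
meta = store.put("doc", "x" * 1_000_000)
chunk = store.peek("doc", 0, 5)
```

Here `meta` stays the same size whether the input is a thousand or a million characters. Under this reading, that is the difference from passing the raw text to a subagent.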

Lecture 7 of the Foundations of Blockchains lecture series (The Tendermint Protocol) is now available: youtube.com/watch?v=pS-ayi… tl;dr thread below: 1/13

@LayerZero_Labs has launched, and @StargateFinance has >$3B TVL, but have any apes actually read the docs or looked at the code? TLDR: The team can rug LPs even when the oracle is honest, with many of the contracts owned by an EOA.

The Rust Foundation is delighted to welcome @ryan_levick, @awafaa, & Eric Garcia to the Board of Directors. Learn more about each of our new representatives: bit.ly/3q7HngE #Rust