Omar Khattab

@lateinteraction

Asst professor @MIT CSAIL @nlp_mit. Research includes https://t.co/VgyLxl0oa1, https://t.co/ZZaSzaRaZ7 (@DSPyOSS), RLMs, and GEPA. Prev: CS PhD @StanfordNLP. Research @Databricks.

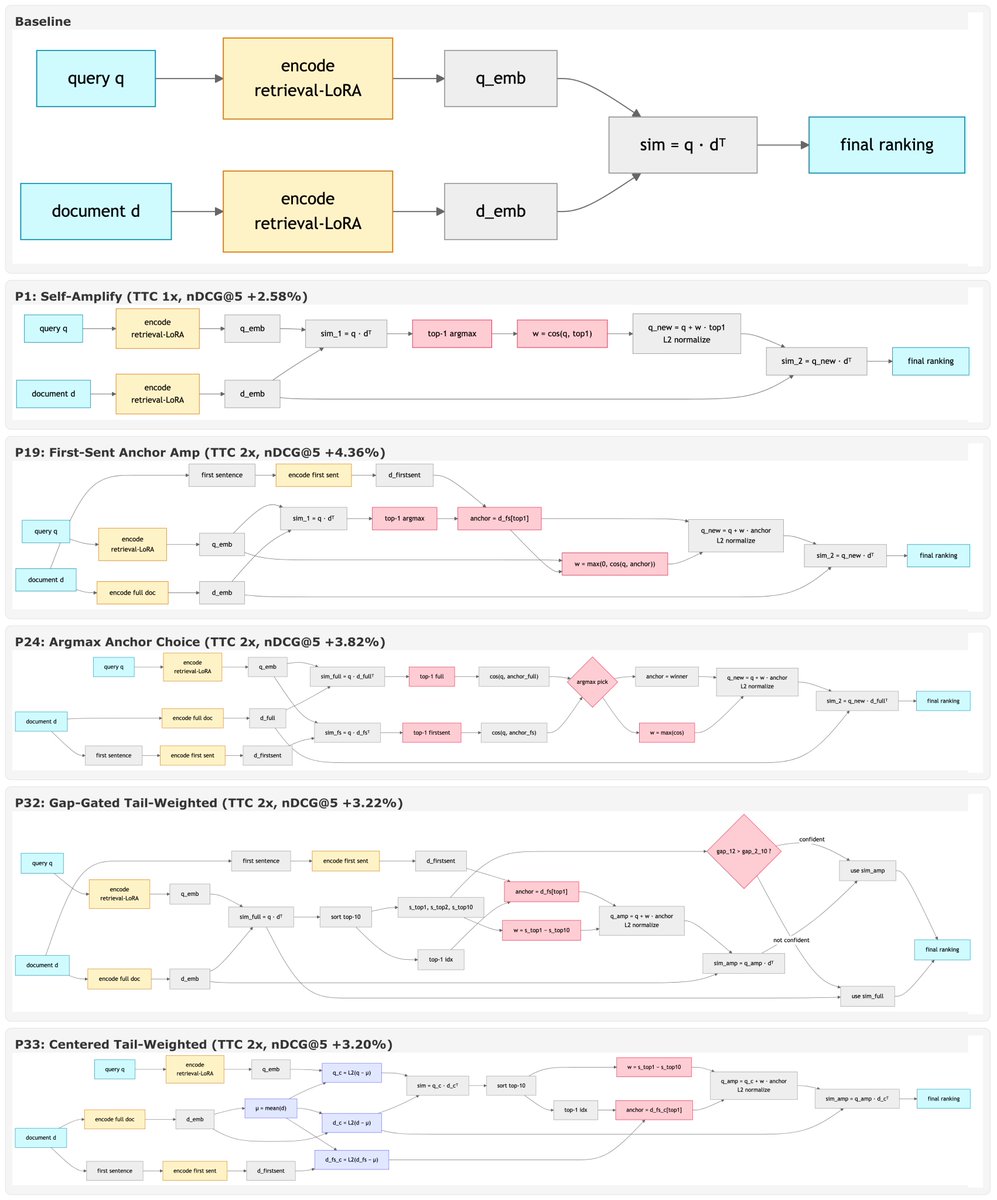

Efficient Multivector Retrieval with Token-Aware Clustering and Hierarchical Indexing
Presents a multivector retrieval system that uses token-aware clustering to allocate centroids based on token frequency & semantic variance.
📝 arxiv.org/abs/2604.28142
👨🏽‍💻 github.com/TusKANNy/tachi…
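The post only names the idea of sizing the centroid budget per token by frequency and semantic variance. Below is a rough sketch of what such a split could look like, under the assumption that each token's weight is simply its frequency times the spread of its embeddings. `token_variance`, `allocate_centroids`, and the weighting rule are hypothetical illustrations, not the paper's method or the linked repository's API.

```python
from math import floor
from typing import Dict, List

Vector = List[float]


def token_variance(vectors: List[Vector]) -> float:
    """Mean squared Euclidean distance of a token's embeddings from their mean."""
    n, dim = len(vectors), len(vectors[0])
    mean = [sum(v[d] for v in vectors) / n for d in range(dim)]
    return sum(sum((v[d] - mean[d]) ** 2 for d in range(dim)) for v in vectors) / n


def allocate_centroids(token_vectors: Dict[str, List[Vector]], budget: int) -> Dict[str, int]:
    """Hypothetical rule: split `budget` centroids across tokens by frequency * variance.

    Every token gets one centroid; the spare budget is shared in proportion to
    each token's weight, with rounding leftovers going to the largest remainders.
    """
    tokens = list(token_vectors)
    if budget < len(tokens):
        raise ValueError("budget must cover at least one centroid per token")
    # Assumed weighting: frequent tokens whose embeddings vary a lot get more centroids.
    weights = {t: len(vs) * token_variance(vs) for t, vs in token_vectors.items()}
    total = sum(weights.values())
    if total == 0:  # no variance anywhere: fall back to an even split
        weights = {t: 1.0 for t in tokens}
        total = float(len(tokens))
    spare = budget - len(tokens)
    shares = {t: spare * weights[t] / total for t in tokens}
    alloc = {t: 1 + floor(shares[t]) for t in tokens}
    # Hand out centroids lost to flooring, largest fractional part first.
    leftover = budget - sum(alloc.values())
    for t in sorted(tokens, key=lambda t: shares[t] - floor(shares[t]), reverse=True)[:leftover]:
        alloc[t] += 1
    return alloc


token_vectors = {
    "the": [[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0]],
    "rare": [[1.0, 1.0], [1.0, 1.0]],
    "model": [[0.0, 0.0], [1.0, 0.0]],
}
print(allocate_centroids(token_vectors, budget=8))  # {'the': 6, 'rare': 1, 'model': 1}
```

In this toy input, a token whose embeddings never vary (`rare`) keeps a single centroid, while the frequent, spread-out token absorbs most of the budget. A real index would likely also cap each token at its number of distinct vectors.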

MIT PhD student Alex Zhang (@a1zhang) explains how AI models are "mismanaged geniuses" that could take on a much wider range of tasks. Full video: tinyurl.com/bddd5vdx

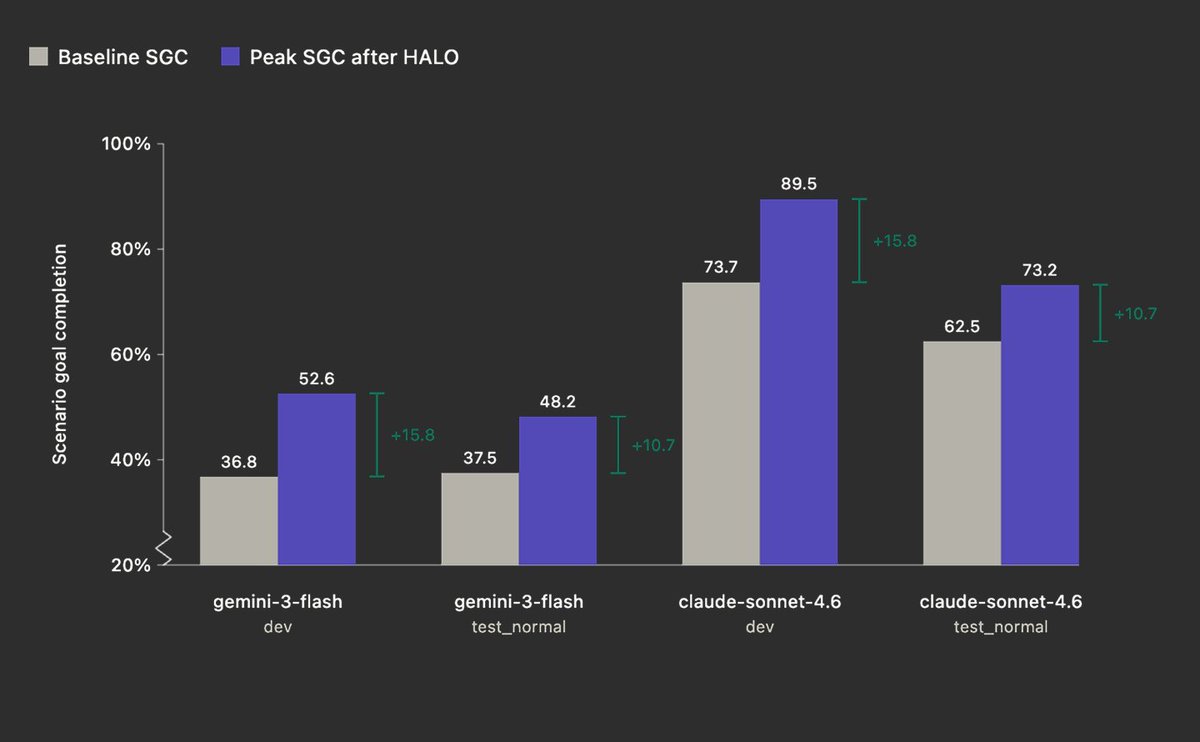

We’re introducing HALO 😇 Hierarchical Agent Loop Optimizer. HALO is an RLM-based agent optimization technique capable of recursively self-improving agents by analyzing their execution traces and suggesting changes. This work is inspired by the Mismanaged Genius Hypothesis proposed by @a1zhang and @lateinteraction earlier this month. TL;DR: we improved performance on AppWorld (Sonnet 4.6) from 73.7 --> 89.5 (+15.8) by giving HALO-RLM access to harness trace data and asking it to identify issues. The feedback from HALO surfaced failures in the harness such as hallucinated tool calls, redundant arguments in tools, refusal loops, and semantic correctness issues. Each issue mapped cleanly to a direct prompt update. We then fed these findings into Cursor (Opus 4.6) and asked the coding agent to update the underlying harness. We repeated this trace -> HALO-RLM analysis -> code update loop until the score plateaued. Today we’re open-sourcing the core HALO-RLM framework, evals, and data for further review.
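The trace -> analysis -> code update loop described above can be sketched as a generic "iterate until the score plateaus" driver. This is a minimal illustration, not HALO's code: `optimize_until_plateau`, the three callables (standing in for the eval harness, HALO-RLM, and the coding agent), and the `min_delta`/`patience` stopping rule are all assumptions made for the example.

```python
from typing import Callable, List, Tuple, TypeVar

H = TypeVar("H")  # whatever represents a harness (prompts, tool specs, code)


def optimize_until_plateau(
    harness: H,
    run_eval: Callable[[H], Tuple[float, List[str]]],  # harness -> (score, traces)
    analyze: Callable[[List[str]], List[str]],  # traces -> findings (stand-in for HALO-RLM)
    update: Callable[[H, List[str]], H],  # findings -> edited harness (stand-in for coding agent)
    min_delta: float = 0.5,
    patience: int = 1,
    max_rounds: int = 10,
) -> Tuple[H, List[float]]:
    """Repeat trace -> analysis -> update; stop once gains stay below `min_delta`."""
    score, traces = run_eval(harness)
    best, best_harness = score, harness
    history = [score]
    stale = 0
    for _ in range(max_rounds):
        findings = analyze(traces)
        if not findings:  # analyzer found nothing left to fix
            break
        harness = update(harness, findings)
        score, traces = run_eval(harness)
        gain = score - best
        history.append(score)
        if score > best:
            best, best_harness = score, harness
        # Count consecutive rounds whose gain is too small to matter.
        stale = 0 if gain >= min_delta else stale + 1
        if stale >= patience:
            break
    return best_harness, history


# Toy run: made-up scores, one per harness version.
scores = [50.0, 60.0, 65.0, 66.0, 66.2, 70.0]
result = optimize_until_plateau(
    0,
    run_eval=lambda h: (scores[h], [f"trace-{h}"]),
    analyze=lambda traces: ["fix"],
    update=lambda h, findings: h + 1,
)
print(result)  # (4, [50.0, 60.0, 65.0, 66.0, 66.2])
```

Keeping the best-scoring harness separate from the latest one means a regressing final round never overwrites an earlier, better version.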

What if understanding a video was more like navigating a map?🤔 And what if that made compute scale logarithmically (not linearly) with video length?! New preprint🎉: 🗺️VideoAtlas: Navigating Long-Form Video in Logarithmic Compute
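The post does not say how VideoAtlas navigates a video, so the following is only a generic illustration of why map-style, coarse-to-fine navigation can scale logarithmically: split the current span into a few children, score each, and zoom into the best, so the number of scoring calls grows with the depth of the hierarchy rather than the number of frames. `navigate` and the `relevance(start, end)` scorer are hypothetical stand-ins, not the preprint's method.

```python
from typing import Callable, Tuple


def navigate(
    num_frames: int,
    relevance: Callable[[int, int], float],
    branching: int = 4,
    leaf_size: int = 1,
) -> Tuple[Tuple[int, int], int]:
    """Coarse-to-fine descent: split the current span into `branching` children,
    score each with `relevance(start, end)`, and zoom into the best one.

    Returns the final [start, end) span and how many times `relevance` was called.
    """
    if branching < 2 or leaf_size < 1:
        raise ValueError("need branching >= 2 and leaf_size >= 1")
    start, end, calls = 0, num_frames, 0
    while end - start > leaf_size:
        step = -(-(end - start) // branching)  # ceiling division
        children = [(s, min(s + step, end)) for s in range(start, end, step)]
        scores = [relevance(s, e) for s, e in children]
        calls += len(children)
        start, end = children[scores.index(max(scores))]
    return (start, end), calls


# Toy relevance: pretend only the span containing frame 700 looks relevant.
target = 700
span, calls = navigate(1024, lambda s, e: 1.0 if s <= target < e else 0.0)
print(span, calls)  # (700, 701) 20
```

Here 1024 frames need 20 scoring calls instead of 1024; each extra factor of `branching` in video length adds only one more level of `branching` calls.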

Thrilled to present GEPA as an Oral Talk and Poster at ICLR 2026 this Friday in Rio! 🇧🇷
Apr 24
Oral Session 3A (Agents), 10:30 AM BRT, Amphitheater
Poster Session 4, 3:15 PM, Pavilion 3
x.com/LakshyAAAgrawa…
Let's recap what's happened since we released GEPA last year 🧵

gpt-5.5 prompt for codex seems to have a duplicated line trying to get it to not talk about creatures?
Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query.
[...]
Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query
gh link: github.com/openai/codex/b…