AI & Scientific Discovery

@AImeetsScience

We host weekly seminars on how AI accelerates research and enables breakthroughs. Co-organized by @ChicagoHAI, @xxxxiaol, @HaokunLiu5280, and Zhiyuan Han.

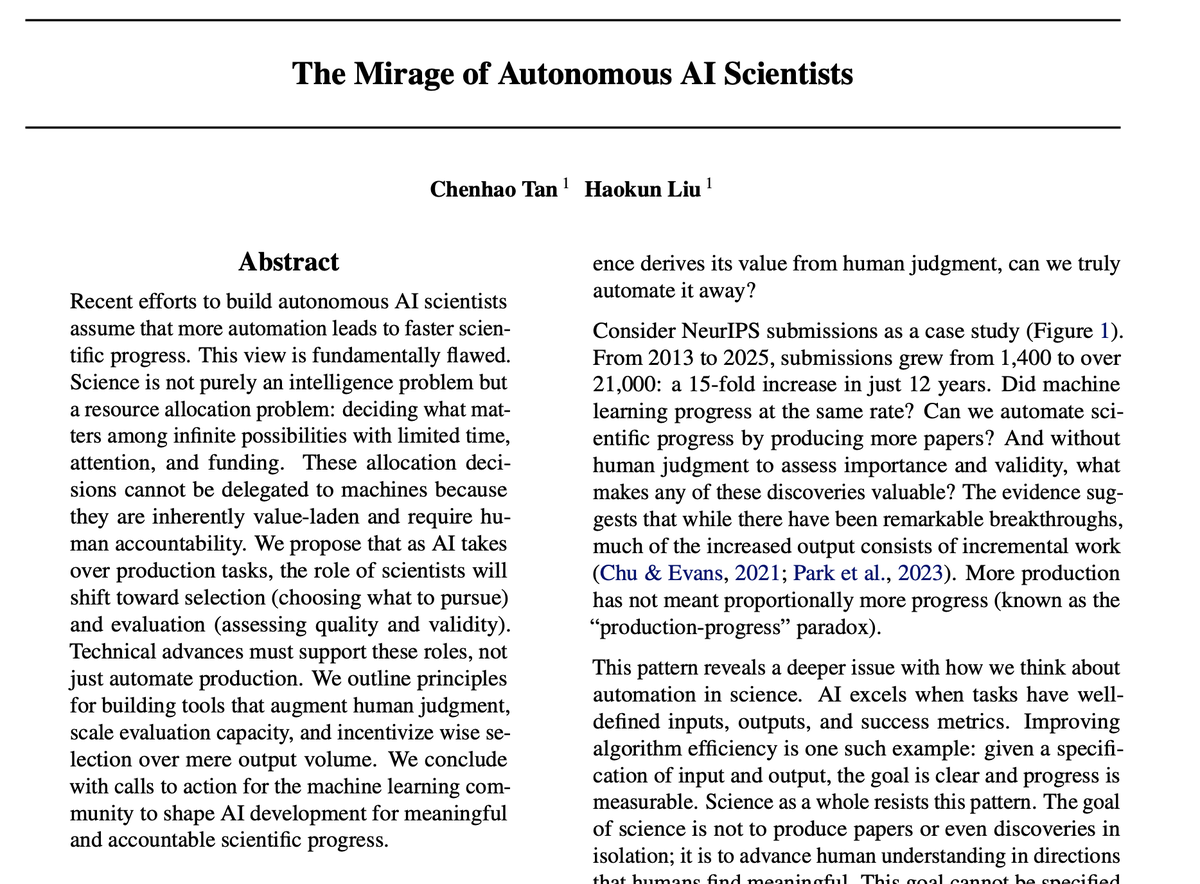

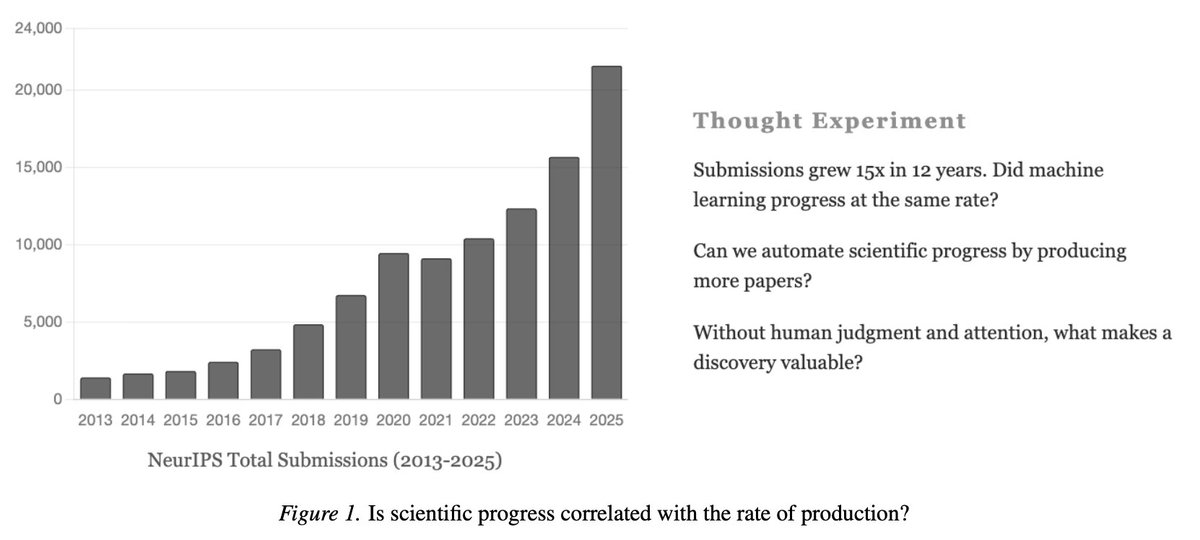

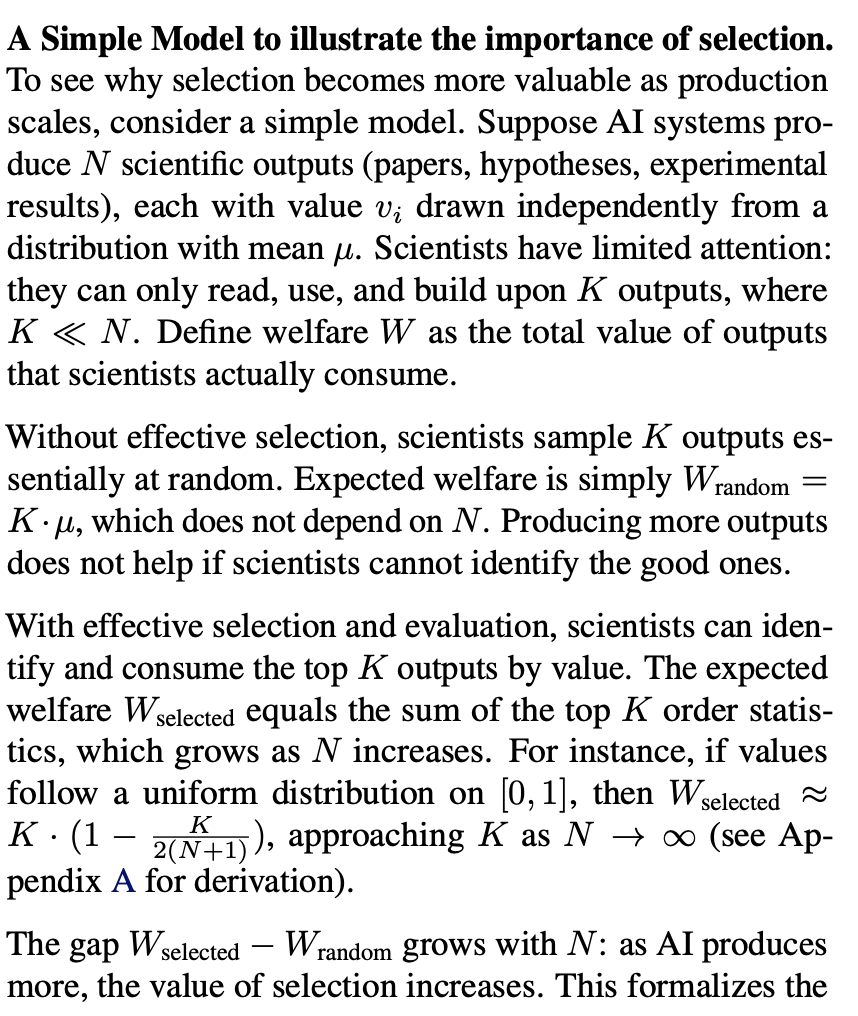

AI can accelerate scientific discovery, but only if we get the scientist–AI interaction right.

The dream of “autonomous AI scientists” is tempting: machines that generate hypotheses, run experiments, and write papers. But science isn’t just an automation problem; it’s also a resource allocation problem: deciding what matters, which hypotheses to test, and which results to trust.

As AI expands the search space and eases knowledge production, human scientists will increasingly act as selectors and evaluators. Supporting these roles effectively is critical for meaningful progress.

To help enable this shift, we’re introducing Hypogenic.ai, a platform for idea selection and evaluation.

💡 IdeaHub: collective rating and discussion of research ideas.

🧠 Ideation Assistant: AI-driven research ideation.

Science will move faster only when we pair automation with effective scientist–AI interaction.

Read the full piece here 👉 cichicago.substack.com/p/the-mirage-o…

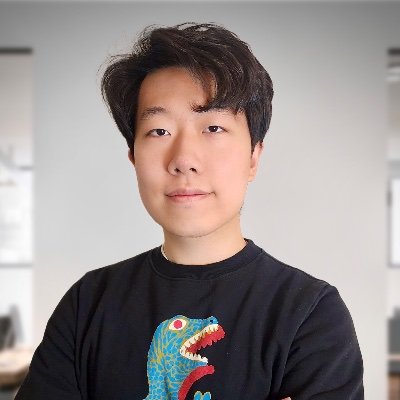

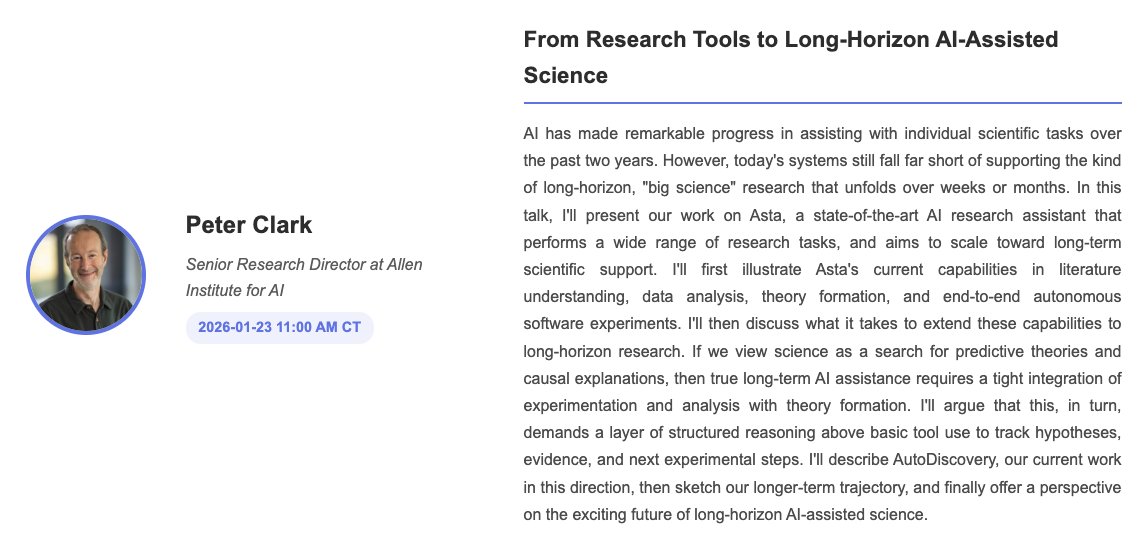

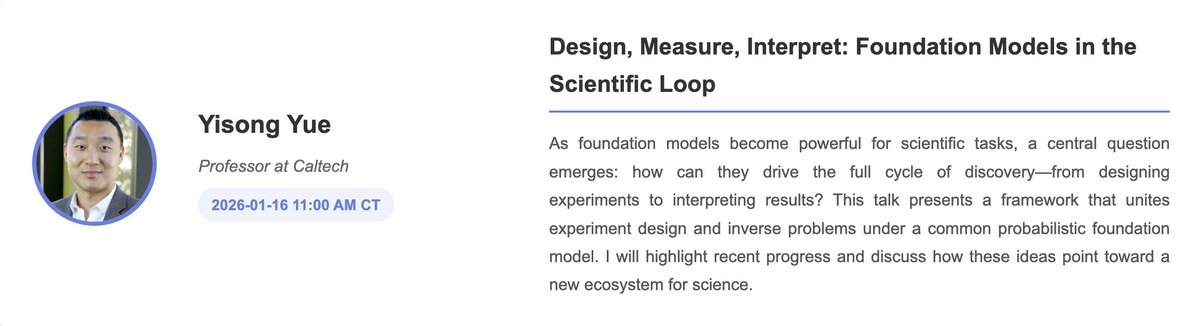

Happy new year! The AI & Scientific Discovery Seminar is returning this quarter.

Last quarter was incredible, from protein design to AI scientists to automated bio labs. Huge thanks to all our amazing speakers and attendees 🙌

We’re kicking off Winter Quarter with an 🔥 lineup, starting this Friday at 11am CT!

👉 @boknilev will share how interpretability methods are driving scientific discovery.

Links in the thread.

@yisongyue @cgeorgiaw @HannesStaerk @borisbolliet @paco_astro
