AI can optimize materials 🤘 Our (@pabbeel, @svlevine, @AIatMeta) proposed transformer model 𝗖𝗹𝗶𝗾𝘂𝗲𝗙𝗹𝗼𝘄𝗺𝗲𝗿, combined with 𝗲𝘃𝗼𝗹𝘂𝘁𝗶𝗼𝗻 strategies, discovers materials that optimize target properties. arxiv.org/abs/2603.06082

Berkeley AI Research

@berkeley_ai

We're graduate students, postdocs, faculty and scientists at the cutting edge of artificial intelligence research.

Introducing M²RNN: Non-Linear RNNs with Matrix-Valued States for Scalable Language Modeling. We bring back non-linear recurrence to language modeling and show that it has been held back by small state sizes, not by non-linearity itself. 📄 Paper: arxiv.org/abs/2603.14360 💻 Code: github.com/open-lm-engine… 🤗 Models: huggingface.co/collections/op…

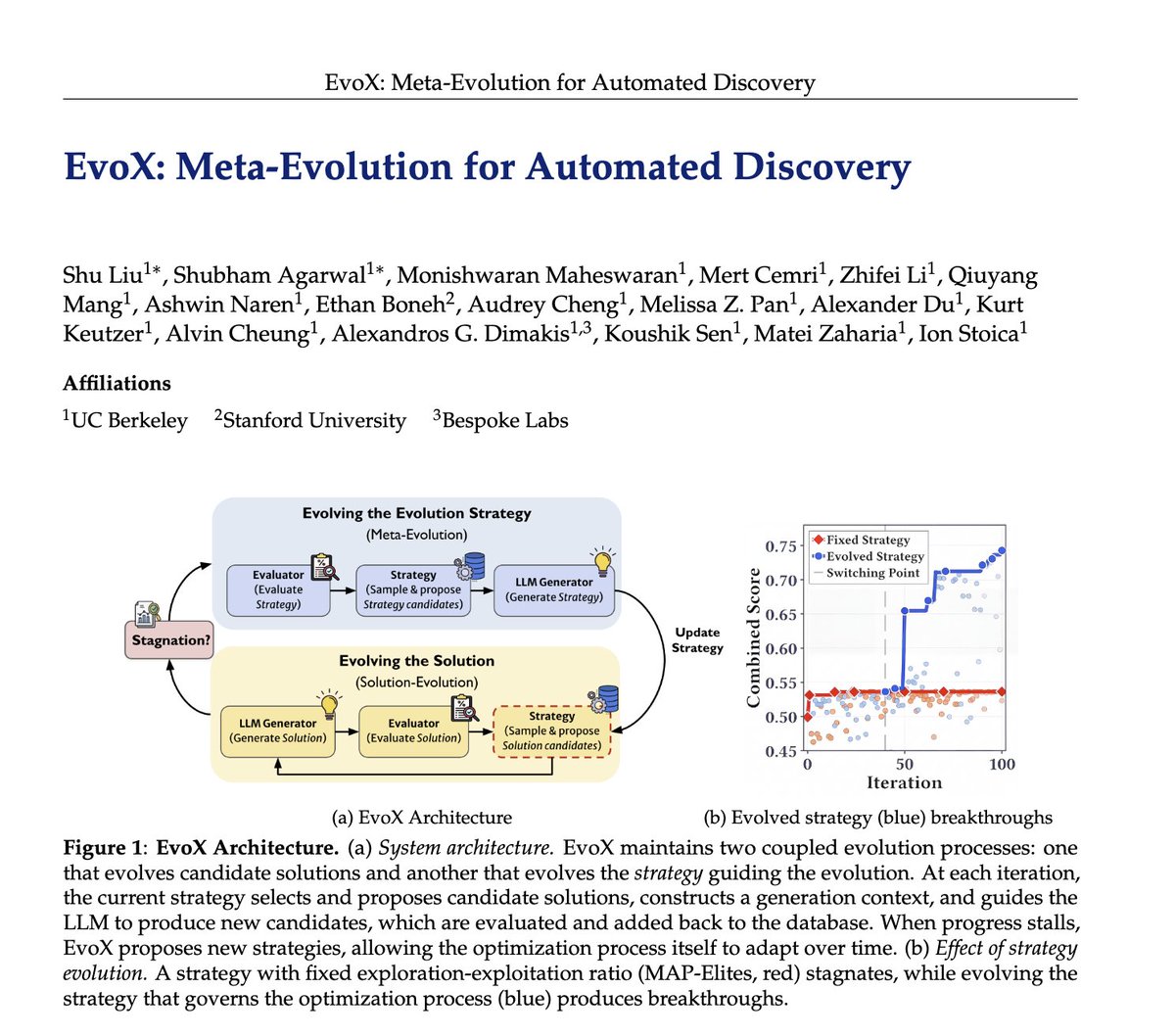

Researchers spend hours and hours hand-crafting the strategies behind LLM-driven optimization systems like AlphaEvolve: deciding which ideas to reuse, when to explore vs. exploit, and what mutations to try. 🤖 But what if AI could evolve its own evolution process? We introduce EvoX, a meta-evolution pipeline that lets AI evolve the strategy guiding the optimization. It achieves high-quality solutions for <$5, while existing open systems and even Claude Code cost 3-5× more on some tasks. Across ~200 optimization problems, EvoX delivers the strongest overall results: it often outperforms AlphaEvolve, OpenEvolve, GEPA, and ShinkaEvolve on math and systems tasks, exceeds human SOTA, and improves median performance by up to 61% on 172 competitive programming problems. 👇

𝗞-𝗺𝗲𝗮𝗻𝘀 𝗶𝘀 𝘀𝗶𝗺𝗽𝗹𝗲. 𝗠𝗮𝗸𝗶𝗻𝗴 𝗶𝘁 𝗳𝗮𝘀𝘁 𝗼𝗻 𝗚𝗣𝗨𝘀 𝗶𝘀𝗻’𝘁. That’s why we built Flash-KMeans, an IO-aware implementation of exact k-means that rethinks the algorithm around modern GPU bottlenecks. By attacking the memory bottlenecks directly, Flash-KMeans achieves a 30x speedup over cuML and a 200x speedup over FAISS, with the same exact algorithm, just engineered for today’s hardware. At the million-point scale, Flash-KMeans can complete a k-means iteration in milliseconds. A classic algorithm, redesigned for modern GPUs. Paper: arxiv.org/abs/2603.09229 Code: github.com/svg-project/fl…
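For readers who want a reminder of what one "exact k-means iteration" computes, here is a minimal pure-Python sketch of the classic Lloyd step (assign each point to its nearest centroid, then recompute the means). It is only the textbook algorithm, not the Flash-KMeans GPU implementation. The function names `kmeans_step` and `kmeans` are hypothetical and chosen for illustration.

```python
import random


def kmeans_step(points, centroids):
    """One Lloyd iteration: nearest-centroid assignment, then mean update."""
    k = len(centroids)
    dim = len(centroids[0])
    sums = [[0.0] * dim for _ in range(k)]
    counts = [0] * k
    labels = []
    for p in points:
        # Assignment step: pick the centroid with the smallest squared distance.
        best = min(
            range(k),
            key=lambda j: sum((a - b) ** 2 for a, b in zip(p, centroids[j])),
        )
        labels.append(best)
        counts[best] += 1
        for d in range(dim):
            sums[best][d] += p[d]
    # Update step: each centroid becomes the mean of its assigned points.
    # Assumption for this sketch: an empty cluster keeps its old centroid.
    new_centroids = [
        [s / counts[j] for s in sums[j]] if counts[j] else list(centroids[j])
        for j in range(k)
    ]
    return new_centroids, labels


def kmeans(points, k, iters=20, seed=0):
    """Run Lloyd iterations from k randomly sampled points until stable."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = []
    for _ in range(iters):
        new_centroids, labels = kmeans_step(points, centroids)
        if new_centroids == centroids:  # assignments no longer change the means
            break
        centroids = new_centroids
    return centroids, labels
```

The assignment loop touches every point-centroid pair, which gives a sense of why memory traffic matters when the same computation is run at scale.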

Excited to announce our #ICRA2026 workshop: Beyond Teleoperation. Teleop, sim, human videos... we have different ideas to scale robot data, but how do they all fit together? 🧩 Join us for exciting talks, panel discussions, and a Best Paper Award! Read on for details! 🧵👇

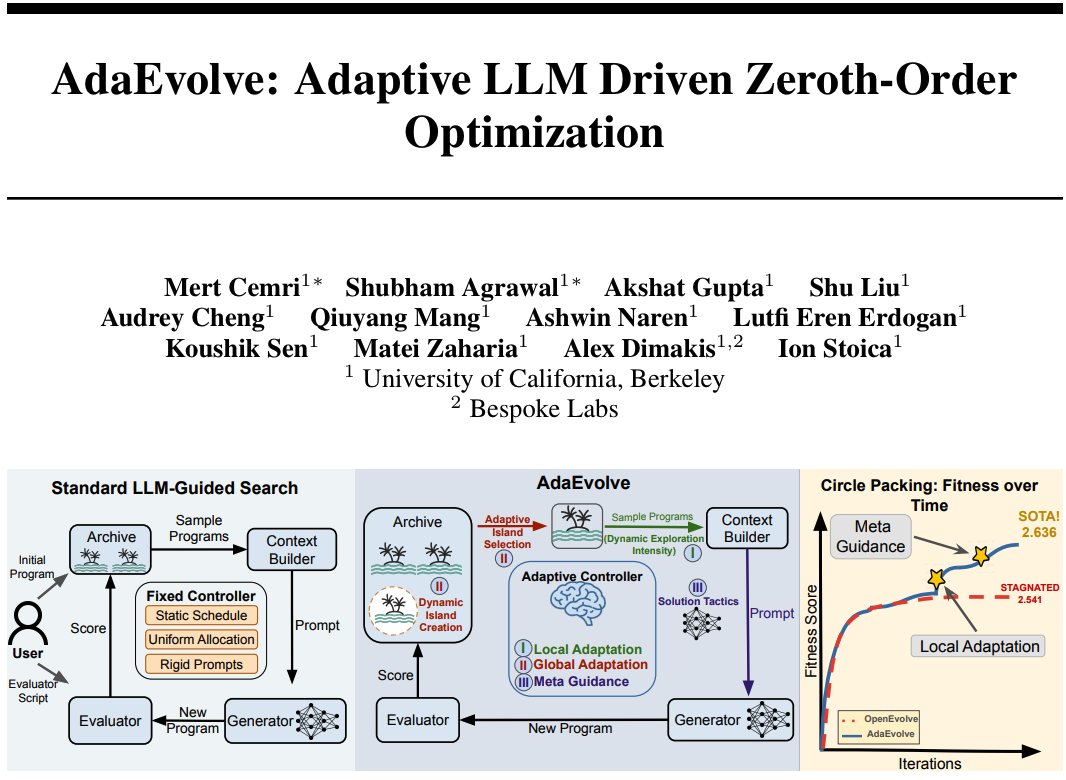

AlphaEvolve proved LLMs can discover novel algorithms, but it remains closed-source, and open-source alternatives (OpenEvolve, GEPA) rely on rigid, static search policies. Introducing AdaEvolve: a fully adaptive evolutionary algorithm that dynamically adjusts its own search strategy based on observed progress. It matches or beats AlphaEvolve and best known Human SOTA on math and systems benchmarks, and boosts Frontier-CS median scores by 33% over the best open-source baseline across 185 tasks. 🧵👇 (1/n)
