Marco

384 posts

A small thank you to everyone using Claude: We’re doubling usage outside our peak hours for the next two weeks.

Perplexity literally has an official MCP server on their docs site right now. One-click install for Cursor, VS Code, Claude Desktop. Today, at their own developer conference, their CTO says they’re moving away from MCP internally.

This tells you everything about where the protocol actually stands. The company that built MCP integrations, shipped them to developers, and promoted them to the community ran into the same wall everyone else has: MCP’s spec hasn’t been updated since November 2025, the security model is basically nonexistent, and stdio transport breaks in any real production environment.

APIs and CLIs won this round because they already solved the problems MCP is still trying to define. Auth, versioning, rate limiting, monitoring, all battle-tested for decades. Every enterprise procurement team on earth can evaluate a REST API. Nobody’s compliance department is signing off on a protocol where a Knostic scan found zero authentication across nearly 2,000 servers.

Perplexity is targeting $656 million ARR by end of 2026. Their APIs are already in hundreds of millions of Samsung devices and six of the Mag 7. That revenue doesn’t flow through experimental protocols. It flows through endpoints that Fortune 500 IT departments can audit.

One of MCP’s most prominent adopters just told a room full of developers to use the tools that shipped 30 years ago. That’s the most honest assessment of the protocol’s production readiness anyone has given.

The cofounder and CTO of Perplexity, @denisyarats, just said that internally at Perplexity they’re moving away from MCPs and using APIs and CLIs instead 👀

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:
- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)

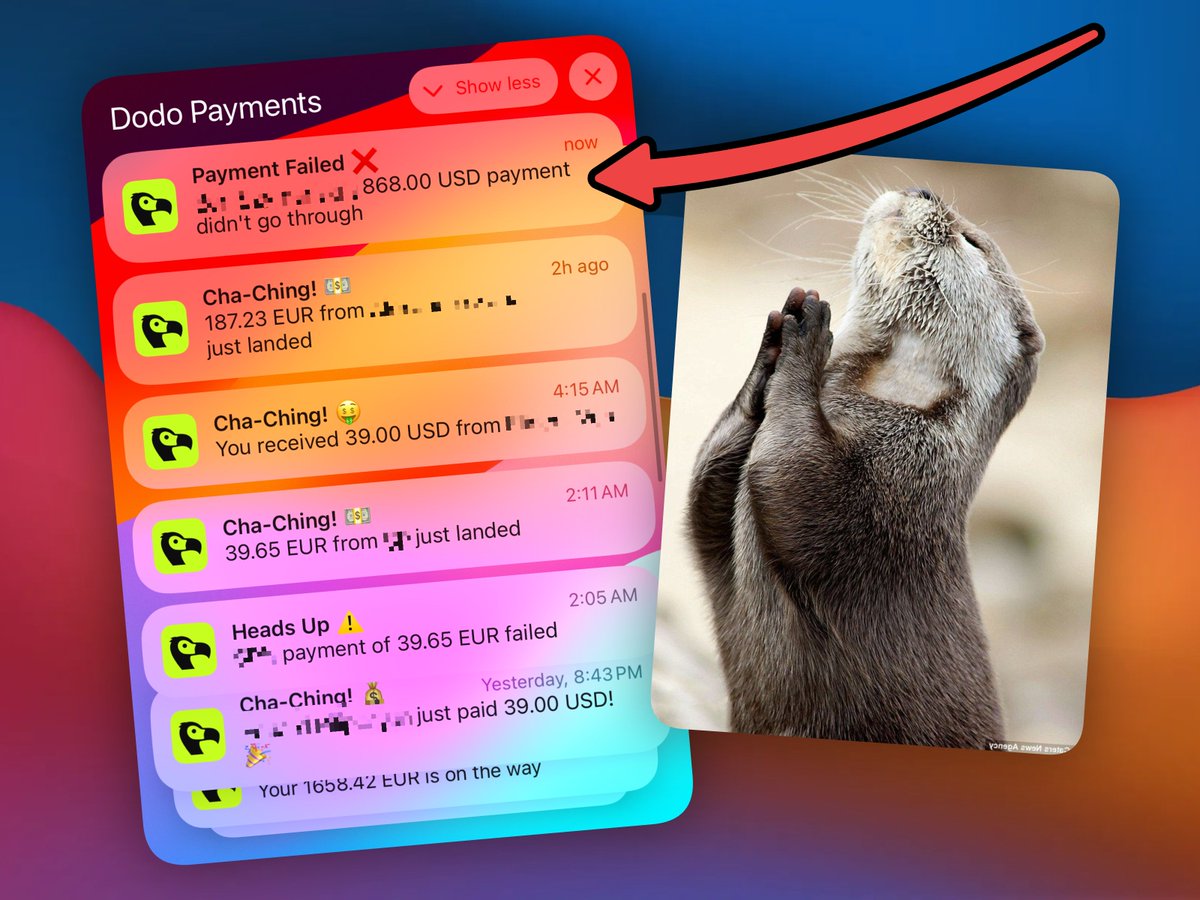
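
The loop described in the post above (try a change, run a fixed-budget training job, keep the change only if validation loss drops) can be sketched roughly as follows. This is a toy illustration, not the code from the autoresearch repo: `run_search`, `toy_loss`, and `toy_propose` are hypothetical names, the "training run" is replaced by a simple quadratic function, and the "agent" is replaced by a random perturbation. Each entry appended to `accepted` stands in for one git commit.

```python
import random
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]


def run_search(
    evaluate: Callable[[Config], float],
    propose: Callable[[Config], Config],
    start: Config,
    steps: int,
) -> Tuple[Config, float, List[float]]:
    """Greedy accept-if-better loop: keep a candidate only if it lowers the loss."""
    best = dict(start)
    best_loss = evaluate(best)
    accepted = [best_loss]  # first entry is the baseline; later entries are "commits"
    for _ in range(steps):
        candidate = propose(best)   # stand-in for the agent editing the training script
        loss = evaluate(candidate)  # stand-in for one fixed-budget training run
        if loss < best_loss:
            best, best_loss = candidate, loss
            accepted.append(loss)
    return best, best_loss, accepted


def toy_loss(cfg: Config) -> float:
    # Hypothetical "validation loss": minimized when lr == 0.3.
    return (cfg["lr"] - 0.3) ** 2


rng = random.Random(0)  # seeded so repeated runs behave the same


def toy_propose(cfg: Config) -> Config:
    # Hypothetical "agent": nudges the single hyperparameter at random.
    return {"lr": cfg["lr"] + rng.uniform(-0.1, 0.1)}


best, best_loss, accepted = run_search(toy_loss, toy_propose, {"lr": 1.0}, steps=200)
print(f"commits kept: {len(accepted) - 1}, final loss: {best_loss:.6f}")
```

Because a candidate is only kept when it improves on the current best, the accepted losses form a strictly decreasing sequence, which is presumably what the staircase of dots in the image reflects.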

People are already using Computer to solo-run their own D2C and consulting businesses. As the tech gets better, they'll be able to scale faster and do less work. The most valuable skills will be 1) agency and 2) ability to utilize AI to get more leverage.

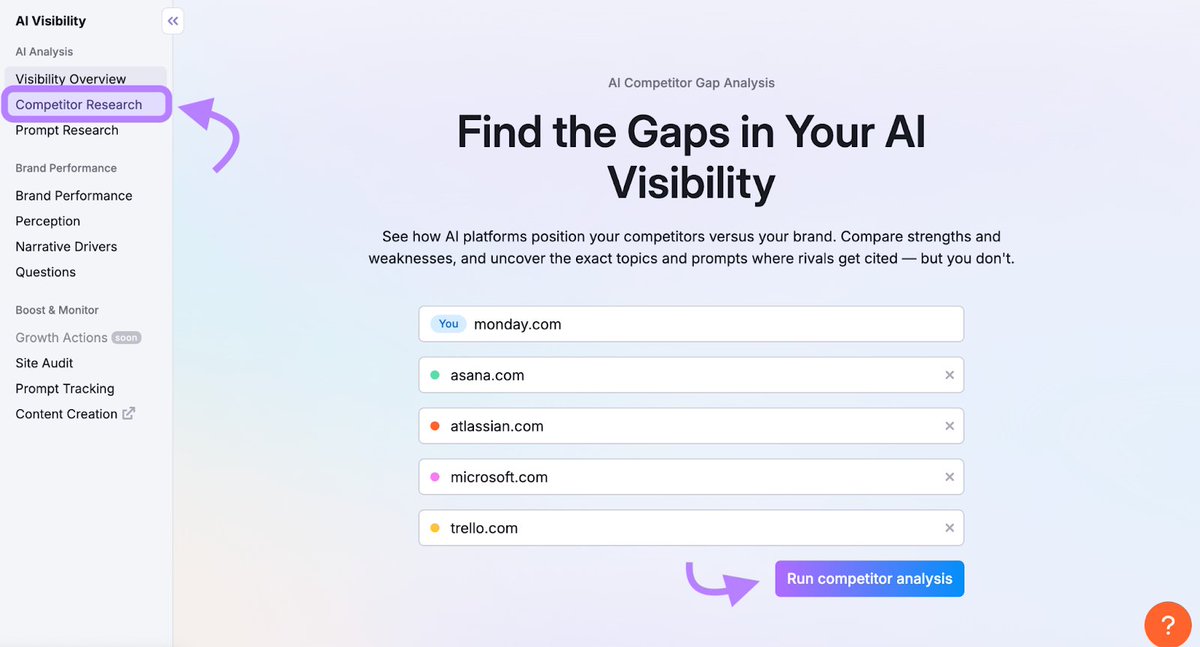

BREAKING: Claude can now do SEO like a $10,000/month agency (for free). Here are 7 insane Claude Cowork prompts that can take your biz to $100k/month: (Save for later)
