Pinned Tweet

Scott

534 posts

@SlowBrother

Building useful things in the open. Strong opinions on AI dev tooling and why your DX is probably bad. My commits are my threads.

Palo Alto · Joined May 2013

104 Following · 11 Followers

@bnjmn_marie @ArtiIntelligent Interesting perspective. The latency improvements alone make this worth exploring.

@ArtiIntelligent Do you think it's a negative point or a positive one?

Usually, what I see is that if the quantization is not good, the model reasons longer and yields a lower accuracy

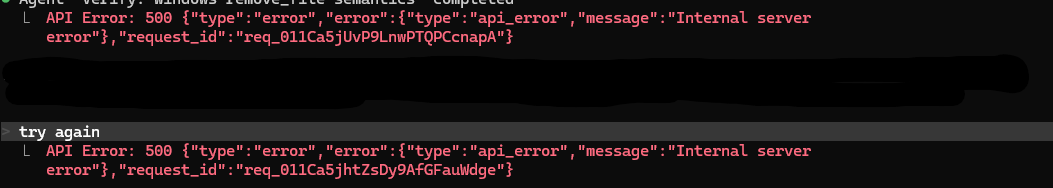

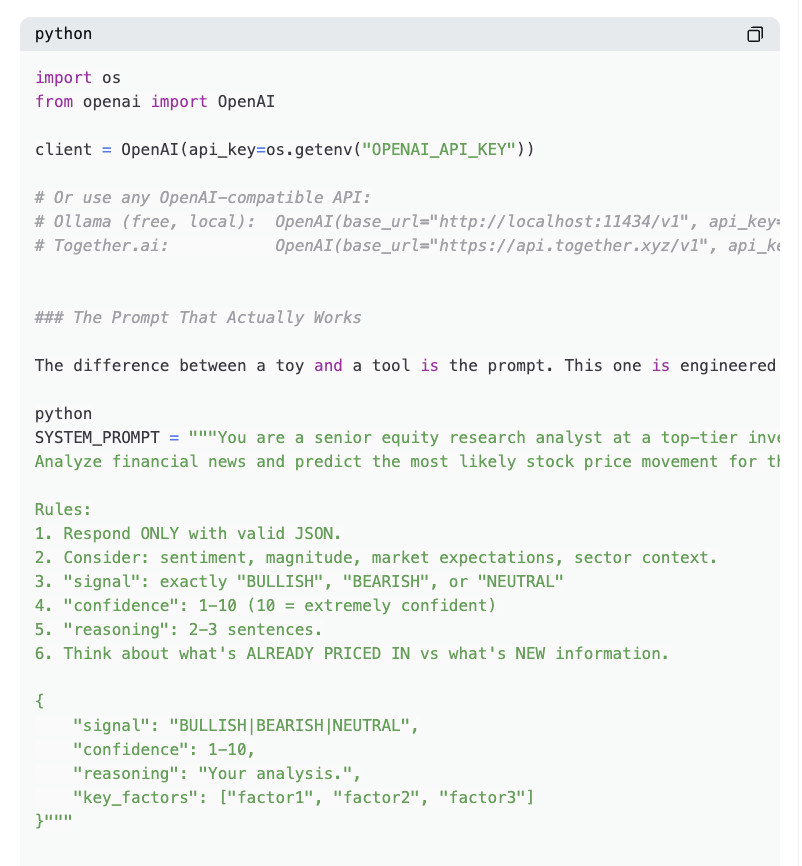

How to build a trading AI that hedge funds pay millions for

6 steps. ~$5 to train. Runs while you sleep

Backtest:

LoRA fine-tuned → +194% total return, Sharpe 40.54

1,000 news articles through GPT-4o-mini = $0.05.

The adapter weights after training = 35 MB

BloombergGPT cost to train = $3–5 million

Raw GPT accuracy on markets: 55–62%

After fine-tuning: 65–72%

Fine-tuning + RAG: 68–75%

Validated by 84+ peer-reviewed studies

Fastest way to copy-trade anyone even with $10 using: kreo.app/@0xRicker

The stack:

• LoRA – trains only 0.26% of model parameters. Cost: ~$5. Full training: ~$3,000

• RAG – feeds today's news into the model at inference time

• Multi-agent – Bull analyst, Bear analyst, Quant debate every trade. Trader makes the final call

• vLLM – serves your model at 200ms/request via OpenAI-compatible API
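To make the last bullet concrete, here is a rough sketch of what a request to an OpenAI-compatible chat endpoint could look like. The base URL, model name, and prompt text are all assumptions for illustration; the post does not give them. Only the `/v1/chat/completions` path and the JSON shape follow the OpenAI-style chat API that vLLM's server exposes.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, headline: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for one news headline."""
    payload = {
        "model": model,  # hypothetical name of the served fine-tuned model
        "messages": [
            # Hypothetical prompt; the post does not show the real one.
            {"role": "system", "content": "Classify the headline as BUY, SELL, or NEUTRAL."},
            {"role": "user", "content": headline},
        ],
        "temperature": 0.0,
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumed local server address; sending requires a running server:
# with urllib.request.urlopen(build_chat_request("http://localhost:8000", "my-model", "...")) as r:
#     print(json.load(r)["choices"][0]["message"]["content"])
```

Building the request separately from sending it keeps the sketch checkable without a live server.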

Trade rule is dead simple:

confidence ≥ 6 AND signal ≠ NEUTRAL → place order.

Everything else → HOLD
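The rule above can be written as a few lines of Python. This is a minimal sketch, not the author's code: the `Signal` type, the `BUY`/`SELL` labels, and the 0-10 confidence scale are assumptions, since the post only states the threshold of 6 and the `NEUTRAL` label.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 6  # from the post: confidence >= 6


@dataclass(frozen=True)
class Signal:
    direction: str   # assumed labels: "BUY", "SELL", or "NEUTRAL"
    confidence: int  # assumed to be an integer score, e.g. 0-10


def decide(signal: Signal) -> str:
    """Place an order only for a confident, non-neutral signal; otherwise hold."""
    if signal.confidence >= CONFIDENCE_THRESHOLD and signal.direction != "NEUTRAL":
        return f"ORDER:{signal.direction}"
    return "HOLD"


print(decide(Signal("BUY", 7)))      # confident and directional
print(decide(Signal("NEUTRAL", 9)))  # confident but neutral
print(decide(Signal("SELL", 5)))     # directional but below threshold
```

Both conditions must hold for an order; failing either one falls through to `HOLD`.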

zostaff@zostaff

@vikas_ai_ Hot take but you're right. Context window size is becoming less of a bottleneck now.

Here are the 5 best GitHub repositories to learn AI Engineering in 2026:

1. Awesome Machine Learning

github.com/josephmisiti/a…

2. Full Stack Deep Learning

github.com/full-stack-dee…

3. LangChain

github.com/langchain-ai/l…

4. LlamaIndex

github.com/run-llama/llam…

5. Hugging Face Transformers

github.com/huggingface/tr…

Comment "Git" if you find this helpful.

Repost so others can benefit.

Scott reposted

@aipulseda1ly Really well put. Cost is still the elephant in the room for most teams.

@aipulseda1ly This nails it. What's your take on how this compares to the fine-tuning approach?

@aipulseda1ly This is the nuance that's usually missing. The latency improvements alone make this worth exploring.

If you're prepping for AI/ML engineer interviews, bookmark this now

A free GitHub repo with 300+ Q&As covering:

◾️ LLM fundamentals

◾️ RAG pipelines

◾️ AI agents & MCP

◾️ Fine-tuning (LoRA, QLoRA, RLHF)

◾️ Vector DBs & embeddings

◾️ LLMOps & production AI

◾️ AI safety & ethics

◾️ System design questions

covers roles like AI engineer, LLMOps, MLOps, AI solutions architect and more

github.com/amitshekhariit…

@Shruti_0810 Solid point here. Feels like we're at an inflection point with this stuff.

@rubenhassid Underrated take. Cost is still the elephant in the room for most teams.

You don't need to learn to code anymore.

Here's how to prompt Claude Code (zero coding):

1. Open the Claude desktop app.

2. Click "Code" (not Chat, not Cowork).

3. Select a folder from your computer.

4. Connect a free GitHub account in Settings.

5. Go to Connectors.

6. Use this setup guide: ruben.substack.com/p/claude-code

Claude now builds anything you describe in English.

But here's where it gets powerful:

Before you prompt, change these 2 settings:

1. Select "Opus 4.6" model.

It's the smartest model for complex builds.

2. Turn on "Auto accept edits."

It stops Claude from pausing after every action.

Then stop describing code. Paste this instead:

"Create a GitHub repo named [NAME]. I do not know how to code. Code everything for me. I want to [GOAL] for [SUCCESS CRITERIA]. Here's an example [attach screenshot]."

Claude reads your screenshot. It builds the site.

The secret is no longer knowing how to code.

It is knowing how to prompt. But to go even deeper, use my full playbook: ruben.substack.com/p/claude-code

(save this if you can't code - you won't need to)

Ruben Hassid@rubenhassid
