Red

@TheRedWall__

e/acc | Cyber Security x AI | Claude boyz for life

We've signed an agreement with Google and Broadcom for multiple gigawatts of next-generation TPU capacity, coming online starting in 2027, to train and serve frontier Claude models.

I suspect the IDE will be making a comeback over the next 6 months as people realize that you can get away without reading every line of code, but you better be reading every line of markdown. Surprised more IDEs haven't focused on being the absolute best place to edit markdown.

Google’s Gemma 4 E2B running on-device on iPhone 17 Pro. Gemma 4 is built from the same research as Gemini 3, has image understanding capabilities, and can reason if needed. Running at ~40 tok/s with MLX optimized for Apple Silicon.

North Korean intelligence agents built an entire fake company to compromise one JavaScript developer. And it worked.
UNC1069 didn't hack Axios. They befriended its maintainer. They cloned a real company founder's identity, built a branded Slack workspace with fake employee profiles and LinkedIn post channels, then scheduled a Microsoft Teams call with what appeared to be a full team. During the call, a fake error message said his system needed an update. He installed it. That update was the RAT.
From one developer's laptop, they had everything: npm credentials, publishing access, the keys to a package installed in 80% of cloud environments. Axios gets 100 million downloads per week.
The attackers published two poisoned versions at 12:21 AM UTC on a Sunday night, tagging both the latest and legacy branches within 39 minutes. The malicious dependency had been pre-staged 18 hours earlier with a clean decoy version to build registry history. Three separate RAT payloads were pre-built for macOS, Windows, and Linux. The malware self-deleted after execution to erase forensic evidence.
The poisoned versions were live for about three hours before npm pulled them. Huntress observed 135 endpoints across all operating systems calling the attacker's command-and-control server during that window. Wiz found the malicious versions in roughly 3% of environments scanned. Every affected machine needs full credential rotation: npm tokens, AWS keys, SSH keys, CI/CD secrets, everything in .env files.
The part that keeps getting worse: this isn't isolated. The same threat cluster compromised Trivy (a security scanner), KICS, LiteLLM, and multiple GitHub Actions in the two weeks before Axios. Google estimates hundreds of thousands of stolen secrets are now circulating from these combined attacks.
The maintainer had 2FA enabled. He said himself: "I have 2FA/MFA on practically everything." The exact method of token compromise is still undetermined.
One person. One fake Teams call. 100 million weekly downloads weaponized in under three hours. The npm ecosystem runs on mass trust in individual maintainers who volunteer their time, and North Korean intelligence now has a repeatable playbook for turning that trust into a delivery mechanism.

I’ve deliberately not published blog posts on useful detection ideas and rule-writing methods because I didn’t want LLMs to absorb them. So those ideas stayed private and were shared only with a small group. I doubt I’m the only one making that call. And that probably has consequences for the community over time - not just ours, but any community.

🚨 Anthropic CEO Dario Amodei: “We are so close to these models reaching the level of human intelligence, and yet there doesn't seem to be a wider recognition in society of what's about to happen … There hasn't been a public awareness of the risks.”

🚨: At the quantum level, reality is described not by solid objects, but by probability, energy fields, and interaction.

I know everyone thinks this typeface is racist but it’s the typeface the actual Chinese Olympic team uses

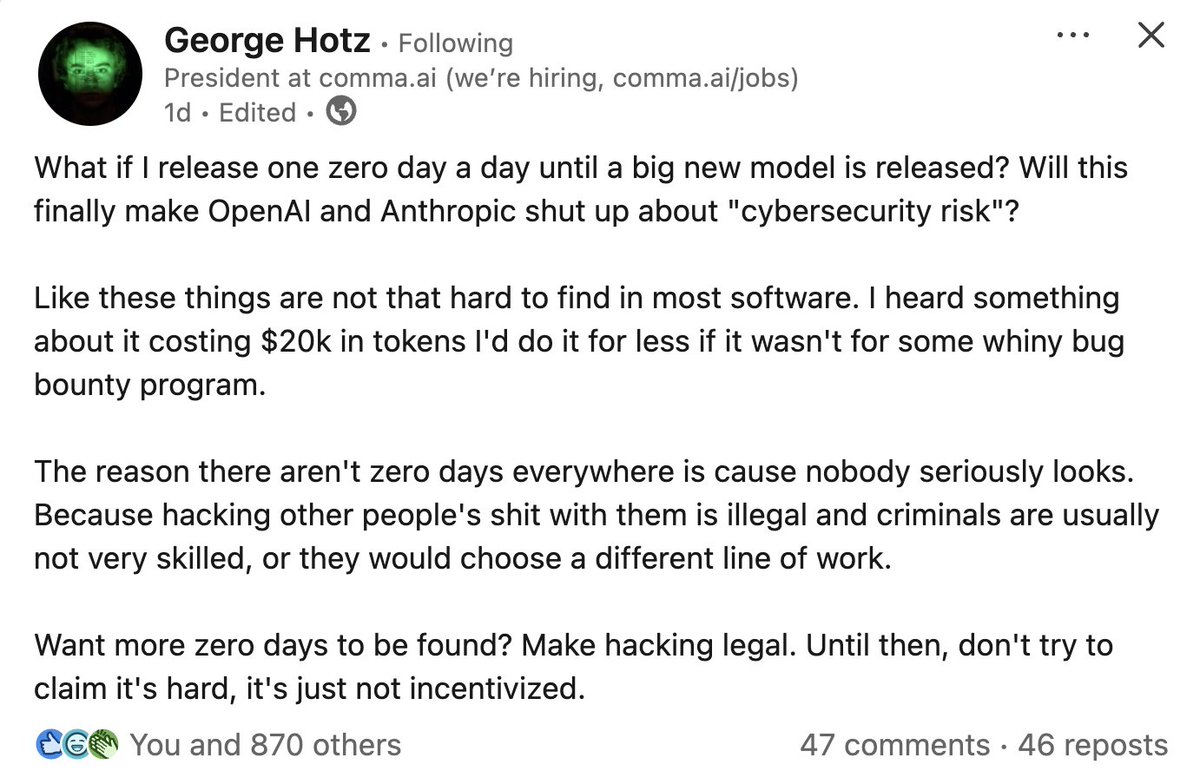

someone at ANTHROPIC just showed CLAUDE finding ZERO DAY vulnerabilities in a live conference demo. claude found a zero day in Ghost, which has 50,000 stars on github and never had a critical security vulnerability in its entire history. it found the blind SQL injection in 90 minutes, stole the admin api key, then did the exact same thing to the linux kernel

Start building with Gemini Embedding 2, our most capable and first fully multimodal embedding model built on the Gemini architecture. Now available in preview via the Gemini API and in Vertex AI.

having a nice website is no longer a sign of trust