George Ho

@_eigenfoo

Natural language processing, Bayesian modeling, open source, crosswords, donuts and coffee. Currently ML at @flatironhealth (he/him/his)

“Let your eyes look straight ahead; fix your gaze directly before you. Give careful thought to the paths for your feet and be steadfast in all your ways” Proverbs 4:25-26

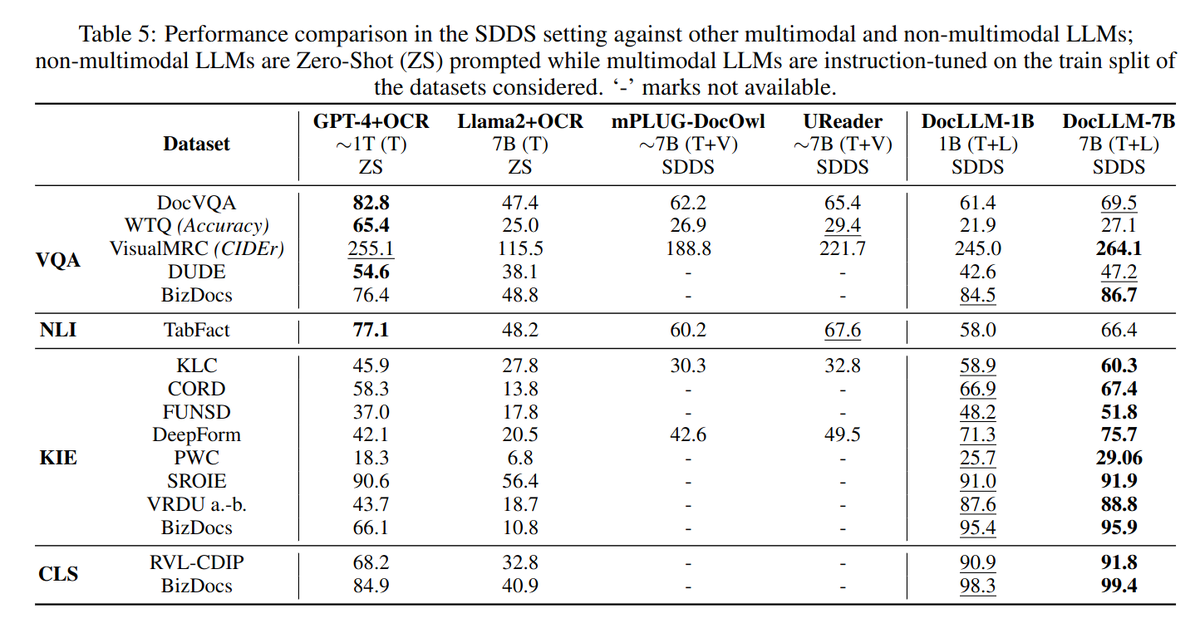

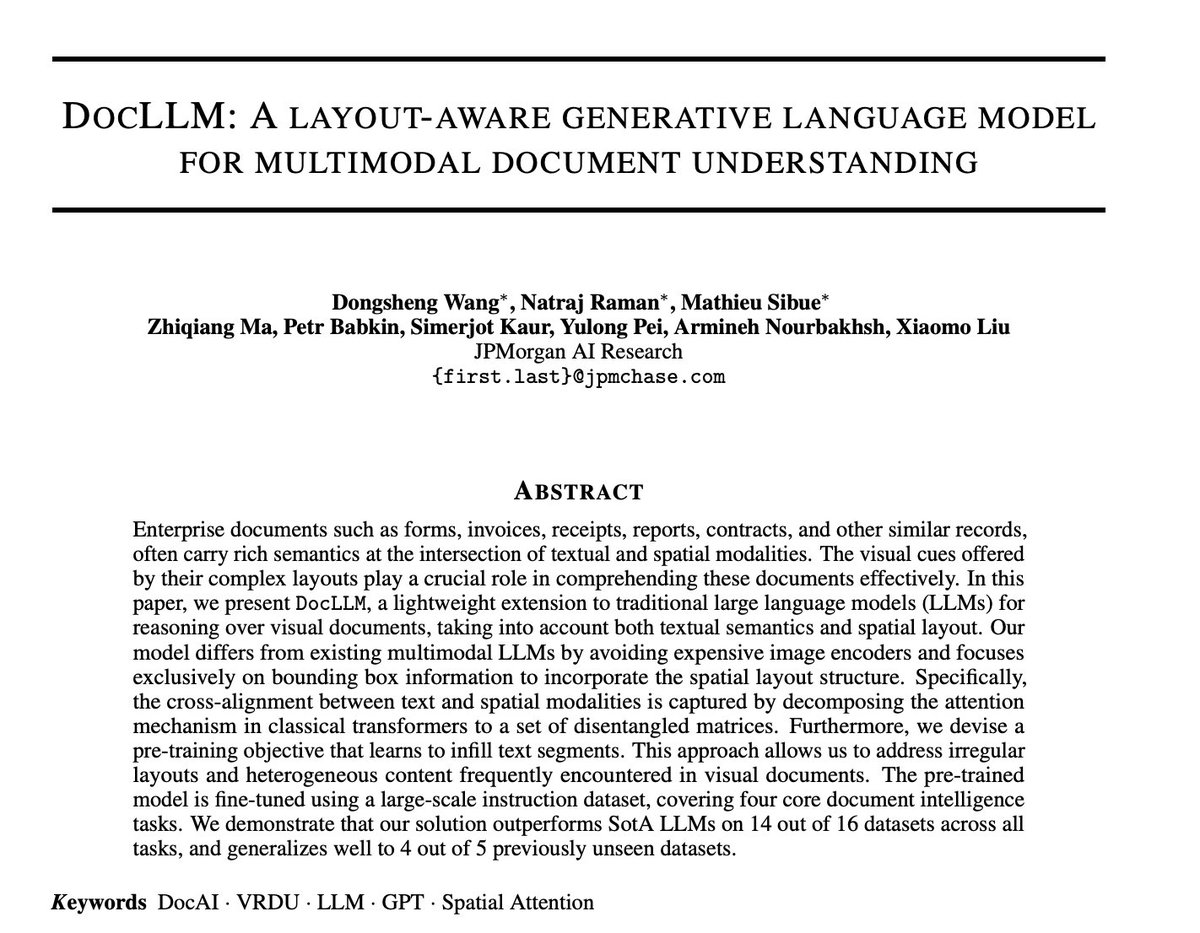

JPMorgan announces DocLLM

A layout-aware generative language model for multimodal document understanding

paper page: huggingface.co/papers/2401.00…

Enterprise documents such as forms, invoices, receipts, reports, contracts, and other similar records, often carry rich semantics at the intersection of textual and spatial modalities. The visual cues offered by their complex layouts play a crucial role in comprehending these documents effectively. In this paper, we present DocLLM, a lightweight extension to traditional large language models (LLMs) for reasoning over visual documents, taking into account both textual semantics and spatial layout. Our model differs from existing multimodal LLMs by avoiding expensive image encoders and focuses exclusively on bounding box information to incorporate the spatial layout structure. Specifically, the cross-alignment between text and spatial modalities is captured by decomposing the attention mechanism in classical transformers to a set of disentangled matrices. Furthermore, we devise a pre-training objective that learns to infill text segments. This approach allows us to address irregular layouts and heterogeneous content frequently encountered in visual documents. The pre-trained model is fine-tuned using a large-scale instruction dataset, covering four core document intelligence tasks. We demonstrate that our solution outperforms SotA LLMs on 14 out of 16 datasets across all tasks, and generalizes well to 4 out of 5 previously unseen datasets.
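For readers wondering what "decomposing the attention mechanism ... to a set of disentangled matrices" could look like in practice, here is a minimal NumPy sketch. It is one plausible reading of the abstract, not the paper's implementation: it assumes the attention score is a sum of four terms (text-to-text, text-to-spatial, spatial-to-text, spatial-to-spatial), each with its own projection matrices, and that the three cross terms are scaled by scalar weights. The function name, the parameter dictionary keys, the `lambdas` weights, the single-head setup, and the causal mask are all assumptions made for illustration.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax; masked entries (-inf) become exactly 0.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def disentangled_attention(text_h, box_h, params, lambdas=(1.0, 1.0, 1.0)):
    """Single-head attention over text and bounding-box embeddings.

    text_h: (n, d) token embeddings.
    box_h:  (n, d) embeddings of each token's bounding box.
    params: dict of (d, d) projection matrices (hypothetical key names).
    lambdas: assumed scalar weights for the three spatial terms.
    """
    n, d = text_h.shape
    # Separate query/key projections per modality; values come from text only.
    q_t, k_t = text_h @ params["Wq_t"], text_h @ params["Wk_t"]
    q_s, k_s = box_h @ params["Wq_s"], box_h @ params["Wk_s"]
    v_t = text_h @ params["Wv_t"]

    lam_ts, lam_st, lam_ss = lambdas
    scores = (
        q_t @ k_t.T                # text-to-text
        + lam_ts * (q_t @ k_s.T)   # text-to-spatial
        + lam_st * (q_s @ k_t.T)   # spatial-to-text
        + lam_ss * (q_s @ k_s.T)   # spatial-to-spatial
    ) / np.sqrt(d)

    # Causal mask (assumed): position i attends only to positions <= i.
    future = np.triu(np.ones((n, n), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)

    weights = softmax(scores, axis=-1)
    return weights @ v_t, weights


def make_params(d, seed=0):
    # Random projections, for demonstration only.
    rng = np.random.default_rng(seed)
    keys = ["Wq_t", "Wk_t", "Wv_t", "Wq_s", "Wk_s"]
    return {k: rng.normal(scale=d ** -0.5, size=(d, d)) for k in keys}
```

Setting all three `lambdas` to zero reduces the sketch to ordinary causal self-attention over the text embeddings, which is a convenient way to see what the spatial terms add. Note that no image encoder appears anywhere: the only layout signal is `box_h`, in line with the abstract's claim that the model relies on bounding box information alone.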

Yesterday was my last day at Hugging Face. The past three years have been exhilarating and I am very proud of what the team has accomplished during that time! Taking a bit of a break from doing open source full time (though I will still contribute to Transformers and Accelerate).