Sebastian Wenninger

16 posts

is there something like google docs, but for markdown? i need a cloud based collaborative markdown editor please.

Oh right, i realize hell freezes over: we reached the point where app > cli. That, combined with the speed increase, also means fewer windows necessary. codex goes brrrr now! developers.openai.com/codex/app/

i've officially switched from claude code to codex

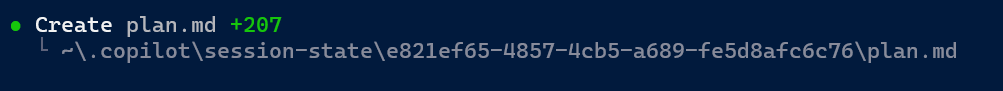

Something is cooking in GitHub #copilot

Been thinking about the Ralph Wiggum loop a bit. We now have an opportunity to do for Open Source what Folding@home did for protein folding research. Spend a few thousand tokens a day on a single run, trying to improve the performance of a random OSS library/project. Thoughts?

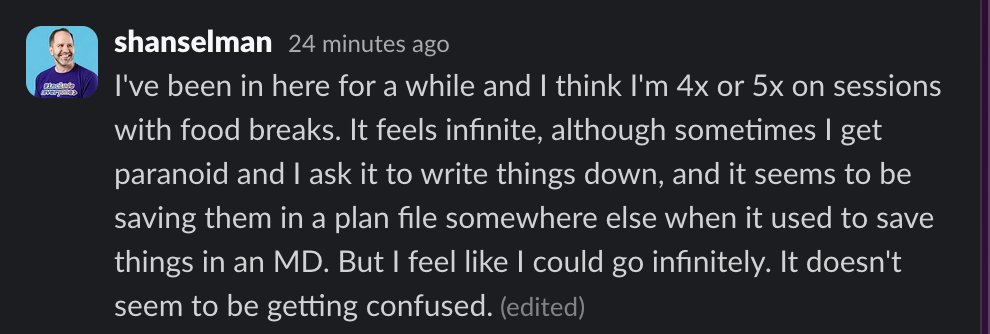

Looked at Anthropic's ralph-wiggum plugin again. Single session, accumulating context, no re-anchoring. This really defeats the purpose.

The original vision by @GeoffreyHuntley:

- Fresh context per iteration
- File I/O as state (not transcript)
- Dumb bash loop, deterministic setup

Anthropic's version? Stop hook that blocks exit and re-feeds the prompt in the SAME session. Transcript grows. Context fills up. Failed attempts pollute future iterations.

The irony: Anthropic's own long-context guidance says "agents must re-anchor from sources of truth to prevent drift." Their plugin doesn't re-anchor. At all.

---

flow-next follows the original vision:

✅ Fresh context per iteration (external bash loop)
✅ File I/O as state (.flow/ directory)
✅ Deterministic setup (same files loaded every iteration)

Plus Anthropic's own guidance:

✅ Re-anchor EVERY task - re-read epic spec, task spec, git state
✅ Re-anchor after context compaction too (compaction shouldn't happen but if it does, we're set)

Plus what we added:

✅ Multi-layered quality gates: tests, lints, acceptance criteria, AND cross-model review via @RepoPrompt
✅ Reviews block until SHIP - not "flag and continue"
✅ Explicit plan → work phases - plan reviewed before code starts
✅ Auto-blocks stuck tasks after N failures
✅ Structured task management - dependencies, status, evidence

Two models > one. Process failures, not model failures. Agents that actually finish what they start.

github.com/gmickel/gmicke…
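
For anyone who wants the loop shape spelled out, here is a rough sketch of the idea (fresh context per iteration, files as state, auto-block after N failures). It is written in Python rather than bash so it is easy to test, and it is not flow-next's actual code: `run_loop`, `load_state`, the `run_agent` callable and the JSON layout are all hypothetical names made up for illustration. In a real setup `run_agent` would presumably start a brand-new agent process each time, so no transcript carries over.

```python
import json
from pathlib import Path
from typing import Callable


def load_state(path: Path) -> dict:
    """Read task state from disk; the file, not a transcript, is the source of truth."""
    if path.exists():
        return json.loads(path.read_text())
    return {"tasks": {}}


def run_loop(
    state_path: Path,
    tasks: list[str],
    run_agent: Callable[[str, dict], bool],  # hypothetical: returns True if the task passed its gates
    max_failures: int = 3,
    max_iterations: int = 50,
) -> dict:
    for _ in range(max_iterations):
        # Re-anchor: reload state from disk at the start of every iteration.
        state = load_state(state_path)
        for name in tasks:
            state["tasks"].setdefault(name, {"status": "todo", "failures": 0})

        pending = [t for t in tasks if state["tasks"][t]["status"] == "todo"]
        if not pending:
            break

        task = pending[0]
        # Assumption: run_agent launches a fresh agent session for this one task.
        passed = run_agent(task, state)

        entry = state["tasks"][task]
        if passed:
            entry["status"] = "done"
        else:
            entry["failures"] += 1
            # Stop retrying a stuck task after max_failures attempts.
            if entry["failures"] >= max_failures:
                entry["status"] = "blocked"

        # Persist the outcome so the next iteration starts from files only.
        state_path.write_text(json.dumps(state, indent=2))

    return load_state(state_path)
```

The point of the design is that nothing survives between iterations except what was written to disk, so a failed attempt leaves behind a failure count and nothing else.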