Ash DCosta

@softwareweaver

🚀 Creator - https://t.co/ocgBEClJlZ, https://t.co/iFjecWvHYM, https://t.co/gM7uDGPBJh 🌟Interested in software and AI.

The task of a radiologist was to read scans. The purpose was to diagnose disease. When AI handles the task, the purpose doesn’t shrink. It grows. Reflecting on CEO Jensen Huang’s insights at #AdobeSummit regarding the task vs. purpose of work in the agentic era.

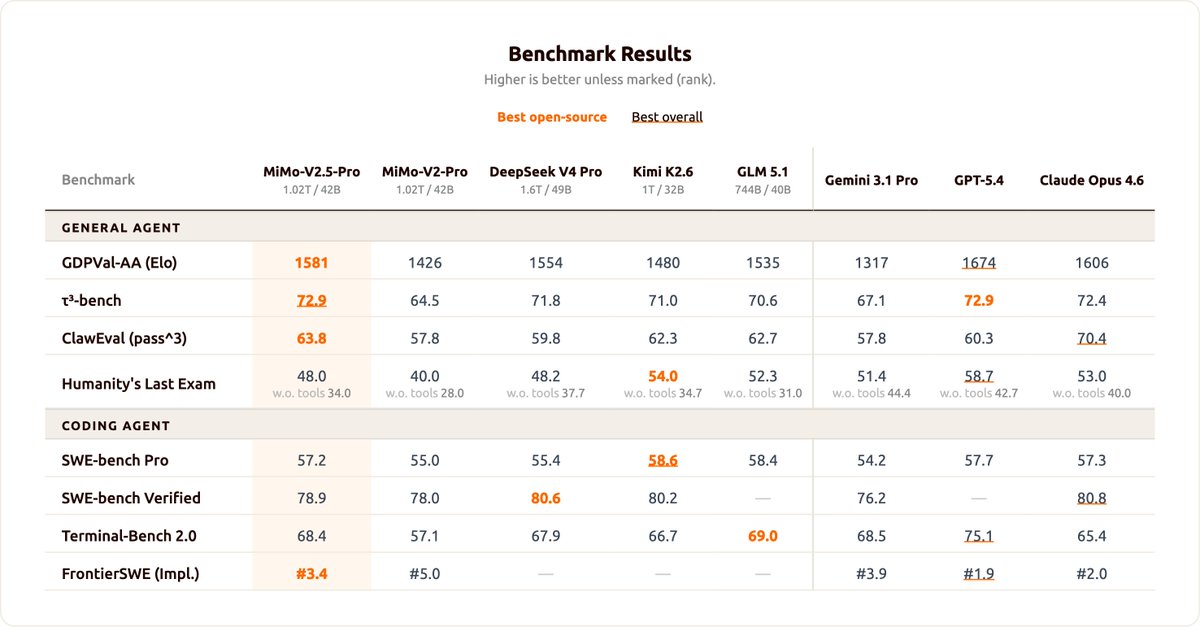

We're delighted to announce that MiniMax M2.7 is now officially open source, with SOTA performance on SWE-Pro (56.22%) and Terminal Bench 2 (57.0%). You can find it on Hugging Face now. Enjoy! 🤗 Hugging Face: huggingface.co/MiniMaxAI/Mini… Blog: minimax.io/news/minimax-m… MiniMax API: platform.minimax.io

Claude Mythos is Delusional

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing