The AI market looks nothing like the narrative. We have the production data to prove it. The State of AI: what 500K developers are actually running in 2026. Read the full report now 👇

vLLM

@vllm_project

A high-throughput and memory-efficient inference and serving engine for LLMs. Join https://t.co/lxJ0SfX5pJ to discuss together with the community!

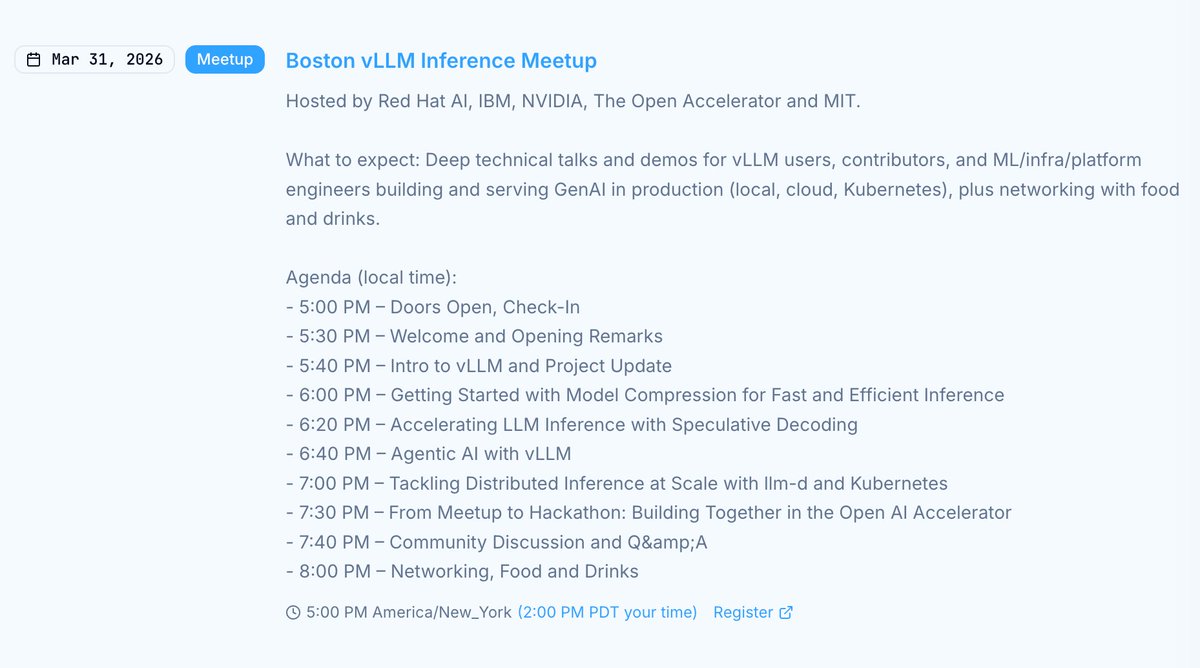

vLLM meetup is coming to Boston on March 31! Workshop + evening sessions covering:
- @vllm_project update
- Model compression and speculative decoding
- Agentic AI with vLLM
- Distributed inference at scale with @_llm_d_ and Kubernetes

Pre-event workshop at 3:30 PM: Deploy Llama 3.1 8B and benchmark llm-d's cache-aware routing live.

Shoutout to our sponsors: @RedHat, @IBM, @NVIDIAAI, The Open Accelerator, and @MITIBMLab! Register here 👇 luma.com/4rmkrrb7

We’re excited to introduce TorchSpec, a torch-native framework for scalable speculative decoding training developed by the TorchSpec and Mooncake teams. By streaming hidden states from inference engines to training workers via Mooncake, TorchSpec enables fully disaggregated pipelines where inference and training scale independently. 🔗 Read our latest blog from TorchSpec & Mooncake teams: pytorch.org/blog/torchspec… @lightseekorg @KT_Project_AI #PyTorch #TorchSpec #Mooncake #OpenSourceAI

🚀 Introducing Qianfan-OCR: a 4B-parameter end-to-end model for document intelligence. One model. No pipeline. Table extraction, formula recognition, chart understanding, and key information extraction, all in a single pass. Paper: arxiv.org/abs/2603.13398 Models: huggingface.co/collections/ba… 🧵 Key results ↓

Join the GPU MODE Hackathon, sponsored by AMD, and push the boundaries of LLM inference performance on leading open models, optimized for AMD Instinct MI355X GPUs. Finalists will compete for the $1.1M total cash prize pool across two independent tracks, each focused on a specific model and inference stack. Learn more and get registered here: luma.com/cqq4mojz

MiMo-V2-Pro & Omni & TTS is out. Our first full-stack model family built truly for the Agent era.

I call this a quiet ambush, not because we planned it, but because the shift from Chat to Agent paradigm happened so fast, even we barely believed it. Somewhere in between was a process that was thrilling, painful, and fascinating all at once.

The 1T base model started training months ago. The original goal was long-context reasoning efficiency. Hybrid Attention carries real innovation, without overreaching, and it turns out to be exactly the right foundation for the Agent era. 1M context window. MTP inference for ultra-low latency and cost. These architectural decisions weren't trendy. They were a structural advantage we built before we needed it.

What changed everything was experiencing a complex agentic scaffold (what I'd call orchestrated Context) for the first time. I was shocked on day one. I tried to convince the team to use it. That didn't work. So I gave a hard mandate: anyone on MiMo Team with fewer than 100 conversations tomorrow can quit. It worked. Once the team's imagination was ignited by what agentic systems could do, that imagination converted directly into research velocity.

People ask why we move so fast. I saw it firsthand building DeepSeek R1. My honest summary:
- Backbone and Infra research has long cycles. You need strategic conviction a year before it pays off.
- Posttrain agility is a different muscle: product intuition driving evaluation, iteration cycles compressed, paradigm shifts caught early.
- And the constant: curiosity, sharp technical instinct, decisive execution, full commitment, and something that's easy to underestimate: a genuine love for the world you're building for.

We will open-source when the models are stable enough to deserve it.

From Beijing, very late, not quite awake.

✨vLLM v0.17.0 is out with support for #MiniCPM-o 4.5! 🚀 Now you can serve the latest 9B #omnimodal model with vLLM’s high-throughput serving engine. For developers, this means scaling real-time, full-duplex conversations (vision, speech, and text) is now production-ready. @vllm_project 👏Check the release: github.com/vllm-project/v… #LLM #vLLM #MiniCPM #OpenSource

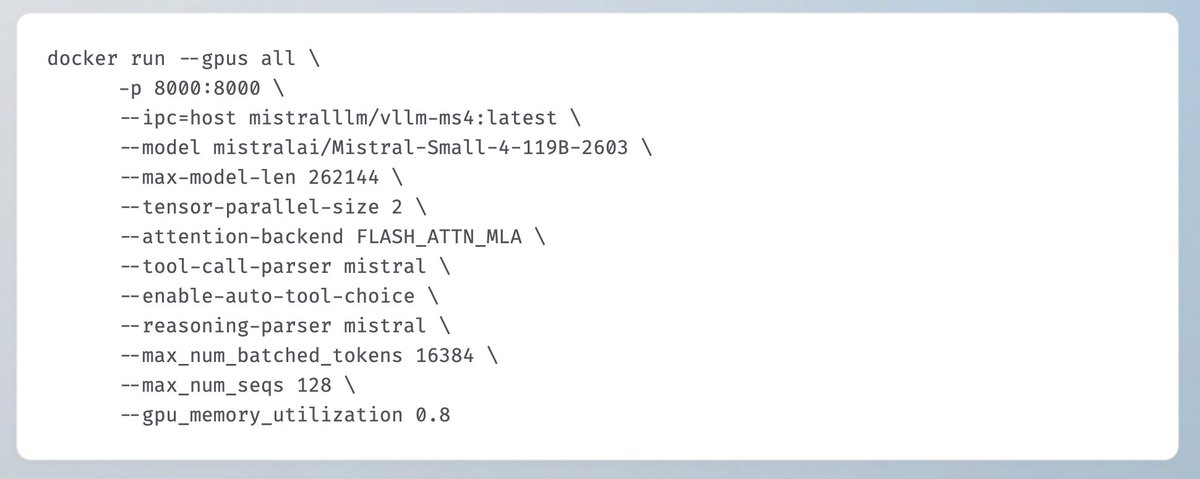

🔥 Meet Mistral Small 4: One model to do it all.
⚡ 128 experts, 119B total parameters, 256k context window
⚡ Configurable Reasoning
⚡ Apache 2.0
⚡ 40% faster, 3x more throughput
Our first model to unify the capabilities of our flagship models into a single, versatile model.

Reasoning models are growing fast, and running them efficiently requires distributing workloads across multiple GPU nodes. NVIDIA Dynamo 1.0 delivers low-latency, high-throughput distributed inference for production AI deployments, while boosting NVIDIA Blackwell inference performance by up to 7x, lowering token cost, and expanding opportunities with free #OSS. Built for production.

Key features:
🔹 Disaggregated serving
🔹 Agentic-aware routing
🔹 Multimodal inference
🔹 Topology-aware Kubernetes scaling
🔹 SLA-ready quick deployments

Available now with native support for SGLang (@lmsysorg), TensorRT-LLM, and @vllm_project 👉 nvda.ws/47oyMMf

This tutorial walks you through deploying the vLLM Production Stack on OKE—from infrastructure provisioning to running your first inference request. social.ora.cl/6012hNgEp

Introducing NVIDIA Nemotron 3 Super 🎉
- Open 120B-parameter (12B active) hybrid Mamba-Transformer MoE model
- Native 1M-token context
- Built for compute-efficient, high-accuracy multi-agent applications

Plus, fully open weights, datasets and recipes for easy customization and deployment. 🧵

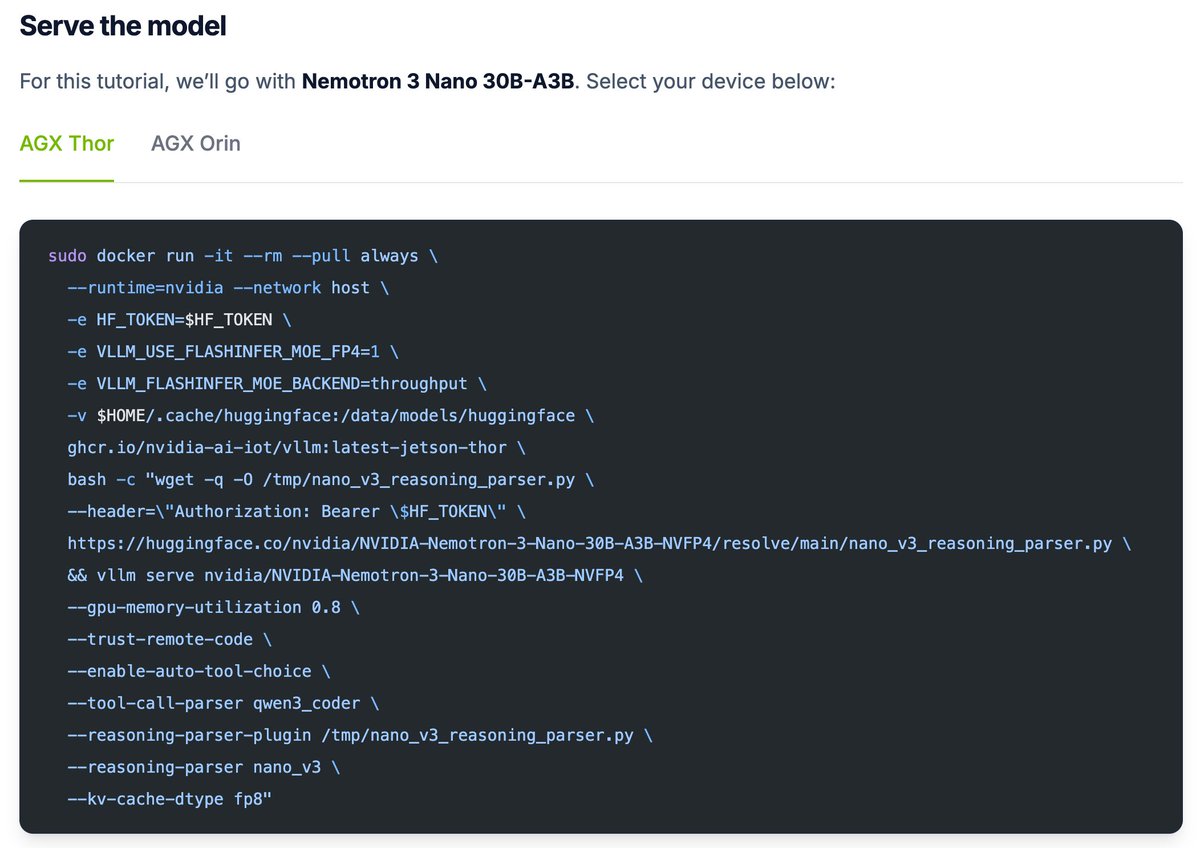

🦞 Want an always‑on personal assistant on your NVIDIA Jetson? Follow our step‑by‑step OpenClaw tutorial to run it fully local on your Jetson with zero cloud APIs. 👉 jetson-ai-lab.com/tutorials/open…