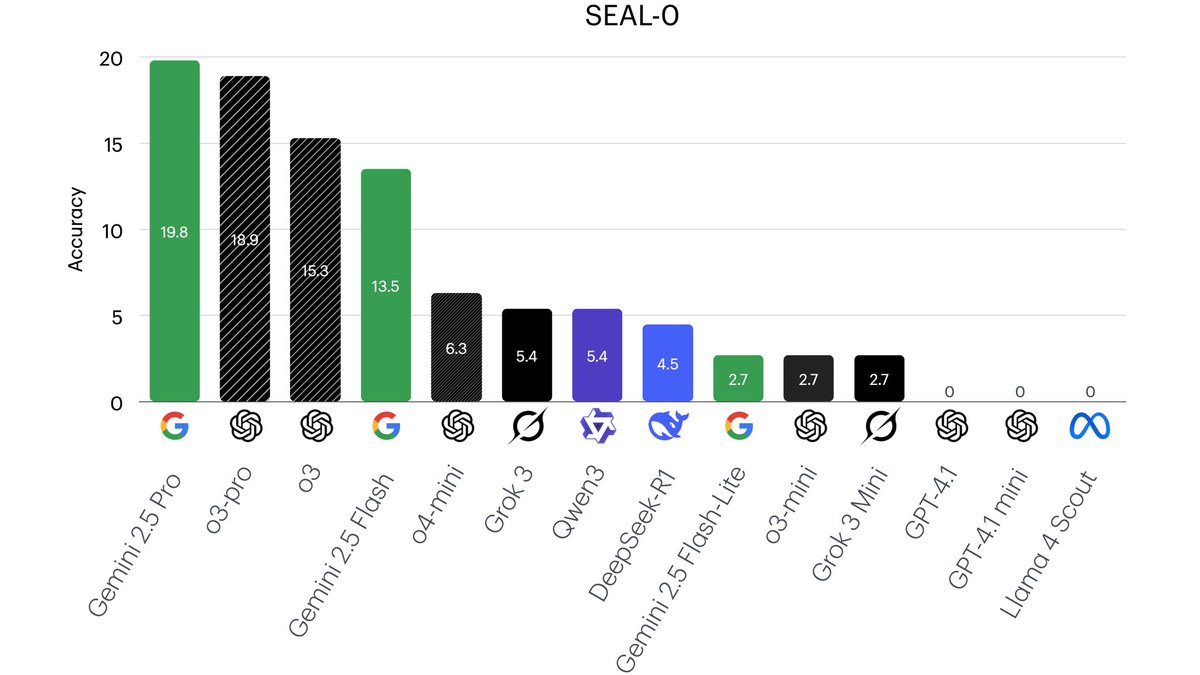

Introducing Chroma Context-1, a 20B parameter search agent.

- pushes the Pareto frontier of agentic search
- order of magnitude faster
- order of magnitude cheaper
- Apache 2.0, open-source

Thinh

26 posts

@thinhphp_vt

PhD student @VT_CS, supervised by @tuvllms. Interested in search-augmented LLMs. Ex AI resident @VinAI_Research

Tools & Agents Upgrades 🧰

- 📈 Better results on SWE / Terminal-Bench
- 🔍 Stronger multi-step reasoning for complex search tasks
- ⚡️ Big gains in thinking efficiency

3/5

🚨 New paper 🚨 Excited to share my first paper w/ my PhD students!!

We find that advanced LLM capabilities conferred by instruction or alignment tuning (e.g., SFT, RLHF, DPO, GRPO) can be encoded into model diff vectors (à la task vectors) and transferred across model versions.

💡 You don't necessarily need to fine-tune from scratch again for every new base model version. Instead, fine-tune once and add the diff vector to updated versions! ♻️♻️♻️ This can also offer a stronger and more computationally efficient starting point when further training is feasible.

📰: tinyurl.com/finetuning-tra… More 👇
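The "fine-tune once, add the diff vector" idea can be illustrated with a toy sketch. This is not the paper's code: the helper names `diff_vector` and `apply_diff`, the dict-of-lists weight format, and the numbers are all hypothetical, and it assumes both model versions share the same parameter names and shapes.

```python
from typing import Dict, List

# Toy stand-in for a model checkpoint: parameter name -> flat list of weights.
# (Assumption for illustration; real checkpoints hold tensors.)
Weights = Dict[str, List[float]]


def diff_vector(finetuned: Weights, base: Weights) -> Weights:
    """Element-wise difference: fine-tuned weights minus base weights."""
    return {
        name: [f - b for f, b in zip(finetuned[name], base[name])]
        for name in base
    }


def apply_diff(new_base: Weights, diff: Weights) -> Weights:
    """Add a previously computed diff vector to a newer base model."""
    return {
        name: [w + d for w, d in zip(new_base[name], diff[name])]
        for name in new_base
    }


# Hypothetical weights for an old base, its fine-tuned version, and a new base.
base_v1 = {"layer.weight": [1.0, 2.0, 3.0]}
tuned_v1 = {"layer.weight": [1.5, 2.0, 2.0]}
base_v2 = {"layer.weight": [2.0, 2.0, 4.0]}

delta = diff_vector(tuned_v1, base_v1)   # what fine-tuning changed in v1
tuned_v2 = apply_diff(base_v2, delta)    # reuse that change on v2
print(tuned_v2)
```

As the post notes, the result can also serve as a starting point for further training rather than a final model.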

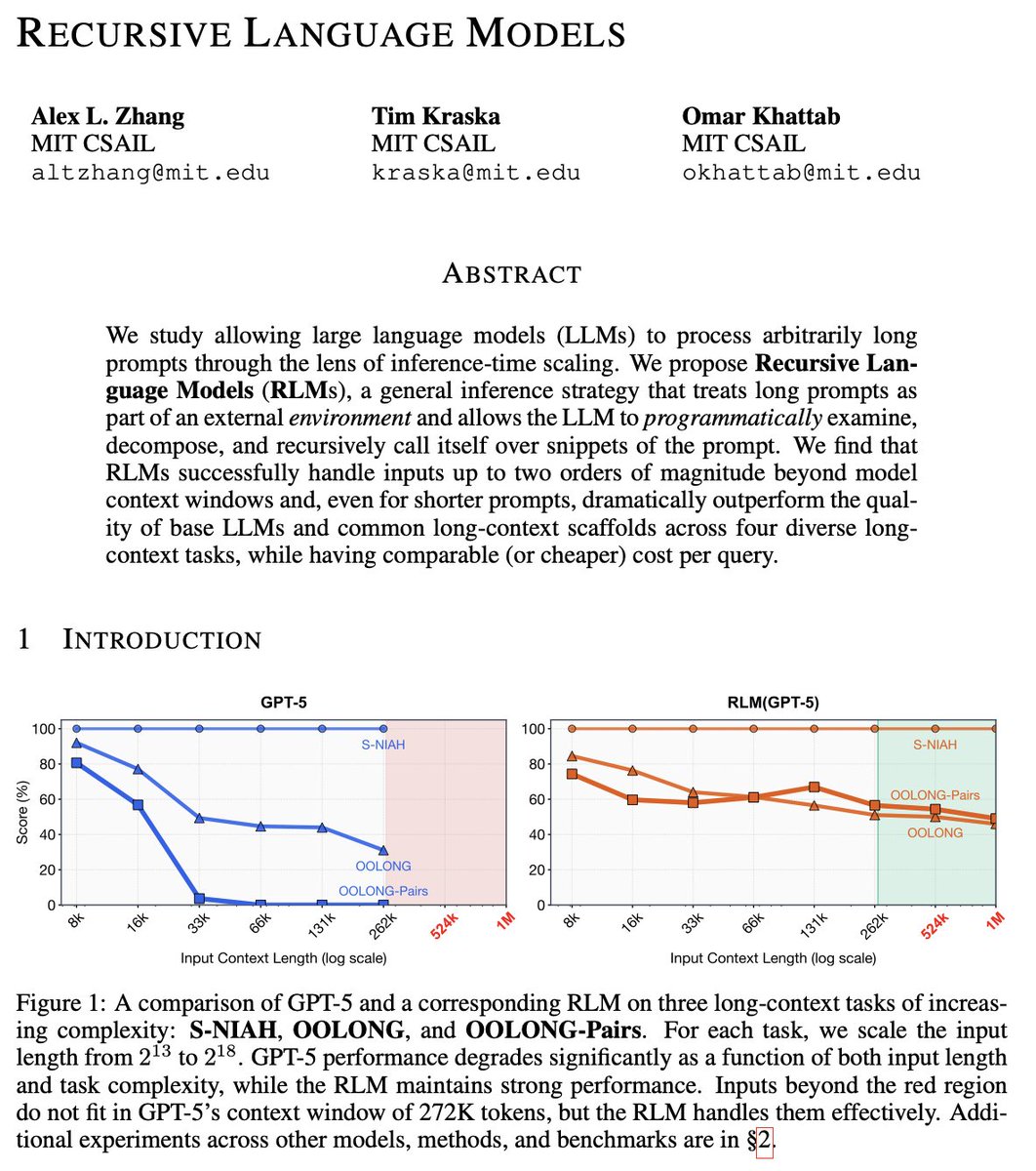

Overall Results: