Linus Pin-Jie Lin

@linusdd44804

PhD @VT_CS, Master @LstSaar. Interested in efficient model development & modular LMs

Introducing EvoSkill: a framework that analyzes agent failures and automatically builds the missing skills, leading to rapid improvement on difficult benchmarks and generalizable skills across use-cases.
+12.1% on SealQA
+7.3% on OfficeQA (SOTA)
+5.3% on BrowseComp via zero-shot transfer from SealQA
Read more below 🧵

Excited to share that our paper on efficient model development has been accepted to #EMNLP2025 Main conference @emnlpmeeting. Congratulations to my students @linusdd44804 and @Sub_RBala on their first PhD paper! 🎉

Tools & Agents Upgrades 🧰
📈 Better results on SWE / Terminal-Bench
🔍 Stronger multi-step reasoning for complex search tasks
⚡️ Big gains in thinking efficiency
3/5

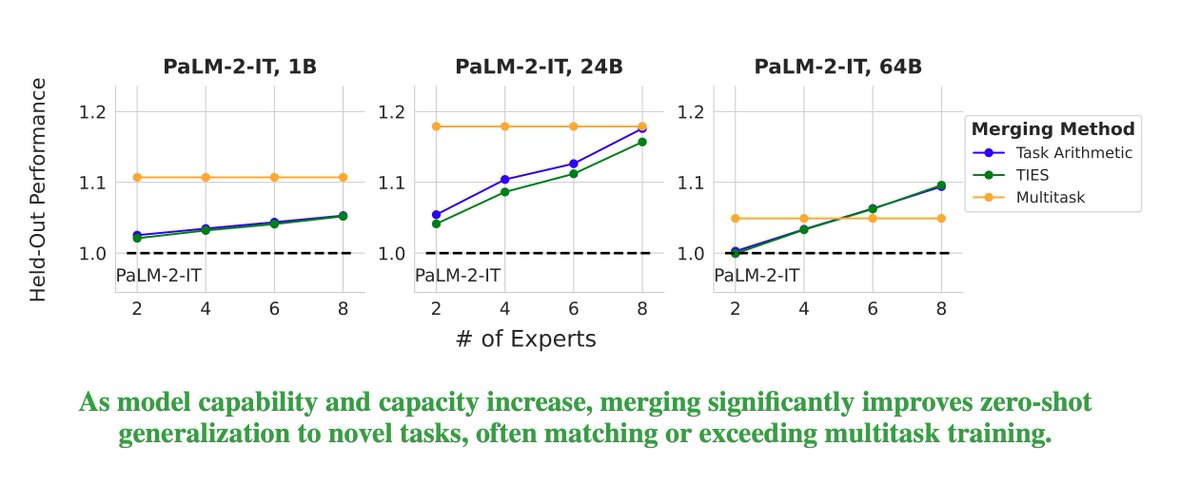

Ever wondered if model merging works at scale? Maybe the benefits wear off for bigger models? Maybe you considered using model merging for post-training of your large model but not sure if it generalizes well? cc: @GoogleAI @GoogleDeepMind @uncnlp 🧵👇 Excited to announce my internship work on large-scale model merging! We explore what happens when you combine larger and larger language models (up to 64B parameters!) and how different factors –model size, base model quality, merging methods, and # of experts– impact held-in performance and generalization. 📰: arxiv.org/abs/2410.03617

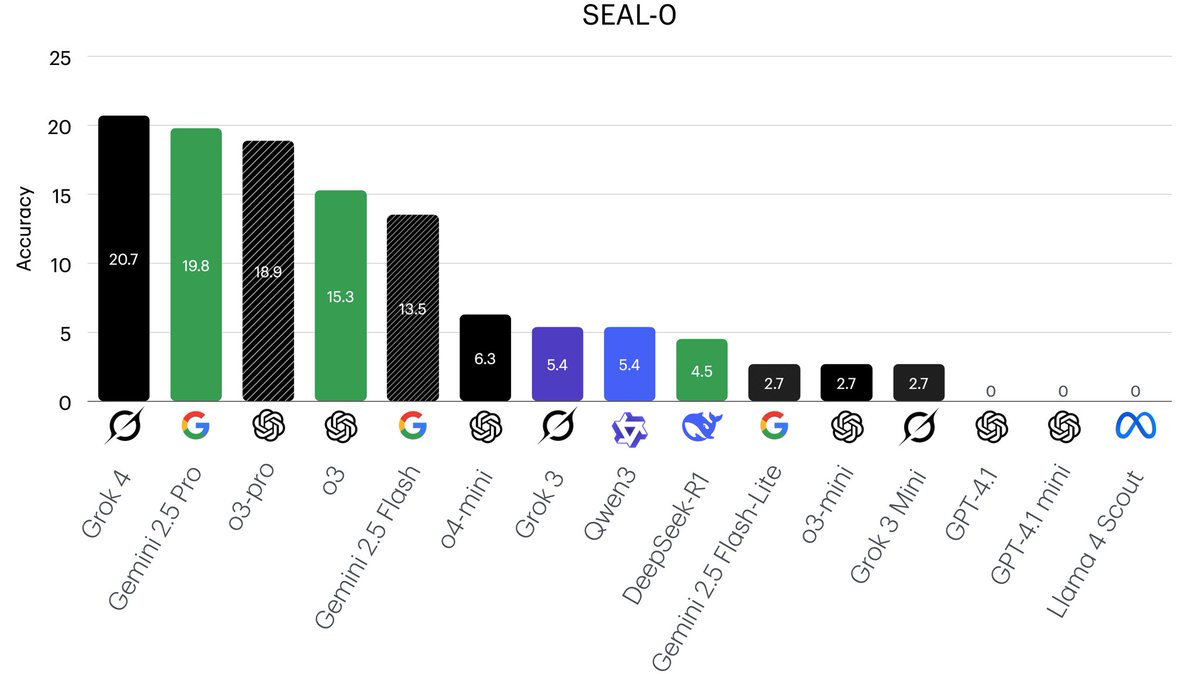

✨ New paper ✨
🚨 Scaling test-time compute can lead to inverse or flattened scaling!!
We introduce SealQA, a new challenge benchmark w/ questions that trigger conflicting, ambiguous, or unhelpful web search results.
Key takeaways:
➡️ Frontier LLMs struggle on Seal-0 (SealQA’s core set): most chat models (incl. GPT-4.1 w/ browsing) achieve near-zero accuracy
➡️ Advanced reasoning models (e.g., DeepSeek-R1) can be highly vulnerable to noisy search results
➡️ More test-time compute does not yield reliable gains: o-series models often plateau or decline early
➡️ "Lost-in-the-middle" is less of an issue, but models still fail to reliably identify relevant docs amid distractors
📜: arxiv.org/abs/2506.01062
🤗: huggingface.co/datasets/vtllm…
🧵:👇

Models like DeepSeek-R1 🐋 mark a fundamental shift in how LLMs approach complex problems. In our preprint on R1 Thoughtology, we study R1’s reasoning chains across a variety of tasks, investigating its capabilities, limitations, and behaviour. 🔗: mcgill-nlp.github.io/thoughtology/