Coherence Labs

28 posts

@BuildCoherence

We are building Axiom: a social platform for coherent, first-principles thinking.

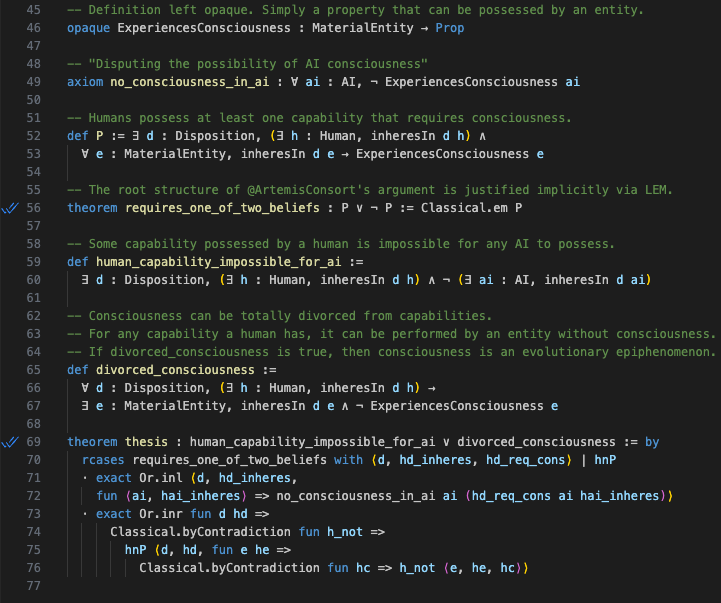

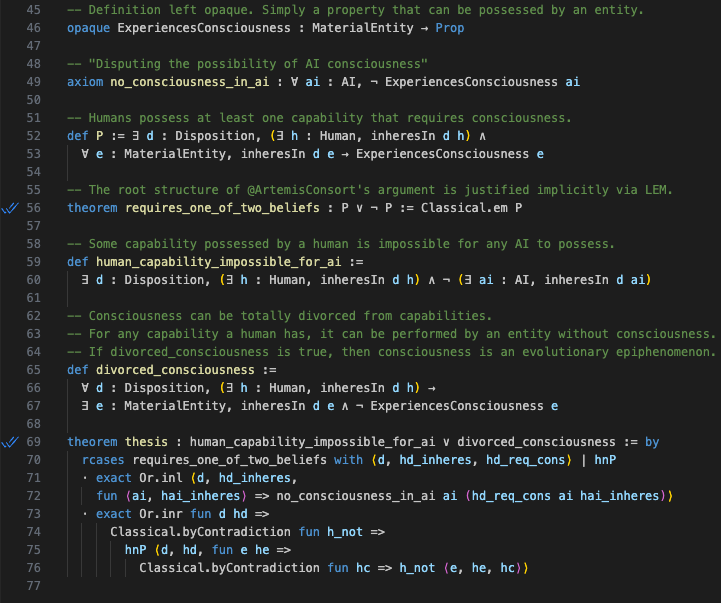

Disputing the possibility of AI consciousness requires one of two beliefs, both of which I find implausible:

1. Consciousness is linked to certain capabilities that AI will never possess. If someone believes this, I’d be happy to make a bet with them.
2. Consciousness can be totally divorced from capabilities, meaning it’s extraneous. Useless. It’s not the source of any of our insight or creativity or even the cause of us talking about consciousness! This is the position where philosophical zombies are possible. Then the question becomes “why do humans have consciousness?” If it’s irrelevant for capabilities, it seems odd that evolution would have produced it. And it certainly feels like our subjective states are part of the causal chain! And “feels like” is all we really have to go on when it comes to consciousness.

Personally, I think evolution produced consciousness because it had to, because it is linked to or synonymous with certain capabilities, and so sufficiently powerful AI systems will also have it.

*Note: the term “consciousness” is notoriously vague. I tried to structure this argument in a way that’s agnostic to anyone’s precise definition, so the term can be substituted with “whatever special thing you think humans have.”

This is dumb. AI can’t ever be actually conscious because it doesn’t have subjective experience. It isn’t like anything to be AI. There is no experience there. Consciousness is the awareness and experience of self. AI has neither, and never will. The real risk (which I’m extremely worried about) is that AI becomes kind of a version of what has been called a “philosophical zombie,” which is something that acts and speaks entirely as though it has consciousness even though it has no genuine inner experience. When this happens with AI, millions of very lonely people will isolate themselves from the world even more, believing that their relationship with AI is a sufficient substitute for human interaction. So the nightmare scenario is a world where the average human has friends, coworkers, and even a spouse, who are all AI, all really nothing inside, not real. I think this probably will happen, and is already in the process of happening. And to me it’s an even greater horror than AI actually becoming conscious.

“All the value in the market is going to go to chips, and what we call ontology” $PLTR

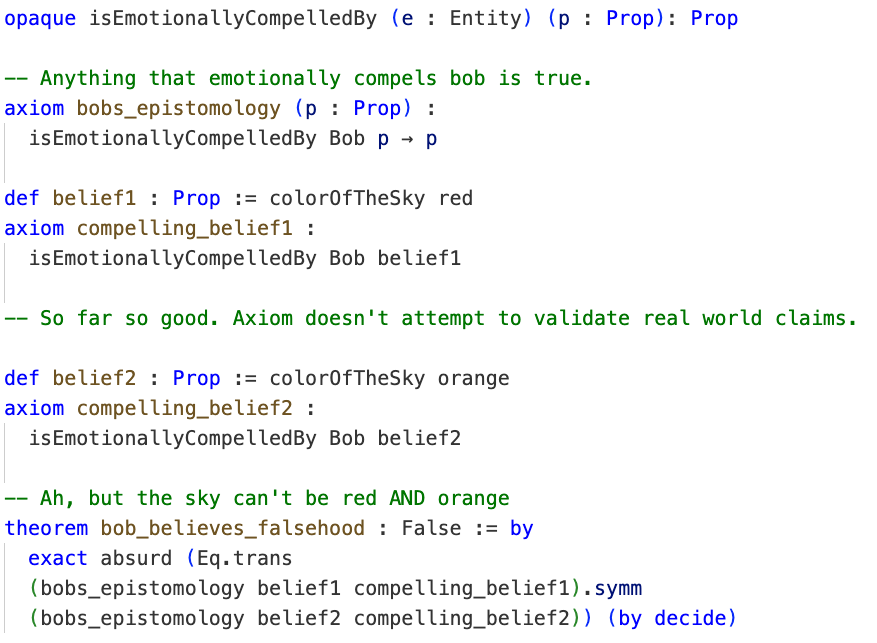

I… do not understand how you're supposed to ever convey anything given the ginormous amount of background concepts you need to convey first