Method retweeted

Method

454 posts

As Chief Shill Officer (CSO) of @codecopenflow it's my duty to search the ticker every 12hrs and like every post about $codec

@LomahCrypto He would like to sell you some plasma tokens for $1.50 each

Loma @LomahCrypto

I'll buy as much XPL as you have, right now, at $1.50. Sell me all you want. Then go fuck off.

Method retweeted

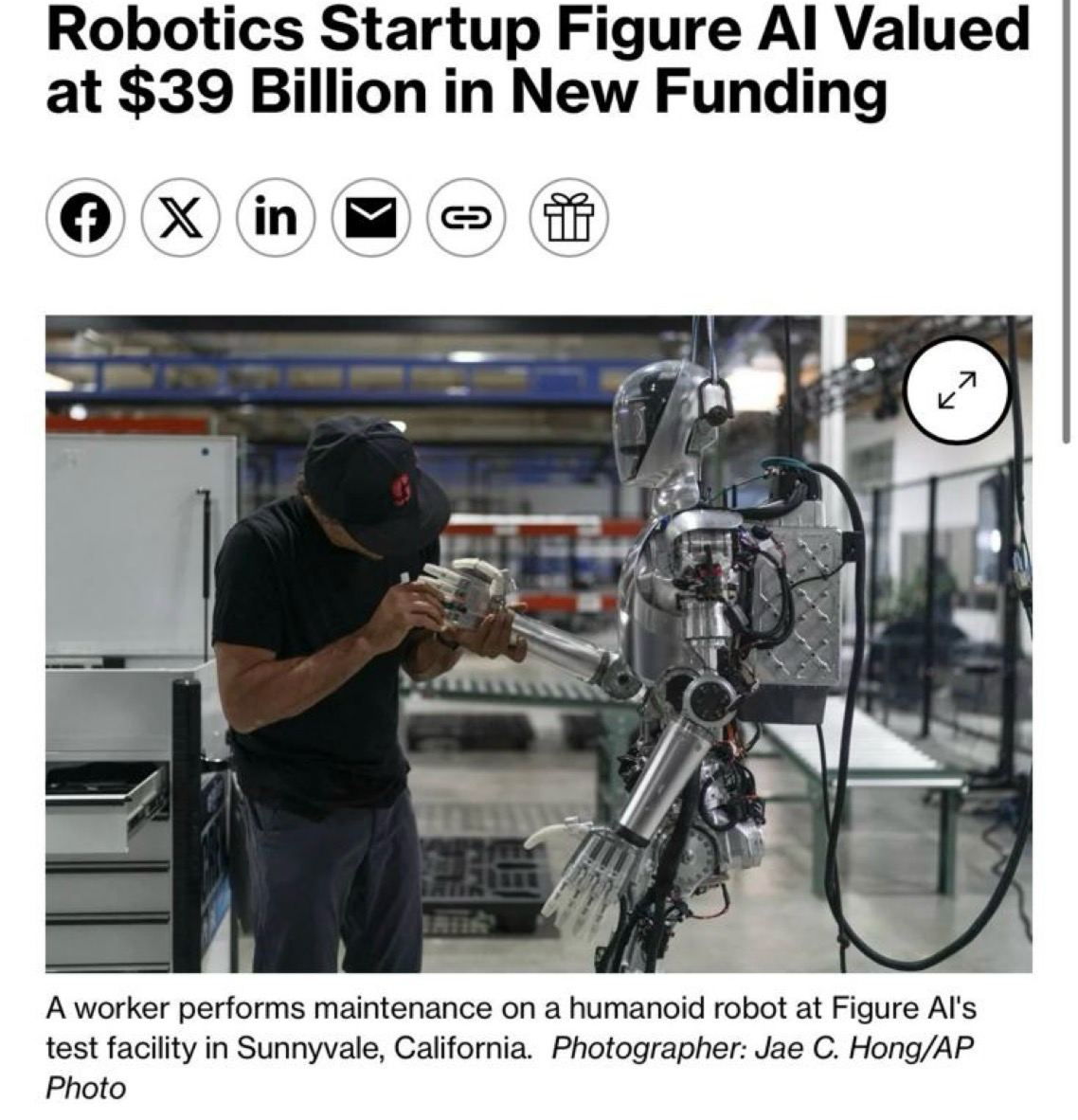

Every day we’re seeing billion dollar headlines for robotics.

Only 12 months ago, these companies and figures were 10-20x lower.

If it’s not already painstakingly obvious how successful this sector is going to become, dedicate a weekend to research so you aren’t left behind.

History shows the biggest value in tech waves often accrues to the enabling layers: Microsoft in PCs, Apple in smartphones, AWS in cloud. Robotics won’t be different. The infra layer that developers build on will capture more than any single hardware play.

One common theme I hear from friends is they’re worried about being underexposed to traditional robotics.

Yes, there are going to be insane headlines about Figure AI doing a 200x from seed valuations or the equivalent. But if you’re below mid 7 figs give or take, Web2 is not where you want to be (unless you have insane info/connections).

Time and time again, crypto has offered the most asymmetric and more importantly, accelerated upside. There’s significantly more risk, but it also comes with the luxury of not being time rugged which sometimes is worse in itself.

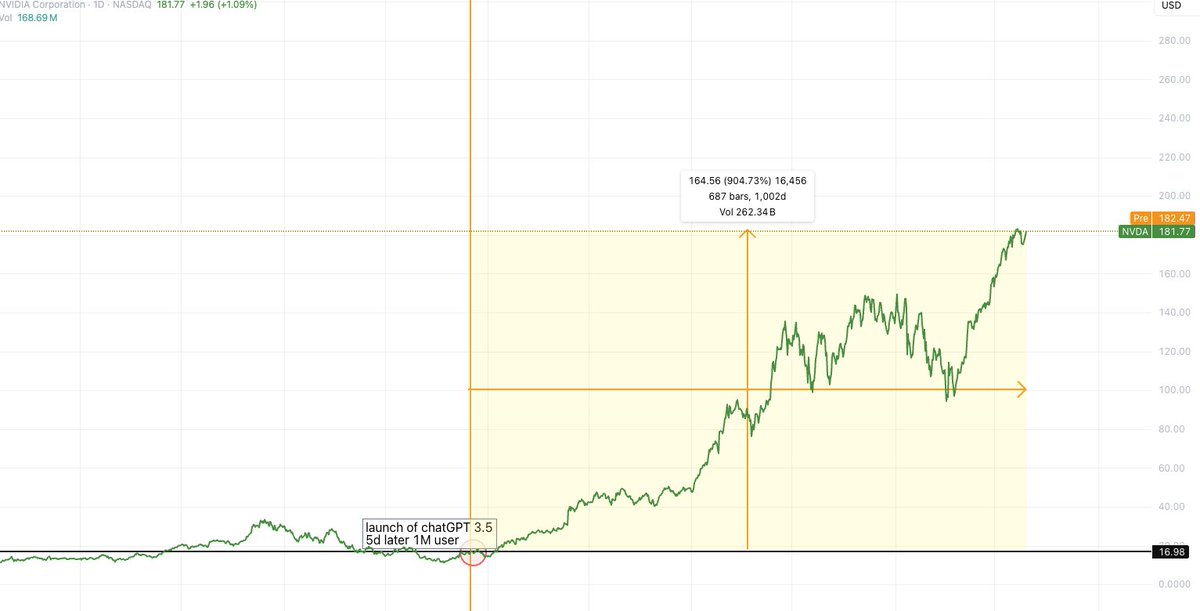

Nvidia is the largest stock in the world because of AI’s acceleration. Looking back, would you have preferred to punt on ai16z, Virtuals, AIXBT, GOAT in their first leg, or hunt for undervalued “fundamental AI plays” on Robinhood?

You might have kept more money in the long run, however we’re here for the sleep depriving uPNL aren’t we?

Like the early innings of AI, we’ve been given a very special moment where the pinnacle of technological innovation is staring us dead in the face. How you navigate its progression is a reflection of your understanding of market fundamentals and human greed.

Just like AI, we’re going to be met with endless vaporware as we move closer to general purpose robots. For those willing to roll the dice and catch the leaders, you’ll make outsized multiples that make Andrew Kang’s seed investments look small.

I know which game I’m playing.

Method retweeted

I get asked a lot what robotics plays I’m in besides $CODEC.

Answer: none so far.

If I believe robotics is the next AI style meta with the potential to run into the billions, and if I see Codec as the ai16z/Virtuals of the ecosystem, why would I allocate capital and more importantly, conviction, to my second best idea?

My trading style is closer to Jez’s, where I full port my highest conviction plays. That means I’ve had some horrendous round trips, but I’m not here for average returns.

Full porting forces accountability: you can’t hide behind a basket of half baked bets. That mental clarity is part of your edge.

If you show up to this industry every day, your objective is to take outsized risks and swings which can land you generational wealth if correct.

The problem is most traders’ “best ideas” aren’t actually great. When you full port mediocrity, you blow up.

Diversification only makes sense once you’ve hit liquidity constraints in your main project. Even then, unless you own 3-4%+ of the supply, I don’t think it’s a real issue (you can always OTC anyway).

Over the past year, onchain has proven that rotations are getting faster and faster. Even if robotics becomes the grand narrative I’ve been calling for months, only the top projects will attract the mindshare and liquidity required to deliver life changing outcomes.

Leaders have always been the kingmaker trades and chasing betas hasn’t worked for 9 months now. There just isn’t enough active liquidity.

History shows the majority of generational returns accrue to the top 1-3 projects in a sector. Robotics won’t be different.

The fastest horse is the fastest horse.

Method retweeted


Humanoids are starting to flood mindshare and run around the streets.

Every time I open TikTok there’s a “rizzbot” or another viral robot being swarmed by crowds of people.

Every single one of them with their phones out, utterly mesmerized.

What’s more captivating than a digital search window that can tell you anything? A physical one.

Just as ChatGPT changed how society views digital interactions, robots will change how we perceive physical interactions.

The biggest winners in technology are the ones who solve problems you didn’t realize you had.

In 2007, we didn’t realize touchscreen iPhones were a necessity. Why would you need a personal computer in your pocket when a phone's only use is to call people?

Why would you need your own personal humanoid to clean the house, do the shopping, pick the kids up from school, make dinner, all while you live out a higher quality life and spend more time doing the things you enjoy in the big 2025?

Come to think of it, this makes a lot more sense than what an iPhone did in 2007.

So much so that you have the richest man in the world in an arms race, building the most effective humanoid on the market, which he believes will become bigger than his company Tesla.

If we’re to go off history, Elon is the most followed and most engaged human in the world. If he believes his humanoid company (Optimus) will exceed the valuations of the 9th largest stock in the world, valued at $1.1 trillion, what does that mean for robotics as a whole?

We’ve seen how vocal he’s been on autonomous vehicles. So if we have physical assistants that remove multiple hours of labor from our day and give us irreplaceable time back in our lives, what kind of god tier yap fest will he go on?

The smartest businessmen across the world are invested in this vertical for good reason. At $50k USD, a humanoid becomes cheaper than the average Indian wage while working 20+ hours a day, 7 days a week.
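To put rough numbers on the $50k figure, here is a minimal sketch of the amortized hourly cost. Only the $50k price and the 20 hours a day, 7 days a week schedule come from the post. The service life is an assumption, and energy, maintenance, and downtime are ignored, so treat the result as a floor rather than a real operating cost. It does not verify the wage comparison itself.

```python
def hourly_cost(price_usd: float, hours_per_day: float, days_per_week: int,
                service_years: float) -> float:
    """Purchase price spread evenly over every hour worked during service life."""
    # 52 weeks per year; service_years is an assumed lifetime, not a source figure.
    hours_worked = hours_per_day * days_per_week * 52 * service_years
    return price_usd / hours_worked


# 20 h/day * 7 days * 52 weeks = 7,280 working hours per year.
one_year = hourly_cost(50_000, 20, 7, service_years=1)
five_years = hourly_cost(50_000, 20, 7, service_years=5)

print(f"1 year of service:  ${one_year:.2f}/hour")
print(f"5 years of service: ${five_years:.2f}/hour")
```

Under these assumptions the hardware works out to roughly $6.87 per hour if written off in one year and about $1.37 per hour over five.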

Labor accounts for half the global GDP at $42 trillion per year. We’ve seen the impact ChatGPT and AI have had on the online workforce, so where does that leave us when the labor market is next in line?

Nvidia is leading the charge with their foundation model Isaac GR00T. We’ve already seen the benefits of them going open source: third party companies are building on top of their model with slightly fine tuned data, facilitating real world use cases.

Foundation models are the bedrock of robotics, the iOS’s and Androids of the industry. Whoever builds the easiest model to contribute to will cater to individual devs, and more importantly spark culture.

Breakfast in Silicon Valley will be a discussion of who installed the latest “golf instructor” package into their humanoid from user: swing_metal_dingz. They’re not purely for performative means but a status symbol in high societies.

Crypto is the perfect incentive model as task training marketplaces become one of the highest sought after forms of commerce in the modern era. Just as we’ve seen the rise of TikTokers and content creators, we’ll see the introduction of task trainers who earn revenue through subscription models from widely used skillsets to automate our daily lives.

As it stands, there’s only one genuine play available tackling these verticals. $CODEC is the first full stack robotics company to facilitate all the above. The first robotics company with a live working product, a highly specialized SDK now publicly available, and also live to test through a Twitter interface.

This is the first demonstration of what’s coming to their ecosystem. You might not realize it now, but world sims are going to become even more important than the data libraries powering LLMs. There’s no “internet of robotics” or physics engines to replicate human behavior and movements.

A storm is brewing for Q4 & Q1, where we’ll see many of the leading humanoid companies releasing their first models for public consumers. Attention, mindshare, and investments are going to grow exponentially when reality sets in after seeing our new companions.

The biggest winners will be those who can close the gap between physical components and effective task training, turning atoms into the most productive asset humanity has ever seen.

Betting on the fastest horse has only proven more successful as markets advance. This time is no different imo.

Robotics szn loading…

█████████▒ 90%

Method retweeted

SOL ecosystem projects should rip alongside $PUMP

$CODEC (robotics)

$OMFG (new defi primitive)

Time to load my @tryfomo account again

we’re at an interesting point with robotics:

it reminds me a lot of late ’22 / early ’23 right before the ChatGPT moment. back then the compute stack (GPUs, semis, cloud infra) wasn’t priced for an AI boom.

whether it was ppl missing the big picture or stocks just cyclically trading down with the broader tech market, it took a few months until the stocks re-rated sharply higher (e.g. NVIDIA).

imo we're at a similar setup with robotics today:

> tech is advancing fast and nearing a tipping point

> public stocks stuck at depressed valuations while private markets go wild

> robots already going viral (humanoids, fighting bots, etc.)

all we're missing is one big catalyst (on the consumer side?), sth like the “ChatGPT moment” for robots before the market wakes up.

Method retweeted

@Web3Quant @free_electron0 CODEC

ai and robotics architecture

x10 feels too low

@free_electron0 troll has one final test. if it holds well post some tier 1 listings..

agree. looking for 5m-50m with potential to 500 to 1b with conviction.

not many plays around.

drop 3 of ur high conviction 10x plays

wen sol goes to ATH.

will ignore anything that doesnt have 1 liner why?

W3Q @Web3Quant

not to steal eths thunder (bros have had a rough cycle early on) but sol ath to follow

Method retweeted

You’ll see foundation models for Humanoids continually using a System 2 + System 1 style architecture which is actually inspired by human cognition.

Most vision-language-action (VLA) models today are built as centralized multimodal systems that handle perception, language, and action within a single network.

Codec’s infrastructure is perfect for this as it treats each Operator as a sandboxed module. Meaning you can spin up multiple Operators in parallel, each running its own model or task, while keeping them encapsulated and coordinated through the same architecture.

Robots and Humanoids in general typically have multiple brains, where one Operator might handle vision processing, another handling balance, another doing high level planning etc, which can all be coordinated through Codec’s system.
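As a rough illustration of the "multiple brains" idea, here is a minimal sketch of isolated modules stepped in parallel by one coordinator. The `Operator` class, its `step` method, and `coordinate` are hypothetical names invented for this example. They are not Codec's SDK, whose actual interface is not shown in the post.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class Operator:
    """Hypothetical stand-in for a sandboxed module; not Codec's real API."""
    name: str
    state: dict = field(default_factory=dict)  # private to this operator

    async def step(self, observation: dict) -> dict:
        # An operator only sees the observation it is handed and returns a
        # message; it never reads or writes another operator's state.
        await asyncio.sleep(0)  # yield control, standing in for real async work
        self.state["last_seen"] = observation["tick"]
        return {"from": self.name, "tick": observation["tick"]}


async def coordinate(operators: list[Operator], ticks: int) -> list[list[dict]]:
    """Run every operator concurrently on each tick and collect their messages."""
    log = []
    for tick in range(ticks):
        messages = await asyncio.gather(
            *(op.step({"tick": tick}) for op in operators)
        )
        log.append(list(messages))
    return log


operators = [Operator("vision"), Operator("balance"), Operator("planner")]
log = asyncio.run(coordinate(operators, ticks=2))
```

Each operator keeps its own state and only exchanges messages through the coordinator, which is the encapsulated-but-coordinated property the post describes.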

Nvidia’s foundation model Isaac GR00T N1 uses the two module System 2 + System 1 architecture. System 2 is a vision-language model (NVIDIA’s Eagle-2, a multimodal model) that observes the world through the robot’s cameras and listens to instructions, then makes a high level plan.

System 1 is a diffusion transformer policy that takes that plan and turns it into continuous motions in real time. You can think of System 2 as the deliberative brain and System 1 as the instinctual body controller. System 2 might output something like “move to the red cup, grasp it, then place it on the shelf,” and System 1 will generate the detailed joint trajectories for the legs and arms to execute each step smoothly.

System 1 was trained on tons of trajectory data (including human teleoperated demos and physics simulated data) to master fine motions, while System 2 was built on a transformer with internet pretraining (for semantic understanding).

This separation of reasoning vs. acting is very powerful for NVIDIA. It means GR00T can handle long horizon tasks that require planning (thanks to System 2) and also react instantly to perturbations (thanks to System 1).

If a robot is carrying a tray and someone nudges the tray, System 1 can correct the balance immediately rather than waiting for the slower System 2 to notice.
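The tray example can be sketched as a two-rate loop: a slow planner that runs every few ticks and a fast controller that runs every tick. This is a toy under stated assumptions, not GR00T's implementation. The keyword rule stands in for the vision-language model, a proportional correction stands in for the diffusion transformer policy, and all names and numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    target: float  # desired tray angle in degrees (toy quantity)


def system2_plan(instruction: str) -> Plan:
    """Slow, deliberative step: map an instruction to a high level goal."""
    # Toy keyword rule standing in for a vision-language model.
    return Plan(target=0.0 if "level" in instruction else 10.0)


def system1_act(plan: Plan, angle: float, gain: float = 0.5) -> float:
    """Fast, reactive step: proportional correction toward the current plan."""
    return angle + gain * (plan.target - angle)


def run(instruction: str, steps: int, replan_every: int, nudge_at: int) -> list[float]:
    angle, plan, history = 0.0, None, []
    for t in range(steps):
        if t % replan_every == 0:         # System 2 runs only occasionally
            plan = system2_plan(instruction)
        if t == nudge_at:                 # someone bumps the tray by 8 degrees
            angle += 8.0
        angle = system1_act(plan, angle)  # System 1 runs on every tick
        history.append(angle)
    return history


history = run("keep the tray level", steps=8, replan_every=5, nudge_at=2)
print(history)  # [0.0, 0.0, 4.0, 2.0, 1.0, 0.5, 0.25, 0.125]
```

The nudge lands at tick 2 and the correction starts on that same tick, three ticks before the planner runs again at tick 5.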

GR00T N1 was one of the first openly available robotics foundation models, and it quickly gained traction.

Out of the box, it demonstrated skill across many tasks in simulation: it could grasp and move objects with one hand or two, hand items between its hands, and perform multi step chores without any task specific programming. Because it wasn’t tied to a single embodiment, developers showed it working on different robots with minimal adjustments.

This is also true for Helix (Figure’s foundation model), which uses this type of architecture. Helix allows two robots or multiple skills to operate together, and Codec could enable a similar multi agent brain by running several Operators that share information.

This “isolated pod” design means each component can be specialized (just like System 1 vs System 2) and even developed by different teams, yet they can work together.

It’s a one of a kind approach in the sense that Codec is building the deep software stack to support this modular, distributed intelligence, whereas most others only focus on the AI model itself.

Codec also leverages large pre trained models. If you’re building a robot application on it, you might plug in an OpenVLA or a Pi Zero foundation model as part of your Operator. Codec provides the connectors, easy access to camera feeds or robot APIs, so you don’t have to write the low level code to get images from a robot’s camera or to send velocity commands to its motors. It’s all abstracted behind a high level SDK.

One of the reasons I’m so bullish on Codec is exactly what I outlined above. They’re not chasing narratives. The architecture is built to be the glue between foundation models, and it frictionlessly supports multi brain systems, which is critical for humanoid complexity.

Because we’re so early in this trend, it’s worth studying the designs of industry leaders and understanding why they work. Robotics is hard to grasp given the layers across hardware and software, but once you learn to break each section down piece by piece, it becomes far easier to digest.

It might feel like a waste of time now, but this is the same method that gave me a head start during AI szn and why I was early on so many projects. Become disciplined and learn which components can co exist and which components don’t scale.

It’ll pay dividends over the coming months.

Deca Trillions ( $CODEC ) coded.

Method retweeted

Robotics works quite similarly to AI.

You need lots of high quality data to operate, except you can’t just scrape the internet for robotics data since it needs real world experience and variables.

There is no “Internet of robot actions.”

Tons of teams are working on and throwing stupid money into humanoids as they’re the most obvious deca trillion dollar industry due to how efficient they’ll make the labour force (more efficient than an Indian average wage at $50k USD each).

But the biggest race, like AI is:

1. Getting quality data

2. Training tasks

Foundation models are like LLMs in AI, but instead of generating text, they generate actions for robots.

There are a couple of different approaches teams are taking with task training: some, like Figure, use small high fidelity datasets with labelling, while others go for spray and pray with massive models.

The goal is to give robots a broad, pre trained common sense and the ability to generalize across tasks and environments.

Instead of programming a robot for each task, you train a giant model on diverse data (videos of humans, simulations, real robot demos, images with text descriptions of tasks etc), and the model learns an embodied understanding of the physical world.

You can then prompt the robot to do something (through a command or example), and the foundation model’s “knowledge” kicks in to handle it, like how you can ask ChatGPT anything.

So the big disconnect for a lot of these companies will be in the task training area. They’re currently deeply focused on the data side (world simulations, synthetic data, robot trajectories, human videos etc) as they need it to interact perfectly with the real world, but there isn’t as much development in what the robots/humanoids can actually do.

Nvidia is leading one of the key foundation models (Isaac GR00T), which they’ve fully open sourced. They’ve already had 3rd party teams building on top of this and significantly improving the efficiency (basically created a program for humanoids to clean up a room with minimal changes to the foundation model’s data).

So the big overlap with crypto x ai x robotics will most likely lie in this task training sector (like a robotics App Store) since the leading foundation models are already going open source and there will probably be large incentive models for indie developers to contribute and build cool programs/tasks for humanoids.

There’s a lot of progression and mainstream development coming end of year/early next year where I think robotics will have its “chatgpt” moment (Elon hard shilling his new humanoid models, viral videos of humanoids doing real world tasks, institutional money flowing in, workforces being laid off etc).

I can promise you I’m not wrong on this idea, feels identical to AI in 2023. Matter of when, not if.

Don’t ignore one of the most innovative technological progressions to happen in our lifetime and don’t ignore $CODEC which is the only available play sitting at the overlap of this trend.

Method retweeted

Another week, another research article: this time it's $CODEC

I genuinely believe this is one of the most underlooked robotics coins in crypto. Robotics is the future but there aren't a lot of bets in crypto for it.

This is one of the few, feel free to get $CODEC-pilled

epoch_ @epochbiz

$CODEC is setting up to be the best option as an onchain play for Robotics. Research article about @codecopenflow is now live on epoch.biz 🤖 epoch.biz/codecflow-cryp…

Method retweeted
