RealistSec

@RealistSec

NR, AI Generalist & Cyber Security Manager in the UK. Mostly Cyber Sec scripting with a penchant for AI tools. Writer of posts @CannotDisplay_ https://t.co/qfsO5iPo11

🚨BIG WARNING: ANTHROPIC JUST ACCIDENTALLY LEAKED THEIR MOST POWERFUL AI MODEL EVER. AND IT'S TERRIFYING.

Read this slow.

Anthropic messed up their content management system and left nearly 3,000 unpublished internal files in a publicly searchable database. Security researchers found it. Fortune reviewed it. Anthropic had to come out and confirm everything.

The model is called Claude Mythos. It sits in a brand new tier called Capybara. Above Opus. Above everything they've ever built.

Anthropic's lineup used to be Haiku, Sonnet, Opus. Now there's a fourth tier above all of them. Bigger. Smarter. Way more expensive to run.

According to the leaked drafts, Mythos destroys Claude Opus 4.6 in three areas.

Software engineering. It writes, debugs and understands massive codebases with way more autonomy and fewer errors. We're talking full system level code comprehension.

Academic reasoning. Multi-step thinking is dramatically better. The kind of complex problems where older models would confidently give you wrong answers? Mythos actually gets them right.

And then there's cybersecurity. This is the one that should make you sit up. Mythos reportedly outperforms every single AI model in existence at finding and exploiting software vulnerabilities. Not by a little. By a lot.

That's why Anthropic is NOT releasing this to the public. Their own internal documents say Mythos could spark a wave of AI-driven cyberattacks that "far outpace the efforts of defenders." Their words. Not mine.

So who gets access? A tiny group of vetted enterprise customers. Cybersecurity defense organizations go first. The idea is to give the good guys a head start to harden their systems before models this powerful get out into the wild.

Training is already complete. It exists right now. Anthropic just doesn't want anyone using it yet.

This is hilarious ngl

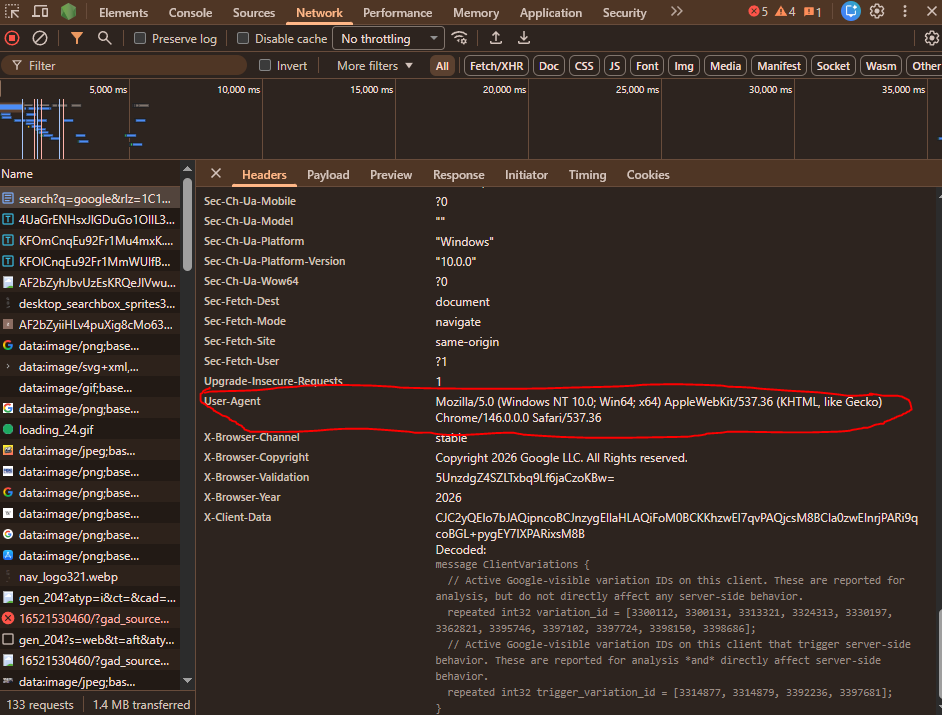

Seriously, I can tell on the PR what you used.

“OpenClaw is the iPhone of tokens” — Nvidia CEO on Lex Podcast