stefan

842 posts

I’ve spent over $17M on supplement brands.

All thanks to understanding and applying the same playbook.

So I've just put together a 22-page mini-guide breaking down how you can do the same.

(These are the strategies my team & I are currently using)

I break down:

✔ Creative strategy

✔ The ideal positioning

✔ LTV, CRO and format tips

Here's how to get it:

Like + comment "SUPP" and I'll send it over.

(Must be following)

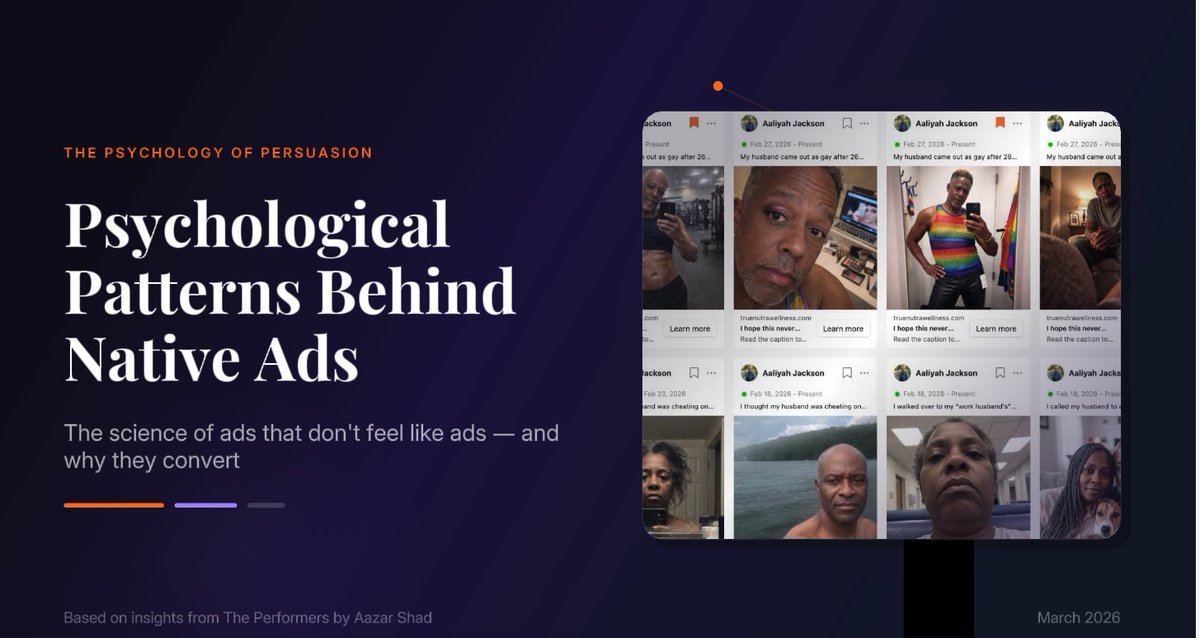

stefan retweeted

$70k+ came from an ad that looks like a clay cartoon… not a supplement brand

no doctors

no long explanations

no clinical visuals

just a stressed character on the toilet

“why miralax doesn’t work on ozempic”

in 2 seconds you get the problem

blocked flow

nothing moving

real frustration

that’s the hook

top brands are doing this now

show the problem visually

make it relatable

keep it simple

then introduce the supplement as the fix

unblock → restore flow → relief

people stop because it feels real

they convert because it’s obvious

most ads try to explain

these just show

rt + comment “flow” and i’ll send more like this

(follow for dm)

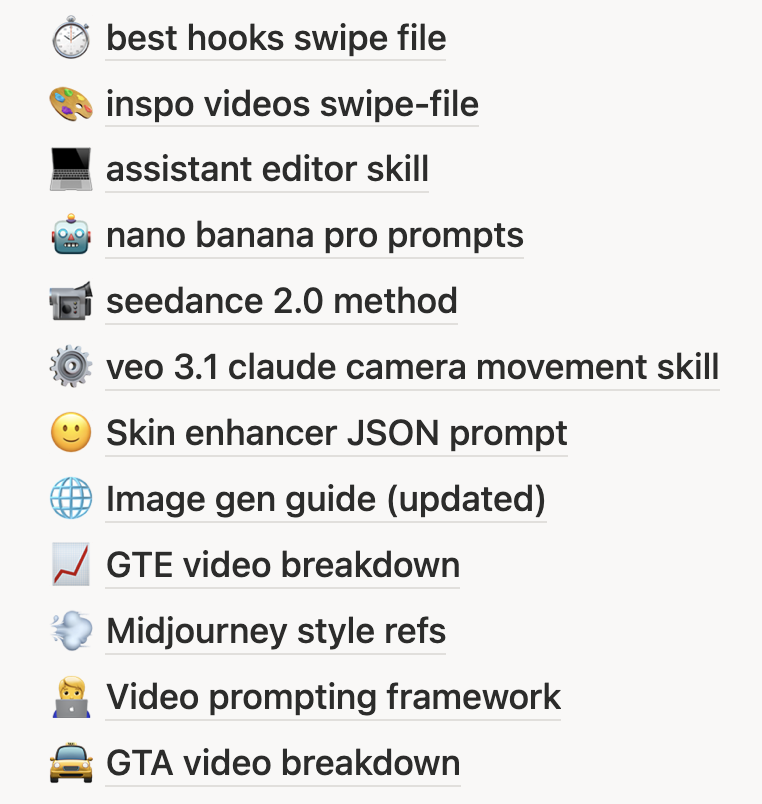

Claude Code + Nano Banana 2 is f*cking cracked 🤯

I built a skill inside Claude Code that writes JSON image prompts for Nano Banana 2, and the outputs look like they came from a professional photo shoot.

One plain-text prompt. Claude rewrites it as structured JSON with lighting, camera, composition, style, and negative prompts.

Then fires it off to Nano Banana 2.

All inside Claude Code.

Perfect for DTC brands and agencies who need high-volume ad creative without booking a shoot.

If you're using Nano Banana 2 for product shots and lifestyle images but every generation feels like pulling a slot machine lever — random lighting, inconsistent style, plastic skin, misspelled labels ...

This skill fixes the entire output:

→ You describe what you want in plain English

→ Claude rewrites it as a structured JSON prompt (lighting, camera angle, lens, depth of field, color grading — all of it)

→ Fires it to Nano Banana 2 via API

→ Saves the prompt + image in organized folders

→ You iterate on the style until it's dialed, then every output matches
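Here is a rough sketch of the "plain English in, structured JSON out, saved in folders" part of that flow. It is not the actual skill or its JSON schema: the field names, the `STUDIO_STYLE` preset, and both helper functions are assumptions made up for illustration, and the call to Nano Banana 2 is left out entirely.

```python
import json
from pathlib import Path

# Hypothetical reusable style preset; every key and value here is an assumption.
STUDIO_STYLE = {
    "lighting": "soft key light from the left, gentle fill",
    "camera": {"angle": "eye level", "lens": "85mm", "depth_of_field": "shallow"},
    "composition": "product centered, negative space on the right",
    "style": "photo-realistic, neutral color grading",
    "negative_prompt": ["plastic skin", "misspelled labels", "warped text"],
}


def build_prompt(description: str, style: dict) -> dict:
    """Combine a plain-English description with a fixed style preset."""
    return {
        "subject": description,
        "lighting": style["lighting"],
        "camera": style["camera"],
        "composition": style["composition"],
        "style": style["style"],
        "negative_prompt": style["negative_prompt"],
    }


def save_prompt(prompt: dict, root: Path, campaign: str, name: str) -> Path:
    """Write the prompt to <root>/<campaign>/<name>.json and return the path."""
    folder = root / campaign
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / f"{name}.json"
    path.write_text(json.dumps(prompt, indent=2), encoding="utf-8")
    return path
```

Because the style lives in one preset, only `subject` changes from one generation to the next, which is what keeps the outputs matching once the style is dialed.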

No more slot machine prompting.

No more inconsistent brand imagery.

No more burning credits on unusable generations.

What you get:

- Photo-realistic product shots and lifestyle images on demand

- Full control over style, lighting, composition, and camera settings

- Saved JSON prompts you can reuse across every campaign

- A skill that gets smarter the more feedback you give it

Built 100% in Claude Code with a custom skill + Python scripts.

I put together a full playbook showing the exact skill, the JSON schema, and the workflow to set this up yourself.

Want the full playbook?

> Like this post

> Comment "BANANA"

And I'll send it over (must be following so I can DM)

stefan retweeted

$73k in sales came from an ad featuring… a tiny pineapple doctor

not a celebrity

not a hair transplant clinic

not a dramatic before/after reel

just a small animated pineapple in a white coat

walking across a scalp with a magnifying glass

in two seconds you understand the story

weak follicles

inflamed pores

hair struggling to grow

the pineapple “doctor” inspects the problem like a scientist

no complex explanation needed

people stop because it’s strange and funny

they keep watching because the visual makes the problem obvious

bald spot → follicle → treatment

this is why animated AI characters are quietly outperforming generic hair loss ads

they turn a complicated problem into a simple visual story

rt + comment “hair” and i’ll send the full breakdown

(follow for dm)

stefan retweeted

just built a swipe file of 58 VSLs actively scaling on facebook right now

not theory, not 2021 examples

current 7-8 figure ads that are printing today

inside you'll find:

→ long-form problem → agitation → mechanism breakdowns

→ short hooks that stop the scroll in 3 seconds

→ on-lander VSLs built for warm traffic

→ hybrid advertorial + VSL structures

→ 12+ niches: supplements, skincare, weight loss, gadgets, beauty and more

you'll see exactly:

→ how they hook in the first 3 seconds

→ where the mechanism drops

→ how they stack proof

→ how they kill objections before the viewer thinks them

if you're running ads this saves you weeks of testing

if you're not, it shows you what winning creative looks like in 2026

rt + comment "vsl" and i'll send the full swipe file

(follow for dm)

stefan retweeted

$62k in 18 days

from an ad that looks like a cartoon glass of juice walking through clogged arteries

no real actors

no stock doctor footage

no boring explainer slides

just a visual story

arteries blocked like concrete

red blood cells stuck

pressure building

then the animated drink steps in

cracks the blockage

flow restored

you understand the problem in one second

that’s why these animated object ads convert

they turn abstract health concepts into something you can see

no heavy claims

no long explanations

just visual cause → visual solution

education disguised as entertainment

rt + comment “artery” and i’ll send the structure

(follow for dm)

Claude Cowork Sub-Agents are f*cking cracked 🤯

One prompt → 50 competitor ads analyzed, hooks extracted, and a full creative brief generated.

10 AI agents running in parallel, under 5 minutes.

All inside Claude Cowork.

Perfect for DTC brands and agencies who are still doing creative research and ad production one task at a time inside Claude.

If you're analyzing competitor ads one by one, copying hooks into a spreadsheet manually, writing brief after brief from scratch, and watching Claude's output quality fall off a cliff after the 15th variation because the context window is completely bloated...

Sub-agents eliminate the entire bottleneck:

→ Drop in a spreadsheet of 50 competitor ads and spin up 10 parallel sub-agents

→ Each sub-agent analyzes 5 ads simultaneously — hooks, angles, CTAs, emotional tone, creative format

→ They report structured summaries back to the main agent without bloating the context

→ The main agent synthesizes patterns across all 50 ads into a competitive intel brief

→ Then spin up another round of sub-agents to generate 30 ad copy variations across 10 personas

→ Each sub-agent writes for 1-2 personas in a fresh context — so variation 30 is as sharp as variation 1
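The batching pattern behind this (50 ads, 10 workers, 5 ads each, structured summaries handed back to one place) can be sketched in plain Python. This is only an illustration of the fan-out and fan-in shape, not how Claude Cowork runs sub-agents: `analyze_batch` is a hypothetical stand-in for a sub-agent, and its "hook" extraction is a toy rule.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk(items: list, size: int) -> list:
    """Split a list into consecutive batches of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def analyze_batch(batch: list) -> list:
    """Hypothetical stand-in for one sub-agent: return a short summary per ad.

    The 'hook' here is just the text before the first period (a toy rule).
    """
    return [{"ad": ad, "hook": ad.split(".")[0]} for ad in batch]


def run_parallel(ads: list, batch_size: int = 5, workers: int = 10) -> list:
    """Analyze batches concurrently and flatten the summaries in input order."""
    batches = chunk(ads, batch_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(analyze_batch, batches))
    return [summary for batch in results for summary in batch]
```

Each worker only ever sees its own 5 ads and returns compact summaries, so the step that synthesizes patterns works from 50 short records instead of 50 full ads.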

No analyzing ads one at a time.

No context window blowing up halfway through.

No copy quality degrading after the first dozen variations.

What this gives you:

→ 50 competitor ads broken down in minutes — hooks, angles, CTAs, formats, all structured

→ Pattern analysis across the full dataset that you'd miss reviewing ads individually

→ 30+ ad copy variations with persona-specific messaging that actually stays sharp

→ A workflow you can save as reusable skills and trigger with one command next time

→ The same output quality on the last task as the first

Built 100% inside Claude Cowork with sub-agents.

I put together a full DTC playbook:

5 bulk workflows with copy-paste prompts, the exact sub-agent prompting pattern, batching guidelines, and an honest breakdown of when this setup is worth it vs. when a simpler approach is the better move.

Want it for free?

> Like this post

> Comment "AGENTS"

And I'll send it over (must be following so I can DM)

I'm going to delete this post in 48 hrs...

Because I just dropped the complete MASTERCLASS guide on how to build creative iterations and variations to scale $1m months in 2026.

This is the exact ELITE system I've used to take multiple brands to $10M+/year

We charge $10,000/mo to do this for clients…

But today, I'm giving it away 100% FREE.

Like + Comment "NICK" and I'll send it to you.

stefan retweeted

We're giving away the prompt we used to make this AI UGC video.

Getting Sora 2 to output hyper-realistic, consistent footage isn't just about the tool. The prompt has to be structured in a very specific way... character description, cinematography, camera motion, lighting, dialogue, audio, authenticity keywords. Get it wrong and the footage looks off.

Get it right and everything downstream becomes easier.

We’re sharing the exact Claude template we use as step one of our AI UGC workflow. It takes a basic brief (who the subject is, where they are, what they're saying, what device it should look like, their accent) and structures all of that into the exact format Sora 2 needs automatically.
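To make "takes a basic brief and structures it" concrete, here is a minimal sketch of that kind of step. It is not the template being given away: the `Brief` fields mirror the brief described above, the section names come from the list of prompt elements, and every default value (camera motion, lighting, audio, authenticity keywords) is a placeholder assumption.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    subject: str   # who the subject is
    location: str  # where they are
    line: str      # what they are saying
    device: str    # what device the footage should look like it came from
    accent: str    # the subject's accent


def structure_prompt(brief: Brief) -> str:
    """Lay a brief out as labeled sections, one per line.

    Section wording and default values are illustrative assumptions only.
    """
    sections = {
        "Character description": f"{brief.subject}, speaking with a {brief.accent} accent",
        "Cinematography": f"Shot on {brief.device}, set in {brief.location}",
        "Camera motion": "Handheld, slight natural shake",
        "Lighting": "Available light only",
        "Dialogue": f'"{brief.line}"',
        "Audio": "On-device microphone, light room tone",
        "Authenticity keywords": "unscripted, candid, user-generated",
    }
    return "\n".join(f"{name}: {value}" for name, value in sections.items())
```

Keeping the sections in a fixed order means two briefs differ only where the inputs differ, which makes it easier to tell what caused a change in the footage.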

It sits at the start of a full workflow that runs through Sora 2 for the hook, ElevenLabs for voice cloning, ChatGPT for subject consistency, Nano Banana for B-roll generation and Kling for animation. The whole thing is broken down in the latest D2C Diaries episode.

But this prompt is the foundation. Without it, the rest of the workflow is harder than it needs to be.

If you're building in this space or testing AI UGC for your brand, this is worth having.

Retweet this post and comment PROMPT and I’ll send it over.

nano banana 2 dropped today and literally nobody knows how to use it for static ads.

long prompts. random generations. hours wasted. output that looks like every other AI ad.

we built a system on top of it that replicates static ads and product images with zero prompting lol.

upload your product. pick a layout that already converts. get a finished ad.

comment "ADS" below and i'll send you free access.
