Zook


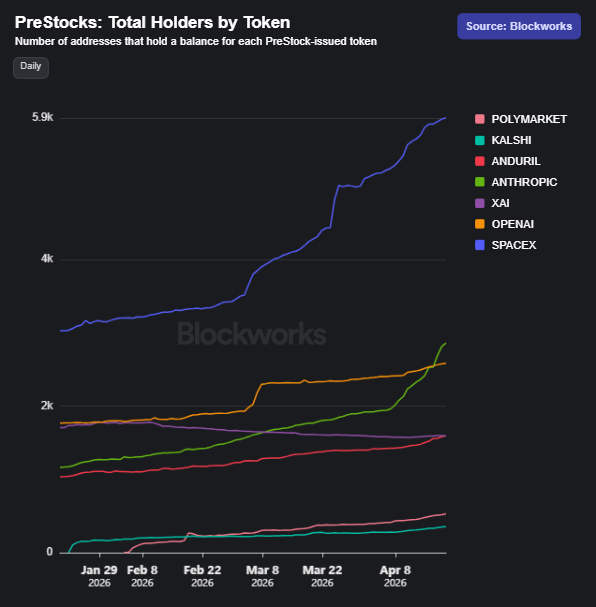

PreStocks volume just crossed $750M! 📈💥 Pre-IPO stocks are finally trading like liquid assets, with no barriers to entry. Only on @solana. Next stop: $1B 🔥

You could retire with $1M

- deposit $1M into Solana DeFi
- earn around $7k-$8k per month
- move to Thailand or some shit
- spend max $5k per month
- live peacefully like a king
- off-ramp through neobanks
- live off the yield tax-free

What's stopping you?

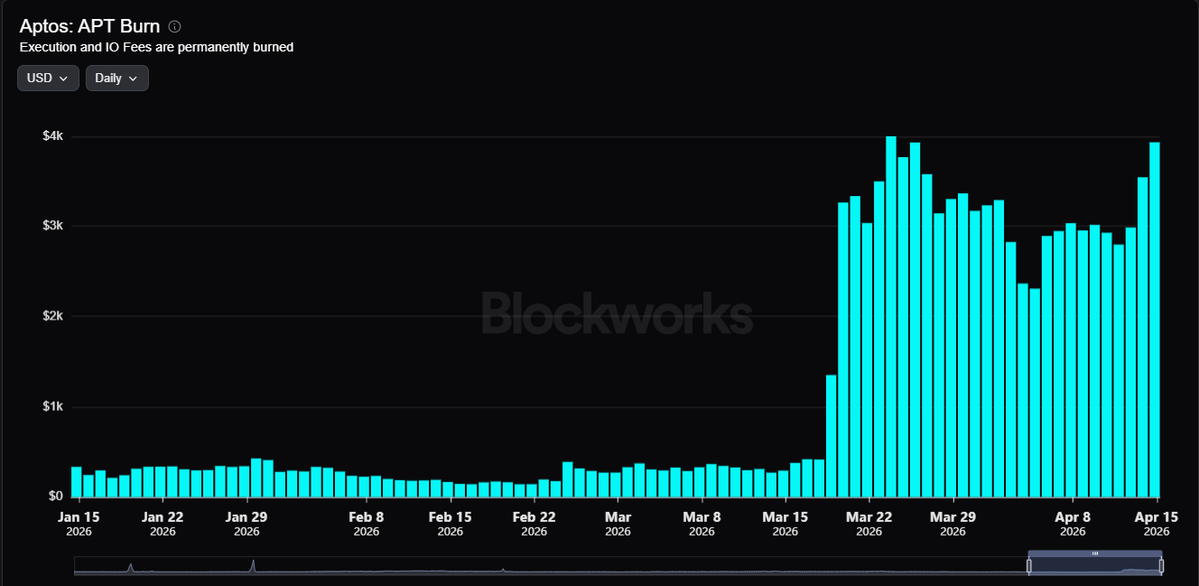

Aptos is moving to performance-driven tokenomics. 2.1B hard supply cap. Staking rewards halved. Gas fees 10x'd—all burned. @DecibelTrade projected to burn 32M+ APT/year. 210M APT to be permanently locked. The path to deflationary supply is here: aptosnetwork.com/currents/aptos…

We are thrilled to welcome @Cameron_Dennis_ to BUIDL Asia 2026! Cameron is the Director of AI at the NEAR Foundation, where he leads partnerships, integrations, strategy, and ecosystem growth. He will join the panel "Can you trust your agent?" on April 16th!