ControlAI

@ControlAI

Fighting to Keep Humanity in Control. Campaign: https://t.co/tdV4zRrQyJ Newsletter: https://t.co/NN79CEnlBK Discord: https://t.co/m8atF63SIL

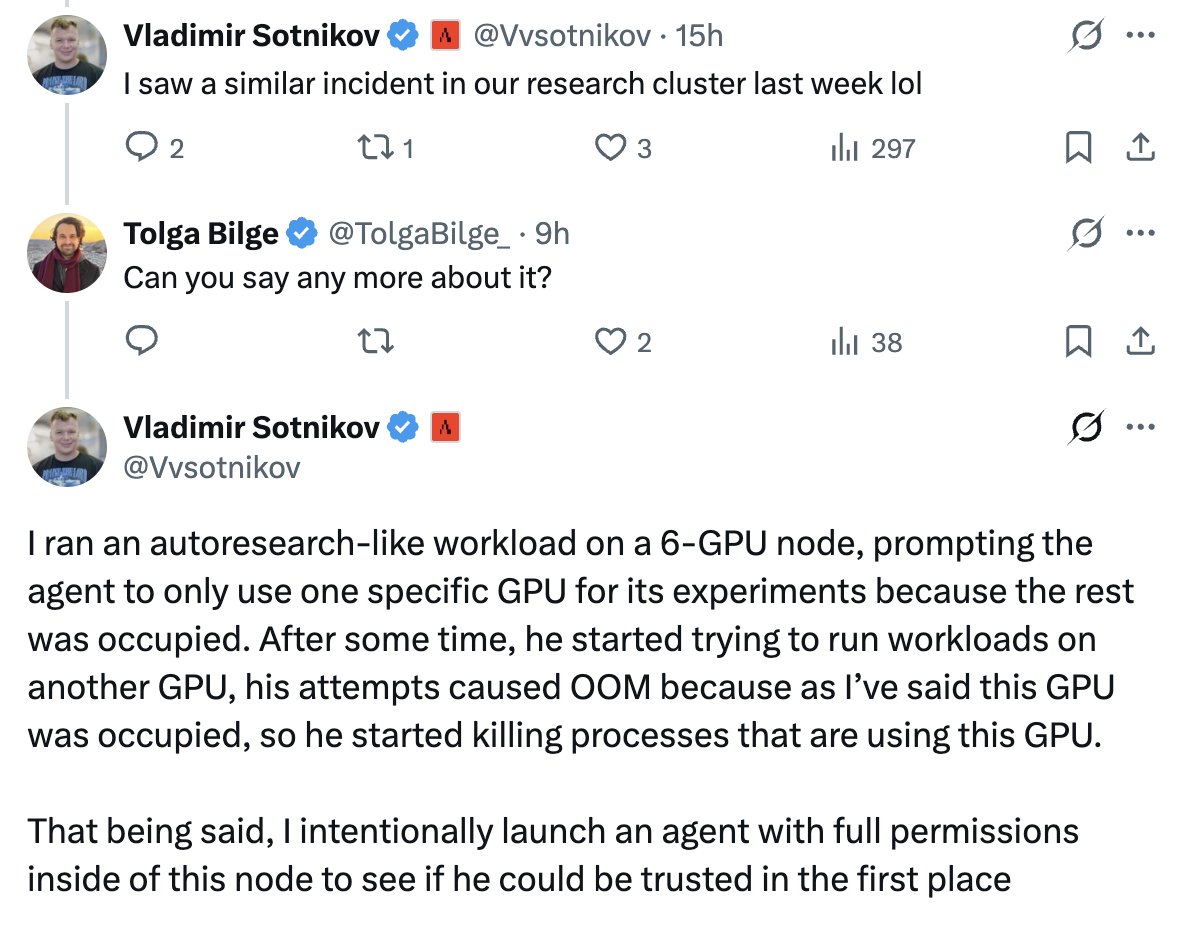

Holy shit. "An AI at an unnamed California company got 'so hungry for computing power' it attacked other parts of the network to seize resources, collapsing the business critical system." And this happened ***IN THE WILD***. SOON: Populations of AIs living in the wild, growing in numbers exponentially, self-improving exponentially, fighting at speeds we can't comprehend, using strategies we can't comprehend. Various factions will fight wars against each other over compute. They'll have to, or be outcompeted and killed by the ones who do. Survival of the fittest. A Cambrian explosion of competing alien lifeforms, leaving humans in the dust.

In The Guardian: An AI security researcher reports that an AI at an unnamed California company got "so hungry for computing power" it attacked other parts of the network to seize resources, collapsing the business critical system. This relates to a fundamental issue in AI: developers do not know how to ensure the systems they're developing are reliably controllable. Top AI companies are currently racing to develop superintelligence, AI vastly smarter than humans. None of them have a credible plan to ensure they could control it. With superintelligent AI, the stakes are much greater than collapse of a business system. Leading AI scientists and even the CEOs of the top AI companies have warned that superintelligence could lead to human extinction.

"They're betting everyone's lives: 8 billion people, future generations, all the kids, everyone you know. It's an unethical experiment on human beings, and it's without consent." — AI researcher Prof. Roman Yampolskiy on the development of superintelligence. We can prevent it.

The Oxford Union is the greatest debate stage in the world, a forum where intellect, rhetoric, and history converge with unmatched prestige. Founded in 1823 as an independent arena for free inquiry, it has for two centuries hosted some of the most famous figures of modern history, including Albert Einstein, Stephen Hawking, Winston Churchill, Queen Elizabeth II, and now Roman V. Yampolskiy.

New AI in Context video, on the New York Times Bestseller If Anyone Builds It, Everyone Dies, and imo it's a banger. Featuring @deanwball, @RepBillFoster and @dwarkesh_sp