Yafeng(Jason) Deng

21 posts

@LongTermMemoryE

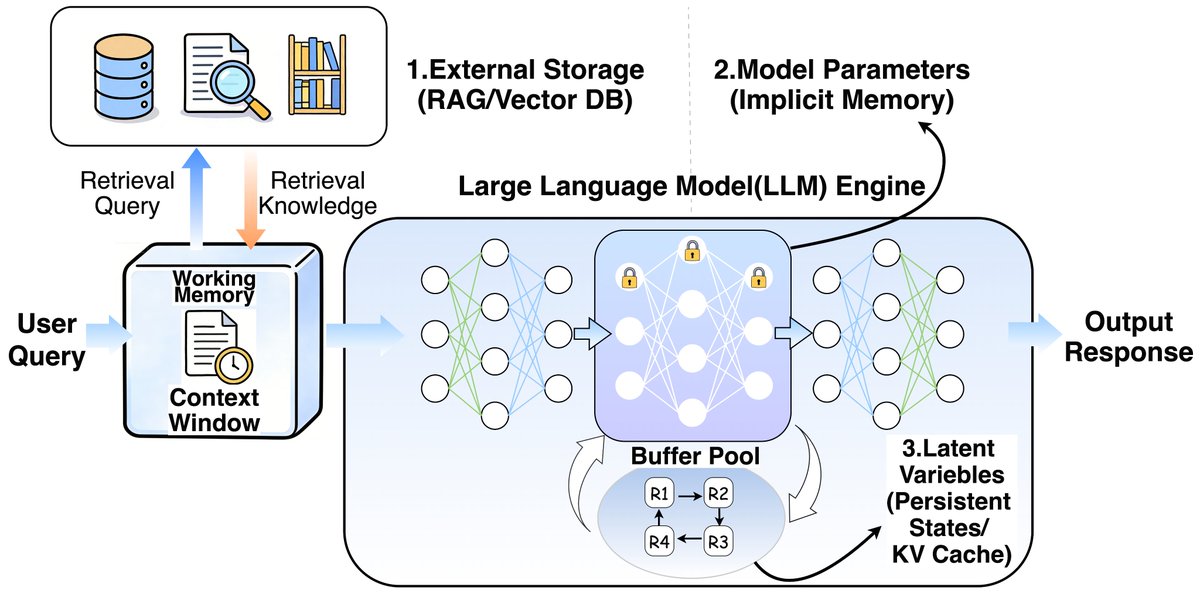

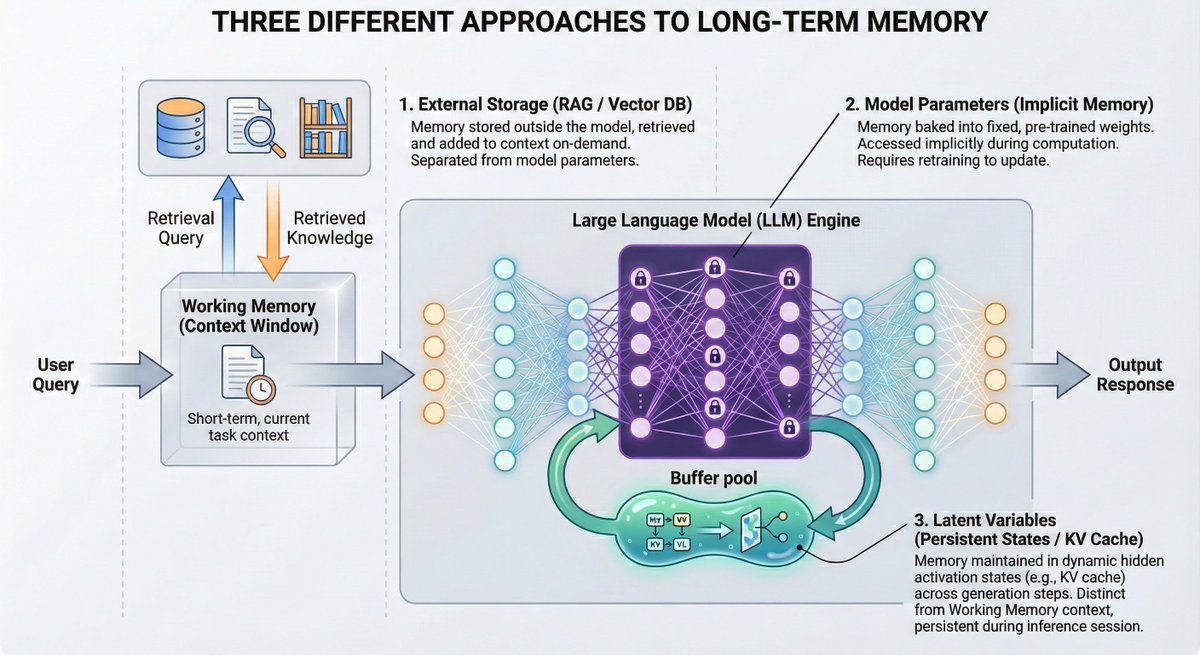

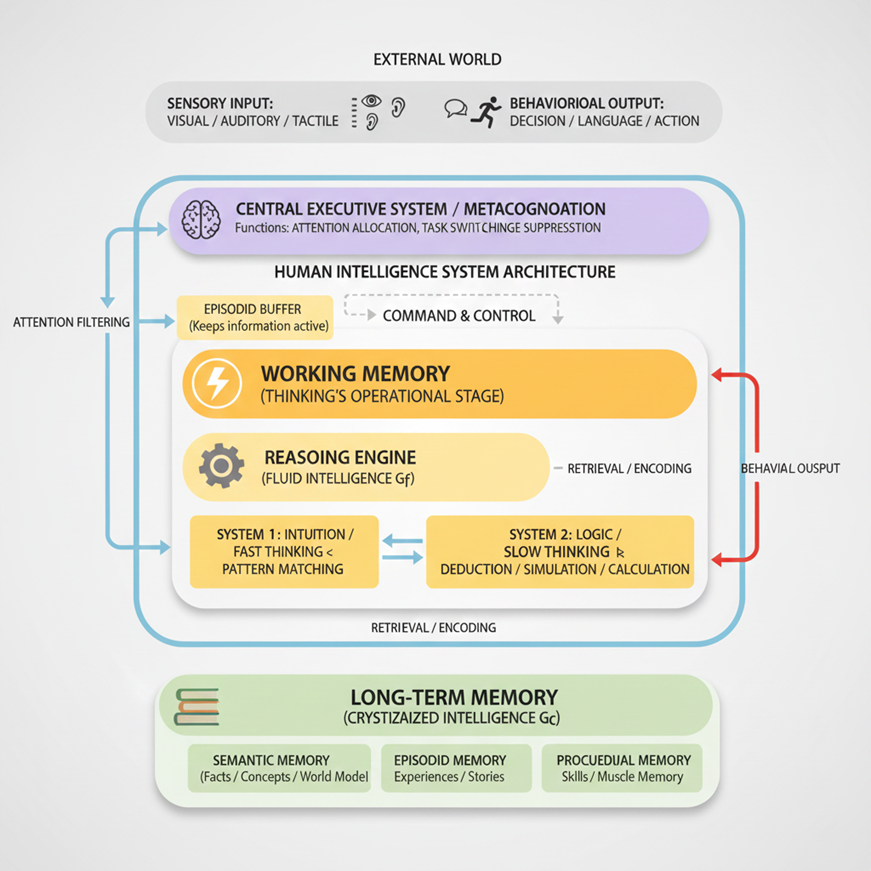

CEO@EverMind AI. Giving AI a memory. Dedicated to Long-term Memory & Continuous Learning to build Agents with true personalization and proactivity.

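Yesterday we posted a new paper on arXiv that fills a gap no one had addressed: memory evaluation in multi-person, multi-group settings.

A quick primer on why this matters. Earlier benchmarks for AI memory were basically all "two people chatting" scenarios:

LoCoMo (2024): the earliest systematic test of memory over multi-turn conversations, but at its core it is still a two-person dialogue, with roughly 16K tokens of context, which is on the small side.

LongMemEval (2024, ICLR 2025): pushed the scale to 115K–1.5M tokens and defined five core memory abilities, but it is still one-on-one conversation.

The problem is that the real world doesn't work like this. You are in several group chats at once, talking with different people about different things. Can an AI remember who said what in which group?

That is the question EverMemBench sets out to answer.

The figure below was generated with @claudeai's latest feature, and I have to say it looks pretty good.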

In 2024 the question was: which LLM do we use?

In 2025 the question is: how do we make agents actually work in production?

In 2026 the question will be: which context layer are we building on?

Here is why that shift is already underway: