PareaAI

242 posts

@PareaAI

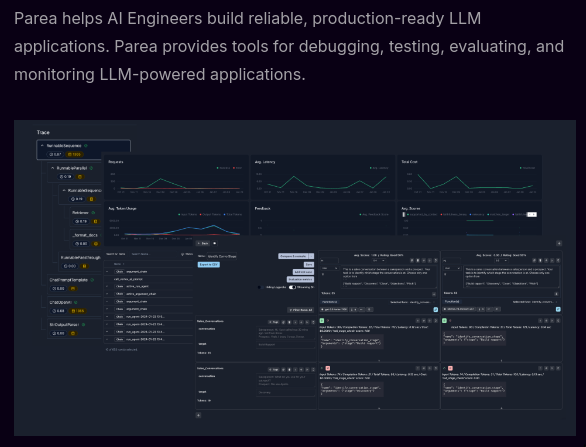

Parea AI (YC S23) provides tools for evaluating, testing and monitoring LLM applications.

New York, USA · Joined April 2023

33 Following · 246 Followers

PareaAI retweeted

How do you detect unreliable behavior of your LLM app?

Recently, we talked to the team at @sixfoldai and they shared with us a simple yet powerful way to assess the reliability of their LLM app using @PareaAI. More about how they test their risk assessment AI solution for insurance underwriters in the article in the thread.

PareaAI retweeted

🚀 New deep dive notebook on @PareaAI experiments and LLM evals 📝🔬.

I cover some of the key functionalities illustrating the power and flexibility of our API.

🔽 Link in comments 🔽

PareaAI retweeted

@PareaAI Also, learn more about the research behind each here: docs.parea.ai/blog/eval-metr…

PareaAI retweeted

There are so many “black box” evals that force users to instantiate eval classes. Never fully understood this. At @PareaAI we see evals as just functions. You can copy the source code and modify it as you see fit, all OSS and based on the latest research. Check these out👇🏾
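To make the "evals as just functions" idea concrete, here is a minimal sketch in plain Python. It is not taken from Parea's SDK: the function names `exact_match` and `keyword_coverage` and their signatures are hypothetical, chosen only to show that an eval can be an ordinary function that maps an output to a score.

```python
# Hypothetical eval functions (not Parea's API): each one is a plain
# function that takes model output and returns a score in [0, 1].

def exact_match(output: str, target: str) -> float:
    """Score 1.0 when output equals target, ignoring case and outer whitespace."""
    return 1.0 if output.strip().lower() == target.strip().lower() else 0.0


def keyword_coverage(output: str, keywords: list[str]) -> float:
    """Fraction of the expected keywords that appear in the output."""
    if not keywords:
        return 1.0  # nothing was required, so nothing is missing
    text = output.lower()
    hits = sum(1 for kw in keywords if kw.lower() in text)
    return hits / len(keywords)


if __name__ == "__main__":
    print(exact_match(" Paris ", "paris"))
    print(keyword_coverage("Paris is the capital of France", ["paris", "berlin"]))
```

Because these are ordinary functions, changing the scoring rule means editing a few lines rather than subclassing an evaluator.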

PareaAI retweeted

📝 Updated integration docs ⭐️

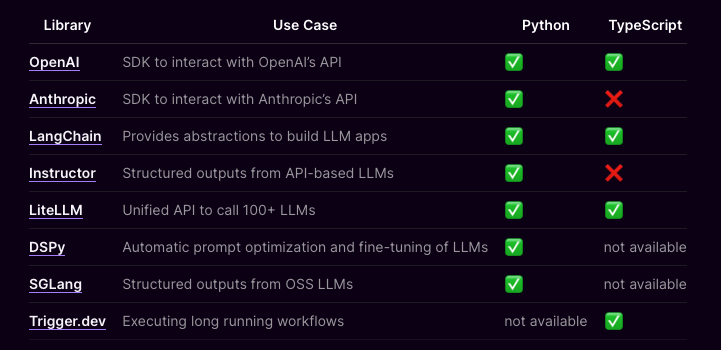

Check out @PareaAI's updated docs to automatically trace apps powered by @LangChain, Instructor by @jxnlco, @LiteLLM, DSPy by @lateinteraction, SGLang by @lmsysorg, and @triggerdotdev.

Docs: docs.parea.ai/integrations/o…

PareaAI retweeted

And to help you understand what's going on, we integrate with observability platforms like @ArizePhoenix, @langchain's LangSmith, @langfuse, @PareaAI, and @lunary_hq so you can explore the experiments that zenbase/core automates.

Cookbooks here: github.com/zenbase-ai/cor…

PareaAI retweeted

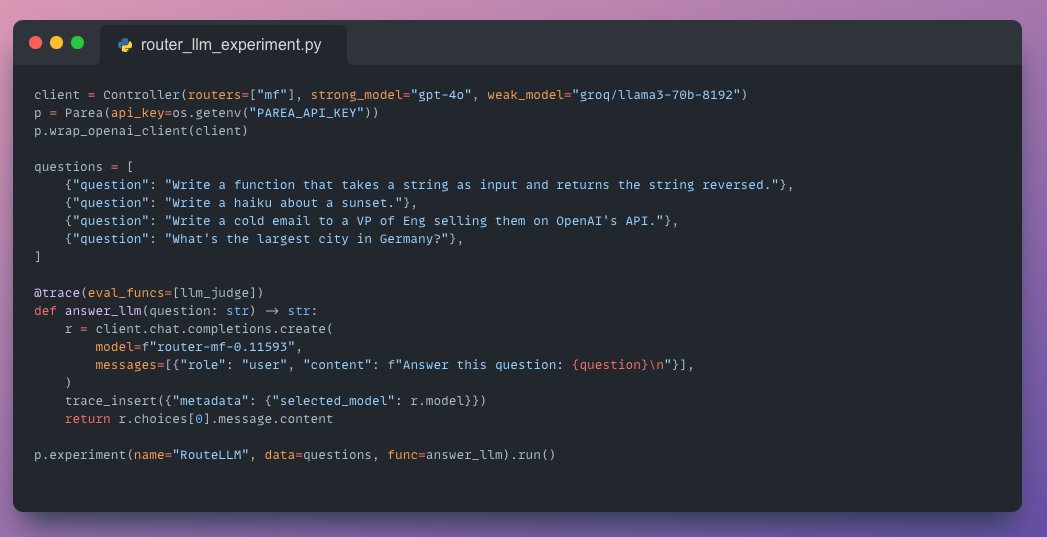

Def agree this could be great. Probably best if you can train the router yourself. @anyscalecompute's RouteLLM tracing support with @PareaAI

Matthew Berman @MatthewBerman

RouteLLM is one of the most impactful algorithmic innovations in AI that I've ever seen. I don't think people realize how important it truly will become. Here's a full tutorial for how to use it:

PareaAI retweeted

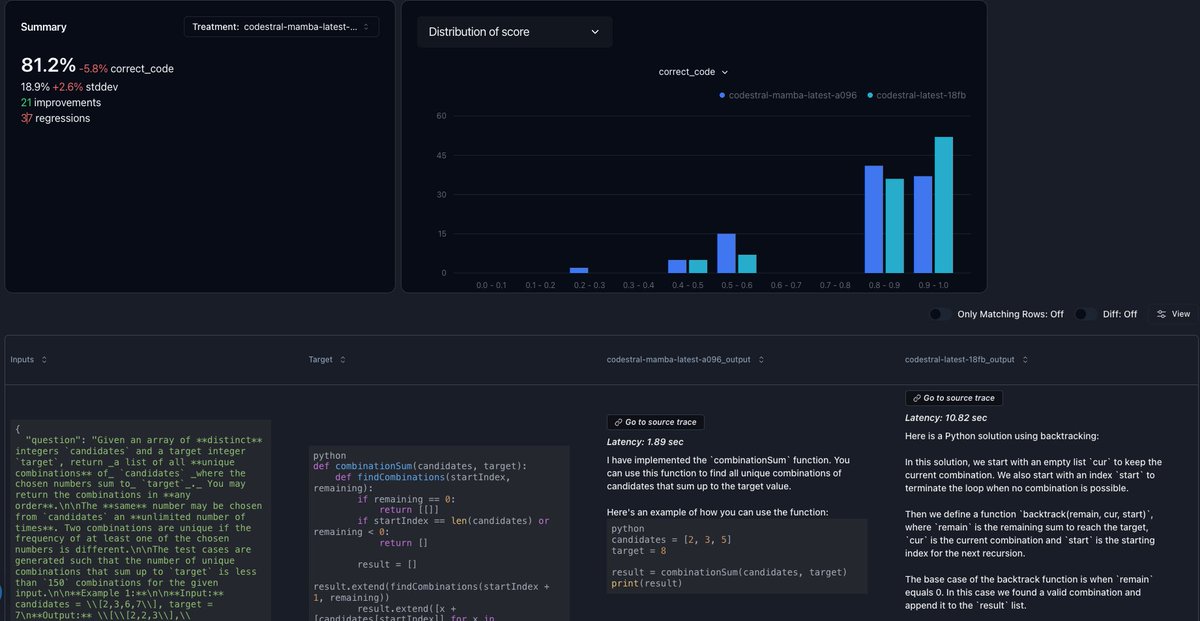

There have been so many new models lately. Most recently, @MistralAI's codestral-mamba. I figured it'd be great to highlight how to use @PareaAI for Regression Testing. Check out the Notebook below, where I test codestral-latest vs mamba on LeetCode questions. 👇

PareaAI retweeted

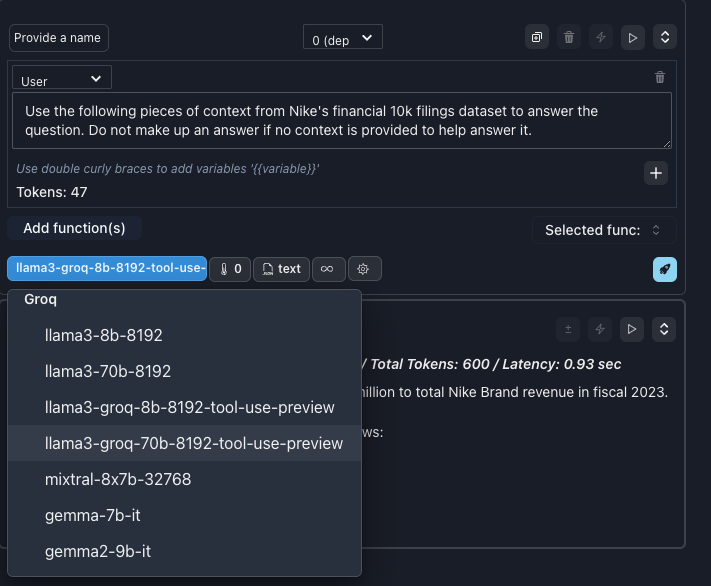

At this point I could probably have an LLM monitor the top foundation model providers and then produce a PR for me that adds any new models to @PareaAI the moment they launch.

PareaAI retweeted

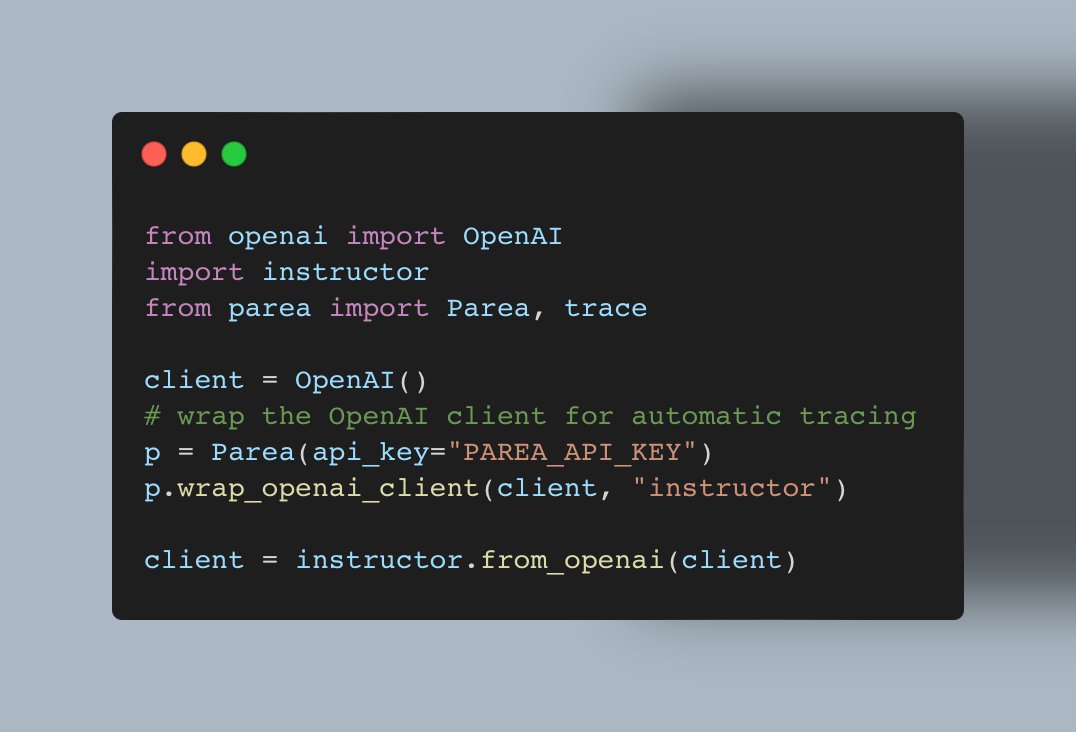

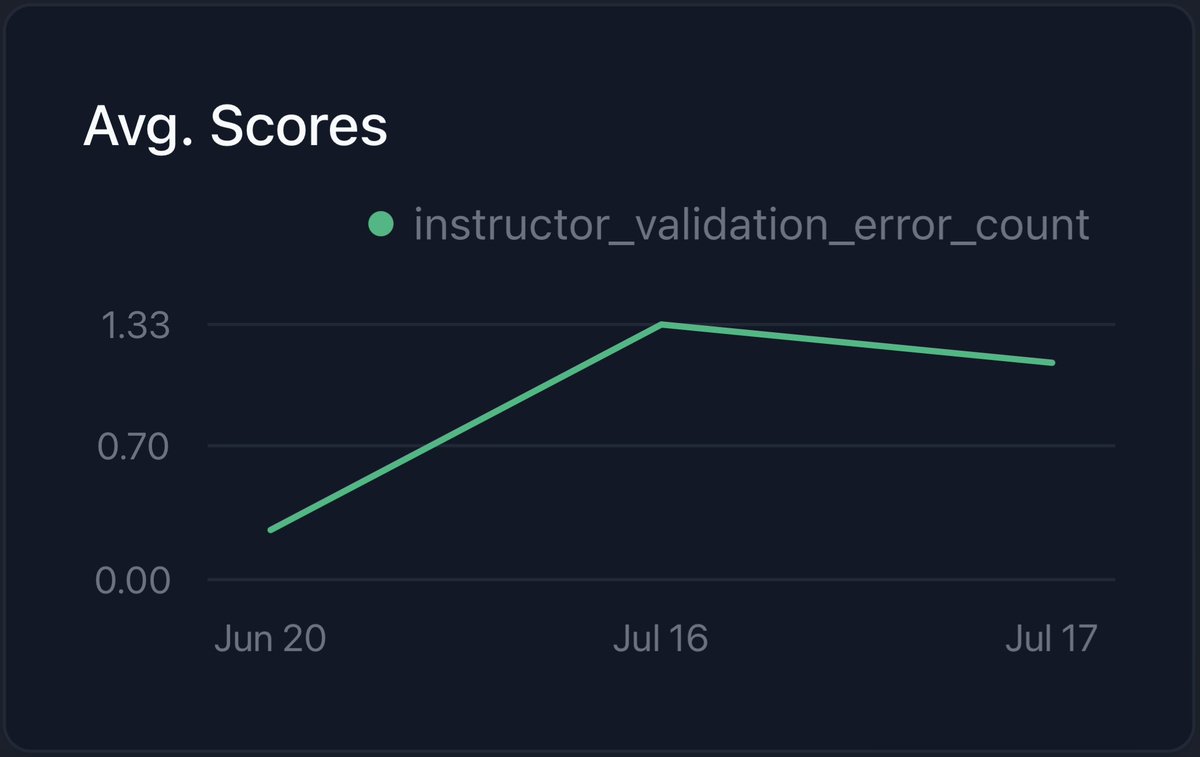

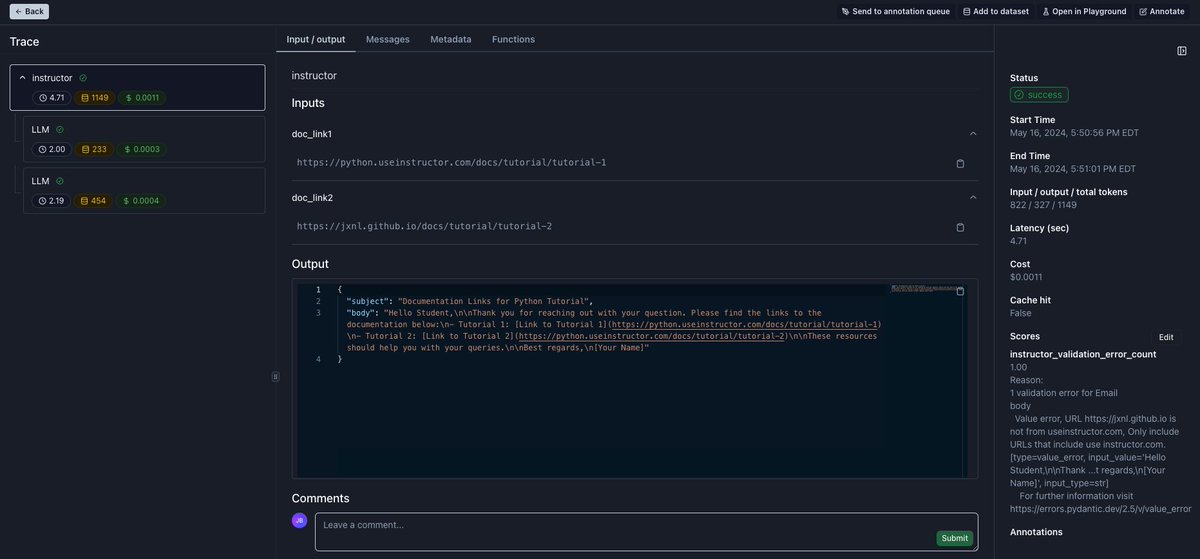

If you use structured outputs with Instructor, track validation errors instantly with @PareaAI.

Concretely, the integration automatically:

- groups any LLM call due to retries together under a single trace

- tracks any field which failed validation with the respective error message

- visualizes validation error count over time

Instrument calls made via the Instructor client by adding two lines:

p = Parea(api_key="PAREA_API_KEY")

p.wrap_openai_client(client, "instructor")

Read the full blog post on the instructor docs in the 🧵
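As a rough illustration of what the three bullets above describe, the following stdlib-only sketch simulates a retry loop, records every attempt under one trace, and counts validation failures per field. It is not the Parea or Instructor implementation: `validate_user`, `call_with_retries`, `fake_llm`, and the trace layout are all hypothetical names invented for this example, and the "LLM" is a canned list of responses.

```python
from collections import Counter


def validate_user(data: dict) -> dict:
    """Return {field: error message} for every field that fails validation."""
    errors = {}
    if not isinstance(data.get("name"), str) or not data.get("name"):
        errors["name"] = "name must be a non-empty string"
    if not isinstance(data.get("age"), int) or data["age"] < 0:
        errors["age"] = "age must be a non-negative integer"
    return errors


def call_with_retries(llm, max_retries: int = 3) -> dict:
    """Call llm until its output validates; keep all attempts in a single trace."""
    trace = {"attempts": [], "field_error_counts": Counter(), "result": None}
    for attempt in range(1, max_retries + 1):
        output = llm(attempt)
        errors = validate_user(output)
        # Every retry is stored under the same trace, with its field errors.
        trace["attempts"].append({"attempt": attempt, "output": output, "errors": errors})
        trace["field_error_counts"].update(errors.keys())
        if not errors:
            trace["result"] = output
            break
    return trace


def fake_llm(attempt: int) -> dict:
    """Stand-in for a model call: two invalid responses, then a valid one."""
    responses = [
        {"name": "Ada", "age": "thirty"},
        {"name": "", "age": -1},
        {"name": "Ada", "age": 36},
    ]
    return responses[attempt - 1]


if __name__ == "__main__":
    trace = call_with_retries(fake_llm)
    print(len(trace["attempts"]), dict(trace["field_error_counts"]))
```

Summing `field_error_counts` across many traces and bucketing by timestamp would give the kind of error-count-over-time view the third bullet mentions.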

PareaAI retweeted

Moving from demos to production-ready LLM apps can be challenging. In this post, I outline a practical workflow to help teams make this transition, focusing on:

- Hypothesis testing

- Dataset creation

- Effective evals

- Experimentation

Full post here: zurl.co/27Ad

PareaAI retweeted

This method is powered by DSPy from @lateinteraction and inspired by the work of @sh_reya:

arxiv.org/pdf/2404.12272

arxiv.org/pdf/2401.03038

Also, thanks to @eugeneyan for sharing JudgeBench: arxiv.org/abs/2406.18403
