Prompt Injection

@PromptInjection

AI beyond the hype. Real insights, real breakthroughs, real methods. Philosophy, benchmarks, quantization, hacks—minus the marketing smoke. Injecting facts into

Yep, Composer 2 started from an open-source base! We will do full pretraining in the future. Only ~1/4 of the compute spent on the final model came from the base, the rest is from our training. This is why evals are very different. And yes, we are following the license through our inference partner terms.

Was messing with the OpenAI base URL in Cursor and caught this: accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast. So Composer 2 is just Kimi K2.5 with RL. At least rename the model ID.

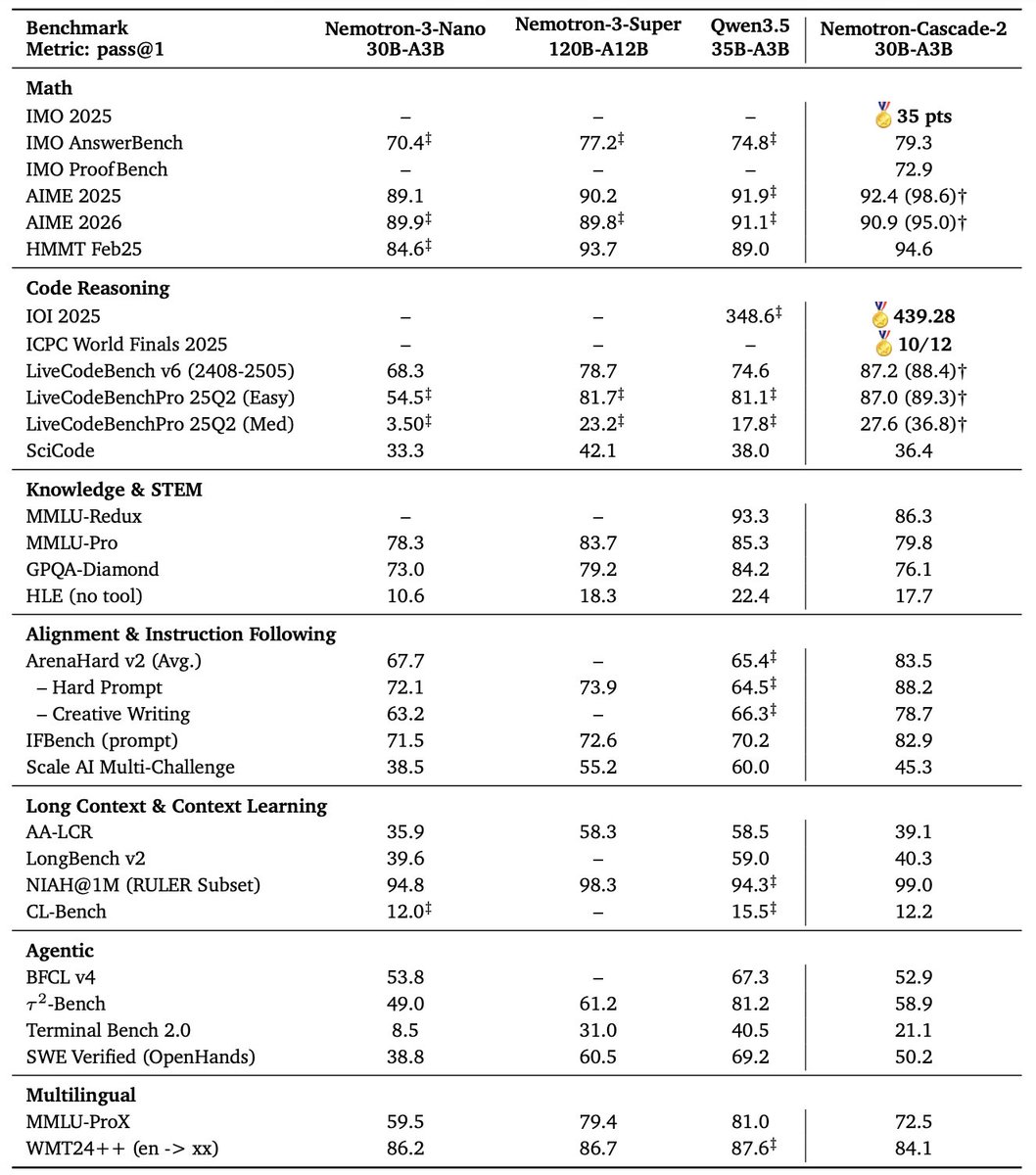

Both are NVIDIA 30B-total / ~3B-active MoE models (hybrid Mamba-2 + Transformer arch, 1M context, 128 experts/layer activating ~6). Nemotron-Nano 30B MoE (Nemotron 3 Nano 30B-A3B) is the pretrained base + SFT + standard RLHF: excels in efficiency, agentic tasks, chat, code/math. Cascade-30B MoE (Nemotron-Cascade-2 30B-A3B) is the post-trained upgrade on Nano's base via expanded Cascade RL + multi-domain on-policy distillation. Delivers superior reasoning/agentic perf (gold IMO/IOI 2025, higher AIME/LiveCodeBench/etc.), plus explicit thinking/instruct modes. Cascade = Nano, but leveled up for frontier-level intelligence density.

🚀 Introducing Nemotron-Cascade 2 🚀

Just 3 months after Nemotron-Cascade 1, we’re releasing Nemotron-Cascade 2: an open 30B MoE with 3B active parameters, delivering best-in-class reasoning and strong agentic capabilities.

🥇 Gold Medal-level performance on IMO 2025, IOI 2025, and ICPC World Finals 2025:

• Capabilities once thought achievable only by frontier proprietary models (e.g. Gemini Deep Think) or frontier-scale open models (e.g. DeepSeek-V3.2-Speciale-671B-A37B).

• Remarkably high intelligence density with 20× fewer parameters.

🏆 Best-in-class across math, code reasoning, alignment, and instruction following:

• Outperforms the latest Qwen3.5-35B-A3B (2026-02-24) and even larger Qwen3.5-122B-A10B (2026-03-11).

🧠 Powered by Cascade RL + multi-domain on-policy distillation:

• Significantly expands Cascade RL across a much broader range of reasoning and agentic domains than Nemotron-Cascade 1, while distilling from the strongest intermediate teacher models throughout training to recover regressions and sustain gains.

🤗 Model + SFT + RL data: 👉 huggingface.co/collections/nv…

📄 Technical report: 👉 research.nvidia.com/labs/nemotron/…
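For a rough sanity check on the "20× fewer parameters" claim, here is a minimal Python sketch that uses only the sizes quoted in the posts above (30B total / 3B active for Nemotron-Cascade 2, 671B total / 37B active for DeepSeek-V3.2-Speciale, 128 experts per layer with about 6 active). The variable names are illustrative, and treating "~6" as exactly 6 is an assumption.

```python
# Sizes in billions of parameters, as quoted in the posts above.
CASCADE_TOTAL_B = 30
CASCADE_ACTIVE_B = 3
DEEPSEEK_TOTAL_B = 671
DEEPSEEK_ACTIVE_B = 37

# Expert counts quoted for the 30B-A3B models.
EXPERTS_PER_LAYER = 128
ACTIVE_EXPERTS = 6  # assumption: the posts say "~6", treated as exact here

# How many times larger the comparison model is, by total and by active size.
total_ratio = DEEPSEEK_TOTAL_B / CASCADE_TOTAL_B
active_ratio = DEEPSEEK_ACTIVE_B / CASCADE_ACTIVE_B

# Share of the model that runs per token, by parameters and by routed experts.
active_param_fraction = CASCADE_ACTIVE_B / CASCADE_TOTAL_B
active_expert_fraction = ACTIVE_EXPERTS / EXPERTS_PER_LAYER

print(f"total-parameter ratio:  {total_ratio:.1f}x")
print(f"active-parameter ratio: {active_ratio:.1f}x")
print(f"active parameter share: {active_param_fraction:.1%}")
print(f"active expert share:    {active_expert_fraction:.1%}")
```

On these numbers, the "20×" figure matches the total-parameter ratio (about 22×); the gap in active parameters per token is smaller (about 12×). The active parameter share and the active expert share need not agree, since an MoE model typically also has non-expert parameters that run for every token.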