Wei Ping

@_weiping

Distinguished Research Scientist & Director @Nvidia | Post-training, Reasoning, RL, Multimodal

The UALM paper is accepted as an Oral presentation at ICLR. Key takeaways:

1) a unified LM for audio understanding and text2audio generation
2) use a proper audio codec and delay pattern for audio outputs
3) scale data!!!
4) use classifier free guidance

arxiv.org/abs/2510.12000
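For readers unfamiliar with the last takeaway, here is a minimal sketch of classifier-free guidance applied to next-token logits. It uses the common convention `uncond + scale * (cond - uncond)`; the post does not say which formulation or guidance scale UALM uses, so the function name, the toy logits, and the scale value are all illustrative assumptions.

```python
import math
from typing import List


def cfg_logits(cond: List[float], uncond: List[float], scale: float) -> List[float]:
    """Blend conditional and unconditional logits.

    scale = 1.0 returns the conditional logits unchanged;
    scale > 1.0 pushes the distribution further toward the condition.
    """
    return [u + scale * (c - u) for c, u in zip(cond, uncond)]


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy logits over a 3-token vocabulary (made-up numbers).
cond = [2.0, 1.0, 0.0]      # model run with the text prompt
uncond = [1.0, 1.0, 1.0]    # model run with the prompt dropped
guided = cfg_logits(cond, uncond, scale=3.0)  # hypothetical scale
probs = softmax(guided)
```

With a scale above 1, the token favored by the conditioned pass gets more probability mass than it would from the conditional logits alone, at the cost of two forward passes per step.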

🚨BREAKING: Kimi K2.5 by @Kimi_Moonshot is now the #1 open model in Code Arena!

In Code Arena’s agentic coding evaluations, Kimi K2.5 is now:

- #1 open model, surpassing GLM-4.7
- #5 overall, on par with top proprietary models like Gemini-3-Flash
- The only open model in the top 5

🏆Kimi K2.5 is the best open model across Text, Vision, and Code Arena.

Huge congrats to the @Kimi_Moonshot team for continuing to push the frontier of open models 👏

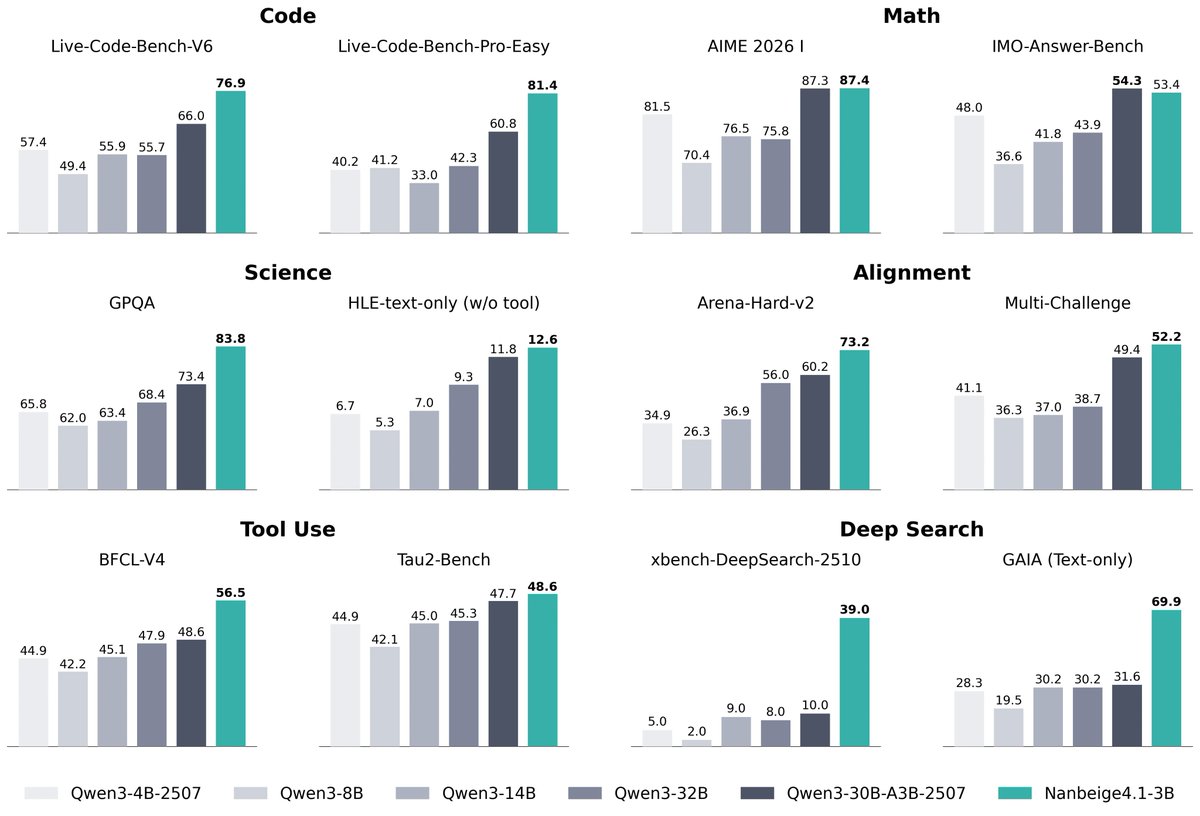

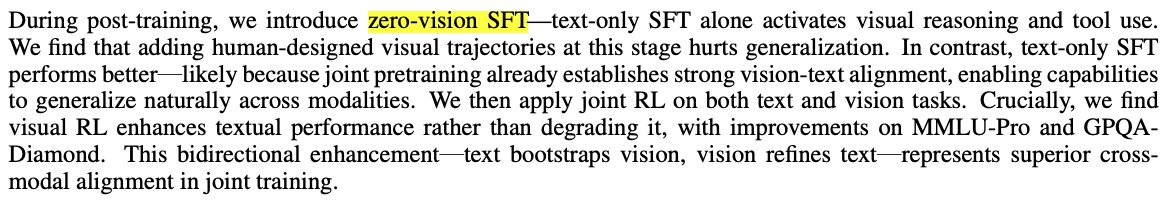

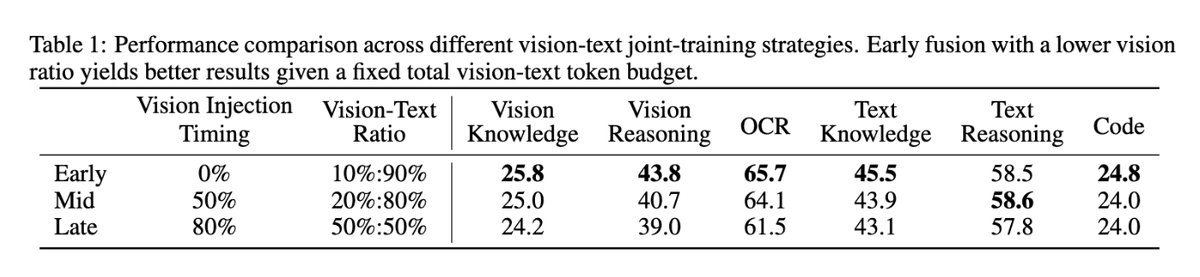

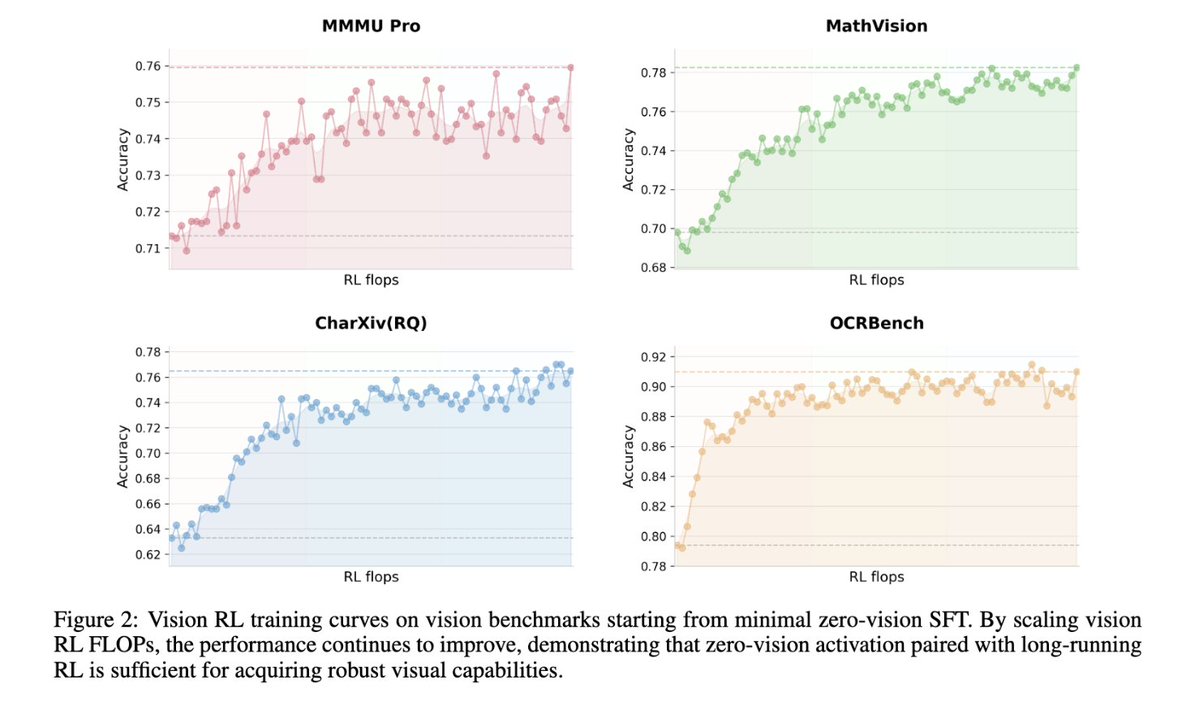

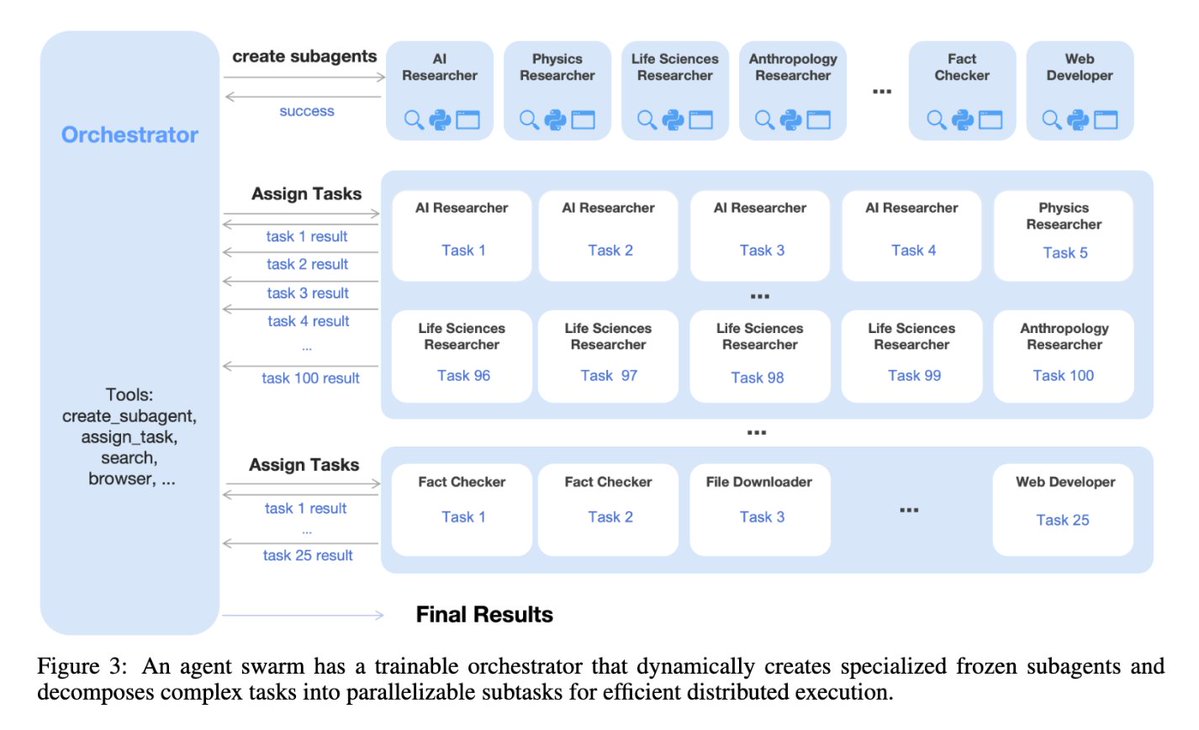

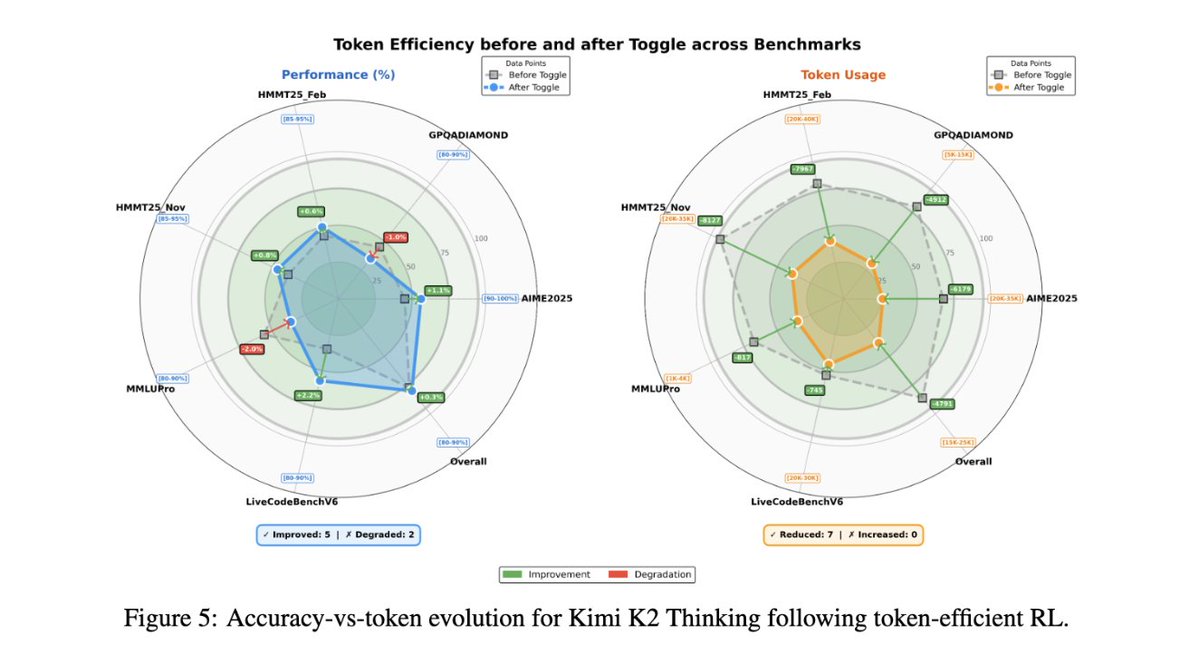

Kimi K2.5 tech report just dropped! Quick hits:

- Joint text–vision training: pretrained with 15T vision-text tokens, zero-vision SFT (text-only) to activate visual reasoning
- Agent Swarm + PARL: dynamically orchestrated parallel sub-agents, up to 4.5× lower latency, 78.4% on BrowseComp
- MoonViT-3D: a unified image–video encoder with 4× temporal compression, enabling 4× longer videos in the same context
- Toggle: token-efficient RL, 25–30% fewer tokens with no accuracy drop

Here's our work toward scalable, real-world agentic intelligence. More details in the report 👉github.com/MoonshotAI/Kim…

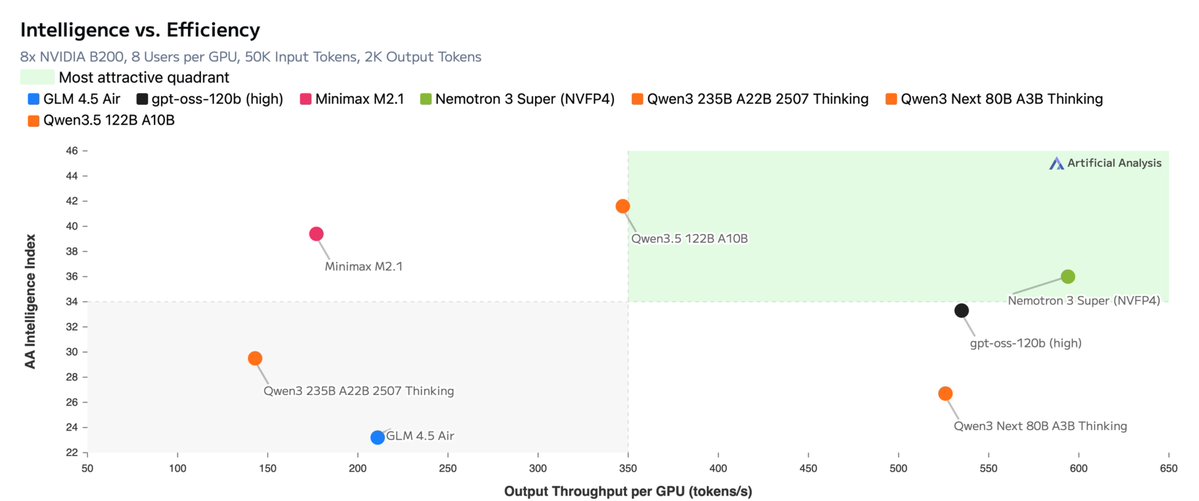

We just launched an ultra-efficient NVFP4 precision version of Nemotron 3 Nano that delivers up to 4x higher throughput on Blackwell B200. Using our new Quantization Aware Distillation method, the NVFP4 version retains up to 99.4% of BF16 accuracy.

Nemotron 3 Nano NVFP4: nvda.ws/4t63z9y
Tech Report: nvda.ws/4bj3pp0

Every frontier AI lab has lost co-founder(s):

- OpenAI: 8 of 11 gone
- Thinking Machines: 3 of 6 gone
- SSI: 1 of 3 gone
- DeepMind: 1 of 3 gone
- xAI: 3 of 12 gone

One exception: Anthropic. All 7 co-founders are still there. Anthropic culture is worth studying.

Introducing Cowork: Claude Code for the rest of your work. Cowork lets you complete non-technical tasks much like how developers use Claude Code.

Just an ordinary day at a robotics company.

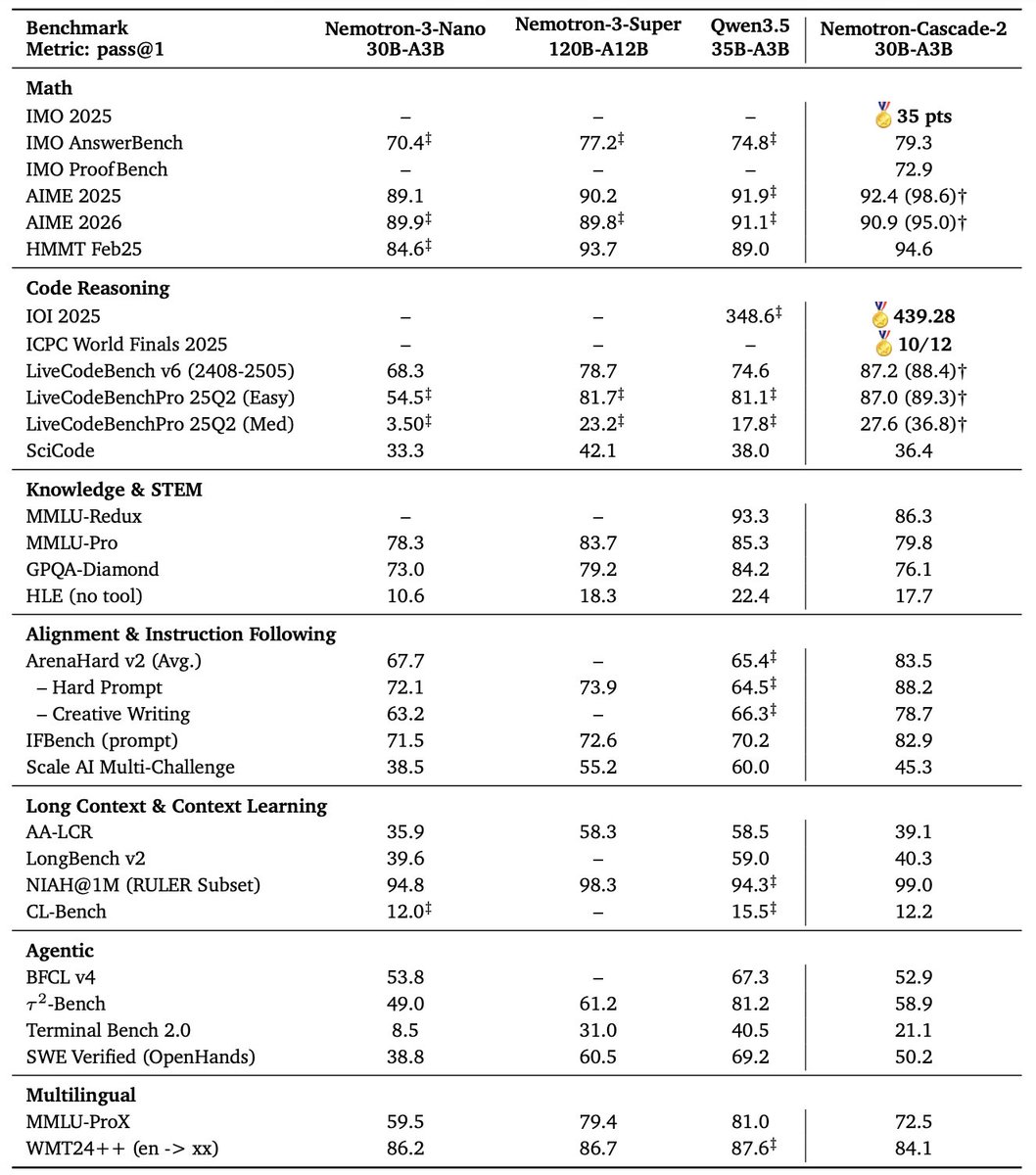

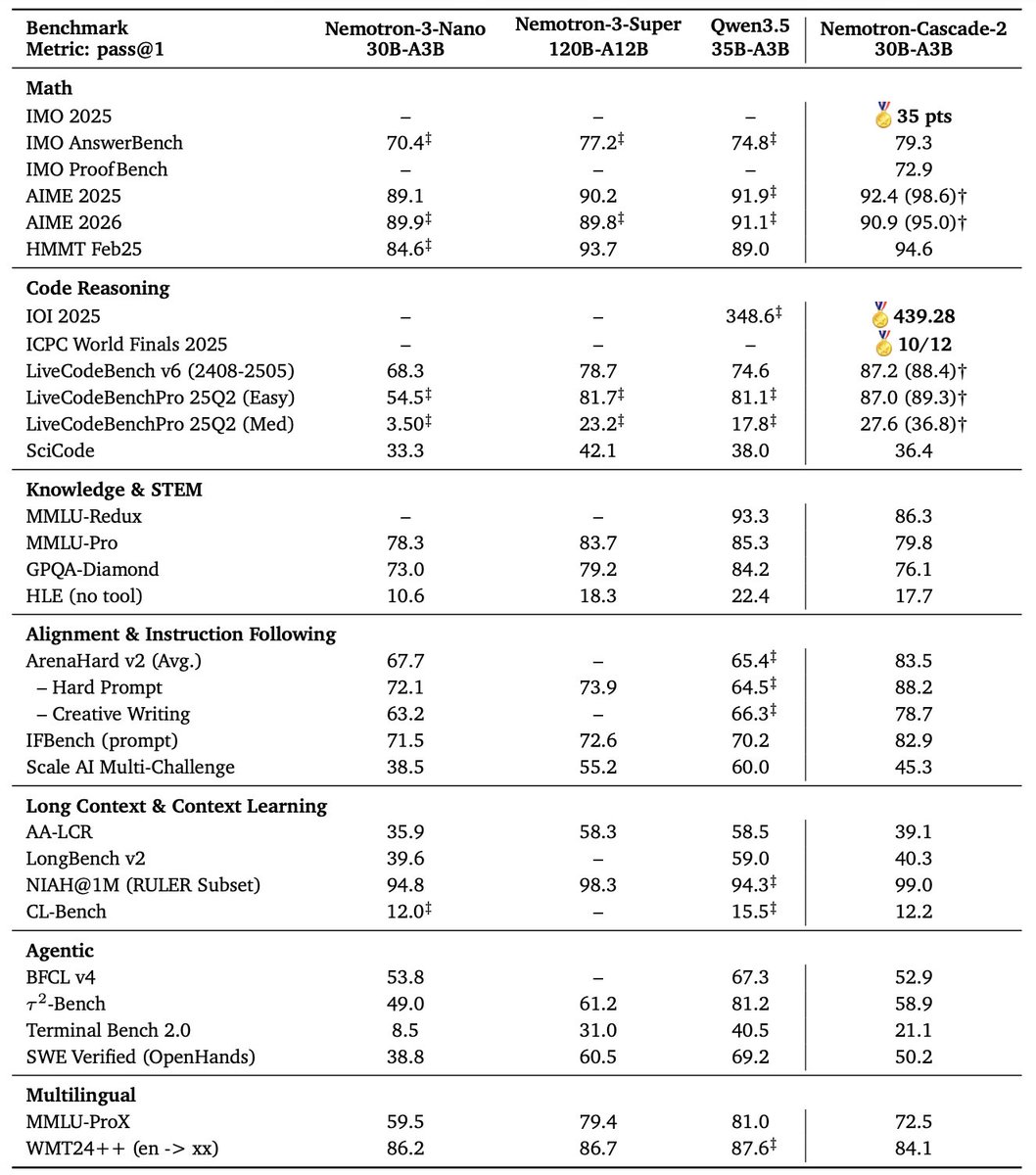

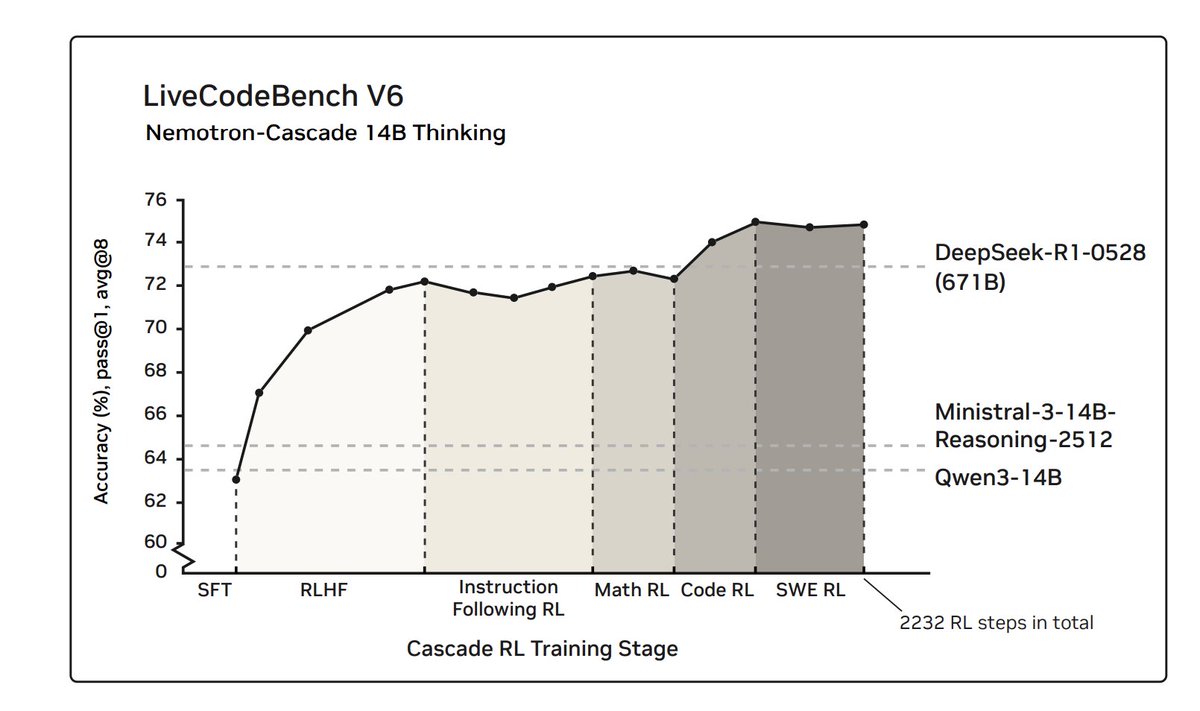

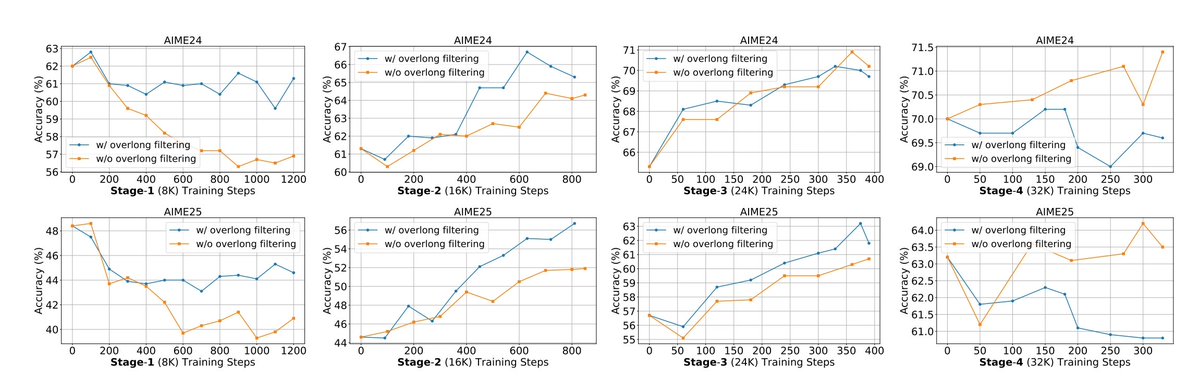

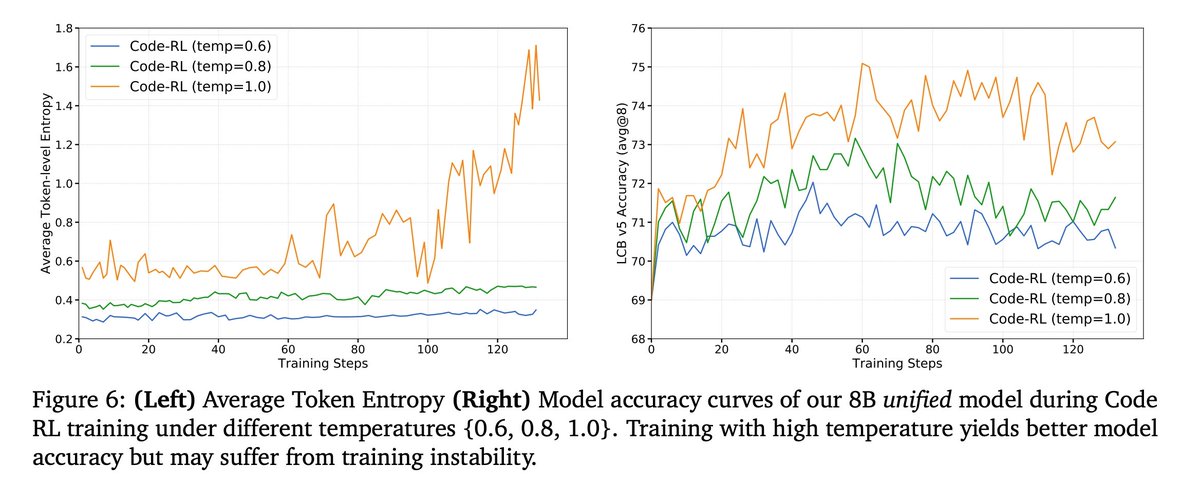

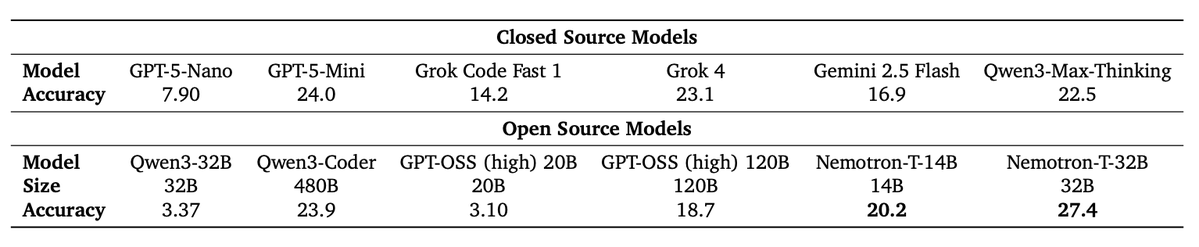

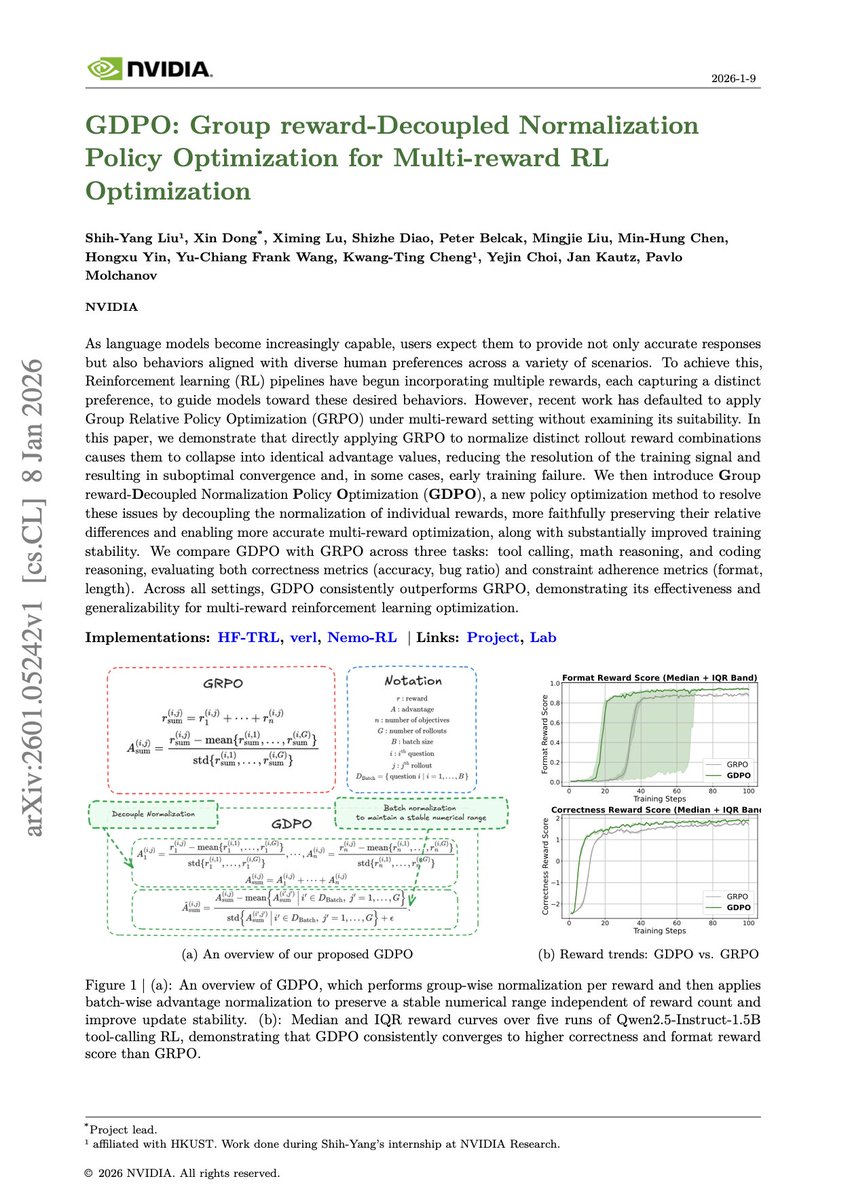

Banger paper from NVIDIA.

Training general-purpose reasoning models with RL is complicated. Different domains have wildly different response lengths and verification times. Math uses fast symbolic verification. Code requires slow execution-based verification. Alignment needs reward model scores. Blending all these heterogeneous prompts together makes the infrastructure complex, slows training, and makes hyperparameter tuning difficult.

This new research introduces Cascade RL, a framework that trains models sequentially across domains rather than mixing everything together. First RLHF for alignment, then instruction-following RL, then math RL, then code RL, then software engineering RL.

This sequential approach is resistant to catastrophic forgetting. In RL, the model generates its own experience, so old behaviors remain if they stay reward-relevant. Unlike supervised learning, where previous data disappears, RL optimizes cumulative reward rather than fitting exact targets.

RLHF, as a pre-step, actually boosts reasoning ability far beyond mere preference optimization by reducing verbosity and repetition. Subsequent domain-specific RL stages rarely degrade earlier performance and may even improve it.

Here are the results:

Their 14B model outperforms its own SFT teacher, DeepSeek-R1-0528 (671B), on LiveCodeBench v5/v6/Pro. Nemotron-Cascade-8B achieves 71.1% on LiveCodeBench v6, comparable to DeepSeek-R1-0528 at 73.3% despite being 84x smaller. The 14B model achieved silver medal performance at IOI 2025.

They also demonstrate that unified reasoning models can operate effectively in both thinking and non-thinking modes, closing the gap with dedicated thinking models while keeping everything in a single model.

Paper: arxiv.org/abs/2512.13607

Learn to build effective AI Agents in our academy: dair-ai.thinkific.com

🚀 Introducing Nemotron-Cascade! 🚀

We’re thrilled to release Nemotron-Cascade, a family of general-purpose reasoning models trained with cascaded, domain-wise reinforcement learning (Cascade RL), delivering best-in-class performance across a wide range of benchmarks.

💻 Coding powerhouse
After RL, our 14B model:
• Surpasses DeepSeek-R1-0528 (671B) on LiveCodeBench v5/v6/Pro.
• Achieves silver-medal performance at IOI 2025 🥈.
• Reaches a 43.1% pass@1 on SWE-Bench Verified, and 53.8% with test-time scaling.

🧠 What is Cascade RL?
Instead of mixing heterogeneous prompts across domains, Cascade RL trains sequentially, domain by domain, which reduces engineering complexity, mitigates heterogeneous verification latencies, and enables domain-specific curricula and tailored hyperparameter tuning.

✨ Key insight
Using RLHF for alignment as a pre-step dramatically boosts complex reasoning, far beyond preference optimization. Subsequent domain-wise RLVR stages rarely hurt the benchmark performance attained in earlier domains and may even improve it, as illustrated in the following figure.

🤗 Models & training data 🔥
👉 huggingface.co/collections/nv…

📄 Technical report with detailed training and data recipes
👉 arxiv.org/pdf/2512.13607
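The domain-by-domain schedule described in the two Cascade RL posts can be pictured as a simple loop over stages, each with its own reward source and budget. The sketch below is a toy illustration of that control flow only, not the released training code: the `Stage` class, `train_stage`, the step counts, and the verifier labels for instruction following and software engineering are all hypothetical. The stage order and the math, code, and alignment verifier types are taken from the posts.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Stage:
    name: str      # domain trained in this stage
    verifier: str  # how rewards are produced (label only, for illustration)
    steps: int     # hypothetical per-stage budget, not from the paper


# Stage order as listed in the post; step counts are made-up placeholders.
# Verifier labels for instruction following and SWE are assumptions.
CASCADE: List[Stage] = [
    Stage("rlhf", "reward_model", 100),
    Stage("instruction_following", "rule_check", 50),
    Stage("math", "symbolic", 200),
    Stage("code", "execution", 200),
    Stage("swe", "execution", 100),
]


def train_stage(policy: Dict[str, int], stage: Stage) -> Dict[str, int]:
    """Toy stand-in for one domain's RL loop: records steps spent per domain."""
    updated = dict(policy)  # each stage starts from the previous stage's policy
    updated[stage.name] = updated.get(stage.name, 0) + stage.steps
    return updated


def cascade_rl(
    policy: Dict[str, int], stages: List[Stage]
) -> Tuple[Dict[str, int], List[str]]:
    """Run the stages strictly one after another, never mixing domains."""
    order: List[str] = []
    for stage in stages:
        policy = train_stage(policy, stage)
        order.append(stage.name)
    return policy, order


final_policy, order = cascade_rl({}, CASCADE)
```

Because only one domain is active at a time, each stage can use its own verifier latency profile and hyperparameters, which is the engineering benefit the announcement highlights.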