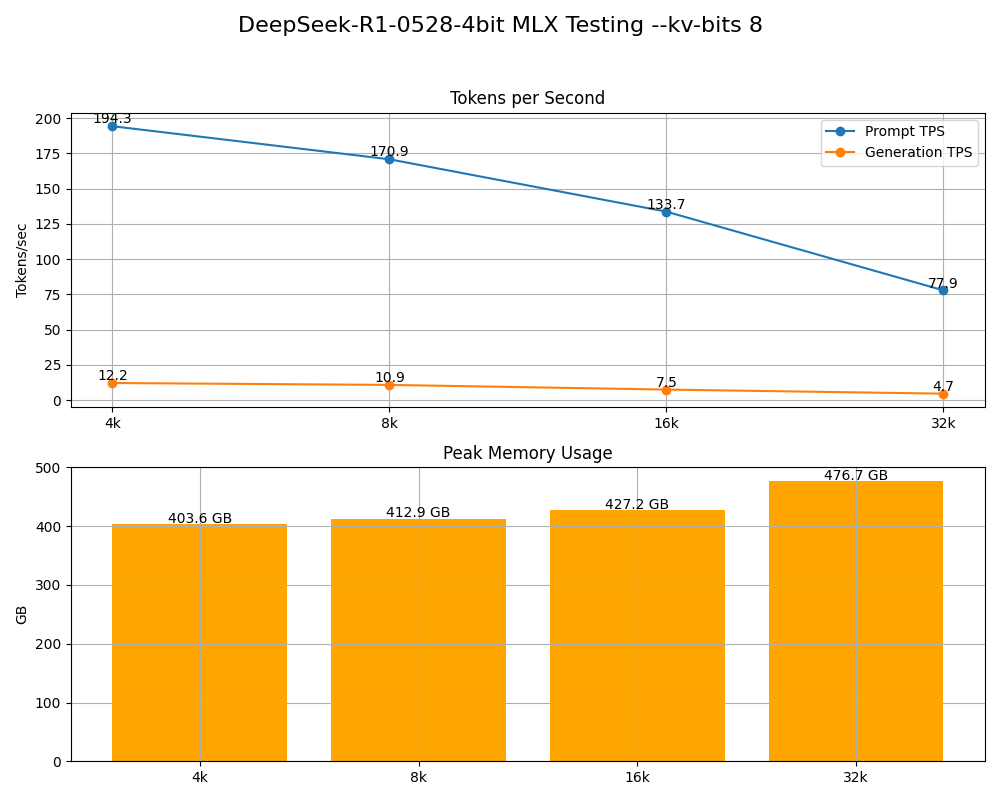

Welcome to Prompt Injection

Yes, the AI hype is real. Models are getting faster, sharper, stranger. Entire workflows are disappearing overnight. AGI is no longer a joke - it’s a visible direction of travel. Some of what’s happening today would’ve looked like CGI fantasy five years ago. But that doesn’t make the noise true [...]