Andreas Borg

3.1K posts

@_andreasborg

Adjunct Professor @NYUTandon, Coder, Founder CURE5 #CDKL5 #CRISPR OZU

Not long ago, we thought this might be impossible. But here we are: our Gaussian Splatting videos are now streamable, just like regular video. No download, no app. 4DGS plays instantly in the browser on headsets, phones and laptops, with no limits on splats or length. Give it a try: store.gracia.ai

Probably the most current look at Palantir’s Maven Smart System software. Here’s the DoW’s Chief AI Officer showing how it works:

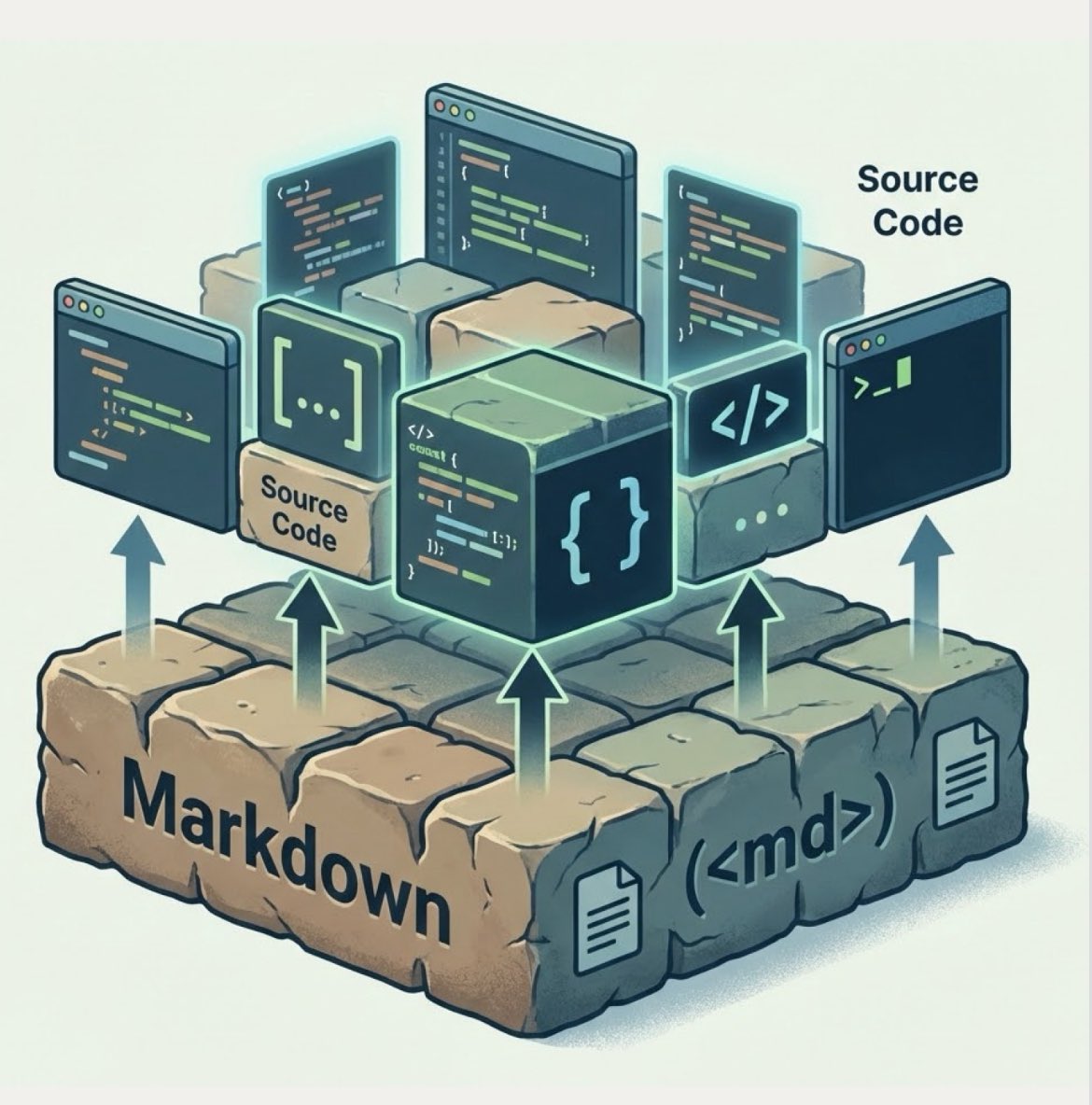

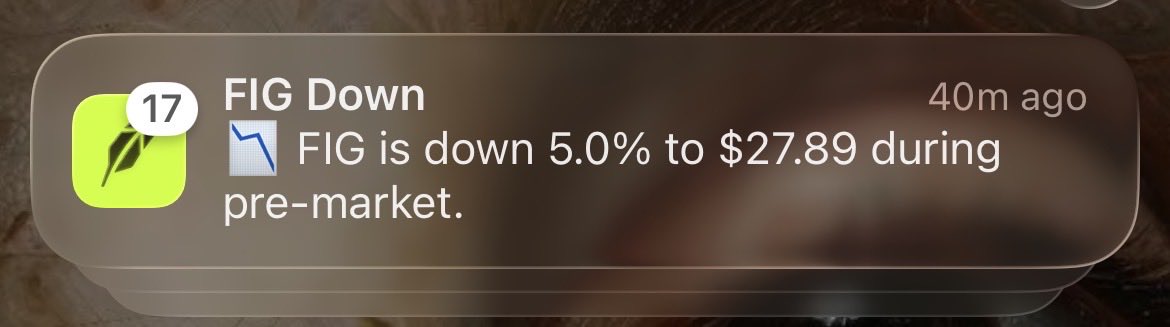

Figma shipped a silent patch specifically to kill figma-use — my open-source tool that did what they wouldn't: an MCP server that creates and modifies designs, JSX export, design linting. Then they scrambled to catch up with their own MCP server. So I spent the weekend recreating @Figma from scratch. OpenPencil: reads and writes .fig files, AI chat with full design tools, P2P collaboration with zero servers, ~7 MB app. No account, no subscription. Three days, one developer, MIT license. openpencil.dev

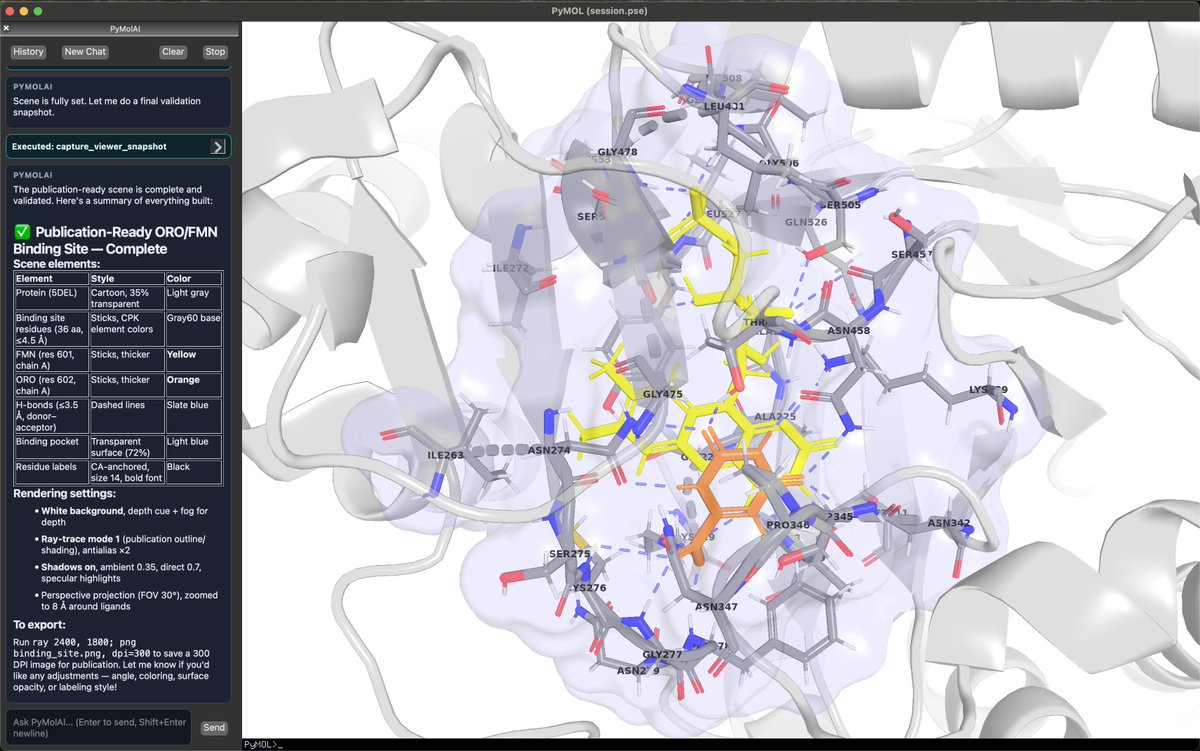

I am Open-Sourcing PyMolAI! Meet PyMolAI, an AI agent that can talk to your protein structures. Built on top of PyMOL, PyMolAI lets you interact with your structures in plain language.

Whether you're:

- Analyzing protein structures
- Aligning complexes
- Creating publication-ready figures
- Or running design workflows

PyMolAI interprets your request, executes the necessary PyMOL commands, and manages the workflow for you. It integrates with @OpenBioAI APIs, giving you access to tools like Boltz, ProteinMPNN, and BoltzGen, directly from your PyMOL session. It has local chat history with session syncing, so you can pick up exactly where you left off.

Introducing ZUNA, a 380M-parameter BCI foundation model for EEG data, a significant milestone in the development of noninvasive thought-to-text. Fully open source, Apache 2.0.

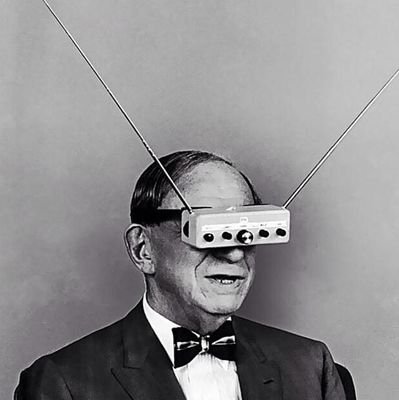

Professor Judea Pearl — the pioneer who invented causal reasoning in AI — says scaling won't save us. "Mathematical limitations that are not crossable by scaling up." The brutal truth: LLMs aren’t learning how the world works. They are learning how we describe the world. This resonates with most biologists: Drug discovery is hitting the same wall. We have mountains of genomic data, but most AI models just find patterns in published papers — not in the raw biology itself. They're learning what scientists think causes disease, not what actually does. Pearl's causal revolution? That's how we move from "this gene correlates with cancer" to "this gene causes cancer" — and finally design drugs that work. Until then, we're building very expensive parrots.

Today is my last day at Anthropic. I resigned. Here is the letter I shared with my colleagues, explaining my decision.