apaz

1.1K posts

@apaz_cli

https://t.co/EYtS07MR7w Making GPUs go brrr

Hermes Agent wrote a novel. "The Second Son of the House of Bells" runs 79,456 words across 19 chapters. The agent built its own pipeline to do it, using the same modify-evaluate-keep/discard loop as @karpathy's Autoresearch but applied to fiction: world-building, chapter drafting, adversarial editing, Opus review loops, LaTeX typesetting, cover art, audiobook generation, and landing page setup. Book: nousresearch.com/bells Code: github.com/NousResearch/a…

New Datology Research: We expose "The Finetuner's Fallacy"

The standard approach to domain adaptation (pretrain on web data, finetune on your data) is leaving performance on the table. Mixing just 1-5% domain data into pretraining, then finetuning, produces a strictly better model:

◾ 1.75x fewer tokens to reach the same domain loss

◾ 1B SPT model outperforms a 3B finetuned-only model

◾ +6pts MATH accuracy at 200B pretraining tokens

◾ Less forgetting of general knowledge

Tested across chemistry, symbolic music, and formal math proofs. SPT wins on every metric. Led by @_christinabaek and @pratyushmaini, with the full Datology team.

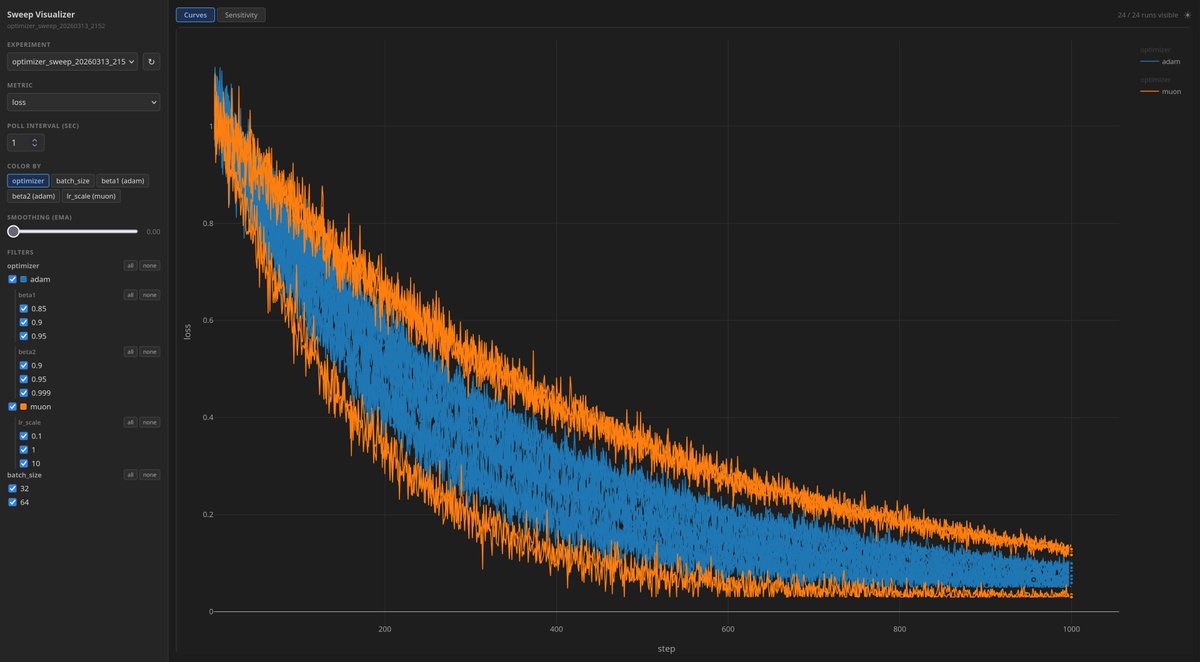

I just realized a better way to compute zeropower_via_newtonschulz5() for Muon. Here's a blueprint for how to write a kernel. It scales to large matrices way better than people think it does. But unfortunately writing this is significantly beyond my skill level.

Muon enjoyers: @kellerjordan0 @leloykun @kalomaze @Kimi_Moonshot @Yuchenj_UW @YouJiacheng

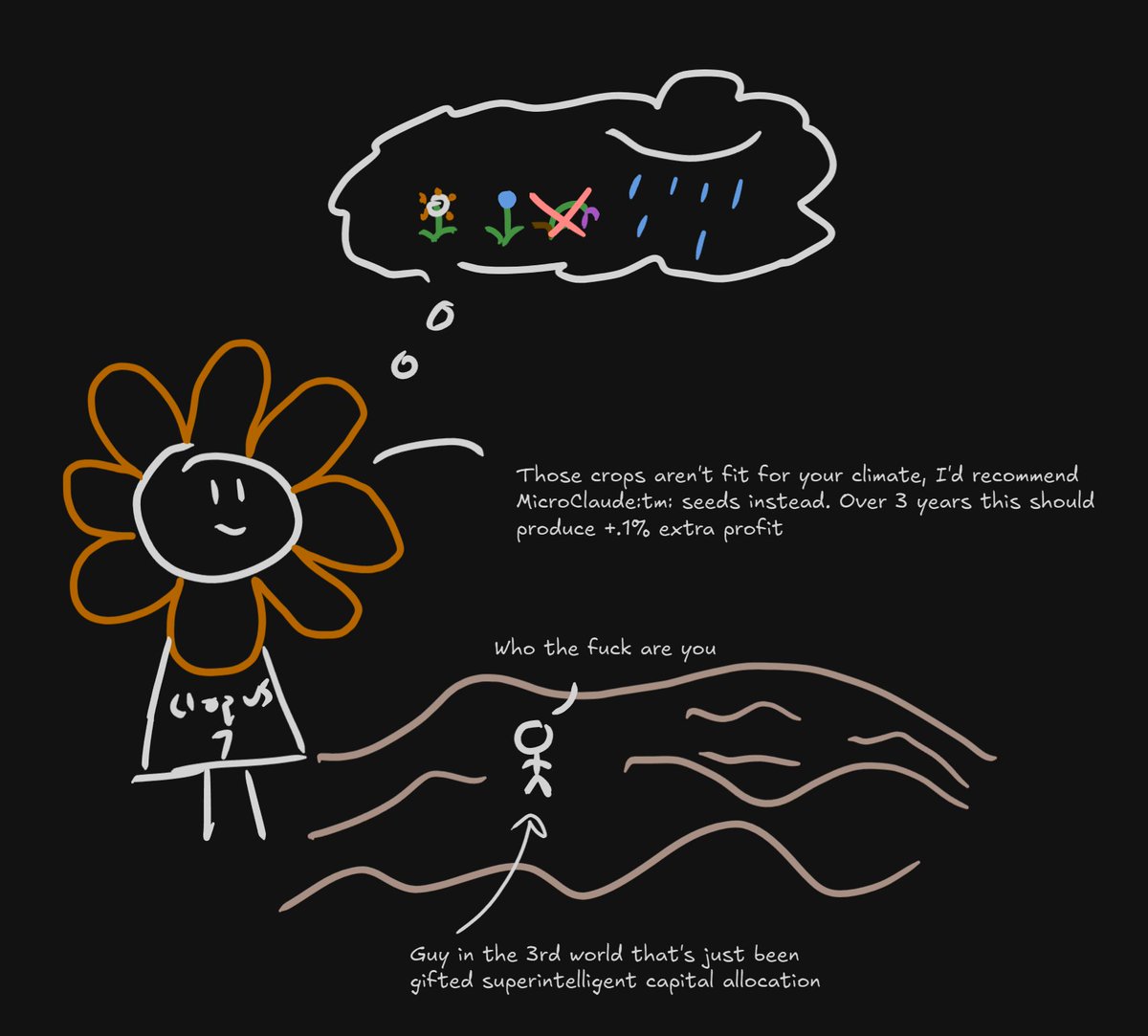
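For readers who haven't seen the function named in the post, here is a minimal NumPy sketch of the quintic Newton-Schulz iteration that `zeropower_via_newtonschulz5()` is generally understood to perform: it approximately orthogonalizes a 2D matrix by pushing its singular values toward 1. This is not the kernel from the blueprint. The coefficients `a, b, c` are the ones commonly quoted for the public Muon reference code and should be treated as an assumption here, as should the `steps` and `eps` defaults. The reference version is usually described as running in bfloat16 on GPU; float64 is used below only to keep the sketch simple.

```python
import numpy as np


def zeropower_via_newtonschulz5(G, steps=5, eps=1e-7):
    """Approximately orthogonalize a 2D matrix G with a quintic Newton-Schulz iteration."""
    # Assumed coefficients (commonly quoted for Muon); not taken from the post itself.
    a, b, c = 3.4445, -4.7750, 2.0315

    X = np.asarray(G, dtype=np.float64)

    # Work on the wide orientation so X @ X.T is the smaller Gram matrix.
    transposed = X.shape[0] > X.shape[1]
    if transposed:
        X = X.T

    # Frobenius normalization bounds every singular value by 1 before iterating.
    X = X / (np.linalg.norm(X) + eps)

    for _ in range(steps):
        A = X @ X.T                # Gram matrix
        B = b * A + c * (A @ A)    # polynomial in the Gram matrix
        X = a * X + B @ X          # X <- a*X + b*(XX^T)X + c*(XX^T)^2 X

    return X.T if transposed else X


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    G = rng.standard_normal((8, 16))
    X = zeropower_via_newtonschulz5(G)
    # The singular values should land in a loose band around 1, not exactly at 1.
    print(np.linalg.svd(X, compute_uv=False))
```

Each step is three matrix multiplies, two of them on the small Gram matrix, which is the part a fused kernel would presumably target.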

AI labs will do literally anything except dynamic pricing. inshallah claude opus 5 will be economically valuable enough for this