apaz

1.2K posts

@apaz_cli

https://t.co/EYtS07MR7w Making GPUs go brrr

'Autoresearch', but for theoretical science? I formalized my blog posts on steepest descent convergence bounds and hyperparameter scaling laws in Lean using Codex. This started as an art project, but I ended up with similar (almost exactly the same) results as prior work under much weaker assumptions, so I think I may be onto something here 👀 This is also a glimpse of what theoretical science might look like in the near future. LLMs are now good at "reverse mathematics": instead of asking what results we can get from a set of assumptions, we ask, "what's the minimal set of assumptions/axioms/postulates we need to reproduce (empirical) results?" Link to repo below vv

People talk, listen, watch, think, and collaborate at the same time, in real time. We've designed an AI that works with people the same way. We share our approach, early results, and a quick look at our model in action. thinkingmachines.ai/blog/interacti…

I've been working a while on what's going to be a three or more part blog series. Here is part one. Part 1 makes an argument that training is training, pretraining data is important for RL, and suggests some experiments. Someone can leapfrog me on these experiments if they wish.

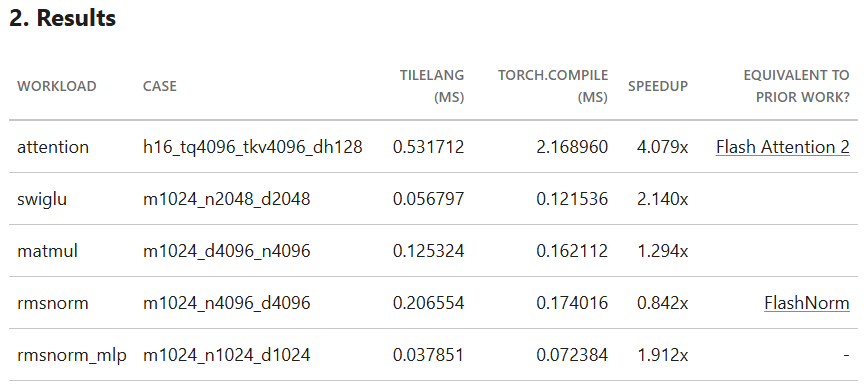

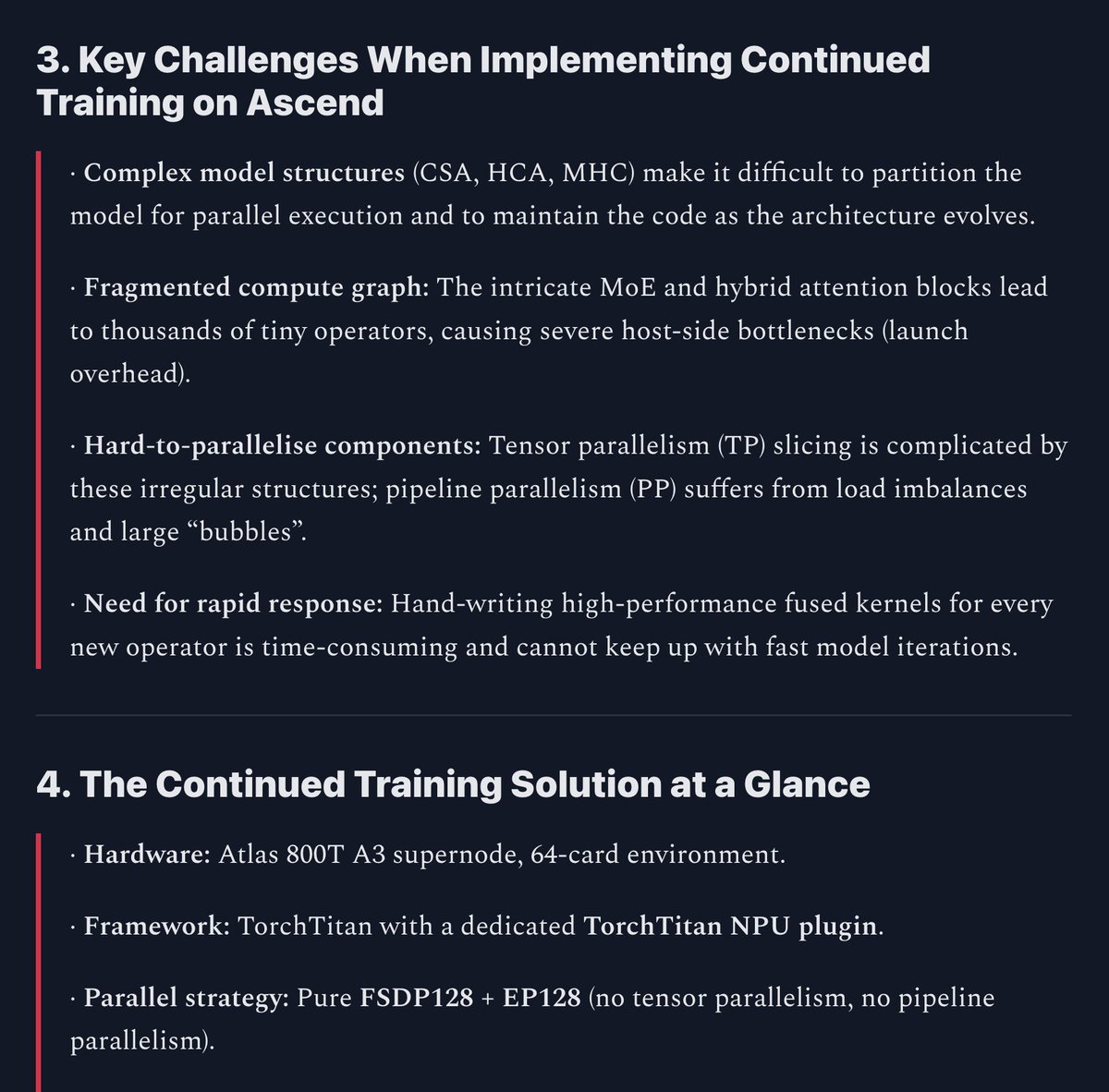

DeepSeekV4 on Ascend: Continued Training Optimization with TorchTitan. Full details here: open.substack.com/pub/chinaresea…

Meta Tribe2 is a model that predicts your brain's response to videos, so now you can edit videos with a clearer sense of how to compose them, what works and what doesn't. We built a free tool for you based on this model. Yes, totally free. See details below.

The DeepSeek-V4-Pro discount has been extended until May 31, 2026, 15:59 UTC!