things are about to get interesting from here on

Derek Deming

@ddeming_

Building something new; engineering and infra; prev: @MSFTResearch | ex PhD (ABD / dropped out)

Just let Opus go for over an hour on a new feature. When it was done, I asked how I can test it. 20 minutes later, it realized I can't test it because it did the whole thing entirely wrong. Idk how you guys use this model every day for real work 🙃

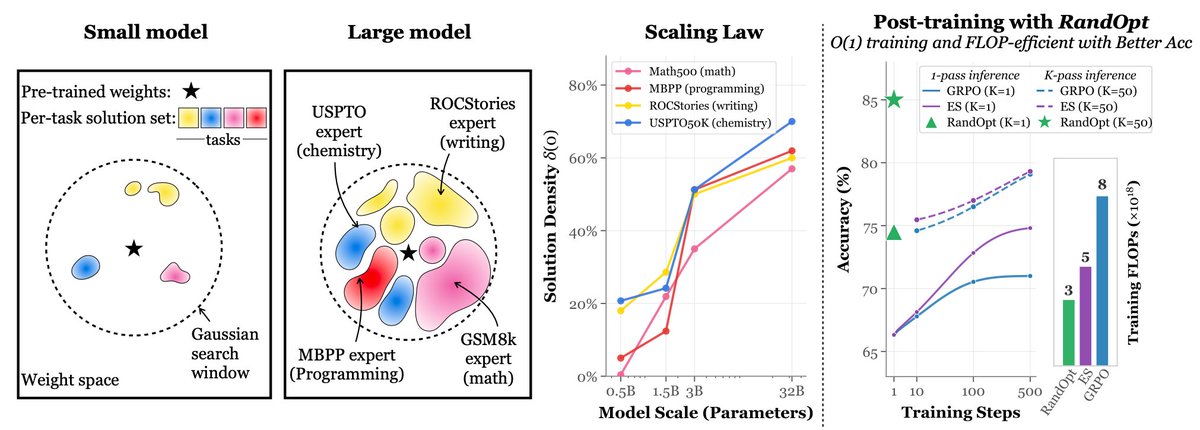

(2/7) 💵 With training costs exceeding $100M for GPT-4, efficient alternatives matter. We show that diffusion LMs unlock a new paradigm for compute-optimal language pre-training.

🚨 BREAKING: A Google researcher and a Turing Award winner just published a paper that exposes the real crisis in AI. It's not training. It's inference. And the hardware we're using was never designed for it.

The paper is by Xiaoyu Ma and David Patterson. Accepted by IEEE Computer, 2026. No hype. No product launch. Just a cold breakdown of why serving LLMs is fundamentally broken at the hardware level.

The core argument is brutal:
→ GPU FLOPS grew 80X from 2012 to 2022
→ Memory bandwidth grew only 17X in that same period
→ HBM costs per GB are going UP, not down
→ The Decode phase is memory-bound, not compute-bound
→ We're building inference on chips designed for training

Here's the wildest part: OpenAI lost roughly $5B on $3.7B in revenue. The bottleneck isn't model quality. It's the cost of serving every single token to every single user. Inference is bleeding these companies dry.

And five trends are making it worse simultaneously:
→ MoE models like DeepSeek-V3, with 256 experts, exploding memory
→ Reasoning models generating massive thought chains before answering
→ Multimodal inputs (image, audio, video) dwarfing text
→ Long-context windows straining KV caches
→ RAG pipelines injecting more context per request

Their four proposed hardware shifts:
→ High Bandwidth Flash: 512GB stacks at HBM-level bandwidth, 10X more memory per node
→ Processing-Near-Memory: logic dies placed next to memory, not on the same chip
→ 3D Memory-Logic Stacking: vertical connections delivering 2-3X lower power than HBM
→ Low-Latency Interconnect: fewer hops, in-network compute, SRAM packet buffers

Companies that tried SRAM-only chips like Cerebras and Groq already failed and had to add DRAM back.

This paper doesn't sell a product. It maps the entire hardware bottleneck and says: the industry is solving the wrong problem.

Paper dropped January 2026. Link in the first comment 👇
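The "decode is memory-bound" claim can be checked with back-of-envelope roofline arithmetic. Below is a minimal sketch under stated assumptions. The accelerator is hypothetical (1 PFLOP/s, 3 TB/s). The model is a hypothetical 70B-class config (80 layers, 8 KV heads, head dim 128). All function names are illustrative and none of these numbers come from the paper. Decode intensity also ignores KV-cache reads and attention FLOPs.

```python
"""Back-of-envelope roofline check for LLM decode.

All hardware and model numbers below are hypothetical, chosen for illustration.
"""
from dataclasses import dataclass


@dataclass
class Accelerator:
    peak_flops: float     # FLOP/s
    mem_bandwidth: float  # bytes/s

    @property
    def ridge_point(self) -> float:
        # Arithmetic intensity (FLOP/byte) above which a kernel becomes compute-bound.
        return self.peak_flops / self.mem_bandwidth


def decode_intensity(batch_size: int, bytes_per_param: float) -> float:
    # One decode step streams every weight from memory once and performs
    # ~2 FLOPs (multiply + add) per weight per sequence in the batch.
    # KV-cache traffic is ignored here; including it only lowers intensity.
    return 2.0 * batch_size / bytes_per_param


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int, bytes_per_elem: int) -> int:
    # Factor of 2: one key vector and one value vector per layer, KV head, and token.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


# If FLOPS grow 80x while bandwidth grows 17x, the ridge point rises ~4.7x,
# so memory-bound workloads fall even further behind peak compute.
RIDGE_GROWTH = 80 / 17

if __name__ == "__main__":
    acc = Accelerator(peak_flops=1e15, mem_bandwidth=3e12)  # hypothetical chip
    for batch in (1, 64):
        ai = decode_intensity(batch, bytes_per_param=2)  # fp16/bf16 weights
        bound = "memory" if ai < acc.ridge_point else "compute"
        print(f"batch={batch}: {ai:.0f} FLOP/B vs ridge {acc.ridge_point:.0f} -> {bound}-bound")
    kv = kv_cache_bytes(80, 8, 128, seq_len=131_072, batch_size=1, bytes_per_elem=2)
    print(f"KV cache for one 128K-token sequence: {kv / 2**30:.0f} GiB")
    print(f"ridge-point growth implied by 80x vs 17x: {RIDGE_GROWTH:.1f}x")
```

With these assumed numbers, even batch 64 reaches only 64 FLOP/byte. That is far below the ~333 FLOP/byte ridge point. A single 128K-token sequence also needs 40 GiB of KV cache. Both results point to bandwidth and capacity, not raw FLOPS, as the limit on decode.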

This Codex issue is now fully resolved and has been stable for the last couple of hours. As you've come to expect, yes, that means we will be resetting rate limits in a bit. Enjoy.

I've been working on a new LLM inference algorithm. It's called Speculative Speculative Decoding (SSD) and it's up to 2x faster than the strongest inference engines in the world. Collab w/ @tri_dao @avnermay. Details in thread.
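The post doesn't describe how SSD works. For orientation, here is a minimal sketch of plain greedy speculative decoding, the standard technique SSD's name builds on. It is explicitly not SSD itself. It assumes toy deterministic models exposed as next-token functions, and every name here (`NextToken`, `toy_target`, `toy_draft`) is hypothetical.

```python
"""Greedy speculative decoding with toy models (NOT the SSD algorithm itself)."""
from typing import Callable, List

# Hypothetical interface: a model maps a token context to its greedy next token.
NextToken = Callable[[List[int]], int]


def greedy_decode(model: NextToken, prompt: List[int], n_new: int) -> List[int]:
    tokens = list(prompt)
    for _ in range(n_new):
        tokens.append(model(tokens))
    return tokens


def speculative_decode(target: NextToken, draft: NextToken,
                       prompt: List[int], n_new: int, k: int = 4) -> List[int]:
    tokens = list(prompt)
    goal = len(prompt) + n_new
    while len(tokens) < goal:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(tokens + proposal))
        # 2. The target checks every proposed position. A real engine scores all
        #    k positions in ONE batched forward pass; we loop here for clarity.
        for i, tok in enumerate(proposal):
            expected = target(tokens + proposal[:i])
            if expected != tok:
                # First mismatch: keep the accepted prefix plus the target's own token.
                tokens += proposal[:i] + [expected]
                break
        else:
            # Every draft token matched, so the target's next token is a free bonus.
            tokens += proposal
            tokens.append(target(tokens))
    # A round can overshoot by up to k tokens; trim to the requested length.
    return tokens[:goal]


def toy_target(ctx: List[int]) -> int:
    return (sum(ctx) * 31 + len(ctx)) % 50


def toy_draft(ctx: List[int]) -> int:
    # Toy stand-in: agrees with the target except (usually) at every third position.
    return toy_target(ctx) if len(ctx) % 3 else 0
```

For example, `speculative_decode(toy_target, toy_draft, [1, 2, 3], 20)` returns the same tokens as `greedy_decode(toy_target, [1, 2, 3], 20)`. That equality is what makes this greedy form lossless. The speedup comes from the target verifying several draft tokens per forward pass instead of generating them one at a time.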

ByteDance just published something I've been waiting for someone to build: CUDA Agent! It trained a model that writes fast CUDA kernels. Not just correct ones, but actually optimized ones.

It beats torch.compile by 2× on simple/medium kernels, ~92% on complex ones, and even outperforms Claude Opus 4.5 and Gemini 3 Pro by ~40% on the hardest setting.

The key idea is simple but kind of brilliant: CUDA performance isn't about correctness; it's about hardware. Warps, memory bandwidth, bank conflicts: the stuff you only see in a profiler. So instead of rewarding "did it compile?", they reward actual GPU speed. Real profiling numbers. RL trained directly on performance. That's a big shift.

Paper: arxiv.org/abs/2602.24286
Project: cuda-agent.github.io
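The post says only that the reward is "actual GPU speed" from real profiling numbers. Below is a minimal hypothetical sketch of a correctness-gated, speed-based reward. Several pieces are assumptions, not taken from the paper:

- the -1 penalty for wrong output;
- the log2 speedup shaping;
- the clamp values;
- every function name.

Plain Python functions stand in for CUDA kernels.

```python
"""Hypothetical correctness-gated, speed-based reward for generated kernels.

CUDA Agent's actual reward is not given in the post; this shape is an assumption.
Plain Python functions stand in for kernels. Timing real GPU kernels needs device
synchronization (e.g. CUDA events) rather than wall-clock time around async launches.
"""
import math
import statistics
import time
from typing import Callable, List, Sequence

Kernel = Callable[[Sequence[float]], List[float]]


def median_runtime(fn: Kernel, xs: Sequence[float], repeats: int = 20, warmup: int = 3) -> float:
    for _ in range(warmup):  # warm caches before measuring
        fn(xs)
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(xs)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)  # median resists timing outliers


def reward_from_measurements(is_correct: bool, baseline_s: float, candidate_s: float,
                             floor: float = -0.5, cap: float = 3.0) -> float:
    if not is_correct:
        return -1.0  # below `floor`, so any correct kernel outscores a wrong one
    # Guard against zero timings, then compute speedup over the baseline.
    speedup = max(baseline_s, 1e-12) / max(candidate_s, 1e-12)
    # log2 scale: 2x faster -> +1, same speed -> 0; clamp to [floor, cap].
    return max(floor, min(cap, math.log2(speedup)))


def kernel_reward(candidate: Kernel, baseline: Kernel, xs: Sequence[float],
                  rel_tol: float = 1e-6, abs_tol: float = 1e-9) -> float:
    # Gate 1: correctness against the baseline's output.
    expected, got = baseline(xs), candidate(xs)
    correct = len(got) == len(expected) and all(
        math.isclose(g, e, rel_tol=rel_tol, abs_tol=abs_tol) for g, e in zip(got, expected))
    if not correct:
        return reward_from_measurements(False, 1.0, 1.0)
    # Gate 2: measured speed relative to the baseline.
    return reward_from_measurements(True, median_runtime(baseline, xs),
                                    median_runtime(candidate, xs))
```

In this sketch, correctness is checked before any timing, so a fast but wrong kernel can never earn a positive reward. The log2 scale makes a 2× speedup worth the same as the step from 2× to 4×. This keeps a single outlier from dominating an RL batch.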

GPT-5.2-high took 26 TIMES LONGER than Claude 4.5 Opus to complete the METR benchmark suite