Trade Whisperer (@TradexWhisperer)

❄️ $MU If you want to understand why HBM will solve the AI inference bottleneck, read this thread.

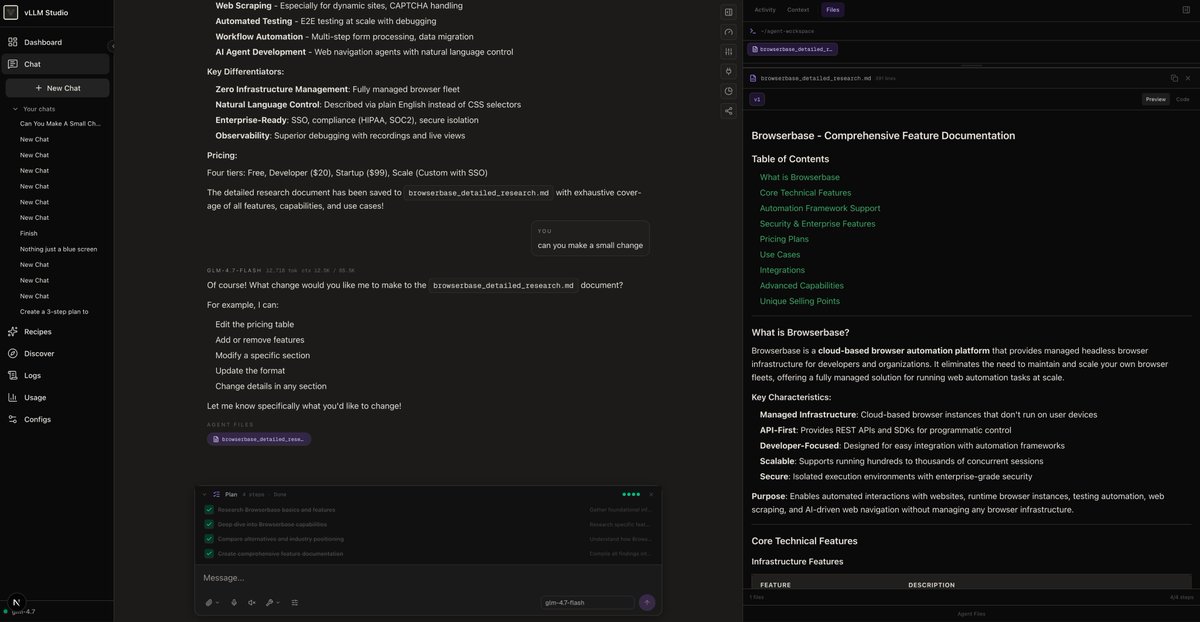

"The working memory of the AI is stored in the HBM. If you have a long conversation with an AI, overtime, that memory, that context memory is going to grow TREMENDOUSLY" -Jensen at CES 2026.

Endless Inference = Endless Memory
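
To put rough numbers on Jensen's point, here is a minimal Python sketch of how the context memory (the KV cache) scales with conversation length. The model shape used here (80 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example and not any specific product, and the function name is made up for illustration.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Approximate KV cache size for one conversation.

    The leading 2 accounts for storing one key and one value vector
    per token, per layer, per KV head.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_tokens * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

for tokens in (1_000, 32_000, 128_000):
    gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
    print(f"{tokens:>7} tokens -> {gib:.2f} GiB of KV cache")
```

Under these assumed numbers the cache grows linearly with context, reaching roughly 39 GiB for a single 128,000-token conversation, before counting the model weights or any other users.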

Inference is becoming a memory-bound challenge, not just a compute one. The explosive growth of AI inference will drive a structural shift in the memory industry, particularly for leaders like Micron.

High Bandwidth Memory (HBM) sits in the critical path for overcoming inference bottlenecks for billions of users worldwide. It will also flatten the DRAM market's historically volatile supply-and-demand cycles, ushering in a prolonged period of durable fundamentals with sustained high pricing and profitability.

Let me start with a quote from 1996. Yes, three decades ago.

“It’s the Memory, Stupid!” — Richard Sites

In 1996, computer architecture pioneer and co-architect of the DEC Alpha, Richard Sites, famously declared, “It’s the Memory, Stupid!” in a seminal paper. He emphasized that memory hierarchies, not just raw processing power, were the true bottleneck in computing performance. Three decades later, his words resonate more strongly than ever in the age of AI.

As AI models grow larger and more complex, the focus has shifted from training these massive systems to deploying them efficiently through inference: the process of generating predictions, recommendations, or responses in the real world. Every ChatGPT query? That's an inference call.

During inference, models must rapidly access enormous amounts of data from memory to produce outputs. Traditional memory solutions often cannot deliver the required bandwidth, causing processors to idle while waiting for data. This is the classic “memory wall” problem Sites warned about.

In essence, training thrives on brute-force GPU compute due to its high arithmetic intensity, while inference relies heavily on high-bandwidth memory (HBM) to keep data flowing fast enough to fully utilize that compute.
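
A back-of-the-envelope roofline check makes this concrete. The sketch below is a simplification under stated assumptions: every figure (a 70-billion-parameter model in 16-bit weights, 3 TB/s of memory bandwidth, 1 PFLOP/s of peak compute) is hypothetical, and it assumes single-stream decoding where every generated token streams all weights from memory once.

```python
def is_memory_bound(flops, bytes_moved, peak_flops_per_s, mem_bandwidth_bytes_per_s):
    """Roofline test: a workload is memory-bound when its arithmetic
    intensity (FLOPs per byte moved) is below the machine balance
    (peak FLOP/s divided by memory bandwidth)."""
    arithmetic_intensity = flops / bytes_moved
    machine_balance = peak_flops_per_s / mem_bandwidth_bytes_per_s
    return arithmetic_intensity < machine_balance


def decode_tokens_per_second(weight_bytes, kv_cache_bytes, mem_bandwidth_bytes_per_s):
    """Upper bound on single-stream decode speed, assuming each new token
    reads all weights plus the KV cache from memory exactly once."""
    return mem_bandwidth_bytes_per_s / (weight_bytes + kv_cache_bytes)


# Hypothetical figures, for illustration only.
PARAMS = 70e9                 # model parameters
WEIGHT_BYTES = PARAMS * 2     # 16-bit weights
FLOPS_PER_TOKEN = 2 * PARAMS  # about one multiply-add per parameter
BANDWIDTH = 3e12              # 3 TB/s of memory bandwidth (assumed)
PEAK_FLOPS = 1e15             # 1 PFLOP/s of peak compute (assumed)

print(is_memory_bound(FLOPS_PER_TOKEN, WEIGHT_BYTES, PEAK_FLOPS, BANDWIDTH))
print(round(decode_tokens_per_second(WEIGHT_BYTES, 0, BANDWIDTH), 2), "tokens/s ceiling")
```

With these assumed numbers, decoding does about 1 FLOP per byte moved while the chip could sustain hundreds, so the ceiling on tokens per second is set by memory bandwidth and not by compute. Batching many users raises the arithmetic intensity, but each user's KV cache still has to be read from memory.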

HBM breaks through the memory wall by offering massive throughput, often in the terabytes-per-second range, over a very wide interface. This specialized DRAM is built as a 3D stack of memory dies, connected by through-silicon vias (TSVs), and packaged right next to processors like $NVDA / $AMD GPUs or Google's TPUs. The TSVs act like high-speed elevators in a vertical “apartment building” of memory dies, shortening the data path, maximizing bandwidth, and reducing power per bit moved: perfect for AI workloads.

Over the next decade, AI inference is set to explode as younger generations integrate AI deeply into daily life. Projections estimate the AI inference market reaching $250–520 billion by 2030–2034, with inference compute demand growing at over 35% CAGR in the coming years, outpacing training.

By 2030, inference is expected to account for over half of AI data center workloads, dominating even more in the 2030s as billions of people and devices rely on AI daily.

HBM production is DRAM-intensive and diverts significant resources from consumer markets, contributing to the dramatic DRAM price surges we have seen recently.

Producing 1GB of HBM consumes roughly 3 times more wafer capacity (the raw silicon starting material) than 1GB of standard DDR5 DRAM.

Yields are lower due to the complexity of stacking and interconnects, requiring even more wafers for usable output.

Result: Even though HBM represents only a fraction of total DRAM bits shipped, it consumes a disproportionate share of production resources.

It is a zero-sum game. Every wafer used for HBM is one not used for regular DDR5 or LPDDR5X.
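
The wafer trade-off above can be sketched in a few lines of Python. The 3x ratio comes from this thread; the 20% HBM wafer share is a hypothetical input, the function names are made up for illustration, and any extra yield loss is assumed to be folded into the ratio.

```python
def relative_bit_output(hbm_wafer_share, trade_ratio=3.0):
    """Total DRAM bits produced, relative to a fab running 100% standard DRAM.

    hbm_wafer_share: fraction of wafers allocated to HBM (0.0 to 1.0).
    trade_ratio: wafers needed per GB of HBM versus per GB of standard DRAM.
    """
    if not 0.0 <= hbm_wafer_share <= 1.0:
        raise ValueError("hbm_wafer_share must be between 0 and 1")
    standard_bits = 1.0 - hbm_wafer_share
    hbm_bits = hbm_wafer_share / trade_ratio
    return standard_bits + hbm_bits


def hbm_bit_share(hbm_wafer_share, trade_ratio=3.0):
    """Fraction of all shipped bits that are HBM for a given wafer split."""
    hbm_bits = hbm_wafer_share / trade_ratio
    return hbm_bits / relative_bit_output(hbm_wafer_share, trade_ratio)


share = 0.20  # hypothetical: 20% of wafers go to HBM
print(f"Total bit output vs. all-standard baseline: {relative_bit_output(share):.1%}")
print(f"HBM share of bits shipped: {hbm_bit_share(share):.1%}")
```

In this hypothetical split, HBM takes 20% of the wafers but ends up as under 8% of the bits shipped, and total bit output falls by about 13% compared with running only standard DRAM.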

Total DRAM supply growth remains limited (around 10–16% YoY in 2026), while demand surges 30–35%+, creating a severe imbalance. New fabs and capacity expansions are underway, but meaningful relief likely will not arrive until 2027–2028.

Micron has sold out its entire 2026 HBM capacity (including industry-leading HBM4), confirming that this AI-driven demand will be sustained for the foreseeable future.

Long-term forecasts remain uncertain as AI is still in its early stages. Physical AI has yet to see a significant breakthrough, and the market is only beginning to understand the long-term dynamics of AI and HBM.

What's for sure is that the AI Inference Winter Is Coming. Billions of people will be asking ChatGPT and Grok questions, and we need $MU HBM for AI to proliferate.

HODL the Shares.