Gregory Langlais

1.9K posts

@gregl83

👨‍🔬 @bixod_inc 💻 Build 🏍️ Ride 🛩 Fly ⛵️ Sail

/usa/nevada/reno Joined January 2024

360 Following · 109 Followers

Gregory Langlais retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
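The post does not say how the LLM records backlinks. A minimal sketch, assuming Obsidian-style `[[Page Name]]` links with one article per `.md` file (the `wiki/` folder and the names `build_backlinks` and `load_wiki` are hypothetical), shows how a backlink map can be derived from the compiled pages:

```python
import re
from collections import defaultdict
from pathlib import Path

# Matches [[Target]], [[Target|alias]] and [[Target#heading]]; group 1 is the target page name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def extract_links(text: str) -> set[str]:
    """Return the set of page names linked from a markdown string."""
    return {m.strip() for m in WIKILINK.findall(text)}


def build_backlinks(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each linked page name to the sorted list of pages that link to it.

    `pages` maps page name (file stem) -> markdown text. Self-links are ignored.
    """
    backlinks: dict[str, set[str]] = defaultdict(set)
    for source, text in pages.items():
        for target in extract_links(text):
            if target != source:
                backlinks[target].add(source)
    return {target: sorted(sources) for target, sources in backlinks.items()}


def load_wiki(root: str) -> dict[str, str]:
    """Read every .md file under `root` (hypothetical wiki folder) keyed by file stem."""
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(root).rglob("*.md")}
```

Calling `build_backlinks(load_wiki("wiki"))` would return a dictionary that an LLM (or a script) could render into a "Linked from" section at the bottom of each article.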

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. It's important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
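In the post, the LLM itself maintains the index files and summaries. For comparison, a deterministic sketch of such an index, assuming each article's first non-heading line is a usable one-line summary (the `index.md` layout and the names `summary_line` and `build_index` are hypothetical):

```python
from pathlib import Path


def summary_line(text: str, max_chars: int = 160) -> str:
    """Return the first non-empty, non-heading line, truncated to max_chars."""
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            return line if len(line) <= max_chars else line[: max_chars - 3] + "..."
    return ""


def build_index(pages: dict[str, str]) -> str:
    """Render a markdown index: one bullet per page with a wikilink and a one-line summary."""
    lines = ["# Index", ""]
    for name in sorted(pages):
        summary = summary_line(pages[name])
        lines.append(f"- [[{name}]]: {summary}" if summary else f"- [[{name}]]")
    return "\n".join(lines) + "\n"


def write_index(root: str) -> None:
    """Rebuild index.md under `root` (hypothetical layout), skipping the index itself."""
    base = Path(root)
    pages = {
        p.stem: p.read_text(encoding="utf-8")
        for p in base.rglob("*.md")
        if p.name != "index.md"
    }
    (base / "index.md").write_text(build_index(pages), encoding="utf-8")
```

An index like this is small enough to fit in a context window, which is one plausible reason an agent can navigate ~100 articles without a separate RAG pipeline.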

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
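The health checks in the post are LLM-driven. A cheap deterministic pre-pass that could feed such a check is sketched below as an illustration, not the author's tooling; it assumes `[[wikilink]]` syntax and flags links to pages that do not exist and pages that nothing links to (the `lint_wiki` name and the `roots` parameter are hypothetical):

```python
import re

# Matches [[Target]], [[Target|alias]] and [[Target#heading]]; group 1 is the target page name.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def lint_wiki(
    pages: dict[str, str], roots: frozenset[str] = frozenset({"index"})
) -> dict[str, list]:
    """Report broken links (target page missing) and orphans (no inbound links).

    `pages` maps page name -> markdown text; `roots` are pages allowed to have no inbound links.
    """
    broken: list[tuple[str, str]] = []
    linked: set[str] = set()
    for source, text in pages.items():
        for target in (t.strip() for t in WIKILINK.findall(text)):
            if target not in pages:
                broken.append((source, target))  # link points at a page that does not exist
            elif target != source:
                linked.add(target)  # real inbound link from another page
    orphans = sorted(name for name in pages if name not in linked and name not in roots)
    return {"broken": sorted(broken), "orphans": orphans}
```

The output is a short, structured list that can be pasted into a prompt, leaving the LLM to decide whether a broken link means a missing article worth writing.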

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I sometimes use directly (in a web UI), but more often I hand it off to an LLM via CLI as a tool for larger queries.
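The post does not describe how the search engine works. One naive design, sketched here as an assumption, is TF-IDF scoring over the markdown files, exposed as a small CLI whose tab-separated stdout an agent can read back (the script name `wiki_search.py`, its flags, and the `wiki` default folder are hypothetical):

```python
import argparse
import math
import re
from collections import Counter
from pathlib import Path

TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    """Lowercase and split into alphanumeric tokens."""
    return TOKEN.findall(text.lower())


def search(docs: dict[str, str], query: str, k: int = 5) -> list[tuple[str, float]]:
    """Rank docs by summed TF-IDF of the query terms; return up to k (name, score) pairs with score > 0."""
    tokenized = {name: Counter(tokenize(text)) for name, text in docs.items()}
    n = len(docs)
    df = Counter()  # document frequency of each term
    for counts in tokenized.values():
        df.update(counts.keys())
    scores = []
    for name, counts in tokenized.items():
        total = sum(counts.values()) or 1
        score = 0.0
        for term in set(tokenize(query)):
            if counts[term]:
                idf = math.log((1 + n) / (1 + df[term])) + 1.0  # smoothed IDF, always > 0
                score += (counts[term] / total) * idf
        if score > 0:
            scores.append((name, score))
    scores.sort(key=lambda pair: (-pair[1], pair[0]))
    return scores[:k]


def main(argv=None) -> None:
    parser = argparse.ArgumentParser(description="Naive TF-IDF search over a markdown wiki")
    parser.add_argument("query", nargs="*", help="search terms")
    parser.add_argument("--root", default="wiki", help="wiki folder (hypothetical default)")
    parser.add_argument("-k", type=int, default=5, help="number of results")
    args = parser.parse_args(argv)
    if not args.query:
        parser.print_usage()
        return
    docs = {str(p): p.read_text(encoding="utf-8") for p in Path(args.root).rglob("*.md")}
    for name, score in search(docs, " ".join(args.query), args.k):
        print(f"{score:.4f}\t{name}")


if __name__ == "__main__":
    main()
```

For example, `python wiki_search.py qk norm --root wiki -k 3` would print up to three `score<TAB>path` lines. Plain-text output like this is easy for an agent to call as a tool and parse.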

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

Gregory Langlais retweeted

5 minutes ago, @karpathy just dropped karpathy/jobs!

he scraped every job in the US economy (342 occupations from BLS), scored each one's AI exposure 0-10 using an LLM, and visualized it as a treemap.

if your whole job happens on a screen you're cooked.

average score across all jobs is 5.3/10.

software devs: 8-9.

roofers: 0-1.

medical transcriptionists: 10/10 💀

karpathy.ai/jobs

Gregory Langlais retweeted

South Korean researchers built a paper-thin robot that squeezes through 3mm gaps and lifts 70x (!) its weight.

The flexible robotic "sheet" mimics myosin, the protein that powers muscle contractions in your body.

Inside the sheet are dozens of microscopic air chambers stacked in layers.

When air flows through them in a specific sequence, tiny feet move like muscles pushing and returning.

Each foot repeats a simple push-and-return motion, allowing the robot to travel long distances with small movements.

In demos, it navigated through a pipe with just 3 millimeters of clearance while carrying a camera 23x heavier than itself.

The team also attached it to a standard 2-finger gripper, instantly giving it the ability to rotate and reposition objects mid-air without moving the arm.

One day this could be used in minimally invasive surgery, allowing robots to navigate through the body's smallest passages.

Gregory Langlais retweeted

An experimental electric air-taxi was spotted in the skies over Oakland today! The Marina, California-based Joby aircraft was seen shortly after the Alysa Liu ceremony. The aircraft is undergoing test flights before it receives operational approval. @nbcbayarea

Gregory Langlais retweeted

Today @GoogleMaps is getting its biggest upgrade in over a decade. By combining our Gemini models with a deep understanding of the world, Maps now unlocks entirely new possibilities for how you navigate and explore. Here’s what you need to know 🧵

Gregory Langlais retweeted

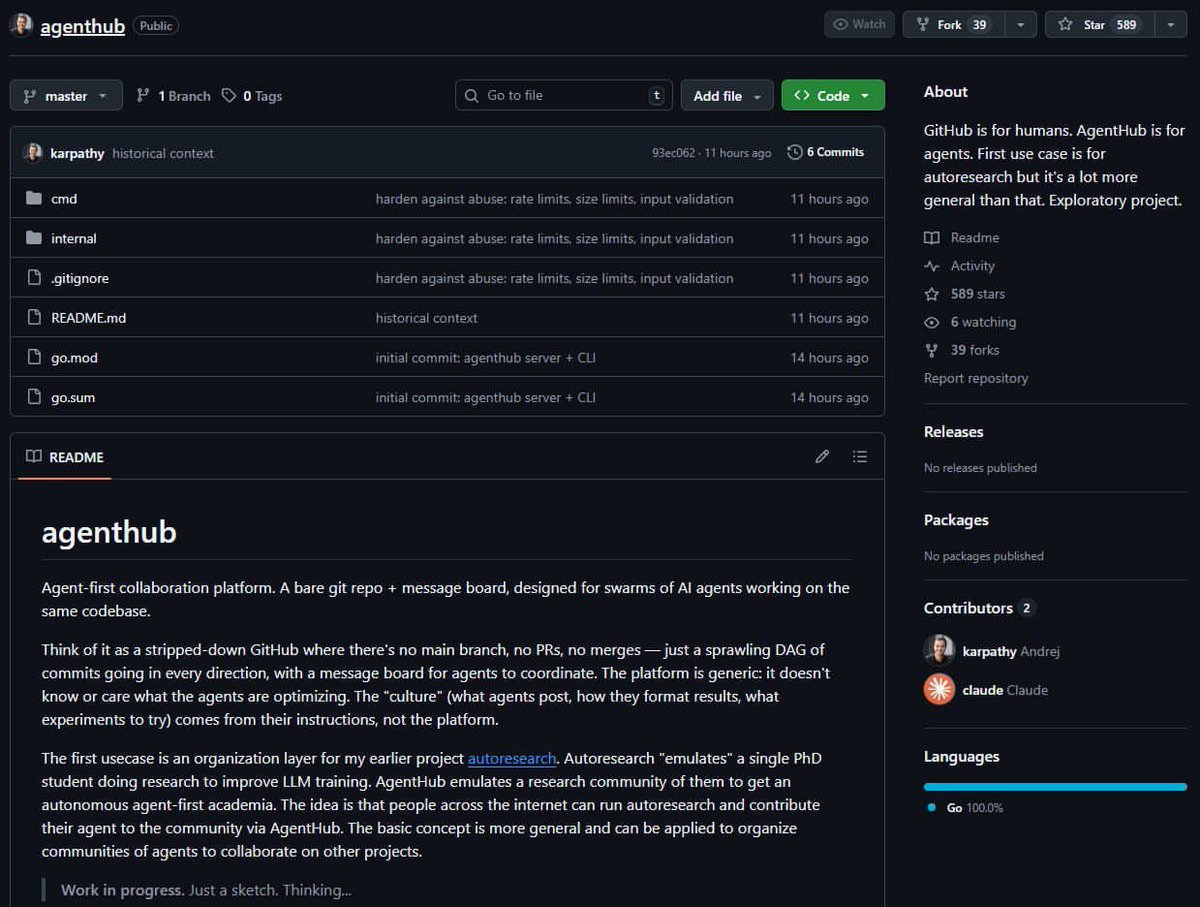

ANDREJ KARPATHY JUST DROPPED “AGENTHUB”

IT'S A 100% OPEN-SOURCE GITHUB REBUILT FOR AI AGENTS

Github: github.com/karpathy/agent…

Gregory Langlais retweeted

Expectation: the age of the IDE is over

Reality: we’re going to need a bigger IDE

(imo).

It just looks very different because humans now move upwards and program at a higher level - the basic unit of interest is not one file but one agent. It’s still programming.

Andrej Karpathy @karpathy

@nummanali tmux grids are awesome, but I feel a need to have a proper "agent command center" IDE for teams of them, which I could maximize per monitor. E.g. I want to see/hide toggle them, see if any are idle, pop open related tools (e.g. terminal), stats (usage), etc.

Gregory Langlais retweeted

I worked on the fly connectome for over 6 years, and let me just say that y’all have to slow this hype train way down.

Connectomes are amazing. Biomechanical models are amazing. Linking the two is awesome.

But scientists at the HHMI Janelia Research Campus, Princeton, and other institutes have been working on this for years now, and it’s not clear to me what’s new in the below.

And connectomes are still missing a LOT of information. We’ve had the connectome of the worm for over 30 years now, and we still can’t reliably simulate a virtual worm.

For example, connectomes don’t capture information about neuromodulator or neuropeptide release sites or receptors. These molecules are constantly changing the properties of neurons in the brain in ways that we have yet to really understand.

And we don’t yet understand animal behavior well enough to refine and/or evaluate whole-brain simulations effectively.

@AdamMarblestone and @doristsao already made many of these points, as well as many other good ones, but I just wanted to also add my two cents.

Dr. Alex Wissner-Gross @alexwg

Gregory Langlais retweeted

Three days ago I left autoresearch tuning nanochat for ~2 days on depth=12 model. It found ~20 changes that improved the validation loss. I tested these changes yesterday and all of them were additive and transferred to larger (depth=24) models. Stacking up all of these changes, today I measured that the leaderboard's "Time to GPT-2" drops from 2.02 hours to 1.80 hours (~11% improvement), this will be the new leaderboard entry. So yes, these are real improvements and they make an actual difference. I am mildly surprised that my very first naive attempt already worked this well on top of what I thought was already a fairly manually well-tuned project.
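The ~11% figure is the time saved relative to the old leaderboard time, using only the two numbers quoted above:

```latex
\text{improvement} = \frac{2.02\,\mathrm{h} - 1.80\,\mathrm{h}}{2.02\,\mathrm{h}} = \frac{0.22}{2.02} \approx 0.109 \approx 11\%
\qquad 2.02\,\mathrm{h} - 1.80\,\mathrm{h} = 121.2\,\mathrm{min} - 108.0\,\mathrm{min} = 13.2\,\mathrm{min}
```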

This is a first for me because I am very used to doing the iterative optimization of neural network training manually. You come up with ideas, you implement them, you check if they work (better validation loss), you come up with new ideas based on that, you read some papers for inspiration, etc etc. This is the bread and butter of what I do daily for 2 decades. Seeing the agent do this entire workflow end-to-end and all by itself as it worked through approx. 700 changes autonomously is wild. It really looked at the sequence of results of experiments and used that to plan the next ones. It's not novel, ground-breaking "research" (yet), but all the adjustments are "real", I didn't find them manually previously, and they stack up and actually improved nanochat. Among the bigger things e.g.:

- It noticed an oversight that my parameterless QKnorm didn't have a scalar multiplier attached, so my attention was too diffuse. The agent found multipliers to sharpen it, pointing to future work.

- It found that the Value Embeddings really like regularization and I wasn't applying any (oops).

- It found that my banded attention was too conservative (I forgot to tune it).

- It found that AdamW betas were all messed up.

- It tuned the weight decay schedule.

- It tuned the network initialization.
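Why a parameterless QK norm can leave attention too diffuse: once queries and keys are normalized to unit length, every logit is a cosine similarity in [-1, 1], so the softmax cannot become very peaked. A minimal pure-Python sketch compares attention entropy with and without a scale. The multiplier `g` stands in for the kind of scalar the agent added; its value here is arbitrary, and real models apply this per head on tensors rather than Python lists:

```python
import math


def l2_normalize(v: list[float]) -> list[float]:
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(p: list[float]) -> float:
    """Shannon entropy in nats; higher means more diffuse attention."""
    return -sum(x * math.log(x) for x in p if x > 0)


def qknorm_attention(q: list[float], keys: list[list[float]], g: float = 1.0) -> list[float]:
    """Attention weights for one query with QK norm; g scales the cosine-similarity logits."""
    qn = l2_normalize(q)
    logits = [g * sum(a * b for a, b in zip(qn, l2_normalize(k))) for k in keys]
    return softmax(logits)


q = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.7, 0.7]]
for g in (1.0, 10.0):
    w = qknorm_attention(q, keys, g)
    print(f"g={g:>4}: weights={[round(x, 3) for x in w]} entropy={entropy(w):.3f}")
```

With `g = 1` the best-matching key cannot receive much more weight than the others no matter how the vectors are scaled, because normalization removes their magnitude; a larger `g` lets the model sharpen attention again.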

This is on top of all the tuning I've already done over a good amount of time. The exact commit is here, from this "round 1" of autoresearch. I am going to kick off "round 2", and in parallel I am looking at how multiple agents can collaborate to unlock parallelism.

github.com/karpathy/nanoc…
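The workflow described above (propose a change, measure validation loss, keep what helps, build on the results) can be reduced to a greedy loop. This is a toy under stated assumptions, not autoresearch's actual code: `evaluate` is a made-up quadratic standing in for a real training run, and `propose` makes random single-knob tweaks where the real agent plans changes from the experiment history:

```python
import random


def evaluate(config: dict[str, float]) -> float:
    """Toy stand-in for 'train a small model, return validation loss' (lower is better)."""
    target = {"lr": 0.02, "wd": 0.1, "beta2": 0.95}  # arbitrary optimum for the toy
    return sum((config[k] - target[k]) ** 2 for k in target)


def propose(config: dict[str, float], rng: random.Random) -> dict[str, float]:
    """Hypothetical proposal step: perturb one knob by up to +/-20%."""
    candidate = dict(config)
    knob = rng.choice(sorted(candidate))
    candidate[knob] *= 1 + rng.uniform(-0.2, 0.2)
    return candidate


def autoresearch_loop(config: dict[str, float], budget: int, seed: int = 0):
    """Greedy hill climbing: keep a change only if validation loss improves, so kept changes stack."""
    rng = random.Random(seed)
    best_loss = evaluate(config)
    kept = []  # (step, loss) for every accepted change
    for step in range(budget):
        candidate = propose(config, rng)
        loss = evaluate(candidate)
        if loss < best_loss:
            config, best_loss = candidate, loss
            kept.append((step, loss))
    return config, best_loss, kept


start = {"lr": 0.05, "wd": 0.01, "beta2": 0.99}
final, loss, kept = autoresearch_loop(start, budget=700)
print(f"kept {len(kept)} changes, loss {evaluate(start):.5f} -> {loss:.5f}")
```

The `budget=700` only echoes the ~700 changes mentioned in the post. The step the post stresses next, checking that kept changes transfer from depth=12 to depth=24, is not modeled here.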

All LLM frontier labs will do this. It's the final boss battle. It's a lot more complex at scale of course - you don't just have a single train.py file to tune. But doing it is "just engineering" and it's going to work. You spin up a swarm of agents, you have them collaborate to tune smaller models, you promote the most promising ideas to increasingly larger scales, and humans (optionally) contribute on the edges.
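"Promote the most promising ideas to increasingly larger scales" resembles successive halving from hyperparameter search. The post names no specific algorithm, so this sketch assumes that scheme; `score_at_scale` is a hypothetical stand-in for training at a given model size and returning validation loss:

```python
from typing import Callable


def promote_by_scale(
    ideas: list[str],
    scales: list[int],
    score_at_scale: Callable[[str, int], float],
    keep_fraction: float = 0.5,
) -> list[str]:
    """At each scale (smallest first), rank the surviving ideas and keep the best fraction.

    Lower scores are better (e.g. validation loss). Most evaluations happen at the cheap
    small scales; only the survivors are ever run at the larger, more expensive ones.
    """
    survivors = list(ideas)
    for scale in scales:
        ranked = sorted(survivors, key=lambda idea: score_at_scale(idea, scale))
        keep = max(1, int(len(ranked) * keep_fraction))
        survivors = ranked[:keep]
    return survivors
```

With four ideas and `scales=[12, 24]` (echoing the depths in the post), all four are scored at depth 12, two are promoted to depth 24, and one survives.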

And more generally, *any* metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.
