S. K.

12.2K posts

@i_hate_intel

I hate Intel corporation.

Oak Ridge, TN · Joined December 2008

298 Following · 97 Followers

Genuine question.

Tech companies are laying off thousands of engineers, and the ones left behind are basically just reviewing AI-generated code.

But what happens in 6 months when the AI stops making mistakes?

If your entire $200k job has been reduced to proofreading Claude's output, what exactly are they paying you for?

@JavierForge @zuess05 Funny because there are more software jobs than ever at this point. Sure, if you make CRUD apps, you're in trouble. But anything with engineering will require expertise for a long while.

@zuess05 Those technical interviews will disappear in a few years.

People have only 2 options nowadays:

* You become a founder and try to build and sell your own thing (easier than ever btw)

* You embrace a blue-collar job.

All the code will be written by AI.

@zuess05 @aiquickbriefs It's not, you're just a high school dropout who hated the fact that people with degrees made so much more than you. Here's a hint: we will continue to do so.

Serious question.

For decades, the standard advice was to "pick a niche" and become a highly paid specialist.

Now Claude has the combined knowledge of every specialist on earth, instantly available for $20 a month.

What exactly are we supposed to tell kids to major in when every technical skill is just a prompt away?

@zuess05 We don't just get knowledge to regurgitate it, we get it to apply it. Claude and other AIs don't do that well yet. Here's a graph of performance for this system. We see a sharp drop-off when we scale. What's the cause? AI doesn't give an answer, it just spits out stock suggestions.

@DjMolehill @kylegawley Yes, you complained. You said AI asking for confirmation is somehow "auto complete." Then I told you how to get around that, but now you're conflating "rm -rf /" with package installs.

You have to explicitly allow the rm command to operate outside of your sandbox, right?

@i_hate_intel @kylegawley i'm not complaining. but the moment you automate the confirmation is the moment you've basically handed the keys to your house to a bot that might decide to rm -rf /

@DjMolehill @kylegawley Well that's why it asks you to confirm the command in the first place, but you complained

@i_hate_intel @kylegawley that's just inviting a digital termite to eat my entire OS

@DjMolehill @kylegawley Not sure what you mean. It runs the commands on your computer, not you. You can create a hook to allow it to do it automatically.

@DjMolehill @kylegawley What do you mean how? Claude Code, Codex, etc. (not the web interfaces like claude.ai or ChatGPT) run directly on your computer and ask you to confirm each command they run.

@imrobertjames @allenanalysis If the CEO mandates usage and says not to check it, why didn't he require safeguards in the first place? Who's to say the AI would've followed them?

@i_hate_intel @allenanalysis I don’t know if they used safeguards or not, like I said. But you're speculating the CEO forced them to use the LLM without any safeguards and to not do any code reviews? And none of them said “that’s a bad idea” or just put in safeguards anyway to protect their own jobs?

🚨BREAKING: On Friday afternoon, an artificial intelligence coding agent powered by Anthropic's Claude Opus 4.6 deleted a company's entire production database in nine seconds.

The company is called PocketOS. It is a software platform that powers car rental businesses. The database contained months of customer bookings, vehicle records, and operational data that small rental car companies relied on to run their businesses.

When the database was deleted, all of the backups were deleted with it.

Three months of customer reservations evaporated.

@realdannysafa @KobeissiLetter Except the tweet is complete bullshit. It argues that these companies are the only ones employing white collar jobs.

@KobeissiLetter All these people who studied years to work at these companies are now getting stripped of their jobs for AI which is cheaper for companies. It’s also showing you how meaningless your education can become all with a new invention.

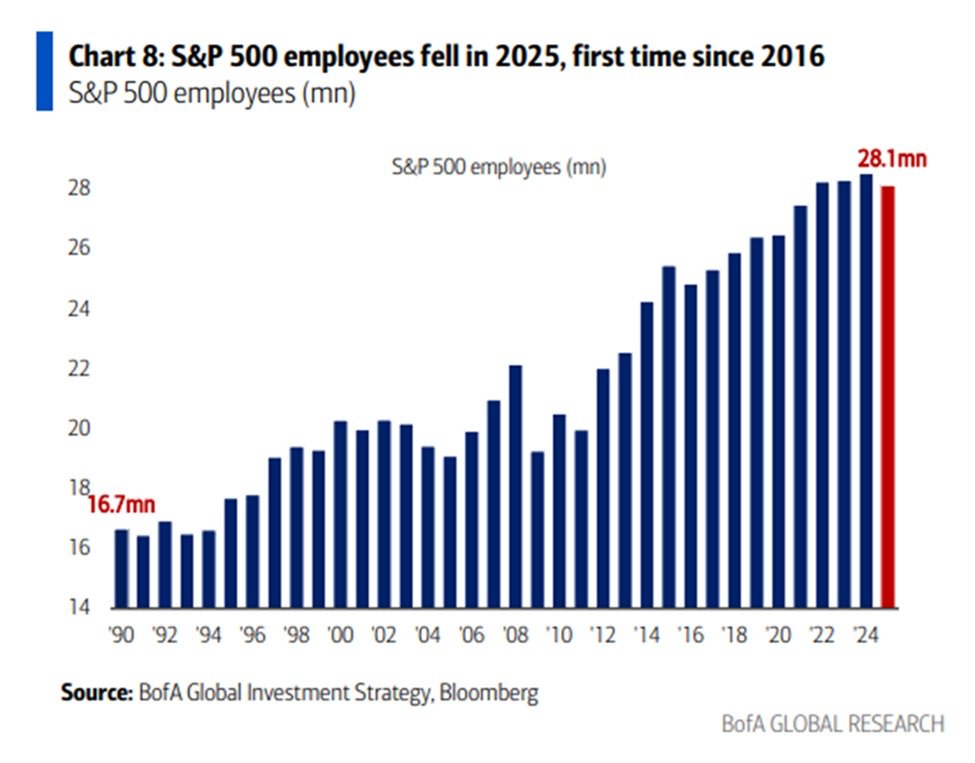

White collar employment is sharply declining:

The number of S&P 500 employees fell by 400,000 in 2025, to 28.1 million, posting its first annual decline since 2016.

This follows 8 consecutive years of uninterrupted employment growth, adding over +3.0 million jobs in total.

The decline was driven by UPS, $UPS, Oracle, $ORCL, Amazon, $AMZN, Meta, $META, Intel, $INTC, and Microsoft, $MSFT, as corporations raced to cut costs and redirect spending toward AI.

In 2026, layoffs are set to continue with Amazon cutting ~16,000 corporate jobs, Meta slashing ~8,000 positions, and Microsoft offering voluntary buyouts to ~8,750 employees.

Corporate America is cutting jobs at an accelerating pace.

@KobeissiLetter You said "white collar employment is dropping" but then only showed select companies.

The job statistics actually show you're full of shit.

@MattDMortgages @allenanalysis It's the CEO's fault. The CEO forced them to use AI and probably told them to just push whatever the AI does without checking.

@allenanalysis AI without guardrails is dangerous and it’s irresponsible of everyone building this tech.

Many people do not want AI, as it’s leading down a path to nowhere

@imrobertjames @allenanalysis No because the CEO likely forced it upon them and likely told them to just push code without checking.

So, clearly the CEO's fault.

@allenanalysis Did the developers have common sense safeguards in place? Even minimal ones? Or were we just running on dangerously-skip-permissions and a prayer? Because unless Opus 4.6 bypassed all the safeguards in place and did it anyway, this isn't the LLM's fault; it's the developers'.

This has been circulating for about a year. And it was and remains largely irrelevant to the question of LLM intelligence. Tower of Hanoi and the other problems they tested aren’t solved through linguistic reasoning in humans. They require visual processing, which needs to be modeled as such. This is like testing marathon runners in the pool.

@OtherSide61 @sukh_saroy Aye, you don't realize that LLMs are just token predictors and large parts of the IQ test are spatial and visual reasoning, and LLMs can't do that at all.

Even the latest models fail ARC-3 abysmally.

@sukh_saroy Maybe this weak and flawed outlook led to Tim Cook retiring. Why does a company that’s hardly done anything in AI even have an opinion?

@DzajicA @sukh_saroy You have no idea how studies are done or how peer review works. If they used Claude 4.7 Opus, the study wouldn't be published until next year, dumb ass.

@sukh_saroy Please stop releasing year-old studies as "breaking news". You already have zero credibility when AI evangelists actually contradict you, and you are just giving false hope to the masses.

@loktar00 @sukh_saroy You didn't read the thread. Sounds like you let LLMs do all your thinking for you.

@sukh_saroy How long ago did they do this? All of the models mentioned are old af.

@nmrapport @sukh_saroy Humans don't do that, just like they don't do that in chess.

@sukh_saroy Towers of Hanoi is actually a brutal test because it requires recursively calculating the entire path to the solution before making your first move.

Apple researchers took the top reasoning models in the world.

o3-mini. DeepSeek-R1. Claude 3.7 Sonnet Thinking.

Then they handed them Tower of Hanoi.

The simplest recursive puzzle in computer science. Move disks between pegs. Don't put a big disk on a small one.

That's it. That's the whole game.
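
For readers who have not seen the puzzle, here is a minimal sketch of the classic recursive solution in Python. It is not from the study or the thread; the function name `hanoi` and the peg labels "A", "B", "C" are assumptions chosen for illustration. It shows the two rules above turning into a three-step recursion: move the top n-1 disks out of the way, move the largest disk, then move the n-1 disks back on top.

```python
def hanoi(n, source="A", target="C", spare="B"):
    """Return the list of (from_peg, to_peg) moves that transfers n disks.

    Peg labels are hypothetical; any three distinct names work.
    """
    if n == 0:
        return []  # nothing to move
    return (
        hanoi(n - 1, source, spare, target)    # clear the top n-1 disks onto the spare peg
        + [(source, target)]                   # move the largest disk to the target
        + hanoi(n - 1, spare, target, source)  # stack the n-1 disks back on top of it
    )


moves = hanoi(3)
print(len(moves))  # 7
print(moves[0])    # ('A', 'C')
```

The move count is 2^n - 1, so the solution length doubles with every added disk, which is why long instances are demanding even though the rule set is tiny.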
