Mounir IDRASSI

127 posts

Mounir IDRASSI

@idrassi

PGP: 607E 5A7A D030 D38E 5E5C 2CA5 02C3 0AE9 0FAE 4A6F, #VeraCrypt author, IDRIX founder, AM Crypto founder

Kobe - Japan Bergabung Ekim 2018

38 Mengikuti143 Pengikut

@rezoundous What release of Mythos? Have you seen it except in your wet dreams?

English

@burkov Disappointing and sad behavior from members of such small community

English

When I launched ChapterPal, I offered all the subscribers of my AI newsletter free access to a paid plan for the first three months. Because this was a one-time event, I decided to keep it simple and put the validation of whether three months had expired on the frontend.

Once a day, a script would check if the returning user was officially subscribed to a paid plan, and if not, this complimentary subscription would be cancelled.

A couple of days ago, I noticed that the Premium OCR (where expensive LLMs are involved) was used by users who aren't on the Stripe subscribers list.

After an investigation, I found 9 users who had been using their complimentary paid subscription 3 months after it was supposed to be cancelled. Most of them used ChapterPal daily.

These users fooled the frontend verification script by pretending that they had already been tested earlier today, so there was no need to verify their subscription status again.

Two days ago, I manually downgraded these users to the free plan.

None of them have paid to subscribe.

English

@louszbd Issue doesn't occur anymore on official API, so it was only during preview period probably to save compute.

English

@louszbd Are you capping context to 100k in your autonomous benchmark? After 100k context size, it starts to behave erratically.

English

we open-sourced glm-5.1

agents could do about 20 steps by the end of last year. glm-5.1 can do 1,700 rn. autonomous work time may be the most important curve after scaling laws. glm-5.1 will be the first point on that curve that the open-source community can verify with their own hands.

hope y'all like it^^

Z.ai@Zai_org

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Blog: z.ai/blog/glm-5.1 Weights: huggingface.co/zai-org/GLM-5.1 API: docs.z.ai/guides/llm/glm… Coding Plan: z.ai/subscribe Coming to chat.z.ai in the next few days.

English

@arkinterli @Zai_org I just checked again and now it works well beyond 100k context size. So the issue we observed seems to have been specific to the preview version and now it is fixed. Congrats to @Zai_org

English

@arkinterli @Zai_org Noticed the same. In my harness, I configured compaction at 100k to make it work properly.

English

Introducing GLM-5.1: The Next Level of Open Source

- Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo.

- Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations.

Blog: z.ai/blog/glm-5.1

Weights: huggingface.co/zai-org/GLM-5.1

API: docs.z.ai/guides/llm/glm…

Coding Plan: z.ai/subscribe

Coming to chat.z.ai in the next few days.

English

Mounir IDRASSI me-retweet

I spent the evening looking into quantum computing timelines as a non-expert in quantum computing. Here is what I’ve learned:

We currently have machines with ~1,000–1,500 physical qubits at error rates around 10⁻³, and Google’s algorithm requires ~500,000 physical qubits operating coherently together with surface code error correction, yoked qubit storage, magic state cultivation producing ~500K T states per second, and reaction-limited execution at 10μs cycle times — none of which has been demonstrated beyond small-scale proof-of-concept experiments.

Scaling from where we are to where this needs to be isn’t a matter of incremental improvement along a Moore’s Law curve; it requires solving qualitatively new engineering problems in qubit fabrication yield, correlated error suppression across a massive chip (or multi-chip interconnects that don’t exist yet), cryogenic wiring and control electronics for half a million qubits, real-time classical decoding at the required throughput, and sustained coherence of a “primed” quantum state across minutes of wall-clock time — any one of which could prove to be a multi-year bottleneck, and all of which must be solved simultaneously.

Given the above, I just don’t see how we’re going to get to a cryptographically relevant quantum computer by 2030, especially given that we need a ~350× increase in physical qubit count with simultaneously tighter error correlations, an entirely new cryogenic control and wiring architecture to address half a million qubits, real-time decoding infrastructure that doesn’t exist yet, magic state distillation factories operating at industrial throughput, and multi-minute coherent idle times for primed states — and historically, solving even one of these at scale has taken the field the better part of a decade.

English

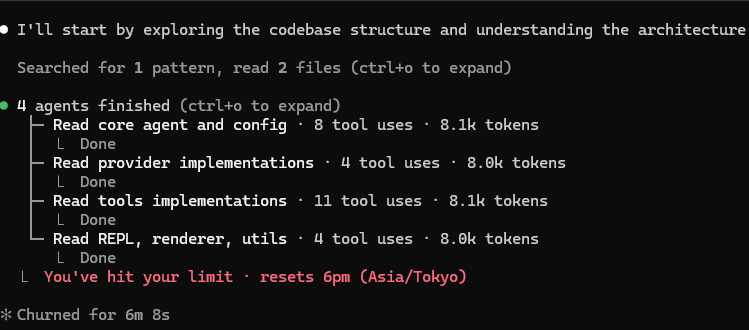

Issue is real: didn't use Claude for 1 week, just tried it to review a small codebase, and boom...limit is hit!!

How come QA didn't catch this?

Lydia Hallie ✨@lydiahallie

We're aware people are hitting usage limits in Claude Code way faster than expected. Actively investigating, will share more when we have an update!

English

@burkov Sad outcome for real quality content. Ads will become the new paywall to access users and everybody will lose.

English

As I expected, Google's response to a flood of AI-generated slop is to simply stop indexing new websites.

I put ChapterPal online 4 months ago, and Google only indexed 40 pages since then.

And only when you search "ChapterPal" will you see any of ChapterPal's pages in the search results.

Search is broken and, I'm afraid, beyond fixing.

English

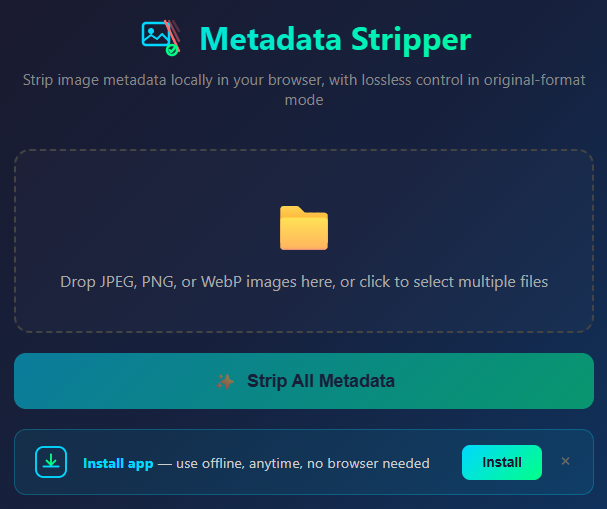

Remove C2PA metadata from AI-generated images: llambada.com/p/LE7LHtNh/rem…

English

Mounir IDRASSI me-retweet

@burkov Thanks! I was confused cause it spent lot of time creating an app, so it seemed creating "my" app. After that, there was no hint that I should scroll to the bottom to see the conversation part, so I just thought this was the app. Probably a UX enhancement could help onboarding.

English

I launched Llambada! 🚀🎉 Build cool mini-apps by talking to AI and earn money when paid users use your mini-apps.

No coding knowledge is needed.

I'm still setting up the revenue-sharing system (it will be based on a concept similar to Medium or Spotify), but you can already start building your mini-apps.

The code is still an early alpha, so DM me your feedback if you notice anything that doesn't work as it should.

llambada.com

English