Pinned Tweet

BURKOV

23K posts

@burkov

Books: https://t.co/0EmPM3De9B & https://t.co/45NGbbXIzC App: https://t.co/n2jvMtYhVm PhD in AI, author of 📖 The Hundred-Page LMs Book & The Hundred-Page ML Book

Québec, Canada · Joined June 2009

117 Following · 57.4K Followers

It's not about my IP, so a VPN won't help.

Every new page on Wikipedia must be approved by some committee, and they have these made-up rules about what goes and what doesn't. I tried to make a page about my dad, who died many years ago.

To me he was a person that could be remembered that way, but I realized that that's not how Wikipedia works.

I then asked other people, and they confirmed that they had the same problems.

I used to donate to @Wikipedia but after that bitter experience I stopped doing it. To me they are some kind of data-mafia.

And I really don't understand why you wouldn't want good info about people who used to walk the Earth.

That info has value, always.

I'm sure that AI people would be thrilled to get more data about people, even if dead.

But it is what it is.

A guy from some company reached out to me on LinkedIn and offered for a monthly fee to create a Wikipedia page about me and keep it maintained.

When I asked why I wouldn't create it myself and then task an agent with maintaining it, he said to reach out to them if I change my mind.

I mean, if he spends time writing these cold DMs, some idiots should be paying?

@burkov @m2saxon @tdietterich @arxiv historically most arxiv papers are published eventually

the lag time for most papers is 1-3 years, historically

I believe the record is a delay of over a decade

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

BURKOV retweeted

The Hundred-Page Machine Learning Book (PDF + EPUB + extra PDF formats) by Andriy Burkov is on sale on Leanpub! Its suggested price is $40.00; get it for $14.00 with this coupon: leanpub.com/theMLbook/c/Le… @burkov #data_science #computer_science

A common story in recent AI work is that you can train an LLM to reason well even when the feedback during training is unreliable, a setting called "weak supervision."

In normal reinforcement learning for reasoning, a model attempts a problem and gets a reward signal telling it whether the answer was correct; weak supervision is when that signal is degraded in some way: only a handful of training problems are available, the correctness labels are mostly wrong, or there are no verified answers at all and the model has to fall back on judging its own outputs.

This Google, UCLA, and NYU paper looks at when training still works under those conditions and when it falls apart, and finds that the deciding factor is set before reinforcement learning even starts.

The authors track how quickly a model's training reward climbs to its ceiling, and show that models which reach the ceiling fast tend to memorize answers while models that climb slowly actually learn reasoning that transfers to new problems.

Read with an AI tutor: chapterpal.com/s/s74yjv24/whe…

PDF: arxiv.org/pdf/2604.18574
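
The post describes tracking how quickly training reward climbs to its ceiling. As a rough illustration only, here is a minimal sketch of one way such a "steps to saturation" number could be computed from a logged reward curve. The function name, the 95% threshold, the 3-step smoothing window, and the two toy curves are all assumptions made for this example; they are not the metric or the data from the paper.

```python
from typing import Optional, Sequence


def steps_to_saturation(
    rewards: Sequence[float],
    ceiling: float = 1.0,
    fraction: float = 0.95,
    window: int = 3,
) -> Optional[int]:
    """Return the first step at which the trailing-window mean reward
    reaches fraction * ceiling, or None if it never does.

    Hypothetical helper: threshold and window are illustrative choices.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    threshold = fraction * ceiling
    for step in range(window - 1, len(rewards)):
        # Mean of the last `window` rewards ending at this step.
        recent = rewards[step - window + 1 : step + 1]
        if sum(recent) / window >= threshold:
            return step
    return None


# Toy curves (made up): one saturates quickly, one climbs slowly.
fast_curve = [0.2, 0.9, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0]
slow_curve = [0.1, 0.2, 0.3, 0.45, 0.6, 0.75, 0.9, 1.0, 1.0, 1.0]

fast_step = steps_to_saturation(fast_curve)
slow_step = steps_to_saturation(slow_curve)
print(f"fast curve saturates at step {fast_step}")
print(f"slow curve saturates at step {slow_step}")
```

Under the post's summary, a small value would flag a run that may be memorizing, and a larger one a run that is more likely learning transferable reasoning; any actual cutoff would have to come from the paper itself.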

1. Most arXiv papers are never published elsewhere.

2. There are currently no serious venues in computer science that still demand a full copyright transfer to them.

3. The fact of making a preprint Creative Commons doesn't prevent the author from publishing the final version with a publisher.

There's absolutely no drawback to sharing your research under CC, and plenty to not doing so.

@burkov @tdietterich @arxiv The reason the arxiv license is the correct choice for authors is that it won't interfere with the license of whatever publisher eventually takes the final copy

Qwen3.5-Omni Technical Report is now on @ChapterPal if you would like to read it with an AI tutor: chapterpal.com/s/6b9f54a6/qwe…

PDF: arxiv.org/pdf/2604.15804

Qwen3.5-Omni is an omnimodal LLM that achieves state-of-the-art performance across 215 audio and audio-visual benchmarks, introduces an innovative Adaptive Rate Interleave Alignment (ARIA) method for stable speech synthesis, and demonstrates emergent audio-visual vibe coding capabilities.

@RomeoLupascu I wouldn't create a page about myself. I think it's pathetic. But I don't see why I couldn't. Use a VPN and do it. What's the problem?

But you can't create a wiki page about yourself. Nor about anyone else, unless the wiki-gods deem them "important" or a "public figure," etc. I know, I tried it.

On the other hand you are a published PhD so it should work. Try it and let us know if they let you create that page of yourself, I'm curious if you can.

Wikipedia is only for fancy people.

But they may be able to do it for you for $$ like some "Wikimafia" I guess.

This week's issue of my AI newsletter is out:

Choosing the right agentic design pattern: A decision-tree approach

[Ars Technica] The newest AI boom pitch: Host a mini data center at your home

The fall of the theorem economy: How AI could destroy mathematics and barely touch it

[OpenAI] GPT image generation models prompting guide

Natural language autoencoders produce unsupervised explanations of LLM activations

Interactive KL Divergence visualisation

Full-stack optimizations for agentic Inference with NVIDIA Dynamo

[Project] Maximal brain damage without data or optimization: Disrupting neural networks via sign-bit flips

True Positive Weekly #161 open.substack.com/pub/aiweekly/p…

@OfficialLoganK *Thousands of prompts and many sleepless nights, then maybe.

@rahulpmishraa Hey Rahul. Did you try to read it with an AI tutor on @ChapterPal? Try the concepts that you haven't understood fully. Let me know how it went.

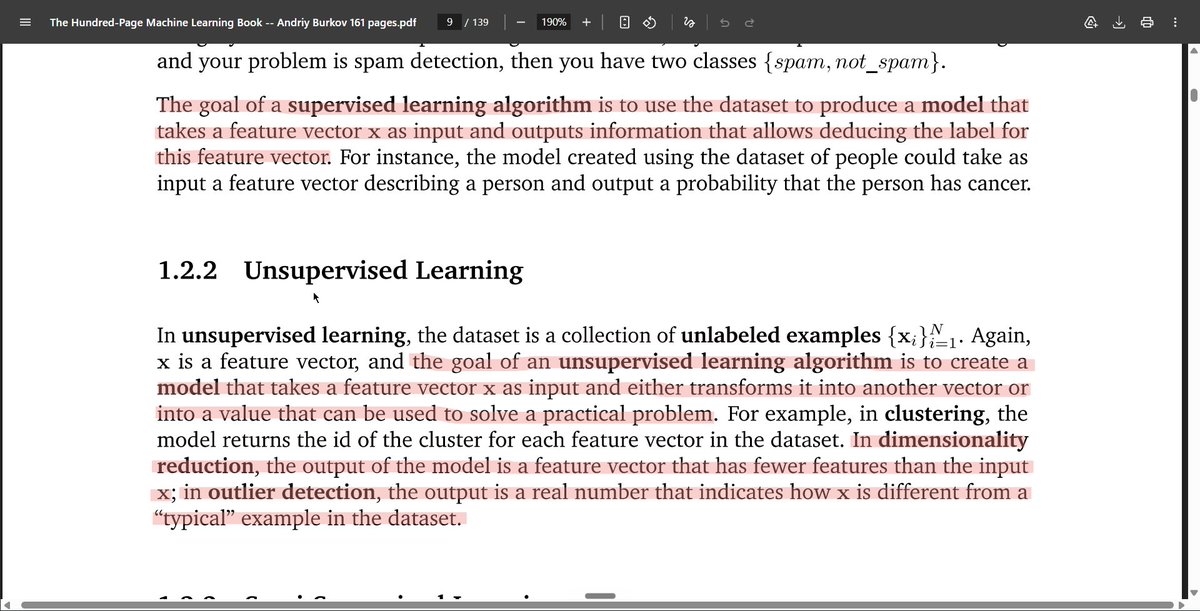

Finished The Hundred-Page Machine Learning Book in 10 days.

Didn’t understand every concept fully, especially deep learning, but consistency mattered more.

The book strengthened my ML fundamentals and gave me a clearer picture of how ML fits together.

#MachineLearning #AI

@acatovicx A rule that you cannot enforce isn't a rule. Anyone can create a page about themselves and many do.

If you want to be 100% clean, instead of doing it yourself over a VPN, ask your friend or a relative to create it.

Again, a rule that you cannot enforce isn't a rule.

@burkov You can’t write a Wikipedia page about yourself; it’s not allowed. It’s still human-curated and maintained, and that’s their “selling point”. This person was offering you an honest service. Why anyone would visit your Wikipedia page is another matter.

@Autodidac178306 @Walmart It might be genuine. I have an agreement with Post and Telecom press.

Flipkart is India's biggest online store. It belongs to @Walmart, which makes Walmart the largest counterfeit seller in India and probably in the world.

All these pages selling my book on Flipkart sell a shitty-quality print at a 93% discount.

Walmart knows that such a discount is impossible unless shit is sold, but they don't do anything, because money doesn't smell, huh @walmart? Money doesn't smell, you greedy fucks?

There are two main families of methods for training neural networks to generate images. One steers random noise smoothly into a real-looking sample. The other learns to undo a process that gradually destroys data by adding noise.

They were developed separately, look different in the math, and come with different design choices — what noise schedule to use, whether to start from Gaussian noise specifically, when to make the generation process random versus deterministic.

This paper shows the two are really one construction with a choice attached: pick any path from noise to data you like, then decide separately whether to follow it smoothly or with random jitter.

The statistical behavior along the path is the same either way; only the individual sample trajectories differ.

Read with an AI tutor: chapterpal.com/s/74v4ypup/sto…

PDF: arxiv.org/pdf/2303.08797
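
The claim that the smooth and jittered versions share the same statistics along the path can be written out in a few lines. What follows is a sketch in assumed notation, not copied from the paper: $b$ is the velocity field, $\rho_t$ the density of samples at time $t$, $s = \nabla \log \rho_t$ the score, $\varepsilon \ge 0$ a freely chosen noise level, and $W_t$ a Wiener process.

```latex
% Assumed notation: b = velocity field, s = \nabla \log \rho_t (score),
% \varepsilon \ge 0 = noise level, W_t = Wiener process.
\begin{align}
  \text{deterministic (ODE):}\quad
    & dX_t = b(t, X_t)\,dt, \\
  \text{stochastic (SDE):}\quad
    & dX_t = \big[\, b(t, X_t) + \varepsilon\, s(t, X_t) \,\big]\,dt
             + \sqrt{2\varepsilon}\; dW_t, \\
  \text{shared marginals:}\quad
    & \partial_t \rho_t + \nabla \cdot \big( b\, \rho_t \big) = 0 .
\end{align}
```

The Fokker-Planck equation of the SDE is $\partial_t \rho_t + \nabla \cdot \big( (b + \varepsilon s)\, \rho_t \big) = \varepsilon\, \Delta \rho_t$. Because $s\, \rho_t = \nabla \rho_t$, the extra drift term $\varepsilon\, \nabla \cdot (s\, \rho_t) = \varepsilon\, \Delta \rho_t$ cancels the diffusion term exactly, leaving the same transport equation as the ODE. Setting $\varepsilon = 0$ gives the smooth trajectories; any $\varepsilon > 0$ gives jittery ones with identical densities $\rho_t$.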

Detecting overfitting in Neural Networks during long-horizon grokking using Random Matrix Theory

Hari K. Prakash, Charles H Martin

arxiv.org/abs/2605.12394
