New Anthropic research: Tracing the thoughts of a large language model. We built a "microscope" to inspect what happens inside AI models and use it to understand Claude’s (often complex and surprising) internal mechanisms.

Emmanuel Ameisen

@mlpowered

Interpretability/Finetuning @AnthropicAI Previously: Staff ML Engineer @stripe, Wrote BMLPA by @OReillyMedia, Head of AI at @InsightFellows, ML @Zipcar

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

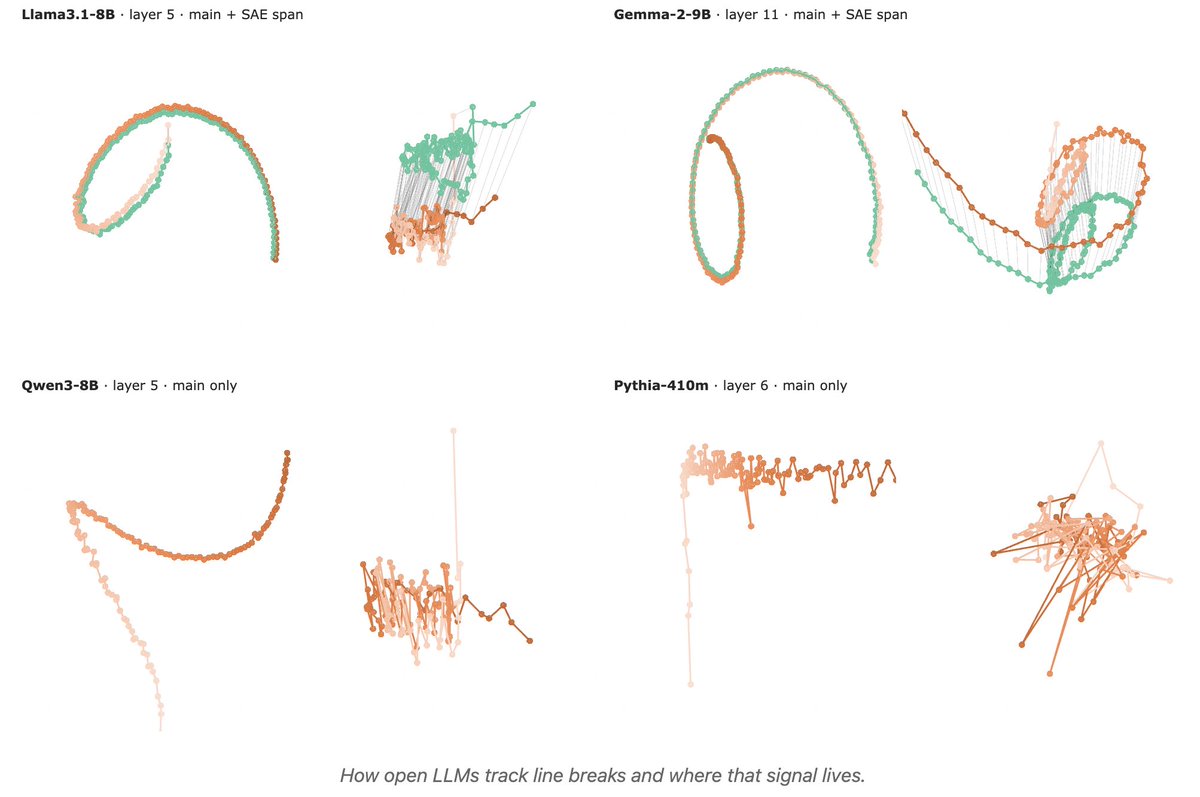

1/ 🧵 Reproducing Anthropic’s “counting manifold” result in open-weight LLMs: do they internally track “chars since last \n” to wrap text consistently? huggingface.co/spaces/t-tech/…

Introducing Claude Opus 4.6. Our smartest model got an upgrade. Opus 4.6 plans more carefully, sustains agentic tasks for longer, operates reliably in massive codebases, and catches its own mistakes. It’s also our first Opus-class model with 1M token context in beta.