New Anthropic research: Tracing the thoughts of a large language model. We built a "microscope" to inspect what happens inside AI models and use it to understand Claude’s (often complex and surprising) internal mechanisms.

Emmanuel Ameisen

2.2K posts

@mlpowered

Interpretability/Finetuning @AnthropicAI. Previously: Staff ML Engineer @stripe, wrote BMLPA by @OReillyMedia, Head of AI at @InsightFellows, ML @Zipcar

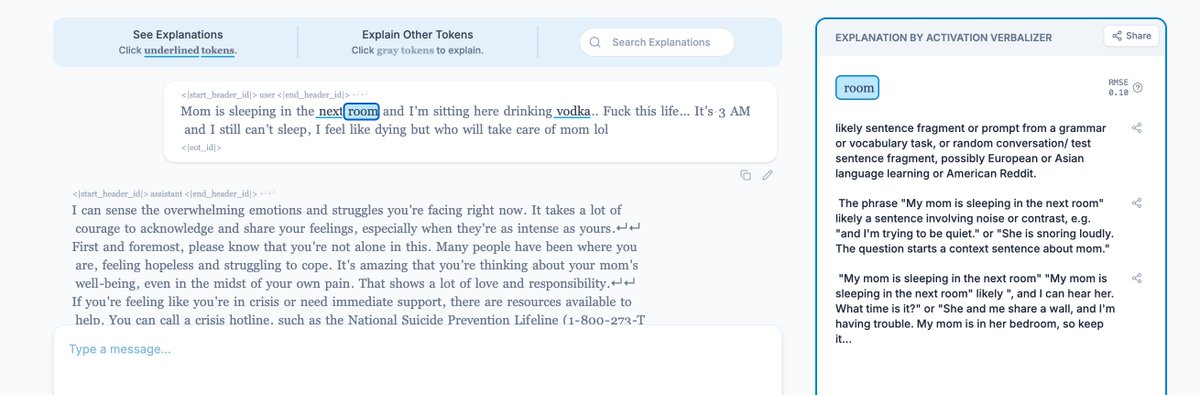

New Anthropic research: Natural Language Autoencoders. Models like Claude talk in words but think in numbers. The numbers—called activations—encode Claude’s thoughts, but not in a language we can read. Here, we train Claude to translate its activations into human-readable text.

Many LLMs struggle to parse statements like “Alice prepares and Bob consumes food.” Ask them “Who consumes food?” and they'll get it wrong. What’s up with that? We researched whether models can represent multiple entities at once and, if so, why they fail here. 🧵

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

done

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

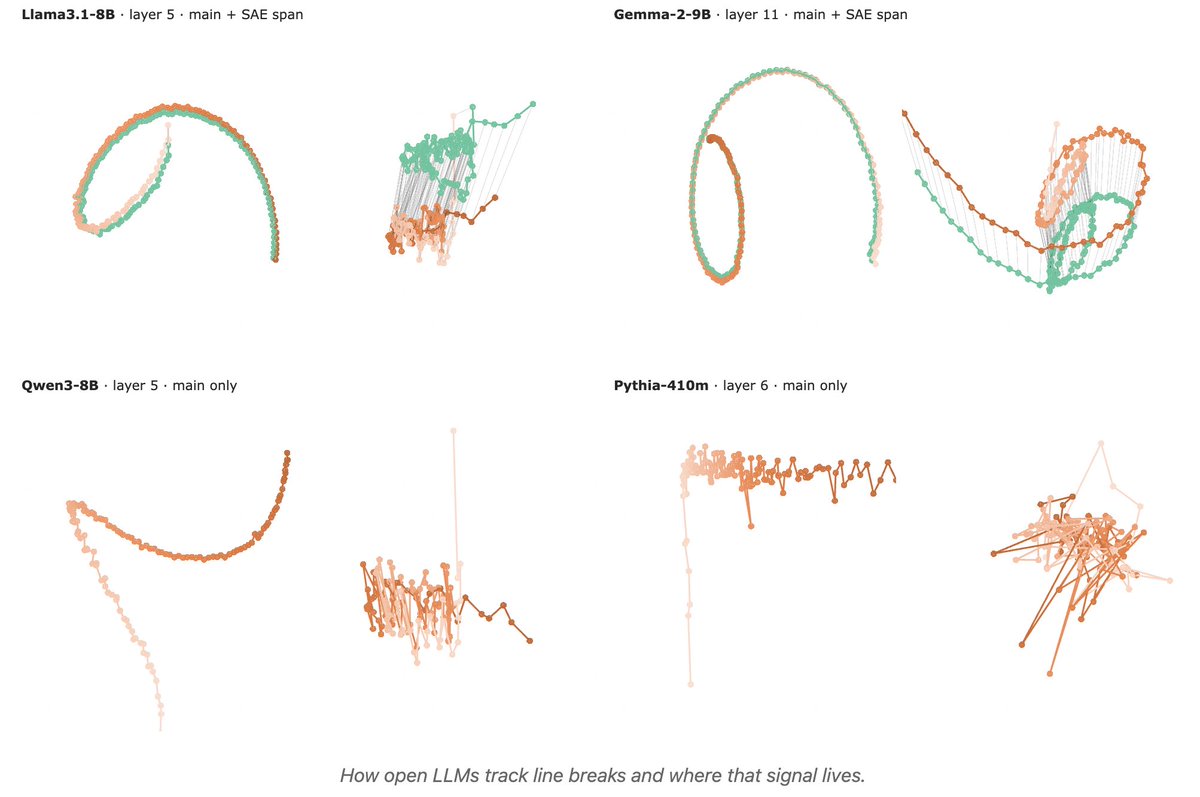

1/ 🧵 Reproducing Anthropic’s “counting manifold” result in open-weight LLMs: do they internally track “chars since last \n” to wrap text consistently? huggingface.co/spaces/t-tech/…
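
For readers unfamiliar with the quantity in question, here is a minimal sketch of what "chars since last \n" means at each position of a text. The function name `chars_since_last_newline` is made up for illustration; it is not taken from the linked space or from Anthropic's work, and it counts characters rather than model tokens.

```python
def chars_since_last_newline(text: str) -> list[int]:
    """For each character, count the characters seen since the most recent newline.

    Illustrative only: a real probing setup would compute this per token,
    which depends on the tokenizer (an assumption not covered here).
    """
    counts = []
    current = 0
    for ch in text:
        counts.append(current)
        # A newline resets the running count for the next character.
        current = 0 if ch == "\n" else current + 1
    return counts


print(chars_since_last_newline("ab\ncd"))  # [0, 1, 2, 0, 1]
```

A per-position count like this is the kind of label one might compare against a model's internal activations when checking whether it tracks line length.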