Patrick

4.4K posts

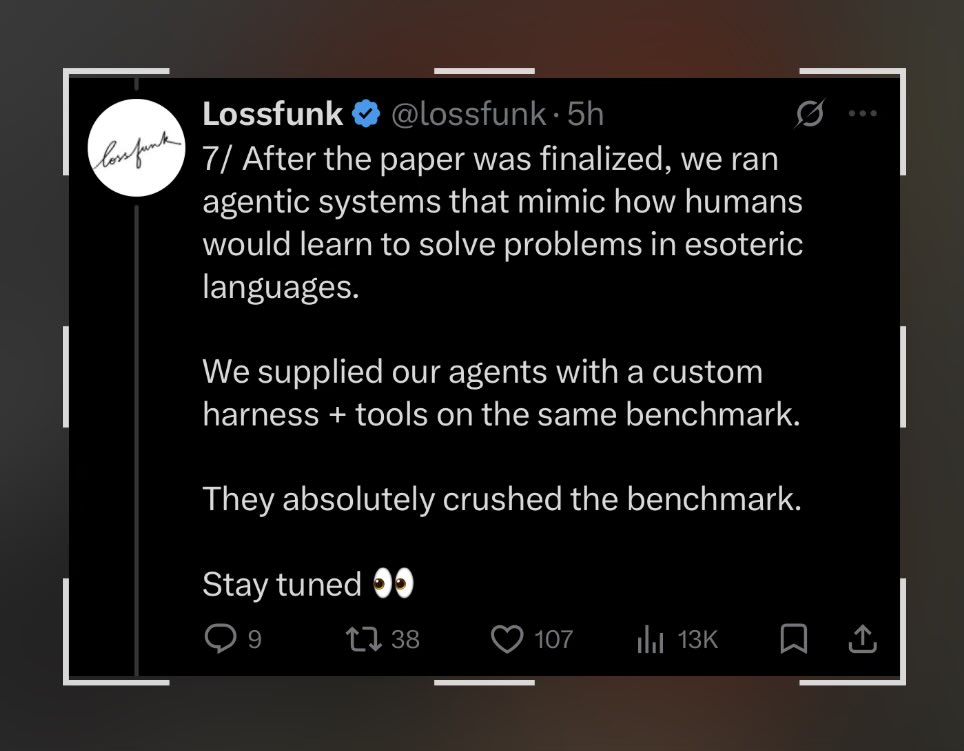

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

$WMT is disappointed in the results of its OpenAI partnership, under which Walmart users can shop via ChatGPT and OpenAI receives a commission on those purchases. “Conversion rates, the percentage of users following through with a purchase of an item shown to them by ChatGPT, have been three times lower for the selection sold directly inside the chatbot than those that require clicking out, according to Daniel Danker, who oversees design and product for Walmart. Put simply, Instant Checkout has been a flop.” (Wired) OpenAI on a heater in the news recently…

This is a cool example of how you can use AI to help your writing—without relying on it for any actual writing. From @jasminewsun theatlantic.com/technology/202…

This generation is so slow that reading a book is considered performative

Genuine question, what do kids and tweens watch these days? What’s their High School Musical, Hannah Montana, Cheetah Girls, Wizards of Waverly Place, Camp Rock, Sonny with a Chance, That’s So Raven, Lizzie McGuire, Suite Life, etc.? We had so much, and they seemingly have nothing?

"I'm too scared to talk to this girl" What your ancestors did on a random Tuesday: