yipclouds jedi retweeted

Dear Polkadot community, here is something I want to share with you about @ChaoticApp.

All in this article: medium.com/@Luuuuu/c285c2…

yipclouds jedi

@yipclouds

peace, meditation, web3, founder https://t.co/VMmSyCOhS7 CEO

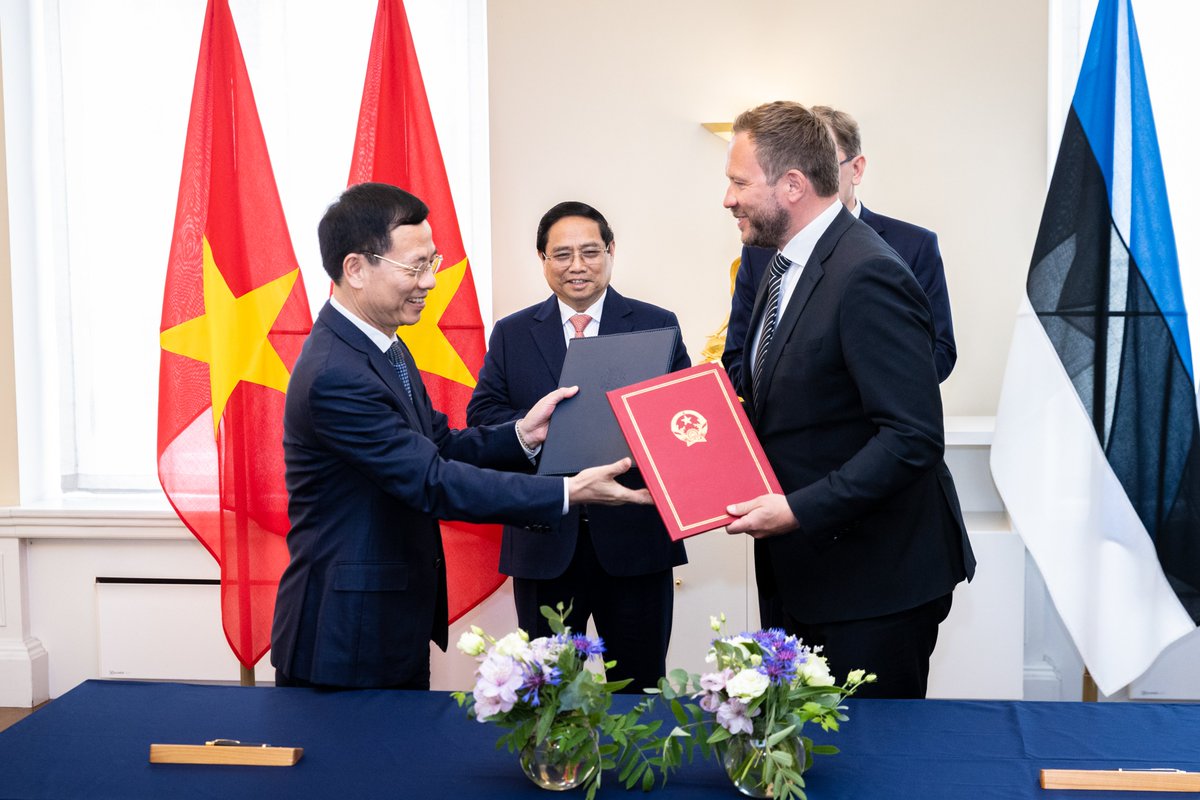

A great pleasure to welcome Vietnamese FM @FMBuiThanhSon in Tallinn today. We discussed global security & 🇪🇪🇻🇳 economic cooperation. I stressed that security in Europe & Asia is interlinked—& that Russia, violating international law, must not be allowed to succeed.

Starting 3rd March. RSVP now: lu.ma/wj4lixaa