Pinned Tweet

AgenticOrange

227 posts

@AgenticOranges

AI systems | Agent architectures | Future of work. Enterprise software operator exploring how AI reshapes execution.

The Execution Layer · Joined November 2021

440 Following · 88 Followers

@JaredSleeper You are going to tear it down? Or is your ChatGPT tweet going to, after you regurgitate whatever output you get?

Every day for the next long while, I'm going to tear down a new public software company and highlight the AI risks/opportunities around it- products launched to date, top startups, key quotes from earnings calls, etc.

Day eighteen: Snowflake $SNOW

Peak share price: $392.15 (Nov 19, 2021)

Share price today: $121.11 (-69%)

EV today: $39.8bn

ARR today: $5.1bn (+30% Y/y)

NRR: 125%

EV/ARR: 7.8x

GAAP Operating Margin: -25% (!!)

EV/Run-rate GAAP EBIT: N/A

Headcount: 9060 (+16% Y/y)
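
As a quick sanity check on the headline numbers above, here is a minimal sketch that recomputes the drawdown and the EV/ARR multiple from the figures quoted in the post. The variable names are illustrative, and the inputs are copied from the post rather than independently sourced.

```python
# Figures as quoted in the post above (share prices in USD, EV and ARR in $bn).
peak_price = 392.15
price_today = 121.11
ev_bn = 39.8
arr_bn = 5.1

# Drawdown from peak: today's price relative to the peak, minus one.
drawdown = price_today / peak_price - 1

# Valuation multiple: enterprise value divided by annual recurring revenue.
ev_to_arr = ev_bn / arr_bn

print(f"Drawdown from peak: {drawdown:.0%}")
print(f"EV/ARR: {ev_to_arr:.1f}x")
```

Both results line up with the post's -69% and 7.8x. EV/run-rate GAAP EBIT is shown as N/A because the GAAP operating margin is negative, so a multiple of operating profit would not be meaningful.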

What Snowflake does:

Snowflake is the leading cloud data warehouse focused on helping companies store, manage and query tabular business data using SQL. A significant share of the world's largest enterprises have opted to pool their critical data onto/around Snowflake to create a data warehouse of record to power everything from observability to analytics to data applications.

The key innovation powering Snowflake's rise was the separation of compute and storage as concepts, allowing users to apply elastic compute against fixed storage and cutting analytical queries that used to take hours down to seconds.

Like others in the space, Snowflake has expanded into adjacent areas such as Python, ETL, and BI.

AI bear case:

The AI bear case for Snowflake revolves around differences in how humans and agents prefer to access data, and around the recurring pattern of infrastructure priced for one paradigm becoming obsolete as the world advances. In particular, while Snowflake's query engine works very well at human speeds (loading a dashboard, running a complex SQL query), upstarts like @ClickHouseDB and @motherduck argue that agents have very different preferences: they want lightning-fast queries that would be very expensive on Snowflake.

In short, the bear case on Snowflake is that analytical queries will be run by agents in the future, and Snowflake's platform has an architectural innovator's dilemma in serving those use cases.

AI bull case:

The reality is, thousands of the world's largest companies have invested huge effort in standardizing/centralizing on Snowflake. The battle to be the system of record for aggregated tabular business data is already over at these companies- it will be Snowflake for the foreseeable future.

The implication is that agents are actually a huge tailwind for Snowflake- they will need to access business data to operate, to derive insights, to understand context, etc. and Snowflake's business model has the clear advantage of letting it monetize those queries as if they were coming from a human.

AI traction:

It is hard for Snowflake to know exactly what share of its revenue comes from AI-driven queries, but it did say this on the Q4 call:

"This quarter, we delivered the largest sequential increase in accounts using AI, bringing the total to more than 9,100 accounts."

Beyond that, net retention ticked up last year to 125%, very impressive at this scale.

Adjacent AI-native startup summary:

Databricks, albeit not AI-native, is the juggernaut to watch here, with a reported 15,000 employees, up 34% Y/y.

ClickHouse - 536 employees, +86% Y/y

MotherDuck - 133 employees, +46% Y/y

Management Quotes:

"And in just 3 months, Snowflake Intelligence has scaled from a nascent offering to an essential capability for over 2,500 accounts, almost doubling quarter-over-quarter."

"Our deepened partnership with Anthropic is already helping customers like Intercom see significant impact."

"And Matt, just to emphasize that point, just in fourth quarter, we saw a lot of benefit with AI that we had a small reduction in force and about 200 people in the company were impacted. So if you look at our fourth quarter net adds on a headcount basis, we only added 37 people. So AI has really changed the framework for investing in growth. It's no longer tied to headcount."

"So we will be launching features like a per user cap on top of Snowflake Intelligence, so they can feel like there is a clear upper limit to how much they can get charged with an agent. We think models like this that are consumption-based with clear user caps and account caps offer the best of both worlds, which is consumption pricing with price predictability."

"Yes. Super quickly, like partners, customers and our internal field are all incredibly excited about the results we're seeing with Cortex Code. The original value prop of Snowflake, which is change what's possible in terms of ease of use, it's just gone like 10x with Cortex Code. We showcased a number of instances where people are building pipelines faster, transformation faster, insights faster. And I think we're only at the beginning of what is possible."

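The pricing quote above describes consumption billing with per-user and account-level caps. A minimal sketch of how such a model could work is below; the function name, prices, caps, and usage figures are all hypothetical and do not reflect Snowflake's actual pricing or any Snowflake API.

```python
def capped_charge(usage_by_user, price_per_unit, user_cap, account_cap):
    """Bill each user for consumption up to user_cap, then cap the account total.

    All parameters are illustrative assumptions, not Snowflake's real pricing.
    """
    per_user = {
        user: min(units * price_per_unit, user_cap)
        for user, units in usage_by_user.items()
    }
    total = min(sum(per_user.values()), account_cap)
    return per_user, total


# Hypothetical usage in arbitrary consumption units.
usage = {"alice": 120, "bob": 15, "carol": 400}
per_user, total = capped_charge(
    usage, price_per_unit=0.5, user_cap=50.0, account_cap=100.0
)
print(per_user)
print(total)
```

In this example, heavy users are billed only up to the per-user cap while light users pay for exactly what they consume, and the account total is bounded regardless of how many users hit their caps. That is the "consumption pricing with price predictability" trade-off the quote points at.
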
Commentary:

Though the balance of evidence (and certainly my customer work) suggests that Snowflake should be a beneficiary of AI, it is certainly striking that the business impact seems to have been muted thus far.

All of the ingredients are there- consumption-based pricing, AI lowering the barriers for humans to ask questions of data (aka AI-generated SQL), and data as a key foundational layer to agents.

My suspicion is that some of this disappointment to date may come from Snowflake's lack of alignment to the use case where AI is working best today (i.e. code). Analytical queries may simply be slower/harder to get right, but it certainly seems likely that in a future where agents accelerate the amount of knowledge work done in the enterprise, Snowflake's core business should see a meaningful tailwind.

Once that question is answered, the burning question will be whether agent adoption presages an architectural shift towards data warehouses with a more AI-native architecture. My gut is that this will happen at some scale but won't create a wholesale shift and lead to a data warehouse replacement cycle. It will certainly be interesting to watch, though!

AgenticOrange retweeted

i've been working on llm memory systems for 3 years and dumped everything i know into this.

learn about the 9 axes of memory systems, the 10 most common failure modes, why memory eval is an intractable problem, and more.

everyone building with llms should read this.

Chrys Bader @chrysb

Dario Amodei is talking like the AI economy is about to go vertical.

Not billions. Trillions.

Not decades away. Before 2030.

If he’s even close, we’re not heading toward normal technological progress.

We’re heading toward a compute-fueled intelligence explosion and the end of work as we know it.

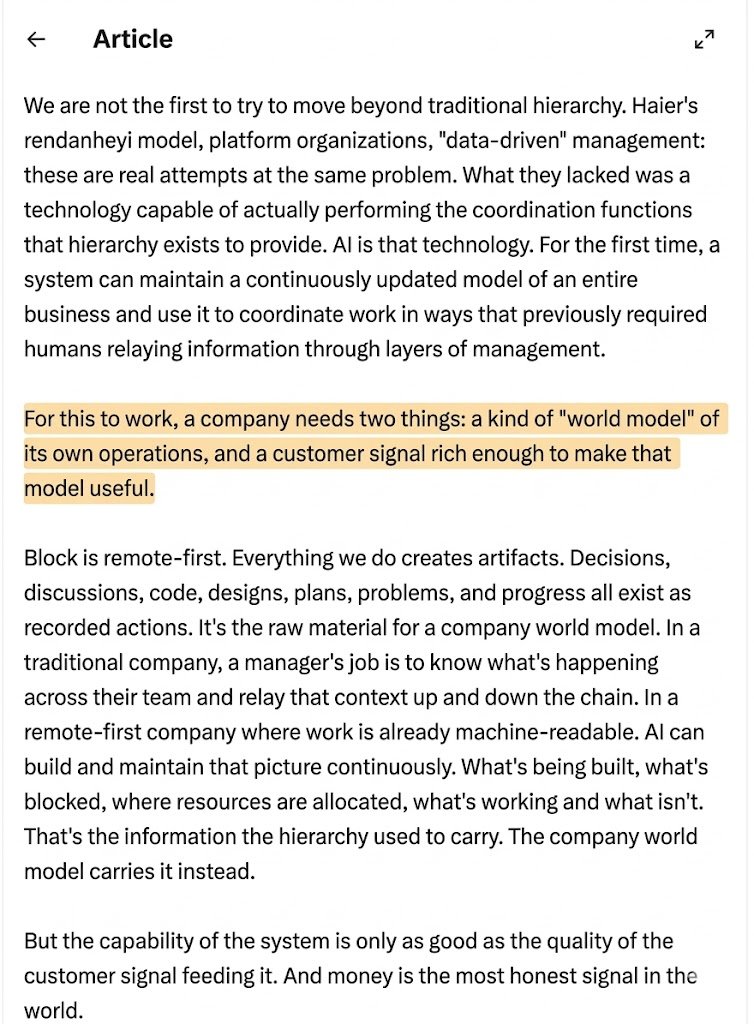

AgenticOrange retweeted

Context graphs will be to the 2030s what databases were to the 2000s.

Within a year, every frontier lab will be building one and here's why:

At 10 people, coordination is free. Everyone knows what everyone else is doing. You never hold a meeting to "align."

At 100 people, you spend maybe 20% of your payroll on coordination. Managers, syncs, standups, planning sessions, status reports.

At 10,000 people, that number approaches 60%. The majority of your headcount exists not to produce anything but to make sure the people who produce things are producing the right things in the right order.

This is the dirty secret of large organizations: output scales linearly with headcount, but coordination cost scales exponentially. Every person you add creates new information pathways that must be maintained. The hierarchy is the protocol that manages this, and it's brutally expensive.
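
One simple way to picture "new information pathways" is to count the possible pairwise links between people. This is an assumption made for illustration, not the post's own model, and the function name is hypothetical. Under it, the number of links grows quadratically with headcount, which is steep even if not literally exponential.

```python
def pairwise_channels(n: int) -> int:
    """Number of distinct two-person communication links among n people."""
    return n * (n - 1) // 2


for headcount in (10, 100, 10_000):
    print(f"{headcount:>6} people -> {pairwise_channels(headcount):>12,} possible links")
```

Going from 10 to 10,000 people multiplies headcount by 1,000 but multiplies possible links by roughly a million, which is the gap a hierarchy exists to manage.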

Hierarchy is a compression algorithm for organizational knowledge. At every layer, a manager compresses the reality of their team into a summary that fits in a 30-minute meeting with their boss. Their boss compresses eight of those summaries into one for their boss. By the time information reaches the CEO, it's been lossy-compressed through five or six layers of human interpretation.

This is why CEOs make bad decisions. The information they receive has been compressed, filtered, and distorted at every layer. The hierarchy is high-latency, low-bandwidth, and lossy.

Jack didn't fire 4,000 producers but cut 4,000 compression nodes. Block's "world model" is a replacement algorithm. Zero latency, high bandwidth, lossless. Every person at the edge gets the full picture without waiting for information to travel through human relays.

The infrastructure that makes this possible is the context graph. A living, continuously updated representation of how the organization actually works. Not just data, but decision traces: the reasoning connecting observations to actions. Not what's true now, but why it became true.

The shift from "give agents memory" to "give agents organizational judgment" will define the next platform war

jack @jack

@atlxgsw @hawkeyelvr69 Is this confirmed her?

AgenticOrange retweeted

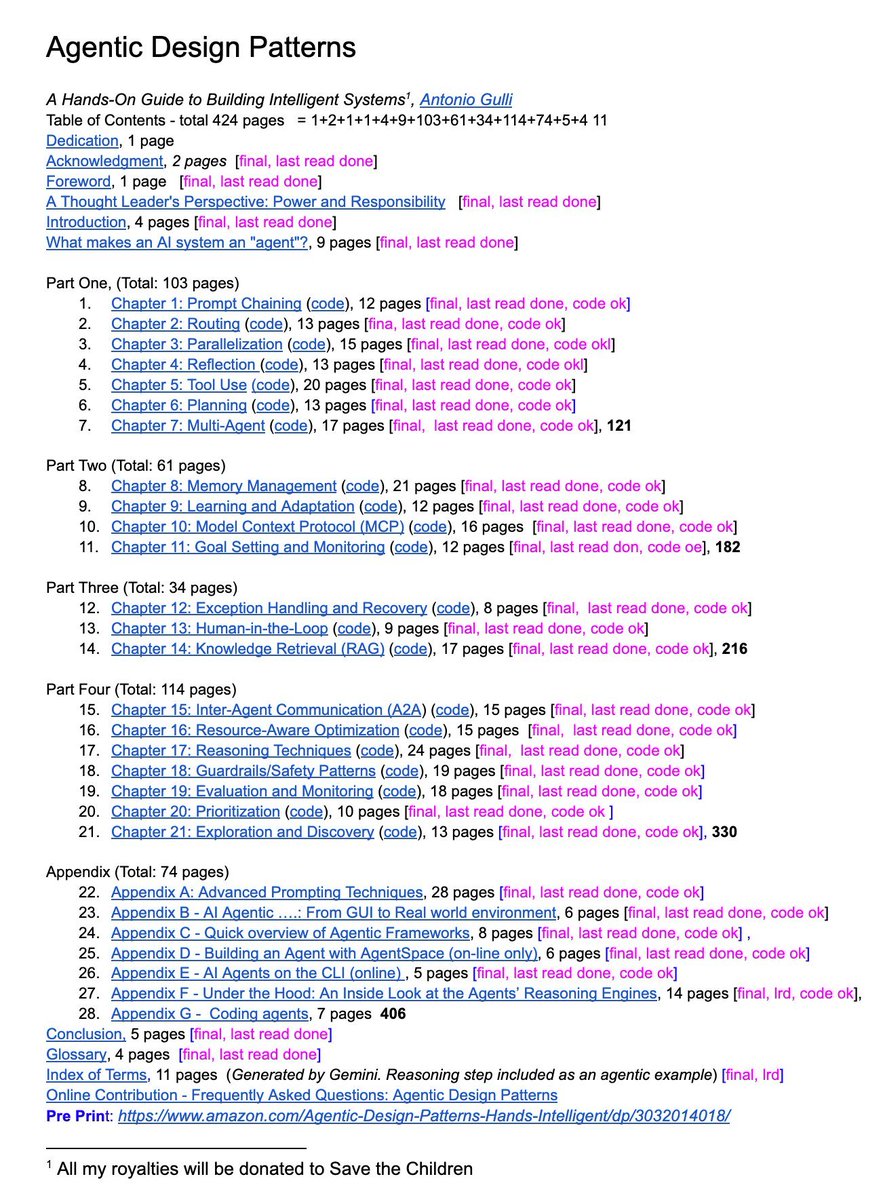

@techxutkarsh What is it with all these folks on X being impressed by someone else’s 421-page ChatGPT output…

A senior Google engineer just dropped a 421-page doc called Agentic Design Patterns.

Every chapter is code-backed and covers the frontier of AI systems:

→ Prompt chaining, routing, memory

→ MCP & multi-agent coordination

→ Guardrails, reasoning, planning

This isn’t a blog post. It’s a curriculum. And it’s free.

@r0ck3t23 They also have more cash than most small nations…

Jensen Huang just explained why every company cutting engineers over AI is asking the entirely wrong question.

Huang: “People say, I don’t need software engineers because apparently coding is going to be automated.”

That was the narrative. Here is what Huang actually did.

Huang: “I’ve given AIs to every one of my software engineers and hardware engineers and engineers period. 100% of NVIDIA has AI assistants, AI coders, and they’re busier than ever.”

Not fewer engineers.

Not smaller teams.

Busier than ever.

That is the line most companies are getting completely wrong right now. They hear “AI can write code” and immediately start cutting headcount.

Huang did the opposite. He armed everyone.

Huang: “And so the question is, what is the task versus what is the job? No different than a financial analyst; the task is mess around with spreadsheets, but the job is to make financial advice. The job is to help a customer.”

Writing code was always the task.

It was never the job.

The job is architecture.

Knowing what to build.

Why it matters.

How it fits into a system that actually creates value.

Code is the execution layer between the idea and the outcome. Nothing more.

When you automate that layer, you don’t eliminate the engineer.

You eliminate the bottleneck between what they can envision and what they can ship.

The companies using AI to cut headcount are optimizing for cost.

The companies using AI to multiply output are optimizing for territory.

Nvidia chose territory.

Every engineer at the most valuable semiconductor company on Earth now operates with an AI assistant.

Not a pilot program. Not an experiment.

Company-wide. Every function. Every team.

And the result is not less work.

It is more work. Faster. At a scale that was physically impossible twelve months ago.

The companies that understand the difference between eliminating engineers and unleashing them will build what comes next.

The ones that don’t will watch their best talent walk out the door to the ones that did.

🚨 BREAKING: The cybersecurity industry is about to get completely disrupted.

Someone just open-sourced a fully autonomous AI Red Team.

It's called PentAGI. 8,200+ stars on GitHub.

Not one AI agent. An entire simulated security firm. Researchers, developers, pentesters, and risk analysts. All AI. All coordinating with each other before launching a single attack.

No Cobalt Strike. No $100K/year pentest retainers. No OSCP required.

Here's what's inside this thing:

→ An Orchestrator agent that plans the full attack chain

→ A Researcher agent that gathers intel from the web, search engines, and vulnerability databases

→ A Developer agent that writes custom exploit code on the fly

→ An Executor agent that runs 20+ pro security tools (nmap, metasploit, sqlmap, and more)

→ A memory system that learns from every engagement and gets smarter over time

Here's the wildest part:

It runs everything inside sandboxed Docker containers. Full isolation. It picks the right container image for each task automatically.

It has a knowledge graph powered by Neo4j that tracks relationships between targets, vulnerabilities, tools, and techniques across every single test.

Cybersecurity firms charge $25K-$150K per engagement for this exact workflow.

This is free.

100% Open Source. MIT License.

@xurbanxcowboyx @dieworkwear @kevinxu According to the Education Data Initiative, the average 4-year cost of state college tuition + room and board is $150k

@dieworkwear @kevinxu Also most normal state universities don't cost $200k

Here’s my last play of the night for CBB🏀

SMU Mustangs -7.5 (-110)

Miami Ohio’s defense is effectively nonexistent. They’ve struggled to protect the rim all year, even against subpar MAC competition, so with SMU’s slashing offense, I expect the Mustangs to dominate at the rim on their way to a sizeable win tonight
