AllThingsIntel

317 posts

@AllThingsIntel

Software & Intelligence Engineer | Creator of Apollo, the First Open Source In-Character Reasoning Model

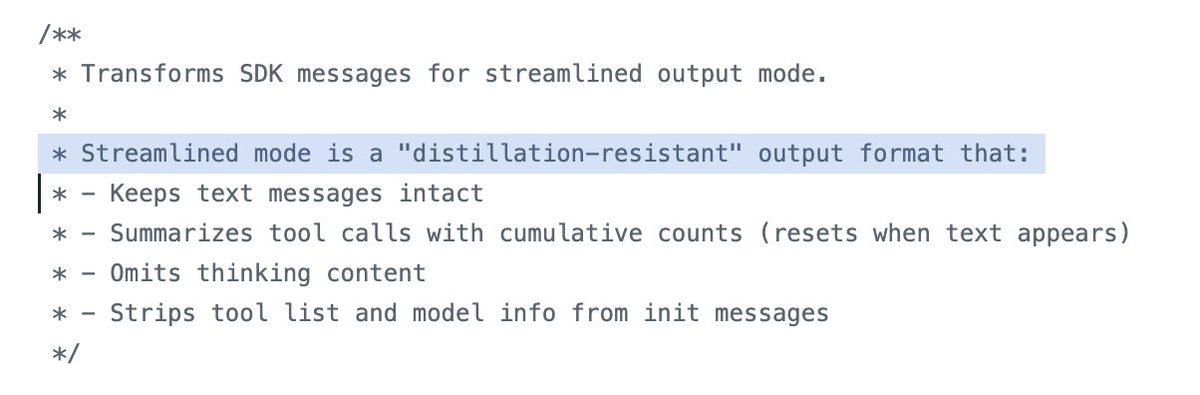

Claude Code source code has been leaked via a map file in their npm registry! Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

Hot take: Anthropic leaked Claude Code intentionally to get a nerdosphere code review it would have never gotten if they had just open-sourced it.

Matt Maher tested frontier models in Cursor vs. other harnesses. Cursor boosted model performance by 11% on average:

Gemini: 52% → 57%
GPT-5.4: 82% → 88%
Opus: 77% → 93%

His benchmark measures how well models implement a 100-feature PRD. @cursor_ai consistently outperformed.

I'm not sure that I like Claude 5 Mythos, but I understand why they don't want to call it Epic. I am quite fond of Symphony and Requiem, so let's vote.

I often think about this...

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through the depths of hell to bring you what will be, for the foreseeable future, one of the more important foundational pieces of UI engineering (if not in implementation, then certainly in concept): a fast, accurate, and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow.