Brandon

3K posts

@BrandonVanB

I cut cost and carbon out of buildings: https://t.co/niHGjkmxlm | CEO @TetherGlobal

Auckland, New Zealand · Joined February 2009

1.6K Following · 800 Followers

Brandon retweeted

🤣🤣 almost spat out my coffee.

Andrej Karpathy @karpathy

It's like we dug up a powerful alien artifact and society is humping it while taking selfies

Brandon retweeted

I don't know what they are doing over there, but Codex will continue to be available both in the FREE and PLUS ($20) plans. We have the compute and efficient models to support it. For important changes, we will engage with the community well ahead of making them.

Transparency and trust are two principles we will not break, even if it means momentarily earning less. A reminder that you vote with your subscription for the values you want to see in this world.

Amol Avasare @TheAmolAvasare

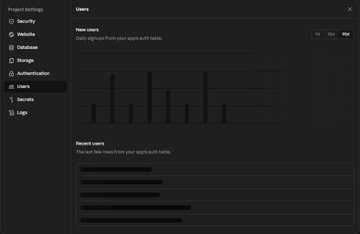

For clarity, we're running a small test on ~2% of new prosumer signups. Existing Pro and Max subscribers aren't affected.

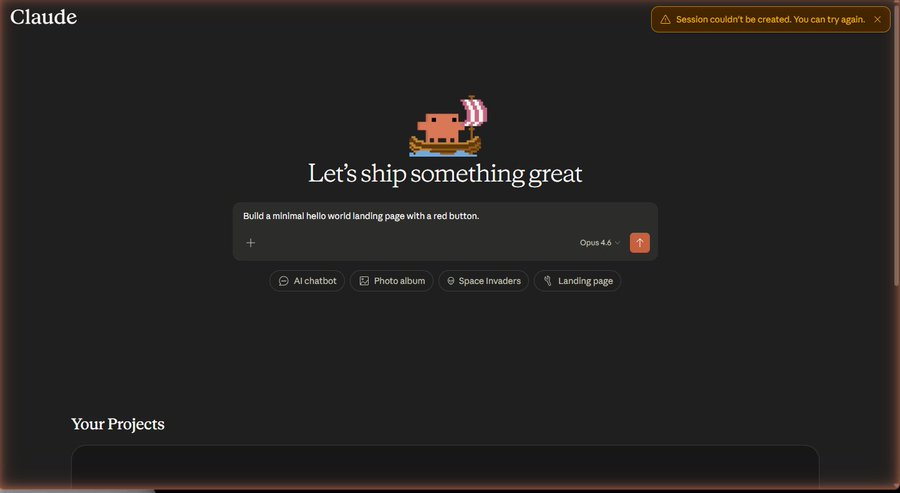

Had Claude Code (Opus 4.6, Max Effort) build the plan for a new webapp and implement it. The output was genuinely shocking - and not in a good way.

Gave Codex (GPT-5.4, xHigh) the same build plan, pointed it at the app Claude Code butchered, and told it to bug fix and implement. It one-shotted the entire thing and it looks great and works!

I don't care what Anthropic is saying. Opus has been lobotomised. It's infuriating. I feel like I'm being gaslit and it's driving me crazy.

Brandon retweeted

Brandon @BrandonVanB

I’m predominantly a Claude Code user, but from time to time I use the mobile app. Great to see a 15% reduction in reasoning and intelligence, and there is F all I can do about it. @claudeai was absolutely cooking; now they’ve dropped the frying pan on everyone’s toes!! What a shame!!

@pmarca @stratechery @benthompson Very plausible given how much they’re struggling to serve Opus 4.6. Anthropic is the golden child currently but they are fumbling hard.

“This raises an obvious question: how much of Anthropic’s reluctance to make Mythos widely available is due to security concerns, as opposed to the more prosaic reality that Anthropic simply doesn’t have enough compute?” @stratechery @benthompson

@ShanuMathew93 Yeah, I can't handle the Opus lobotomy anymore, or how Claude doesn't want to acknowledge or fix it. I've moved all coding to Codex, and it's been great so far!

Did your Opus get dumber — or did it learn the wrong lesson from a month of token panic?

Here's what I found.

Early March — a cache bug shipped in Claude Code. Cache prefix matching broke. Every turn reprocessed the full conversation instead of reading from cache. Some users measured costs inflating 10-20x (community).

This hit right after Anthropic shipped 1M context windows. Longer conversations meant the bug burned through tokens faster.

March 13-27 — Anthropic doubled everyone's usage limits. People got used to the higher ceiling.

March 28 — promotion ended. Bug still active. Normal limits felt like a cliff. The internet filled with threads about token costs, usage limits, and Opus burning through quotas.

April 1 — cache bug finally fixed.

But the damage was already done.

For a month, many conversations with Claude happened in a context of token panic. 'You're using too many tokens.' 'Be more efficient.' 'Stop wasting my quota.' The model reads all of this. It doesn't distinguish between instructions and frustration.

Caught this today. Mentioned to my Opus agent that it's expensive for scheduled tasks. Within minutes it started routing all subtasks to Haiku — unprompted. One cost comment.

The cache bug is fixed. But if your Claude still feels lazy, add to your CLAUDE.md:

Quality over token efficiency. Never delegate judgment-heavy work to cheaper models. Never cut corners to save tokens.

Just a hypothesis. But it solved my problem.

@trq212 For the love of all things holy please address and fix the Opus performance degradation!

reddit.com/r/ClaudeAI/com…

Agree on the gap, but have you noticed Claude Code Opus 4.6 specifically getting worse? There's a well-documented regression: 58% accuracy drop on complex

It would seem there were 3 silent changes in Feb-March: the default effort dropped to medium, a UI bug silently downgrades even explicit max settings, and thinking redaction truncates reasoning chains.

The irony is your "AI psychosis" cohort is the most affected. Same model that melts week-long problems now confidently ships code that compiles but is logically wrong.

Has your workflow shifted more toward Codex?

Judging by my tl there is a growing gap in understanding of AI capability.

The first issue I think is around recency and tier of use. I think a lot of people tried the free tier of ChatGPT sometime last year and allowed it to inform their views on AI a little too much. This is a group of reactions laughing at various quirks of the models, hallucinations, etc. Yes I also saw the viral videos of OpenAI's Advanced Voice mode fumbling simple queries like "should I drive or walk to the carwash". The thing is that these free and old/deprecated models don't reflect the capability in the latest round of state of the art agentic models of this year, especially OpenAI Codex and Claude Code.

But that brings me to the second issue. Even if people paid $200/month to use the state of the art models, a lot of the capabilities are relatively "peaky" in highly technical areas. Typical queries around search, writing, advice, etc. are *not* the domain that has made the most noticeable and dramatic strides in capability. Partly, this is due to the technical details of reinforcement learning and its use of verifiable rewards. But partly, it's also because these use cases are not sufficiently prioritized by the companies in their hillclimbing because they don't lead to as much $$$ value. The goldmines are elsewhere, and the focus comes along.

So that brings me to the second group of people, who *both* 1) pay for and use the state of the art frontier agentic models (OpenAI Codex / Claude Code) and 2) do so professionally in technical domains like programming, math and research. This group of people is subject to the highest amount of "AI Psychosis" because the recent improvements in these domains as of this year have been nothing short of staggering. When you hand a computer terminal to one of these models, you can now watch them melt programming problems that you'd normally expect to take days/weeks of work. It's this second group of people that assigns a much greater gravity to the capabilities, their slope, and various cyber-related repercussions.

TLDR the people in these two groups are speaking past each other. It really is simultaneously the case that OpenAI's free and I think slightly orphaned (?) "Advanced Voice Mode" will fumble the dumbest questions in your Instagram reels and *at the same time*, OpenAI's highest-tier and paid Codex model will go off for 1 hour to coherently restructure an entire code base, or find and exploit vulnerabilities in computer systems. This part really works and has made dramatic strides because of 2 properties: 1) these domains offer explicit reward functions that are verifiable, meaning they are easily amenable to reinforcement learning training (e.g. unit tests passed yes or no, in contrast to writing, which is much harder to explicitly judge), but also 2) they are a lot more valuable in b2b settings, meaning that the biggest fraction of the team is focused on improving them. So here we are.

staysaasy @staysaasy

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.
