Hardware at the speed of software

@HardwareSpeed

Hardware should move at the speed of software. Exploring how spatial AI will make that happen.

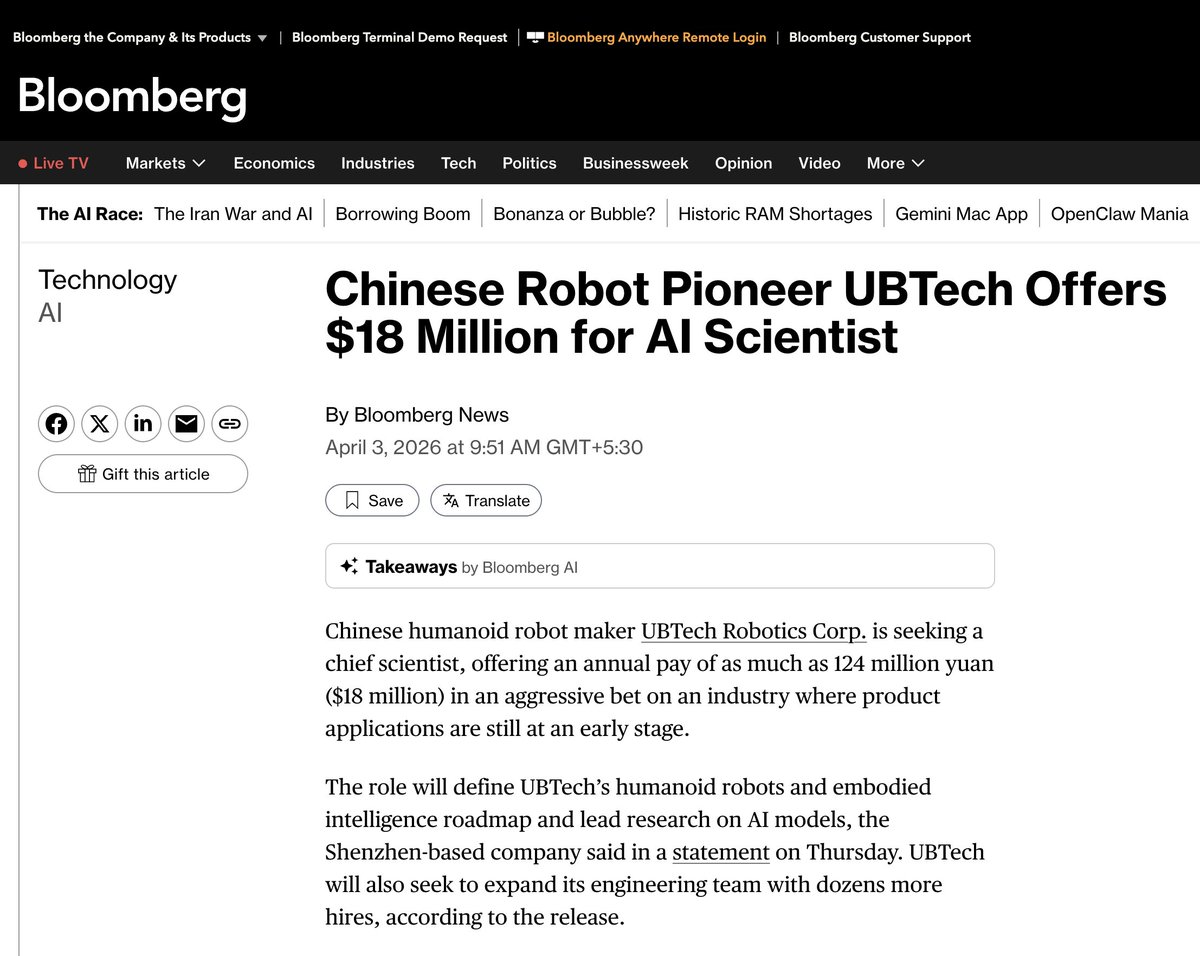

Top talents worldwide gather to explore the future of embodied intelligence! Compete in the Reasoning-Action and World Model tracks for a $530K prize pool. Top teams present at ICRA 2026 and receive AGIBOT robot purchase vouchers. Apply now and join the challenge.

Robot car sales are here! The AgiBot A2 interactive service robot is at the 2025 Shanghai Auto Show, showcasing its skills at booths for SAIC Roewe, BAIC, Changan, and more. It's handling car demos, bilingual interaction, and sales pitches like a pro!

People in manual trades still say they will never be replaced... take a look at what follows. China is building data factories for robots. At a scale nobody anticipated. What you see in this video: rows of human operators equipped with VR headsets and controllers, teleoperating humanoid robots in real time. Every movement is captured, recorded, then sent to the cloud to train the AI. That is how robots learn. Not by reading code. By copying humans (as long as world models are not yet operational). The largest center of this kind just opened in Zigong, Sichuan. 6,000 m². Target: 15,000 training data entries per day. 3 million high-quality entries per year. A new profession is born: "AI robot trainer". Operators wear VR headsets, and their movements are replicated in real time by Walker S2 robots. Parcel sorting, coffee making, cleaning. Task after task, the robot accumulates thousands of data trajectories per session. Why this matters: China has more than 140 humanoid robot manufacturers and more than 330 different models. The bottleneck is no longer the hardware. It is the data. And China is solving this problem by brute force: hundreds of workers in giant centers performing mundane tasks for hours. The workers call themselves "cyber-workers". Meanwhile, on March 29, China's first automated humanoid robot production line started up in Guangdong. Capacity: 10,000 units per year. One robot rolls off the line every 30 minutes. Goldman Sachs estimates the global humanoid robot market at $38 billion by 2035. While the West debates AI ... China is training armies of robots. Literally. The next revolution is not written in lines of code. It is learned by imitation. And it has already begun.

weekend update: what was supposed to be a dog, ended up as a spider 🕷️