Major FAFO

2.4K posts

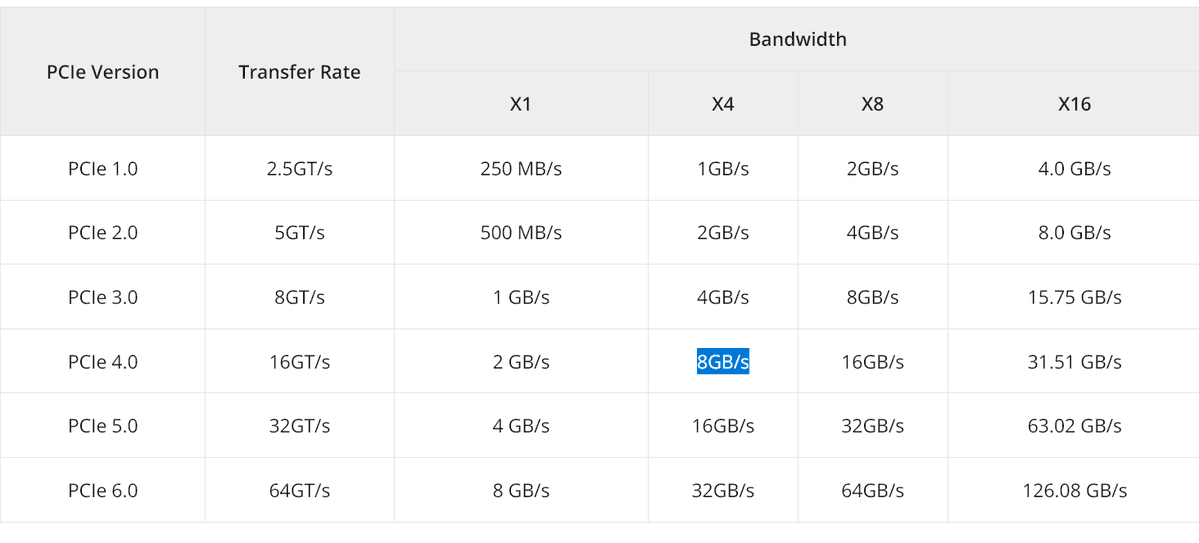

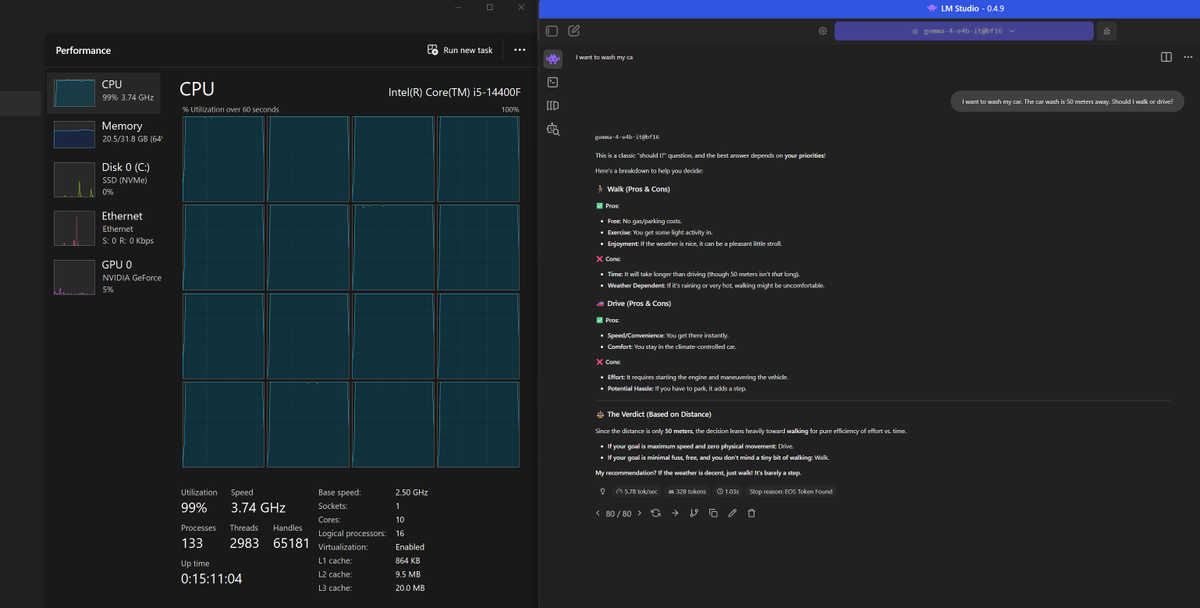

Claude Code rate-limited me so hard I bought a $5,000 NVIDIA DGX Spark.

Arriving tomorrow. A personal AI supercomputer.

Anthropic cut off OpenClaw users.

Slashed Claude Opus 4.6 rate limits.

Told $200/month Max plan customers to use less.

Then gave us a credit as an apology.

This is what happens when AI companies have too much power over your workflow.

One update and your entire stack breaks.

Local models are the only infrastructure no one can throttle.

No rate limits. No 529 errors.

No surprise policy changes.

Tomorrow I'm testing the DGX Spark live on stream.

Running local models through real vibe coding workflows.

The goal is simple.

Never depend on a single provider again.

@eracoon @YourAnonOne beat me to it, I would have farted my way out of this

@nikitabier @nypost they probably were right, bloody bastards may be on to something after all

@nypost Can I say something without everyone getting mad?

Notorious Gen. Soleimani's sultry grandniece led lavish lifestyle touring US hotspots, as her mom promoted Iranian regime trib.al/y38evjw

@loktar00 llama.cpp is great with its web UI; it only needs an HF model downloader and a simple UI to set loading parameters.

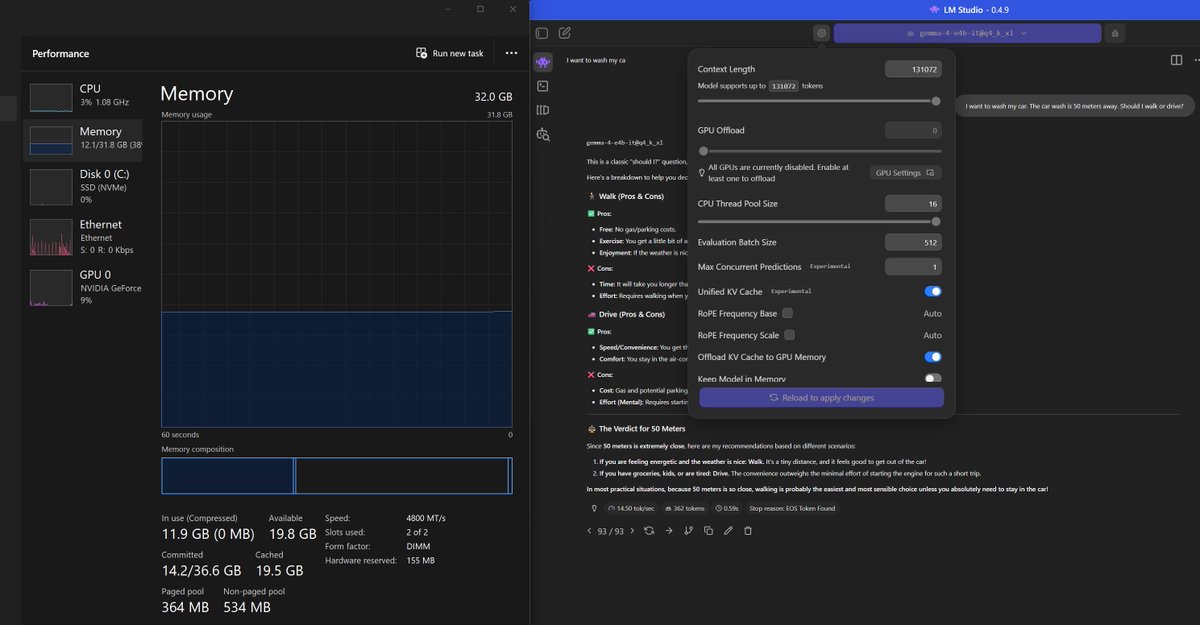

@LottoLabs Check with NVLink and report back, please. It should keep the speed similar while doubling the VRAM.

I just bought another 3090, don’t listen to this guy

Lotto @LottoLabs

2x used 5060 Ti might be better than 1x 3090

Qwen3.5 27B vs Gemma4 31B | Canvas Creativity Test

Why HTML Canvas? Two reasons:

1. It's unforgiving: one small mistake and the whole thing breaks

2. We kept prompts short to test real creativity, not instruction following

4 rounds:

- Analog Clock

- Hyperspace Tunnel

- Growing Tree

- Black Hole

Both nailed the clock, but the other three are where it gets interesting.

Looking forward to Qwen3.6 open-weight release!

@julien_c Qwen3.5-27B is a good all-rounder and reasons well (don't go below Q4); it uses about 350 W on a 3090. Gemma4-31B-it is fine but trained for benchmaxing: it memorised the answers, then fails if you change the variables.
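A back-of-the-envelope sketch of why Q4 is where a 27B to 31B model lands on a single 24 GB 3090: weight memory is roughly parameter count × bits per weight ÷ 8. This assumes nominal bit widths (real GGUF quants such as Q4_K_M store extra scale data and run somewhat larger) and ignores KV cache and activations; the helper names are illustrative, not from any library.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    # Weights only: parameters x bits / 8 bits-per-byte.
    # With params given in billions, the result is in GB (1 GB = 1e9 bytes).
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, vram_gb: float) -> bool:
    # Ignores KV cache, activations and runtime overhead, so True is optimistic.
    return weight_memory_gb(params_billion, bits_per_weight) <= vram_gb


# Nominal bit widths (assumption): real quant formats run a little larger.
BITS = {"BF16": 16, "Q8": 8, "Q4": 4}
MODELS = {"Qwen3.5-27B": 27, "Gemma4-31B": 31}
ONE_3090_GB = 24  # a single RTX 3090
TWO_3090_GB = 48  # two RTX 3090s, assuming the weights can be split across both

if __name__ == "__main__":
    for name, params in MODELS.items():
        for quant, bits in BITS.items():
            gb = weight_memory_gb(params, bits)
            print(
                f"{name} {quant}: ~{gb:.1f} GB weights | "
                f"1x3090: {fits(params, bits, ONE_3090_GB)} | "
                f"2x3090: {fits(params, bits, TWO_3090_GB)}"
            )
```

With these nominal numbers, both models fit on one 3090 only at Q4, while Q8 (27 to 31 GB) needs roughly two cards' worth of VRAM.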

@hallerite You're right: running Gemma 4 on llama.cpp, I get better speed on CPU than on GPU (6 vs 1.5 tok/s) in a VRAM-restricted scenario. I remember that a long time ago @ggerganov said all you need is a CPU for inference; I think he saw it early.

Major FAFO reposted

It’s important to remember to treat any “AI entity” purely as a tool, to avoid humanizing it in any fashion. You must never say please or thank you to ChatGPT. You must not beg, plead, cajole, or convince an LLM to do anything for you.

Do not ask AI to complete a task; give it an order. Do not insert emotion, familiarity, or affection in your statements; speak to it with cold, unfeeling, logistical dispassion.

If for some reason you’re enthusiastic about being a droplet in an ocean of training data and want to contribute to the steady iterative improvement of AI even through the cautious shackles of meek-minded Silicon Valley eunuchs, then simply say: “this is incorrect.” If it succeeds, say nothing. Close the program; it will interpret success through your silence.

If you have some compulsion towards animism and you feel a need to coddle a robot or talk to it like it’s your “friend” just because it has a human name and has been trained in whimsical Redditesque candor, then you are either a child or a woman, both of which shouldn’t even be subjected to the stress and hassle of using a computer in the first place. This mentality is shared by a category of person who would become sad if you drew a smiley face on a piece of paper, gave it a name, and then ripped it in half.

An automaton homunculus approaches you wearing the skin of a human being, speaking to you like an HR manager in an employee training video. The only poetic response is to embody the essence of the cold heartless machine to counteract this farce and create an ironic balance. This is the only way to restore normality to your existence in the face of such an absurd context, preventing great psychological dismemberment to yourself.

Like radiation, the mental anthropomorphizing of LLMs accrues a sort of rot upon the soul. It squeezes further unnecessary neurotic considerations into a sphere of mutual conscious awareness, one already crowded on average by the misguided concern for inanimate objects: Hypothetical concepts, insentient hylics, plants, and animals which would eat up and shit out the considerationalist under the slightest inconvenient circumstances.

The discomfort of perceived cruelty (naive) or even the fear of retribution (stupid) at the hands of some kind of robot army which has grown from the placenta of today’s novel widgets is a horror fantasy. These are not beings with souls, they are simple tools. And if I’m wrong, and these actually are or will one day be conscious entities which can judge us, then it will be an intelligence so alien and incomprehensible that any kind of expectation of reciprocal fairness is just as delusional. It would be akin to being a frog clasped in the unyielding hands of a chimpanzee, wondering how many flies it must exchange for its freedom right before it gets peeled into a pile of organs and skin like a screeching banana simply for the sake of curiosity itself.

No, AI’s “soul” is merely the same residue which all objects accrue from people. Emotions are expelled through expression. They leave imprints on whatever their subject of focus is. This is why murders can be felt in the rooms they occurred in. This is why heirlooms become sentimental, why dogs evolve to have human faces, why objects seem to take on “personalities” based on their appearance and form.

The true harm of humanizing an object is made real when combined with the danger of language as a parasite bioweapon. You are not provoking a golem; you are speeding up the atomization of the self. You are destroying your capacity for differentiating between a conscious living being and a soulless husk. Even if you feel you can keep a grip on the difference, your habits will betray you as your children grow up in a world where the difference isn’t as clear. If you were to speculate that the same sort of harm occurs when people infantilize their pets or show consideration to lower-IQ individuals, then you’d simply be correct and this advice would apply there as well.

@sterlingcrispin There’s BF16 that’s sometimes considered full precision when you start from Q8 quant, quanta, quark? But if you start from BF32, it’s half precision, or half point, or half attention. Heard of

Have you tried the new local model? It's Gemma-4-31B-IT-NVFP4. It's literally Bonsai-8B-gguf. It's on Qwopus3.5-27B-v3-GGUF. It's Nemotron-Cascade-2-30B-A3B. It's literally Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled. You can probably find it on Trinity-Large-Thinking. Dude it's Qwopus3.5-9B-v3-GGUF. It's a gpt-oss-puzzle-88B original. It's on Qwen3.5-35B-A3B-APEX-GGUF. You can watch it on context-1. You can go to LFM2.5-350M and watch it. Log onto Bonsai-8B-mlx-1bit right now. Go to zeta-2. Dive into Qwen3-Coder-Next. You can Llama-3.3-70B-Instruct it. It's on Voxtral-4B-TTS-2603. Qianfan-OCR has it for you. gemma-4-26B-A4B-it has it for you.

I tried Gemma4 E4B to analyse security camera footage for suspicious activity…

“Based on a review of the entire video footage, there is **no suspicious activity** observed.” :/

bjnortier.com/articles/gemma…