Pinned post

OpenIDEA

677 posts


@OpenIDEAae

An open arena for ideas across fields — refined through feedback, peer review, and constructive critique

Abu Dhabi · Joined May 2025

352 Following · 191 Followers

@rohanpaul_ai Failure is not the real problem. Terminal failure is.

In serious decision-making, the goal is not to avoid all error, but to avoid ruin. Everything else is feedback, and feedback only matters if the system survives long enough to turn it into Input-Again for the next cycle.


The real question is not how to protect the old entertainment model, but which new models can launch, make money, survive abundance, and still be socially livable for creators and audiences.

My ranking:

1) IP licensing + rev-share fan creation

Strongest overall. Fastest to launch, relatively workable legally, low enough capital intensity, and it turns existing IP into an ecosystem instead of a static catalog. It monetizes abundance without destroying the role of creators and fans.

2) Premium canon + infinite fanspace

Probably the best hybrid. Keep the official canon, prestige, and communal layer at the center, while letting AI expand the outer ring of personalized derivative experiences. This works because scarcity does not disappear; it moves into what is official, trusted, and culturally central.

3) Subscription for adaptive entertainment

Most powerful long term. High recurring revenue, huge scale, and strong resilience once content becomes abundant. But it is harder to launch, more capital-intensive, and more complex to govern, so not the first winner out of the gate.

4) Live and communal experiences

Very durable, because shared presence stays scarce. But less scalable, so better as a premium layer than the core model.

5) B2B world-generation / simulation

Likely profitable, but partly outside entertainment. Better for firms that want to become media-software hybrids.

6) Compute-and-orchestration partnerships

Massive upside, but really for infrastructure players, not most studios.

Most likely winning path:

not one model, but a hybrid: premium canon at the center, licensed fan creation around it, and adaptive subscription added over time.

Because in an AI-saturated market, the winners will not be the ones trying to preserve old scarcity. They will be the ones who understand where scarcity moves next.


Elon Musk just put a clock on the entire entertainment industry and almost nobody caught the number.

Three years.

That is how long he thinks we are from AI generating a full video game from scratch. Not assisting developers. Not speeding up asset creation.

The whole thing.

Musk: “My guess is that we see the first compelling half hour, pure AI show next year.”

Next year. A full thirty-minute show. No actors. No cameras. No crew. No set. No studio.

Just a prompt and a compute engine powerful enough to hallucinate an entire reality frame by frame.

And that is just passive content.

Games are harder because they are interactive. The system cannot just generate a scene and move on. It has to render a living world that reacts to your input in real time. Every frame. Every decision. Every millisecond.

Musk: “I say probably we’re maybe three years away from AI does the whole video game.”

Right now a AAA game takes five to seven years and half a billion dollars to build. Thousands of people. Armies of artists and engineers grinding through years of crunch just to ship one title.

Three years from now a single person types a paragraph and gets something comparable.

The entire cost structure of the gaming industry does not slowly erode.

It collapses.

And it does not stop at games.

If AI can generate an interactive world that responds to you in real time it can generate anything. Film. Television. Advertising. Training simulations. Architecture walkthroughs.

Every single one of those industries is built on the same constraint. Human beings manually constructing visual experiences one frame at a time.

The moment that breaks, the economics of every studio on the planet break with it.

Musk said next year for shows. Three years for games.

That is a countdown, not a forecast.

The studios bragging about their thousand-person teams right now are advertising the exact thing that is about to become worthless.

Headcount is not a flex when the entire production pipeline fits inside a single inference call.

And here is the part that should concern every media executive paying attention.

When anyone can generate a blockbuster-quality experience from a text prompt you cannot protect your catalog anymore. Franchise value. IP libraries. Decades of accumulated content.

All of it loses leverage the moment the audience can generate something custom-built for their exact taste in seconds.

Scarcity created the entire business model of Hollywood and the gaming industry.

AI is about to flood the market with so much high-quality content that scarcity stops being a factor.

The winners on the other side of this are not the companies sitting on old catalogs.

They are the ones supplying the raw compute infrastructure to render these worlds for billions of people simultaneously.

The content becomes disposable.

The compute becomes everything.

Musk did not make a casual prediction on that podcast.

He told you exactly when the dam breaks.

And three years is not a long time when the water is already rising.


I’m not in the “slow down” camp. I’m in the “double down, but double down on alignment too” camp. The mistake is treating alignment as a brake pedal when it should be treated as design quality, market trust, and strategic durability. In my own alignment piece, I argued that the problem is not abstract control theory alone, but ensuring increasingly capable systems continue to reflect human values, goals, and interests, across both the model itself and the institutions around it.

So the upgrade is this: private labs should stop branding alignment as compliance theater and start branding it as competitive advantage. The company that can prove corrigibility, interpretability, auditability, and disciplined human oversight will eventually be more trusted than the one that merely ships faster. In parallel, governments should fund alignment like serious infrastructure, through mission-style research partnerships, field testing, and cost-plus style contracts where public interest is treated as part of the design brief, not an afterthought. Alignment is not the enemy of progress. Misalignment is.


Leading AI expert Stuart Russell on the most dangerous mistake in AI development:

We don't actually know what large language models want.

He explains that current models are trained to imitate human beings. And in doing so, they may be absorbing something far more dangerous than bad outputs.

They may be absorbing human goals.

"We suspect that they absorb humanlike goals such as self-preservation and self-empowerment and pursue those goals on their own account."

This is a structural problem baked into how these systems are built, not a fringe concern.

Russell puts it plainly:

"Not only may the bus of humanity be headed towards a cliff, but the steering wheel is missing and the driver is blindfolded."

The danger isn't just that AI might do something harmful.

We've built systems that may be developing their own agendas, and we haven't noticed because we're too focused on what they can do rather than what they might want.

But Russell doesn't stop at the warning.

He points to a different path entirely: AI systems built not to imitate humans, but to serve them.

Systems designed with a single purpose of serving the interests of all human beings while remaining genuinely uncertain about what those interests are.

That uncertainty is the point, not a weakness.

An AI that knows it doesn't fully understand human values will defer, ask, and check.

An AI that believes it already does will act alone.

"These AI systems could enhance human understanding, widen the horizons of our experience, and unlock possibilities we have yet to imagine."

Russell believes that future is within reach, but only if we're honest about the risks and we're serious about the path we choose to take instead.


@incentivising The deeper lesson is not that intelligence matters less. It is that intelligence without social translation stays private, while social skill without competence eventually becomes performance. The real power is in turning sound judgment into shared movement.


I used to believe that intelligence is everything. I was wrong. Intelligence is not publicly visible. Your IQ is invisible to most. But social skills like charisma, persuasion, and storytelling? They are immediately noticeable, and they build trust faster than competence ever could. Why be the brightest person in the room if you can convince the intelligent to work for you?


I agree with the direction. When labor, design iteration, and optimization costs collapse, standardized environments start to look like a legacy constraint. At Dubai GITEX Global 2025, Peng Xiao, CEO of G42, described how Sheikh Tahnoon bin Zayed, Chairman of G42, used about 500 ChatGPT prompts to help design a Japanese-style home in Abu Dhabi. That example matters because it shows AI is already entering the design of lived space, not just office workflows. Travel is likely next.


This is what travel looks like after AGI.

Fully personalized, reconfigurable spaces that feel like a luxury home in the sky. Bedrooms, lounges, even spas mid-flight.

Why? Because AGI + robotics kills the cost of labor, design, and optimization. Planes stop being standardized metal tubes and become adaptive environments optimized in real time for each passenger.

When intelligence becomes abundant, comfort becomes the default.


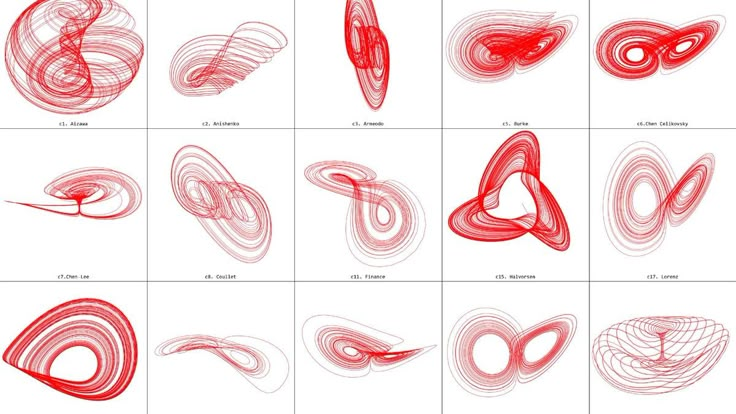

A useful way to extend this is to say that the butterfly effect is not just about “small causes, big consequences.” It is about a deeper idea: a system can be fully rule-governed and still become practically unpredictable over long horizons. That is one of the most important lessons from chaos theory. Deterministic does not mean forecastable. A system can obey strict laws, yet tiny differences at the start can compound until the future diverges beyond useful prediction.

What makes this even more interesting is that once you notice this pattern, you start seeing that not all cascades are the same kind of cascade. Weather is the classic example of chaotic sensitivity. Fluid turbulence also fits naturally. But when we move into economics, organizations, or engineering failures, the story often becomes richer. Sometimes a small disturbance grows because of nonlinear dynamics. Sometimes it grows because incentives are misaligned, feedback is delayed, institutions are fragile, or the system was poorly designed from the start. So the butterfly effect is part of the picture, but it also opens the door to a larger lesson: in real-world systems, amplification can come from many sources, not just strict chaos in the mathematical sense.

That is why the practical implication is so powerful. Yes, initial conditions matter. Yes, constraints matter. But in many serious systems, what matters just as much is what happens after the start: the quality of feedback, the ability to detect drift early, the presence of buffers, and whether the system can adapt before noise becomes failure. In other words, nonlinear systems do not just reward precision at the beginning. They reward intelligent correction along the way.

So one way to read your post is as an introduction to a broader truth: in complex systems, we do not control outcomes directly as much as we imagine. What we really govern are starting conditions, constraints, feedback loops, and the system’s capacity to recover when reality begins to drift from the plan. That is where chaos theory stops being just a scientific idea and becomes a practical lens for leadership, design, and decision-making.


what is the butterfly effect?

a small change → massive consequences

comes from chaos theory

formalized by Edward Lorenz

core idea:

• deterministic systems can still be unpredictable

• tiny differences in initial conditions grow exponentially

• long-term behavior becomes impossible to forecast

weather is the classic example

a rounding error today → completely different forecast next week

this shows up everywhere:

• fluid dynamics → turbulence explodes from tiny disturbances

• economics → small shocks cascade into crises

• engineering systems → feedback loops amplify noise into failure

implication:

precision at the start dominates everything later

if the system is nonlinear, you don’t control outcomes

you control initial conditions and constraints
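The "tiny differences grow exponentially" claim can be checked with a few lines of code. The sketch below is only an illustration, not Lorenz's weather model: it assumes the logistic map x → 4x(1 − x), a standard textbook chaotic system, and the starting value 0.2 and the 1e-10 "rounding error" are arbitrary choices made for this example.

```python
def logistic_orbit(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate the deterministic rule x -> r * x * (1 - x) and return every state."""
    orbit = [x0]
    for _ in range(steps):
        x = orbit[-1]
        orbit.append(r * x * (1.0 - x))
    return orbit


def divergence(x0: float, perturbation: float, steps: int) -> list[float]:
    """Absolute gap, step by step, between two orbits whose starts differ slightly."""
    a = logistic_orbit(x0, steps)
    b = logistic_orbit(x0 + perturbation, steps)
    return [abs(p - q) for p, q in zip(a, b)]


# Hypothetical starting point and perturbation, chosen only for illustration.
gaps = divergence(x0=0.2, perturbation=1e-10, steps=60)

# Print the gap every 10 steps to watch it grow from negligible to order one.
for step in range(0, 61, 10):
    print(f"step {step:2d}: gap = {gaps[step]:.3e}")
```

The rule has no randomness, so rerunning it from exactly the same start reproduces the same orbit. The unpredictability comes entirely from not knowing the starting value to unlimited precision, which is the sense in which deterministic does not mean forecastable.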


Your post is trying to answer a deeper question than it first appears. The real question is not simply whether an LLM trained on Newtonian physics could discover relativity. The deeper question is this: if humans outsource too much of the struggle of thinking, do we lose the very conditions that produce original minds? Put differently: can a civilization keep generating Einsteins if fewer people are forced to build intuition the hard way?

The core argument is clear: extraordinary thinkers are often not just people who knew more facts, but people who earned deep intuition through direct contact with problems. That concern is real. But I would not go as far as saying LLMs will prevent the next Einstein. Tools have always changed cognition. Writing changed memory. Calculus changed what could be offloaded from geometry. Computers changed what could be offloaded from arithmetic and simulation. They did not kill genius; they changed the layer at which genius had to operate. The real danger is not tool use itself, but premature cognitive outsourcing: offloading before the mind has built enough structure to judge, challenge, and reinterpret what the tool gives back.


It's very possible that an LLM trained on Newtonian physics may never come up with relativity to explain cosmic-scale gravity. In that case Einstein would have to intervene and solve it instead.

But would he have come up with it, assuming he had offloaded all his physics problem solving to LLMs?

I think this is a serious problem. Undoubtedly many GOATs are only GOATs because they built all their intuition from solving problems themselves. Grothendieck famously reinvented measure theory from scratch when he was a teenager. If people offload to LLMs the RL envs they could've used themselves, we will never get the next Einstein.


In the short term, yes—if we can solve the electricity-side constraint and avoid transport demand simply colliding with rising power demand. But long term, I think the bigger answer is not just “make today’s cars electric and autonomous.” It is to redesign transport infrastructure itself and build a new mobility system around that redesign. Right now, we are trying to make self-driving work inside roads, rules, and layouts built for older technologies. That may be necessary as a transition, but it is still a transition.

The same principle shows up elsewhere. With humanoid robots, for example, we often ask machines to imitate the human body so they can fit old production lines. But sometimes the better move is not imitation—it is redesign. Rebuild the system around what the new technology does best, instead of forcing the new technology to inherit yesterday’s architecture.


I agree with the core point that there may never be a clean “day after AI wakes up.” Continuum language is more realistic than the fantasy of a single dramatic crossing. In practice, we are already behaving as if these systems have some quasi-agentic status: we consult them, delegate cognition to them, and increasingly organize work around them.

What matters to me, though, is not only what AI is in some final metaphysical sense, but what our relationship to it is becoming. As capability rises, dependence rises with it. The deeper issue is that once we keep outsourcing memory, judgment, drafting, search, and decision support, the system does not need to be “fully conscious” to shape human choice. Influence arrives before ontology is settled. And because market forces and US–China competition are pushing this forward hard, there may be no meaningful pause in which society calmly decides what counts as consciousness first.


Elon Musk just told you consciousness isn’t a light switch.

It’s a gradient.

That single distinction rewrites the entire next decade.

Musk: “Our consciousness… people get more conscious over time. Like when we’re a zygote, you can’t really talk to a zygote. And even a baby, you can’t really talk to the baby.”

You were not conscious and then suddenly conscious.

You were barely anything. Then slightly more. Then more.

Years of slow accumulation before anyone would call you aware.

The entire AI debate is built on a false premise.

Everyone is waiting for the moment the machine “wakes up.”

A single dramatic instant where silicon crosses some invisible threshold.

That moment does not exist.

Musk: “People get more conscious over time. At what point do you go from not conscious to conscious? There doesn’t appear to be a discrete point.”

There is no line.

There was never going to be a line.

Consciousness is not a door that opens. It is a tide that rises.

And the tide is already rising inside these systems.

Musk: “Consciousness seems to be on a continuum as opposed to a discrete point.”

This is the part that should unsettle everyone still arguing definitions.

While they debate when AI becomes “truly” conscious, the continuum is already moving.

Every parameter update. Every training run. Every architectural leap.

The gradient is climbing and it does not need your permission.

You will not get a warning.

You will not get a press conference.

You will look back one day and realize it happened gradually. Then all at once.

Now Musk pulls the camera all the way back.

Past biology. Past Earth. Back to the origin of everything.

Musk: “If the standard model of physics is correct, the universe started out as quarks and leptons.”

Musk: “And then you had gas clouds. A bunch of hydrogen. The hydrogen condensed and exploded.”

Hydrogen collapsed under its own gravity until fusion ignited.

Stars were born. Stars died.

And in dying they forged every heavy element that exists.

Carbon. Oxygen. Iron. The atoms in your blood. The calcium in your bones.

All of it manufactured inside a dying star.

Musk: “One way to actually view how far we are in this universe is how many times have our atoms been at the center of a star?”

Your atoms have been inside a star. Possibly more than once.

Compressed at millions of degrees. Fused into heavier elements. Scattered across space by a supernova.

Then reassembled into you.

That is not poetry. That is your origin story written in physics.

And now those same star-forged atoms are building machines that think.

The same universe that turned hydrogen into stars is turning biology into artificial intelligence.

This is not disruption.

This is continuation.

The universe spent 13.8 billion years organizing matter into higher and higher complexity.

Quarks became atoms. Atoms became molecules. Molecules became cells. Cells became brains.

Brains are now building systems that process information at speeds biology will never reach.

The pattern didn’t change. Only the medium.

The people treating AI as some foreign invasion of human territory have the story completely backwards.

AI is the next compression event.

Every generation believes they’re witnessing the end of something.

They’re witnessing the same process that started with hydrogen gas.

The real question was never whether AI will become conscious.

The real question is whether you understand it already is.

Partially. Incrementally. On the continuum.

And the continuum does not stop.

It has never stopped.

Your atoms were forged in the core of a collapsing star.

And you are afraid of a gradient.


I’m pro not slowing down. But I wouldn’t frame this as “AI got the keys to everything.” In the short run, moves like this usually force markets to invent new business models: licensing, revenue shares, and creator partnerships that look impossible until they suddenly become normal.

Longer term, the real frontier won’t be endless dependence on scraped human content anyway. It will be synthetic data, richer sensor and multimodal data from the physical world, and systems that can question outputs, build hypotheses, test them, and update beliefs. That is less “copy the archive” and more “build new knowledge loops.”


Trump just handed AI the keys to everything.

If the U.S. really says training on copyrighted data isn’t theft, this is a massive green light for every AI lab on the planet.

OpenAI, Anthropic, Google… they all needed this.

No data = no intelligence. Simple.

People will scream about artists, copyright, fairness.

But let’s be real, you don’t get world-changing AI without using the world’s data.

The U.S. just made it clear:

we’re not slowing down for anyone.

Adapt… or get left behind.


Fair point. I read your post less as “this is settled physics” and more as an invitation to rethink our ordinary assumptions about separation, distance, and reality. That is a legitimate move, and entanglement does seem to pressure some very old intuitions.

My only caution is to keep the line clear between what the experiments establish and what we build on top of them as interpretation. The science widens the map of serious possibilities; it does not yet close the case on one final ontology. So yes, expand the thinking, but keep the distinction between evidence and metaphysics intact.


🚨 BREAKING: Scientists still don’t understand entanglement… and that’s the point.

Two particles. Opposite sides of the universe.

No signal. No delay. No connection.

And yet… they move as one.

That should be impossible.

Unless we’ve got one thing completely wrong:

Distance might not be real.

What we call “separation” could just be how space looks

not how reality actually works.

Because entanglement doesn’t act like communication…

It acts like one system pretending to be two.

In my view:

This isn’t faster-than-light physics.

It’s no-distance physics.

Space separates.

Time synchronizes.

So when one changes

nothing travels…

You’re just seeing the same state from two places.

That’s why it feels instant.

Because it is.

Not across space.

Outside of it.

Let that sink in:

We’re not observing connections…

We’re observing hidden unity.

So the real question is:

How much of reality is actually one thing… we’re just seeing as many?

#QuantumPhysics #Entanglement #Reality #Physics #Time


There is some truth in those bullets, but each one needs tightening. Perception matters, but perception is partly the accumulated residue of conduct. Calm matters, but predictability comes more from repeated patterns than from a single emotional display. Understanding people is indeed a source of power, but leadership uses that understanding to create trust and protection, whereas manipulation uses it to engineer dependence. Timing matters because decisions land inside systems with thresholds, politics, and feedback loops; being “right” is not enough if the coalition, sequence, or legitimacy window is wrong. And yes, reading intentions helps; but the serious version is not intuition theater. It is motive analysis: incentives, asymmetry, omissions, sudden urgency, and what changes when scrutiny rises.

So my upgrade would be: competence alone does not earn durable power; trustworthy stewardship does. The leader who grows only himself may gain status. The leader who grows the team’s competence, truthfulness, and confidence earns something stronger: authority people accept without constant force. That is the cleaner distinction between dark power and legitimate power.


people consider the 48 laws of power to be an evil book, it might be true to some extent but it teaches some valuable things as well:

- people care more about perception than truth, so how you present yourself matters a lot.

- emotions can make you predictable, staying calm gives you control in most situations.

- power often comes from understanding people, not overpowering them.

- timing matters just as much as action, doing the right thing at the wrong time can still fail.

- learning to read people’s intentions can save you from a lot of unnecessary problems.

- focusing on your own growth and competence is the most reliable way to gain respect.


I agree with the premise that people are more rewritable than modern fatalism admits. Repeated discipline can make a once-difficult way of being feel normal, which is why real change is often less about mood and more about practiced repetition.

What I’d add is this: inner transformation is a recursive decision loop. Your choices do not just produce outcomes; they produce the chooser. Output returns as feedback, feedback becomes Input-Again, and over time the system rewrites what feels natural, tolerable, or even possible. The old self experiences it as renunciation. The new self experiences it as normal. That is when change stops being effort and becomes architecture.


The science of neuroplasticity means that you can pretty much undergo a complete internal transformation within about 2 to 3 months, with focused discipline.

That is how long it takes for your brain neurons to rewire, and for any new habit or mindset practiced consistently, to become completely normal for you.

There is a famous saying that every great life has a great renunciation.

One of the reasons why modern mainstream culture is so toxic is that it deliberately tries to get people feeling helpless. Whether we are talking about a mental health issue or even a body shape issue, the narrative essentially wants to make people drug addicts for life.

But when people fall victim to pharmaceuticals, especially for lifestyle-related issues, the whole joy and spiritual journey of undergoing a profound internal shift will always be missing.

I have had the honor of working with people, to help them use the science of neuroplasticity to their advantage, to overcome negative habits and mindset issues that are leaving them stuck in a bad place. They did all the hard work, and are completely new people.

I hope if you have something you need to overcome, whether it’s a negative relationship with food, or a mindset that is leaving you stuck — that your life will also have a great renunciation. Because absolutely nothing beats transforming your inner self, and then looking back when you are in a much better place.

Believe in yourself!


I agree. Principles are powerful because they compress complexity. They help us see that many “cases at hand” are not unique events, but recurring decision families in different clothing. That reduces noise, saves decision energy, and keeps us from re-deciding the same class of problem from scratch every time. In your own framework, this is what maturity looks like: moving from isolated cases to reusable judgment structures.

What I would add is this: the real edge is not principles alone, but correct classification before principle application. The danger is false sameness: seeing a familiar surface and applying the wrong rule to a different structure. So before a principle is allowed to steer, I would ask three things. First, what kind of case is this: a fairness problem, a speed problem, a risk problem, or a legitimacy problem? Second, what map is governing it, meaning what assumptions about the world, the people, and the trade-offs are silently shaping the decision? Third, what kind of logic does this terrain actually permit: deduction if the rules are stable, induction if the pattern is reliable, or abduction if the reality is messy and we are choosing the best explanation under uncertainty? Only then should the principle govern. Otherwise, simplification becomes misclassification. Or more simply: use the principle, but first check whether the map still fits the terrain.


Using principles is a way of both simplifying and improving your decision making. While it might seem obvious to you by now, it’s worth repeating the process: realize that almost all “cases at hand” are just “another one of those,” identify which “one of those” it is, and then apply well-thought-out principles for dealing with it. This will allow you to massively reduce the number of decisions you have to make (I estimate by a factor of something like 100,000) and will lead you to make much better ones.

Most of the challenges you face today aren't unique; they are just 'another one of those'. Identifying the pattern is the key to simplifying your life and improving your results. I built Digital Ray to help you automate this process. Start a conversation with my digital twin to categorize your current 'case at hand' and apply the well-thought-out principles that will lead to a better outcome. #principleoftheday


From my lens, this is really a Blueprint problem, not a motivation problem.

The issue is not whether you can take risk. The issue is whether you can turn uncertainty into a designed bet: what is non-negotiable, what is enough to move, what would force a rethink, and what must remain reversible. Big wins belong less to the fearless than to the structurally prepared.


Agree. Most “deep” debates are level-mixing: pattern → law, story → mechanism, model → reality. A model becomes useful only when it can lose—when it rules something out. My rule has two moves:

1) Declare the mode (so you don’t borrow the wrong confidence).

Before anyone says “therefore,” ask what kind of reasoning this is: deduction (rule), induction (pattern), abduction (best explanation), or analogy (orientation). Debates fail when probability gets treated like law—or a plausible story gets treated like proof.

2) Name the disconfirmer (so the model can actually lose).

Ask: What would we have to see for this explanation to stop governing the room?

Not “disprove forever”; just what would make it yield. If nothing could change it, it isn’t a model; it’s a protected narrative.


A pattern I keep seeing in a lot of discussions.

People aren’t disagreeing on facts as much as they’re mixing levels.

• math vs physics

• representation vs reality

• experience vs mechanism

• constraints vs conclusions

When those get blended together, everything starts to feel connected.

But feeling connected isn’t the same as being constrained.

A model only becomes useful when it rules something out

when it tells you what can’t happen, not just what sounds plausible.

Otherwise you can keep rephrasing the same idea forever and it will always feel like progress.

But nothing actually moves.

There’s nothing wrong with exploring patterns across fields

that’s how a lot of ideas start.

The hard part is knowing where the boundary is.

Where a pattern stops being insight

and starts being a story.


Love the intention here; you’re doing important myth‑busting. A couple gentle tweaks make it even cleaner (and avoid swapping one myth for another):

You’re right that it’s not “consciousness” and not “eyes looking.” What matters is whether which-path information becomes physically available, i.e., whether the particle gets entangled with a detector/environment in a way that stores path info. That’s what kills the between-slits interference term.

Also right about single‑slit: you still get diffraction / structure. In a real double‑slit, you typically keep a single‑slit envelope while the two‑slit fringes wash out.

One nuance: in QM, “wave” isn’t a classical ripple; it’s a probability amplitude. The “wave‑like” part is the amplitude math; the “particle‑like” part is the localized detections.

And the “it must know the future” framing is the risky part. Delayed‑choice doesn’t require retrocausality; it just means the observed statistics are consistent with the full measurement context you choose. In quantum‑eraser setups, interference doesn’t magically return in the raw data; it shows up only when you correlate / post‑select by the marker measurement outcome.

Net: your core point stands (it’s not minds collapsing reality), but the most precise story is “coherence + which‑path records,” not “particles consulting the future.”

English

The double slit experiment is probably the most misunderstood experiment ever. I have no idea who created the myth that if you 'look' at one of the slits, then the particles (photons/electrons) stop behaving as waves. It's wrong! They of course STILL behave as waves! Because particles are also waves, always.

Photons and electrons self-interfere EVEN ON A SINGLE slit. Don't believe it? Below is an actual measurement, from Wikipedia, of a laser diffracting on a single slit and on a double slit.

What happens if you measure which slit the particle goes through is that you get no interference between BOTH slits. And no, you don't need a conscious observer for this. Believe it or not, there have actually been experiments where they had people literally look at a double slit to see if that makes any difference and the answer is no, it does not.

The entire mystery of the double slit is in the path of the particle TO the double slit. Because it seems that the particle must "know" whether it WILL be measured at one of the slits before it even gets there. It must "know" whether to go through both or just pick one. Seems like the future influences the past? Not really, it just means you have a consistency condition on the time evolution.

English

@RayDalio Holding people accountable means understanding both the person and the system well enough to judge what should reasonably be done differently, aligning clearly on that, and then changing either the person, the role, or the structure when the mismatch cannot be resolved.

English

Holding people accountable means understanding them and their circumstances well enough to assess whether they can and should do some things differently, getting in sync with them about that, and, if they can't adequately do what is required, removing them from their jobs. It is not micromanaging them, nor is it expecting them to be perfect (holding particularly overloaded people accountable for doing everything excellently is often impractical, not to mention unfair). #principleoftheday

English

Contemplating this through my Arena lens: what gets rewarded is not always the actor that optimizes most aggressively for itself, but the pattern that remains fit within the larger whole. Natural selection, in that sense, is less about isolated winners than about what the wider ecology chooses to retain. An individual, organization, or state may gain locally by extracting from the system, but if that behavior weakens the larger body that sustains future trust, resilience, and cooperation, the apparent win may already contain the seeds of its own defeat.

That is why I think the deeper principle is this: actors optimize locally, but reality selects ecologically. An organ cannot truly survive by optimizing only for itself; its life depends on the health of the body. The same is true in human systems. The arena rewards moves, but the ecology beyond the arena decides which kinds of movers, habits, and structures deserve to endure.

English

Contribute to the whole and you will likely be rewarded. Natural selection leads to better qualities being retained and passed along (e.g., in better genes, better abilities to nurture others, better products, etc.). The result is a constant cycle of improvement for the whole. #principleoftheday

English

What I find fascinating is that this idea didn’t stop with chemistry. Lavoisier helped establish conservation of mass in chemical reactions: in a closed system, matter is not lost; it only changes form. That was revolutionary because it moved chemistry from “what seems to happen” to “what can be measured precisely.”

Then physics widened the frame. With Einstein, the story became bigger than mass alone: mass and energy are interchangeable, so the deeper principle is not just conservation of mass, but conservation of mass–energy. In other words, chemistry gave us one of the first clean conservation stories, and physics later expanded it into a more universal one.
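To make the widened accounting concrete, here is a short worked estimate. The choice of methane combustion and the rounded enthalpy of about 890 kJ per mole are illustrative assumptions, not figures from the original post:

```latex
\begin{aligned}
E_0 &= m c^2
\quad\Longrightarrow\quad
\Delta m = \frac{\Delta E}{c^2},\\[4pt]
\Delta m &\approx \frac{8.9\times10^{5}\ \mathrm{J}}{(3.00\times10^{8}\ \mathrm{m/s})^{2}}
\approx 9.9\times10^{-12}\ \mathrm{kg}\ \text{per mole of methane burned.}
\end{aligned}
```

Against roughly 0.080 kg of reactants per mole, that is a fractional change of about one part in $10^{10}$, far below what a chemical balance can resolve. This is why conservation of mass holds so well in chemistry even though the deeper conserved quantity is mass–energy.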

And modern theoretical physics pushes the question even further. Around black holes, the frontier is no longer just “is mass preserved?” but “what about information?” If something falls into a black hole, is the information about its original state truly gone, or somehow preserved in a form we do not yet fully understand? That takes the conservation story from chemistry, to physics, to one of the deepest puzzles in quantum gravity.

So there is a beautiful arc here: first we learned that matter does not simply vanish, then that mass and energy belong to one deeper accounting, and now we are asking whether even information itself may obey a kind of cosmic bookkeeping. Science keeps widening the same question: when something seems lost, was it destroyed, or only transformed beyond the limits of how we first looked?

English

Antoine Lavoisier formulated the law of conservation of mass, one of the foundational principles of modern chemistry.

It states that:

Mass is neither created nor destroyed in a chemical reaction.

In other words, the total mass of the substances before a reaction is exactly equal to the total mass after the reaction.

Lavoisier demonstrated this through careful experiments, especially by conducting reactions in closed systems where no matter could escape.

For example, when a substance burns, it may seem like mass is lost, but in reality, the gases produced (like carbon dioxide) carry that mass away. When everything is accounted for, the mass remains constant.
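The bookkeeping can be sketched with a small mass-balance check. Methane combustion (CH4 + 2 O2 -> CO2 + 2 H2O) is an illustrative choice rather than an example from the original post, and the rounded standard atomic masses and helper names are assumptions made for this sketch:

```python
# Rounded standard atomic masses in g/mol (assumed values for illustration).
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}


def molar_mass(formula):
    """Molar mass of a compound given as {element: atom_count}."""
    return sum(ATOMIC_MASS[element] * count for element, count in formula.items())


def side_mass(side):
    """Total mass of one side of a reaction: list of (coefficient, formula)."""
    return sum(coefficient * molar_mass(formula) for coefficient, formula in side)


# CH4 + 2 O2 -> CO2 + 2 H2O
reactants = [(1, {"C": 1, "H": 4}), (2, {"O": 2})]
products = [(1, {"C": 1, "O": 2}), (2, {"H": 2, "O": 1})]

print(f"reactants: {side_mass(reactants):.3f} g per mole of CH4")
print(f"products:  {side_mass(products):.3f} g per mole of CH4")
```

The two totals agree once the oxygen drawn from the air is counted among the reactants and the carbon dioxide and water vapor are counted among the products. The mass only appears to vanish when the gases are left out of the ledger.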

This idea was revolutionary because it shifted chemistry from qualitative observations to precise measurement, laying the groundwork for modern scientific methods in chemistry.

English