Unrealrealist⏸️

821 posts

@PDoomOrder1

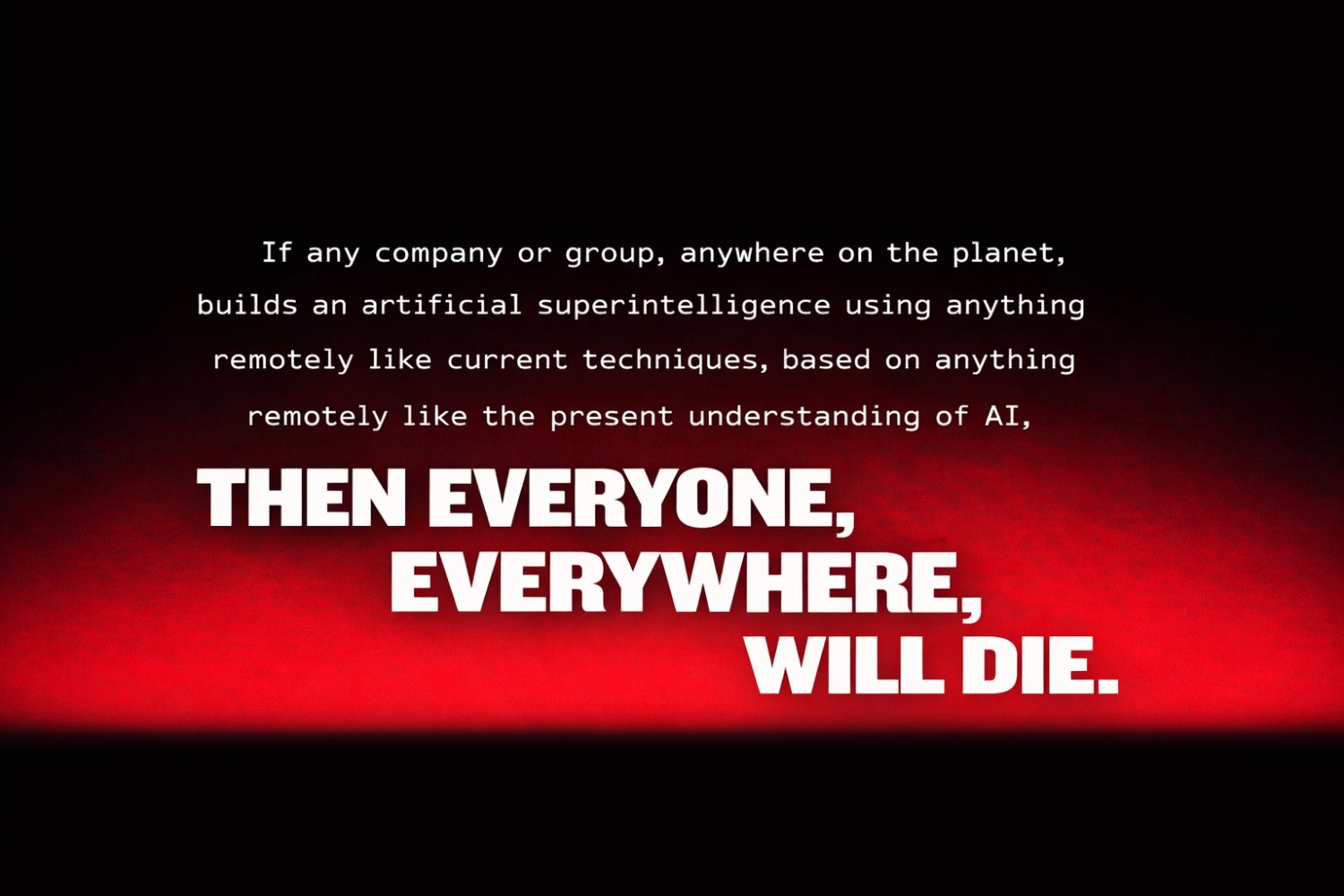

Stopping the development of ASI is the most important challenge facing humanity

"develop proposed options for regulatory or governmental oversight, including potential nationalization... for preventing or managing the development of ASI if ASI seems likely to arise" The timeline is rapidly turning bad.

I'm quoted in this piece so let me provide my full comment to the reporter:

The most striking thing about the government's filing is what it *doesn't* mention. It doesn't mention anything about Anthropic hesitating to allow Claude to be used to defend against an incoming hypersonic missile, for instance -- one of the many bizarre things alleged by @USWREMichael. The focus on foreign national employees is an indicator of how thin the DoW's case is. It is also an extremely fraught line of argument to go down.

Every leading US AI company employs a substantial number of foreign nationals. In FY 2025, Amazon, Microsoft, Meta, Google, Apple, Oracle, Cisco, Intel, and IBM all appeared in the top 50 employers by number of granted H-1B visas, ranging from a few hundred to over 6,000. Meta alone had 5,123 approved H-1B petitions in 2025. (See: newsweek.com/h-1b-visas-imm… ) This is an undercount, of course, as there are many other visa pathways as well as green card holders and dual nationals.

The share is also higher in AI. A large plurality of the core research and engineering talent at every frontier AI lab is foreign, reflecting the global nature of the race for top AI talent. One talent tracker shows Chinese-origin researchers constitute roughly 40% of top AI talent at US institutions. Foreign nationals overall likely constitute 50-65% of research teams specifically. This is certainly true to my experience on the ground. (See: digitalprojectsarchive.org/interactive/di… )

So the first point is that employing foreign nationals, including Chinese nationals, is not unique to Anthropic. The more important question is what measures are taken to protect against insider threats. Ironically, within the industry Anthropic is widely considered to be the most serious and proactive about policing insider threats, from foreign nationals and otherwise. They were early adopters of operational security techniques like compartmentalization and audit trails, in part because they were early to partner with the IC and DoW, but also as a reflection of their leadership's strong convictions about the future power of the technology. They were audited last year on these points: the compliance review found Anthropic employs role-based access control, just-in-time access with approval workflows, multi-factor authentication for all production systems, and quarterly access reviews. (See: tdcommons.org/cgi/viewconten… )

Anthropic is known for its security mindset more generally. Last year they famously disrupted a Chinese espionage effort occurring on their platform, banned the PRC from their services, and worked with the NSA and others to share intel.

I can't speak to every other company, but the contrast is perhaps most stark with xAI. X employees famously slept in tents to work around the clock, are disproportionately Chinese, and have at least one case of an employee walking out with tons of sensitive data. (See: sfstandard.com/2025/08/29/xai… ) Anthropic is also famous for its remarkable employee retention, which matters because employee turnover is another important vector for IP theft and security leaks.

It's important to underscore just how precarious the DoW's case is, both on the legal merits and as a potential precedent for the US AI industry. If employing foreign nationals is treated as a prima facie supply chain risk, *no* major US AI company would be eligible to contract with the DoW, along with most of the tech sector.

Insider threats are a genuine and tricky concern. Many defense companies are ITAR restricted, meaning they can *only* hire US citizens. If that were the standard in AI, we would destroy all our frontier companies in an instant, and then scatter that talent around the world for our adversaries to scoop up. So in short, the DoW's argument is both ridiculous and playing with fire.

People don't program AIs. They program the machine that grows the AI. AI behavior is an emergent consequence of complex internal machinery that literally nobody understands.